Virtual Computation Cube

Project description

VirtuGhan

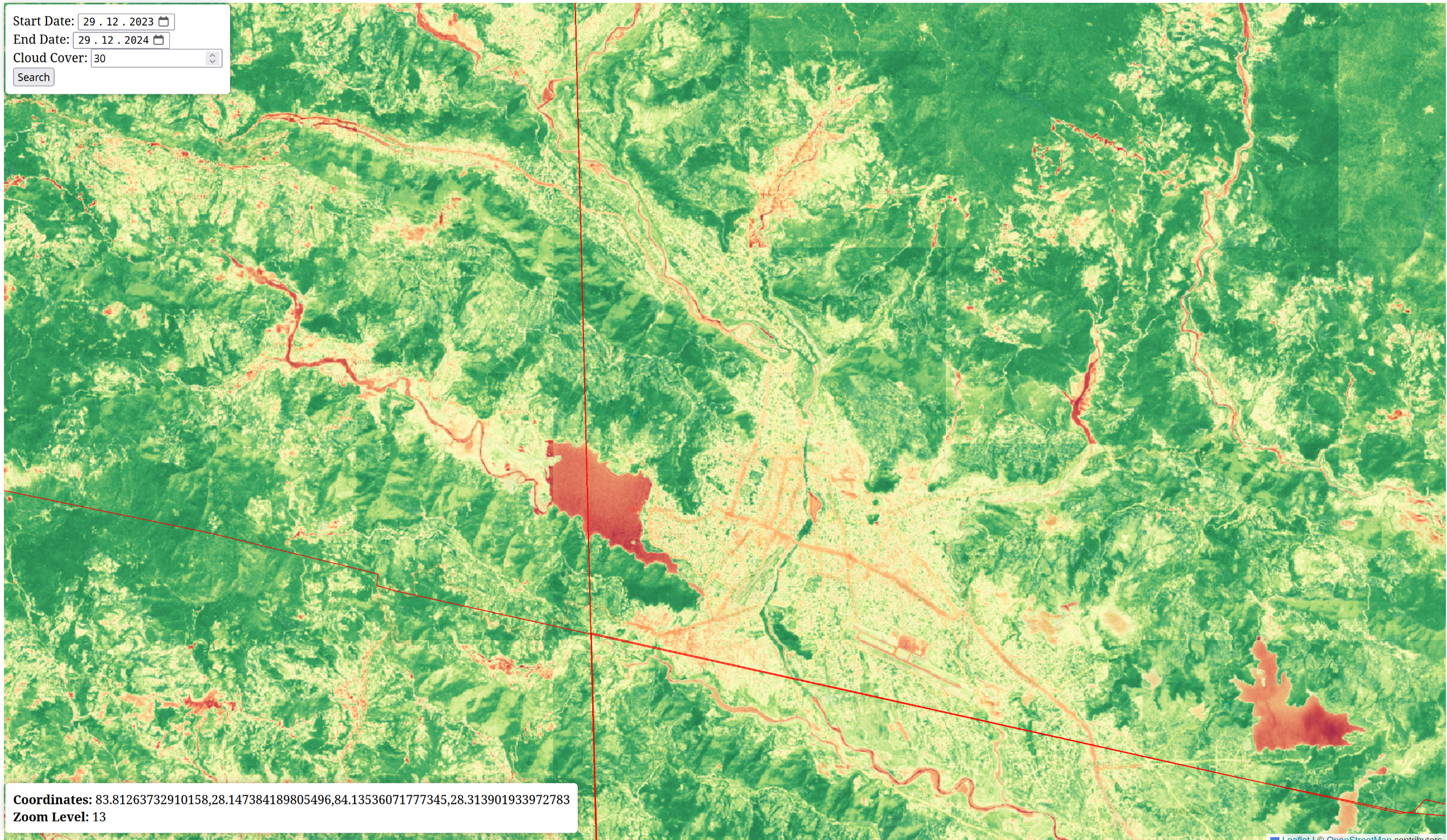

Name is combination of two words virtual & cube , where cube translated to Nepali word घन, also known as virtual computation cube. You can test demo of this project for Sentinel2 data at : https://virtughan.live/

Background

We started initially by looking at how Google Earth Engine (GEE) computes results on-the-fly at different zoom levels on large-scale Earth observation datasets. We were fascinated by the approach and felt an urge to replicate something similar on our own in an open-source manner. We knew Google uses their own kind of tiling, so we started from there.

Initially, we faced a challenge – how could we generate tiles and compute at the same time without pre-computing the whole dataset? Pre-computation would lead to larger processed data sizes, which we didn’t want. And so, the exploration began and the concept of on the fly tiling computation introduced

At university, we were introduced to the concept of data cubes and the advantages of having a time dimension and semantic layers in the data. It seemed fascinating, despite the challenge of maintaining terabytes of satellite imagery. We thought – maybe we could achieve something similar by developing an approach where one doesn’t need to replicate data but can still build a data cube with semantic layers and computation. This raised another challenge – how to make it work? And hence come the virtual data cube

We started converting Sentinel-2 images to Cloud Optimized GeoTIFFs (COGs) and experimented with the time dimension using Python’s xarray to compute the data. We found that AWS’s effort to store Sentinel images as COGs made it easier for us to build virtual data cubes across the world without storing any data. This felt like an achievement and proof that modern data cubes should focus on improving computation rather than worrying about how to manage terabytes of data.

We wanted to build something to show that this approach actually works and is scalable. We deliberately chose to use only our laptops to run the prototype and process a year’s worth of data without expensive servers.

Install

https://pypi.org/project/VirtuGhan/

pip install VirtuGhan

Purpose

1. Efficient On-the-Fly Tile Computation

This research explores how to perform real-time calculations on satellite images at different zoom levels, similar to Google Earth Engine, but using open-source tools. By using Cloud Optimized GeoTIFFs (COGs) with Sentinel-2 imagery, large images can be analyzed without needing to pre-process or store them. The study highlights how this method can scale well and work efficiently, even with limited hardware. Our main focus is on how to scale the computation on different zoom-levels without introducing server overhead

Example python usage

import mercantile

from PIL import Image

from io import BytesIO

from vcube.tile import TileProcessor

lat, lon = 28.28139, 83.91866

zoom_level = 12

x, y, z = mercantile.tile(lon, lat, zoom_level)

tile_processor = TileProcessor()

image_bytes, feature = await tile_processor.cached_generate_tile(

x=x,

y=y,

z=z,

start_date="2020-01-01",

end_date="2025-01-01",

cloud_cover=30,

band1="red",

band2="nir",

formula="(band2-band1)/(band2+band1)",

colormap_str="RdYlGn",

)

image = Image.open(BytesIO(image_bytes))

print(f"Tile: {x}_{y}_{z}")

print(f"Date: {feature['properties']['datetime']}")

print(f"Cloud Cover: {feature['properties']['eo:cloud_cover']}%")

image.save(f'tile_{x}_{y}_{z}.png')

2. Virtual Computation Cubes: Focusing on Computation Instead of Storage

We believe that instead of focusing on storing large images, data cube systems should prioritize efficient computation. COGs make it possible to analyze images directly without storing the entire dataset. This introduces the idea of virtual computation cubes, where images are stacked and processed over time, allowing for analysis across different layers ( including semantic layers ) without needing to download or save everything. So original data is never replicated. In this setup, a data provider can store and convert images to COGs, while users or service providers focus on calculations. This approach reduces the need for terra-bytes of storage and makes it easier to process large datasets quickly.

Example python usage

Example NDVI calculation

from vcube.engine import VCubeProcessor

processor = VCubeProcessor(

bbox=[83.84765625, 28.22697003891833, 83.935546875, 28.304380682962773],

start_date="2023-01-01",

end_date="2025-01-01",

cloud_cover=30,

formula="(band2-band1)/(band2+band1)",

band1="red",

band2="nir",

operation="median",

timeseries=True,

output_dir="virtughan_output",

workers=16

)

processor.compute()

3. Cloud Optimized GeoTIFF and STAC API for Large Earth Observation Data

This research introduces methods on how to use COGs, the SpatioTemporal Asset Catalog (STAC) API, and NumPy arrays to improve the way large Earth observation datasets are accessed and processed. The method allows users to focus on specific areas of interest, process data across different bands and layers over time, and maintain optimal resolution while ensuring fast performance. By using the STAC API, it becomes easier to search for and only process the necessary data without needing to download entire images ( not even the single scene , only accessing the parts ) The study shows how COGs can improve the handling of large datasets, not only making the access faster but also making computation efficient, and scalable across different zoom levels .

Learn about COG and how to generate one for this project Here

Local Setup

This project has FASTAPI and Plain JS Frontend.

Inorder to setup project , follow here

Resources and Credits

- https://registry.opendata.aws/sentinel-2-l2a-cogs/ COGS Stac API for sentinel-2

Copyright © 2024 – Concept by Kshitij and Upen , Distributed under GNU General Public License v3.0

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file virtughan-0.3.1.tar.gz.

File metadata

- Download URL: virtughan-0.3.1.tar.gz

- Upload date:

- Size: 25.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.0.0 CPython/3.11.11 Linux/6.5.0-1025-azure

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

235bf560d4d3c04aa1958efdebeef80219e9205e0474f8700bcb970877be5222

|

|

| MD5 |

c4f28b97d7333f10e5534917cd377845

|

|

| BLAKE2b-256 |

354bdd2e6115a7fe25b1e923135fbd4b8374b82334ee12aaeb9520b96f9627b5

|

File details

Details for the file virtughan-0.3.1-py3-none-any.whl.

File metadata

- Download URL: virtughan-0.3.1-py3-none-any.whl

- Upload date:

- Size: 25.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/2.0.0 CPython/3.11.11 Linux/6.5.0-1025-azure

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

48f72758a7bc44ca371092b0555dbe723546e613b5c7d6f46b063dc2357541de

|

|

| MD5 |

9eca989afd8d6715a34683e30990cfc6

|

|

| BLAKE2b-256 |

ed7068a9dbf4b1e78013c55a576beb5e8a1c6709ab62c7c31050c2a970b5b508

|