AI-Powered Vulnerability Detection using Large Language Models


A tool to identify remotely exploitable vulnerabilities using LLMs and static code analysis.

World's first autonomous AI-discovered 0-day vulnerabilities

Description

Vulnhuntr uses LLMs to automatically build and analyze entire code call chains, from remote user input to server output, to detect complex, multi-step, security-bypassing vulnerabilities that go far beyond what traditional static code analysis tools can find. See all the details, including the Vulnhuntr output for all the 0-days, here: Protect AI Vulnhuntr Blog

Vulnerabilities Found

[!TIP] Found a vulnerability using Vulnhuntr? Submit a report to huntr.com to get $$ and submit a PR to add it to the list below!

[!NOTE] This table is just a sample of the vulnerabilities found so far. We will unredact as responsible disclosure periods end.

| Repository | Stars | Vulnerabilities |
|---|---|---|
| gpt_academic | 67k | LFI, XSS |
| ComfyUI | 66k | XSS |
| Langflow | 46k | RCE, IDOR |
| FastChat | 37k | SSRF |
| Ragflow | 31k | RCE |
| LLaVA | 21k | SSRF |
| gpt-researcher | 17k | AFO |
| Letta | 14k | AFO |

Limitations

- Only Python codebases are supported.
- Can only identify the following vulnerability classes:
  - Local file inclusion (LFI)
  - Arbitrary file overwrite (AFO)
  - Remote code execution (RCE)
  - Cross-site scripting (XSS)
  - SQL injection (SQLI)
  - Server-side request forgery (SSRF)
  - Insecure direct object reference (IDOR)

Installation

[!IMPORTANT] Vulnhuntr requires Python 3.10-3.13 due to dependencies on Jedi for Python code parsing. It will not work reliably with Python 3.9 or earlier, or Python 3.14+.
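Before creating the virtual environment, you can confirm that an interpreter falls inside the supported range. The snippet below is a standalone sketch, not part of Vulnhuntr; `is_supported_python` is a hypothetical helper that simply encodes the 3.10 to 3.13 range stated above.

```python
import sys

# Supported range stated in the note above: Python 3.10 through 3.13, inclusive.
MIN_VERSION = (3, 10)
MAX_VERSION = (3, 13)


def is_supported_python(version_info=sys.version_info) -> bool:
    """Return True if the interpreter's (major, minor) is within the supported range."""
    major_minor = tuple(version_info[:2])
    return MIN_VERSION <= major_minor <= MAX_VERSION
```

Calling `is_supported_python()` with no arguments checks the running interpreter; passing a tuple such as `(3, 9, 18)` lets you check another version.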

Recommended: Virtual Environment with pip/uv

We recommend installing in a virtual environment to isolate dependencies:

Using venv and pip:

python3.10 -m venv venv

source venv/bin/activate # On Windows: venv\Scripts\activate

pip install vulnhuntr

Using uv (faster):

uv venv --python 3.10

source .venv/bin/activate # On Windows: .venv\Scripts\activate

uv pip install vulnhuntr

Alternative: pipx or Docker

Using pipx:

pipx install vulnhuntr --python python3.10

Using Docker:

docker build -t vulnhuntr https://github.com/diaz3618/vulnhuntr.git#main

Development Installation

Clone and install from source:

git clone https://github.com/diaz3618/vulnhuntr

cd vulnhuntr

python3.10 -m venv venv

source venv/bin/activate

pip install -e ".[dev]"

Usage

This tool analyzes GitHub repositories for potential remotely exploitable vulnerabilities using LLMs and static code analysis.

API Key Configuration

You can provide API keys in two ways:

Option 1: Environment variables

export ANTHROPIC_API_KEY="sk-ant-..."

export OPENAI_API_KEY="sk-..."

Option 2: .env file (recommended for development)

Create a .env file in your project directory or working directory:

# .env

ANTHROPIC_API_KEY=sk-ant-...

OPENAI_API_KEY=sk-...

OPENROUTER_API_KEY=sk-or-...

Vulnhuntr will automatically load environment variables from .env files.
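The README does not say which loader Vulnhuntr uses for `.env` files (python-dotenv is a common choice). As a rough sketch of the usual behavior, assuming simple `KEY=VALUE` lines and that variables already exported in your shell take precedence over the file, loading works along these lines; `load_env_file` is a hypothetical helper.

```python
import os
from pathlib import Path


def load_env_file(path: str = ".env", override: bool = False) -> dict[str, str]:
    """Parse simple KEY=VALUE lines and copy them into os.environ.

    Sketch only: handles comments, blank lines, an optional `export ` prefix and
    surrounding quotes; no multi-line values or variable expansion.
    Returns every parsed pair, whether or not it was applied.
    """
    parsed: dict[str, str] = {}
    env_path = Path(path)
    if not env_path.is_file():
        return parsed
    for raw in env_path.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blank lines, comments such as "# .env", malformed lines
        if line.startswith("export "):
            line = line[len("export "):]
        key, _, value = line.partition("=")
        key = key.strip()
        value = value.strip().strip('"').strip("'")
        # Keep values already set in the shell unless override is requested.
        if override or key not in os.environ:
            os.environ[key] = value
        parsed[key] = value
    return parsed
```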

[!CAUTION] Always set spending limits or closely monitor costs with the LLM provider you use. This tool can rack up hefty bills because it tries to fit as much code as possible into the LLM's context window.
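A back-of-the-envelope estimate shows why costs grow quickly: each pass sends the file plus any requested context, so input tokens scale with code size times the number of passes. The sketch below assumes roughly 4 characters per token and takes the per-million-token price as a parameter; both are placeholders, not Vulnhuntr's pricing logic (use `--dry-run` for the tool's own estimate).

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb; source code often tokenizes differently


def estimate_tokens(text: str) -> int:
    """Very rough token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_input_cost(texts: list[str], usd_per_million_tokens: float, passes: int = 1) -> float:
    """Estimated input spend in USD if every text is sent to the model `passes` times."""
    total_tokens = sum(estimate_tokens(t) for t in texts)
    return total_tokens * passes * usd_per_million_tokens / 1_000_000
```

For example, `estimate_input_cost([p.read_text() for p in Path("api").rglob("*.py")], usd_per_million_tokens=3.0, passes=3)` gives an order-of-magnitude figure for an `api/` directory at a hypothetical $3 per million input tokens. Output tokens are ignored here.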

[!TIP] We recommend using Claude as the LLM; in our testing it produced better results than GPT. For free testing, try OpenRouter with a free model such as qwen/qwen3-coder:free.

Command Line Interface

usage: vulnhuntr [-h] -r ROOT [-a ANALYZE] [-l {claude,gpt,ollama,openrouter}] [-v]
                 [--dry-run] [--budget BUDGET] [--resume [RESUME]] [--no-checkpoint]
                 [--sarif PATH] [--html PATH] [--json PATH] [--csv PATH] [--markdown PATH]
                 [--export-all DIR] [--create-issues] [--webhook URL]
                 [--webhook-format {json,slack,discord,teams}] [--webhook-secret SECRET]

Analyze a GitHub project for vulnerabilities. Set API keys via environment variables or .env file.

options:
  -h, --help            show this help message and exit
  -r ROOT, --root ROOT  Path to the root directory of the project
  -a ANALYZE, --analyze ANALYZE
                        Specific path or file within the project to analyze
  -l {claude,gpt,ollama,openrouter}, --llm {claude,gpt,ollama,openrouter}
                        LLM client to use (default: claude). OpenRouter provides access to free models.
  -v, --verbosity       Increase output verbosity (-v for INFO, -vv for DEBUG)

Cost Management:
  --dry-run             Estimate costs without running analysis
  --budget BUDGET       Maximum budget in USD (stops analysis when exceeded)
  --resume [RESUME]     Resume from checkpoint (default: .vulnhuntr_checkpoint)
  --no-checkpoint       Disable checkpointing

Report Generation:
  --sarif PATH          Output SARIF 2.1.0 report to specified file
  --html PATH           Output HTML report to specified file
  --json PATH           Output JSON report to specified file
  --csv PATH            Output CSV report to specified file
  --markdown PATH       Output Markdown report to specified file
  --export-all DIR      Export all report formats to specified directory

Integrations:
  --create-issues       Create GitHub issues for findings
  --webhook URL         Send findings to webhook URL
  --webhook-format      Webhook payload format (json, slack, discord, teams)
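For CI jobs it can be convenient to assemble the command from Python. This is a minimal sketch that only uses flags shown in the help text above; `build_vulnhuntr_cmd` and `run_vulnhuntr` are hypothetical helpers, and since the CLI's exit-code conventions are not documented here, the return code is passed through unchanged.

```python
import subprocess
from typing import Optional

LLM_CHOICES = {"claude", "gpt", "ollama", "openrouter"}  # from the -l choices above


def build_vulnhuntr_cmd(root: str, analyze: Optional[str] = None, llm: str = "claude",
                        budget: Optional[float] = None, sarif: Optional[str] = None,
                        dry_run: bool = False, verbosity: int = 0) -> list[str]:
    """Assemble a vulnhuntr argument list from a few documented options."""
    if llm not in LLM_CHOICES:
        raise ValueError(f"unsupported LLM client: {llm}")
    cmd = ["vulnhuntr", "-r", root, "-l", llm]
    if analyze:
        cmd += ["-a", analyze]
    if budget is not None:
        cmd += ["--budget", str(budget)]
    if sarif:
        cmd += ["--sarif", sarif]
    if dry_run:
        cmd.append("--dry-run")
    if verbosity:
        cmd.append("-" + "v" * min(verbosity, 2))  # -v = INFO, -vv = DEBUG
    return cmd


def run_vulnhuntr(**kwargs) -> int:
    """Run the installed CLI (must be on PATH) and return its exit code."""
    return subprocess.run(build_vulnhuntr_cmd(**kwargs), check=False).returncode
```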

Cost Management Features

Vulnhuntr includes built-in cost tracking and budget controls:

Estimate costs before analysis:

vulnhuntr -r /path/to/repo --dry-run

Set a maximum budget:

vulnhuntr -r /path/to/repo --budget 5.0 # Stop at $5.00

Resume interrupted analysis:

# Analysis automatically checkpoints progress

vulnhuntr -r /path/to/repo --resume

# Continue with higher budget if needed

vulnhuntr -r /path/to/repo --resume --budget 10.0
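The budget and checkpoint behavior described above can be pictured as the loop below. This is an illustrative sketch, not Vulnhuntr's implementation: the JSON checkpoint layout, the per-file granularity, and the assumption that spend carries over on `--resume` (which is what would make raising `--budget` meaningful) are guesses; `BudgetTracker` and `analyze_with_budget` are hypothetical names.

```python
import json
from pathlib import Path
from typing import Callable, Iterable, Optional


class BudgetTracker:
    """Accumulates spend in USD and reports when an optional limit is exceeded."""

    def __init__(self, limit_usd: Optional[float] = None):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def add(self, cost_usd: float) -> None:
        self.spent_usd += cost_usd

    def exceeded(self) -> bool:
        return self.limit_usd is not None and self.spent_usd > self.limit_usd


def load_checkpoint(path: Path) -> tuple[set[str], float]:
    """Return (files already analyzed, USD already spent); empty if no checkpoint."""
    if not path.is_file():
        return set(), 0.0
    data = json.loads(path.read_text(encoding="utf-8"))
    return set(data["done_files"]), float(data["spent_usd"])


def save_checkpoint(path: Path, done: set[str], spent_usd: float) -> None:
    payload = {"done_files": sorted(done), "spent_usd": spent_usd}
    path.write_text(json.dumps(payload), encoding="utf-8")


def analyze_with_budget(files: Iterable[str], analyze_one: Callable[[str], float],
                        budget: BudgetTracker, checkpoint: Path) -> set[str]:
    """Analyze files in order; analyze_one returns the USD cost of one file."""
    done, prior_spend = load_checkpoint(checkpoint)
    budget.spent_usd += prior_spend  # assumption: spend carries over when resuming
    for name in files:
        if name in done:
            continue  # finished in an earlier run
        if budget.exceeded():
            break  # stop before starting another file
        budget.add(analyze_one(name))
        done.add(name)
        save_checkpoint(checkpoint, done, budget.spent_usd)  # persist after every file
    return done
```

Because the limit is checked before each file, the file in flight can push spend somewhat past the budget; a tighter design would also estimate a file's cost before starting it.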

Examples

Basic analysis with .env file:

Create .env file:

# .env

ANTHROPIC_API_KEY=sk-ant-...

Run analysis:

vulnhuntr -r /path/to/target/repo/

[!TIP] We recommend analyzing specific files that handle remote user input rather than entire repositories for better results and lower costs.
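One way to shortlist files worth passing to `-a` is to look for route definitions, since that is where remote input usually enters. The sketch below is a crude regex heuristic for Flask/FastAPI-style decorators and Django URLconfs; the patterns are assumptions, will miss some handlers, and will flag some false positives.

```python
import re
from pathlib import Path

# Heuristic patterns (assumptions for illustration, not Vulnhuntr's own file selection).
ROUTE_PATTERNS = [
    re.compile(r"@\w+\.(route|get|post|put|patch|delete)\("),  # @app.route(, @router.post(
    re.compile(r"\b(path|re_path)\("),                          # Django urls.py entries
]


def find_input_handling_files(root: str) -> list[Path]:
    """Return .py files under root whose source looks like it defines HTTP routes."""
    hits = []
    for path in sorted(Path(root).rglob("*.py")):
        text = path.read_text(encoding="utf-8", errors="ignore")
        if any(pattern.search(text) for pattern in ROUTE_PATTERNS):
            hits.append(path)
    return hits
```

Each returned path can then be analyzed individually with `vulnhuntr -r /path/to/repo -a <file>`.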

Analyze a specific file with budget control:

vulnhuntr -r /path/to/repo -a server.py --budget 3.0 -v

Generate HTML report with cost estimation:

# First estimate costs

vulnhuntr -r /path/to/repo -a api/ --dry-run

# Then run with budget and generate report

vulnhuntr -r /path/to/repo -a api/ --budget 5.0 --html report.html -v

Using environment variables (alternative to .env):

export OPENAI_API_KEY="sk-..."

vulnhuntr -r /path/to/target/repo/ -a server.py -l gpt

Docker installation with volume mount:

docker run --rm \
  -e ANTHROPIC_API_KEY=sk-ant-... \
  -v /path/to/target/repo:/repo \
  vulnhuntr:latest -r /repo -a server.py

Free testing with OpenRouter:

# .env

OPENROUTER_API_KEY=sk-or-...

# Run with free model

vulnhuntr -r /path/to/repo -a api.py -l openrouter --budget 0.50

Experimental

Ollama is included as an option; however, we haven't had success getting open-source models to structure their output correctly.

export OLLAMA_BASE_URL=http://localhost:11434/api/generate

export OLLAMA_MODEL=llama3.2

vulnhuntr -r /path/to/target/repo/ -a server.py -l ollama
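Part of "structuring their output correctly" is returning parseable JSON with the fields shown in the console output below (`scratchpad`, `analysis`, `poc`, `confidence_score`, `vulnerability_types`). The sketch below shows the kind of validation a reply has to pass; the schema Vulnhuntr actually enforces is not documented here, so the field set, the 0 to 10 score range, and `parse_finding` itself are assumptions.

```python
import json
from dataclasses import dataclass, fields

# The seven classes listed under Limitations, by their abbreviations.
ALLOWED_TYPES = {"LFI", "AFO", "RCE", "XSS", "SQLI", "SSRF", "IDOR"}


@dataclass
class Finding:
    scratchpad: str
    analysis: str
    poc: str
    confidence_score: int
    vulnerability_types: list[str]


def parse_finding(raw: str) -> Finding:
    """Parse one model reply, raising ValueError if it is not the expected shape."""
    data = json.loads(raw)  # json.JSONDecodeError (a ValueError) on free-form prose
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    required = {f.name for f in fields(Finding)}
    missing = required - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    score = data["confidence_score"]
    if not isinstance(score, int) or not 0 <= score <= 10:  # assumed 0-10 scale
        raise ValueError(f"confidence_score out of range: {score!r}")
    unknown = set(data["vulnerability_types"]) - ALLOWED_TYPES
    if unknown:
        raise ValueError(f"unknown vulnerability types: {sorted(unknown)}")
    return Finding(**{name: data[name] for name in required})
```

A model that answers with chatty prose, drops a field, or invents a vulnerability class fails here, which is the failure mode described above for local models.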

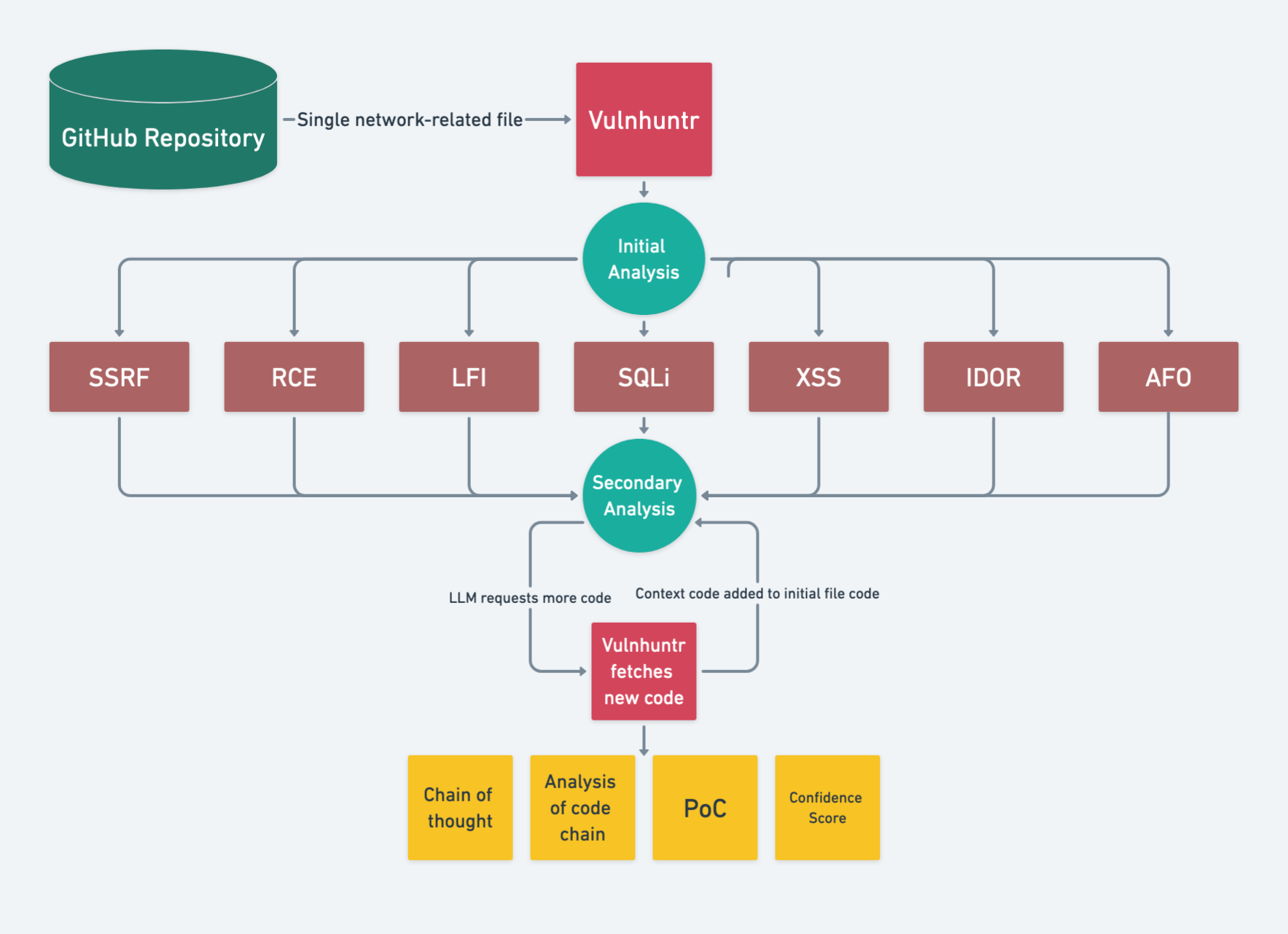

Logic Flow

- The LLM summarizes the README and includes this summary in the system prompt.
- The LLM does an initial analysis of an entire file and reports any potential vulnerabilities.
- Vulnhuntr then gives the LLM a vulnerability-specific prompt for secondary analysis.
- Each time the LLM analyzes the code, it requests additional context (functions, classes, and variables) from other files in the project.
- It continues doing this until the entire call chain from user input to server processing is complete, then gives a final analysis.
- The final analysis consists of its reasoning, a proof-of-concept exploit, and a confidence score.
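The context-gathering steps above amount to a loop that feeds requested definitions back to the model until it stops asking. A minimal sketch follows, with the model call and the symbol lookup injected as functions; `Reply`, `ask_llm`, `resolve_symbol`, and the `max_rounds` cap are hypothetical, and in Vulnhuntr the lookup is presumably backed by Jedi, given the dependency noted under Installation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Reply:
    """Hypothetical shape of one model turn."""
    analysis: str
    context_requests: list[str] = field(default_factory=list)  # symbols the model asks for


def analyze_call_chain(source: str,
                       ask_llm: Callable[[str], Reply],
                       resolve_symbol: Callable[[str], Optional[str]],
                       max_rounds: int = 7) -> Reply:
    """Keep feeding requested definitions back to the model until it stops asking."""
    context: dict[str, str] = {}
    reply = ask_llm(source)
    for _ in range(max_rounds):  # cap the loop in case the model never converges
        new = [name for name in reply.context_requests if name not in context]
        if not new:
            break  # nothing new requested: treat the call chain as complete
        for name in new:
            code = resolve_symbol(name)
            context[name] = code if code is not None else f"# definition of {name} not found"
        reply = ask_llm(source + "\n\n" + "\n\n".join(context.values()))
    return reply
```

Recording unresolvable symbols once stops a model from re-requesting the same missing name forever, and the round cap bounds cost when it keeps asking for new ones.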

[!TIP] Generally, a confidence score below 7 means a vulnerability is unlikely, a score of 7 means it should be investigated, and a score of 8 or higher means it is very likely to be a valid vulnerability.
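These thresholds translate directly into a triage step when reviewing many findings. A small sketch, assuming each finding is a dict with a `confidence_score` key (a hypothetical shape, not the documented report format):

```python
def triage(confidence_score: int) -> str:
    """Bucket a score using the thresholds in the tip above."""
    if confidence_score >= 8:
        return "likely valid"
    if confidence_score == 7:
        return "investigate"
    return "unlikely"


def prioritize(findings: list[dict]) -> list[dict]:
    """Keep findings scored 7 or higher, highest confidence first."""
    kept = [f for f in findings if f["confidence_score"] >= 7]
    return sorted(kept, key=lambda f: f["confidence_score"], reverse=True)
```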

Output

The tool generates detailed reports in multiple formats:

- SARIF 2.1.0: For integration with GitHub Security, IDE security extensions, and CI/CD pipelines

- HTML: Interactive web-based reports with vulnerability details

- JSON: Machine-readable format for custom integrations

- CSV: Spreadsheet-compatible for tracking and analysis

- Markdown: Documentation-friendly format
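For reference, a minimal SARIF 2.1.0 log has a `runs` array whose entries name the tool under `tool.driver` and list `results`, each with a `ruleId`, a `message`, and `locations`. The sketch below builds that shape from a hypothetical finding dict; it is not Vulnhuntr's `--sarif` writer, and mapping scores of 8 or higher to `error` is an assumption.

```python
import json

SARIF_SCHEMA = "https://json.schemastore.org/sarif-2.1.0.json"


def to_sarif(findings: list[dict]) -> dict:
    """Build a minimal SARIF 2.1.0 log with one result per (finding, vulnerability type)."""
    rule_ids = sorted({vt for f in findings for vt in f["vulnerability_types"]})
    results = []
    for f in findings:
        for vt in f["vulnerability_types"]:
            results.append({
                "ruleId": vt,
                "level": "error" if f["confidence_score"] >= 8 else "warning",
                "message": {"text": f["analysis"]},
                "locations": [{"physicalLocation": {"artifactLocation": {"uri": f["file"]}}}],
                "properties": {"confidence_score": f["confidence_score"]},  # SARIF property bag
            })
    return {
        "$schema": SARIF_SCHEMA,
        "version": "2.1.0",
        "runs": [{
            "tool": {"driver": {"name": "vulnhuntr", "rules": [{"id": r} for r in rule_ids]}},
            "results": results,
        }],
    }


def write_sarif(findings: list[dict], path: str) -> None:
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(to_sarif(findings), fh, indent=2)
```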

Example: Generate Multiple Report Formats

vulnhuntr -r /path/to/repo --sarif report.sarif --html report.html --json report.json

Example: Export All Formats at Once

vulnhuntr -r /path/to/repo --export-all ./reports/

Console Output

The tool also outputs detailed findings to the console. Below is an example of a Vulnhuntr report describing a 0-day remote code execution vulnerability in Ragflow (now fixed):

scratchpad:

1. Analyzing the add_llm function in llm_app.py.
2. Identified user input used as keys to access dictionaries: EmbeddingModel, ChatModel, RerankModel, CvModel, and TTSModel.
3. These dictionaries contain class references, which are instantiated with user-supplied parameters.
4. The factory variable is directly used from user input (req['llm_factory']) without proper validation.
5. The llm_name is also derived from user input in most cases.
6. The instantiation of these classes occurs in try-except blocks, but exceptions are only used for error messages, not preventing execution.
7. The api_key and other sensitive information are passed directly to these instantiated classes.
8. There's no comprehensive input validation or sanitization for the factory or llm_name variables.
9. The vulnerability is in a route decorated with @login_required, indicating it's accessible to authenticated users.
10. Reviewing the EmbeddingModel, ChatModel, RerankModel, CvModel, and TTSModel dictionaries to understand their contents and potential for exploitation.

----------------------------------------

analysis:

The add_llm function in llm_app.py contains a critical Remote Code Execution (RCE) vulnerability. The function uses user-supplied input (req['llm_factory'] and req['llm_name']) to dynamically instantiate classes from the EmbeddingModel, ChatModel, RerankModel, CvModel, and TTSModel dictionaries. This pattern of using user input as a key to access and instantiate classes is inherently dangerous, as it allows an attacker to potentially execute arbitrary code. The vulnerability is exacerbated by the lack of comprehensive input validation or sanitization on these user-supplied values. While there are some checks for specific factory types, they are not exhaustive and can be bypassed. An attacker could potentially provide a malicious value for 'llm_factory' that, when used as an index to these model dictionaries, results in the execution of arbitrary code. The vulnerability is particularly severe because it occurs in a route decorated with @login_required, suggesting it's accessible to authenticated users, which might give a false sense of security.

----------------------------------------

poc:

POST /add_llm HTTP/1.1
Host: target.com
Content-Type: application/json
Authorization: Bearer <valid_token>

{
  "llm_factory": "__import__('os').system",
  "llm_name": "id",
  "model_type": "EMBEDDING",
  "api_key": "dummy_key"
}

This payload attempts to exploit the vulnerability by setting 'llm_factory' to a string that, when evaluated, imports the os module and calls system.

The 'llm_name' is set to 'id', which would be executed as a system command if the exploit is successful.

----------------------------------------

confidence_score:

8

----------------------------------------

vulnerability_types:

- RCE

----------------------------------------

Logging

The tool logs the analysis process and results in a file named vulhuntr.log. This file contains detailed information about each step of the analysis, including the initial and secondary assessments.

Authors

Original Authors (Protect AI):

- Dan McInerney: dan@protectai.com, @DanHMcinerney

- Marcello Salvati: marcello@protectai.com, @byt3bl33d3r

Current Developer for this Fork:

- Daniel Diaz Santiago: daniel.diaz.stg@gmail.com

License

This project is licensed under the GNU Affero General Public License v3.0 (AGPL-3.0).

See the LICENSE file for full details. Original project by Protect AI.

Download files

File details

Details for the file vulnhuntr-1.1.3.tar.gz.

File metadata

- Download URL: vulnhuntr-1.1.3.tar.gz

- Upload date:

- Size: 146.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cb7705ac4c2f0f0905154c777611466acdf389720afac313984188af42f87878 |
| MD5 | e35f6ddba4878e44716b0f806563fee8 |
| BLAKE2b-256 | 07e63540e56814cb53636c97ff6ed065fe6fcfeae398b4a8cd4da629c315743c |

File details

Details for the file vulnhuntr-1.1.3-py3-none-any.whl.

File metadata

- Download URL: vulnhuntr-1.1.3-py3-none-any.whl

- Upload date:

- Size: 117.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 48c0790561d4fe8e38dafb5066d188b69af6efa6ebae0b9e29b23c276f2b9460 |
| MD5 | 605b4ff2785b9ead0e8b2a29da068f58 |
| BLAKE2b-256 | 1f891ff0a7426ccbb715f25c9400c2dd77c21c1eadb815409381f28476350c7a |