Wappalyzer-based tech stack detection library

Project description

Wappalyzer Next

This project is a command-line tool and Python library that uses the Wappalyzer browser extension and its fingerprints to detect technologies. Other projects that emerged after the official open-source project was discontinued rely on outdated fingerprints and are less accurate on dynamic web apps. This project avoids those limitations by running the extension in Chromium through Playwright.

Installation

After installing the Python package, install Playwright's Chromium browser:

python -m playwright install chromium

In minimal Linux containers, install Chromium's system dependencies as well:

python -m playwright install-deps chromium

Install as a command-line tool

pipx install wappalyzer

pipx run --spec playwright playwright install chromium

Install as a library

To use it as a library, install it with pip inside an isolated environment such as a venv or a Docker container. You can also pass --break-system-packages to pip for a 'regular' system-wide install, but this is not recommended.

pip install wappalyzer

python -m playwright install chromium

Install with docker

Steps

- Clone the repository:

git clone https://github.com/s0md3v/wappalyzer-next.git

cd wappalyzer-next

- Build the image with Docker Compose:

docker compose build

- To scan URLs using the Docker container:

- Scan a single URL:

docker compose run --rm wappalyzer -i https://example.com

- Scan multiple URLs from a file:

docker compose run --rm wappalyzer -i urls.txt -w 3 -oJ output.json

For Users

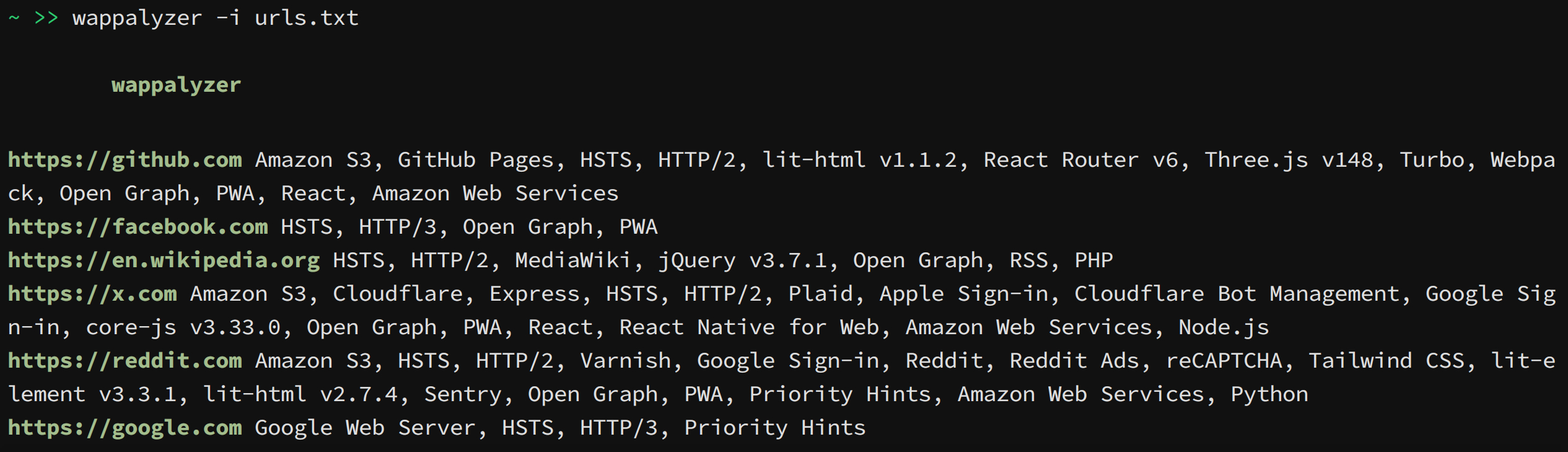

Some common usage examples are given below; refer to the list of all options for more information.

- Scan a single URL:

wappalyzer -i https://example.com

- Scan multiple URLs from a file:

wappalyzer -i urls.txt -w 3

- Set page-load timeout for full scans:

wappalyzer -i urls.txt -t 15

- Scan with authentication:

wappalyzer -i https://example.com -c "sessionid=abc123; token=xyz789"

- Export results to JSON:

wappalyzer -i https://example.com -oJ results.json

- Export JSON to stdout:

wappalyzer -i https://example.com -oJ

When an output flag is used without a file, the report is written to stdout. Status lines, banner text, and errors are written to stderr.
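Because the report goes to stdout and everything else goes to stderr, the CLI can be driven from a script. The following is a minimal sketch, assuming the `wappalyzer` command is on PATH and that the JSON report has the same URL-keyed shape as the library's return value. The helper names `build_command` and `scan_to_dict` are illustrative and not part of the package.

```python
import json
import subprocess


def build_command(target, workers=None, timeout=None, cookie=None):
    """Build an argv list using only the flags documented in this README."""
    cmd = ["wappalyzer", "-i", target]
    if workers is not None:
        cmd += ["-w", str(workers)]
    if timeout is not None:
        cmd += ["-t", str(timeout)]
    if cookie is not None:
        cmd += ["-c", cookie]
    # -oJ without a file name sends the JSON report to stdout.
    cmd.append("-oJ")
    return cmd


def scan_to_dict(target, **options):
    """Run the CLI and parse the JSON report from stdout (hypothetical helper)."""
    proc = subprocess.run(
        build_command(target, **options),
        capture_output=True,  # stdout holds the report, stderr the status lines
        text=True,
        check=True,           # raise CalledProcessError on a non-zero exit code
    )
    return json.loads(proc.stdout)
```

Keeping command construction separate from execution makes the flag handling easy to check without launching a browser.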

Options

Note: For the most accurate results, use the 'full' scan type (the default). The 'fast' and 'balanced' scan types do not use browser emulation.

- -i: Input URL or file containing URLs (one per line)
- --scan-type: Scan type (default: 'full')
  - fast: Quick HTTP-based scan (sends 1 request)
  - balanced: HTTP-based scan with more requests
  - full: Complete scan using wappalyzer extension
- -w, --workers: Number of concurrent workers (default: 5; full scans are capped at 3)
- -t, --timeout: Maximum seconds to wait for a page load in full scans (default: 30)
- -oJ [file]: JSON output file path, or stdout when the file is omitted or set to -
- -oC [file]: CSV output file path, or stdout when the file is omitted or set to -
- -oH [file]: HTML output file path, or stdout when the file is omitted or set to -
- -c, --cookie: Cookie header string for authenticated scans
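The worker cap for full scans can be pictured as a simple clamp. This is only a sketch of the documented behaviour, assuming the cap is applied as a plain minimum. `effective_workers` and `FULL_SCAN_WORKER_CAP` are illustrative names, not part of the tool.

```python
FULL_SCAN_WORKER_CAP = 3  # from the option description: full scans are capped at 3


def effective_workers(requested=5, scan_type="full"):
    """Return the worker count a scan would plausibly use (illustrative only)."""
    if requested < 1:
        raise ValueError("workers must be at least 1")
    if scan_type == "full":
        # Each full-scan worker drives a browser, so the count is clamped.
        return min(requested, FULL_SCAN_WORKER_CAP)
    return requested
```

Under this assumption, passing `-w 8` to a full scan would behave like `-w 3`, while HTTP-based scan types would keep the requested value.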

For Developers

The Python library is available on PyPI as wappalyzer and can be imported under the same name.

Using the Library

Use Wappalyzer when scanning more than one URL. The browser is started once, reused, and closed when the with block exits.

from wappalyzer import Wappalyzer

with Wappalyzer(workers=3, timeout=30) as scanner:
    results = scanner.analyze_many([
        'https://example.com',
        'https://github.com',
        'https://python.org',
    ])

for url, technologies in results.items():
    print(url)
    for name, data in technologies.items():
        version = f" {data['version']}" if data['version'] else ""
        print(f" {name}{version}")

The same scanner can also scan one URL at a time without reopening Chromium:

from wappalyzer import Wappalyzer

with Wappalyzer(workers=3, timeout=30) as scanner:
    github = scanner.analyze('https://github.com')
    python = scanner.analyze('https://python.org')

For a single URL, analyze() is shorter. It creates its own scanner, runs one scan, and closes it.

from wappalyzer import analyze

results = analyze(
    url='https://example.com',
    scan_type='full',  # 'fast', 'balanced', or 'full'
    cookie='sessionid=abc123',
    timeout=30
)

Do not call the top-level analyze() function in a loop for large jobs. Use Wappalyzer.analyze_many() or Wappalyzer.analyze() on a reused scanner so Chromium and the Wappalyzer extension are not reloaded for every URL.
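For a large job, one way to follow this advice is to open a single scanner and feed it the URL list in batches. The sketch below assumes only what the examples above show: the scanner is a context manager and `analyze_many()` takes a list of URLs and returns a dictionary keyed by URL. The function `scan_in_batches`, its `scanner_factory` parameter, and the batch size are hypothetical choices made for illustration.

```python
def scan_in_batches(urls, scanner_factory, batch_size=50):
    """Scan many URLs with one scanner, feeding it fixed-size batches."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    results = {}
    # A single context manager covers the whole job, so the browser
    # is started once here and closed once when the block exits.
    with scanner_factory() as scanner:
        for start in range(0, len(urls), batch_size):
            batch = urls[start:start + batch_size]
            results.update(scanner.analyze_many(batch))
    return results


# Example with the real scanner (requires the package and Chromium):
# from wappalyzer import Wappalyzer
# results = scan_in_batches(urls, lambda: Wappalyzer(workers=3, timeout=30))
```

Passing a factory rather than a scanner instance keeps the helper easy to exercise with a stand-in object in tests.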

analyze() Function Parameters

- url (str): The URL to analyze
- scan_type (str, optional): Type of scan to perform
  - 'fast': Quick HTTP-based scan
  - 'balanced': HTTP-based scan with more requests
  - 'full': Complete scan including JavaScript execution (default)
- workers (int, optional): Number of browser workers to create for full scans (default: 1)
- cookie (str, optional): Cookie header string for authenticated scans
- timeout (int, optional): Maximum seconds to wait for a page load in full scans (default: 30)
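The cookie parameter expects a single header-style string such as 'sessionid=abc123; token=xyz789'. When cookies are held in a dictionary, a small helper can build that string. This is a sketch only: `cookie_header` is a hypothetical name, and it assumes values need no extra encoding.

```python
def cookie_header(cookies):
    """Join name/value pairs into a Cookie header string: 'a=1; b=2'."""
    for name, value in cookies.items():
        # Reject characters that would change how the header is split.
        if ";" in name or "=" in name or ";" in value:
            raise ValueError(f"unsupported character in cookie {name!r}")
    return "; ".join(f"{name}={value}" for name, value in cookies.items())


# The resulting string can then be passed as the cookie argument, e.g.
# analyze(url='https://example.com', cookie=cookie_header({...}))
```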

Return Value

Returns a dictionary with the URL as key and detected technologies as value:

{
  "https://github.com": {
    "Amazon S3": {
      "version": "",
      "confidence": 100,
      "categories": ["CDN"],
      "groups": ["Servers"]
    },
    "React Router": {
      "version": "6",
      "confidence": 100,
      "categories": ["JavaScript frameworks"],
      "groups": ["Web development"]
    }
  },
  "https://example.com": {}
}
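The nested dictionary is convenient for lookups, but reports and spreadsheets usually want one row per detection. A minimal sketch of that flattening is shown here, assuming the keys shown in the sample above (version, confidence, categories). `to_rows` is an illustrative helper, not part of the library.

```python
def to_rows(results):
    """Flatten {url: {technology: details}} into a list of flat row dicts."""
    rows = []
    for url, technologies in results.items():
        # A URL with no detections maps to an empty dict and yields no rows.
        for name, data in technologies.items():
            rows.append({
                "url": url,
                "technology": name,
                "version": data.get("version", ""),
                "confidence": data.get("confidence"),
                "categories": ", ".join(data.get("categories", [])),
            })
    return rows
```

The rows can be handed directly to `csv.DictWriter` or a similar tabular writer.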

FAQ

Why Chromium and Playwright?

The full scanner runs the Wappalyzer extension in Chromium through Playwright. Chromium extension support in Playwright is direct and does not require geckodriver or Selenium.

What is the difference between 'fast', 'balanced', and 'full' scan types?

- fast: Sends a single HTTP request to the URL. Doesn't use the extension.

- balanced: Sends additional HTTP requests for .js files and /robots.txt, and performs DNS queries. Doesn't use the extension.

- full: Uses the official Wappalyzer extension to scan the URL in a headless browser.
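To make the contrast concrete, the general idea behind an HTTP-only scan can be illustrated with a toy matcher that inspects the headers of a single response. This is a rough sketch and not the project's implementation: the patterns in `TOY_FINGERPRINTS` are invented for the example and are not Wappalyzer's fingerprints, and `match_headers` is a hypothetical name. Anything that only appears after JavaScript runs is invisible to this kind of matching, which is why the full scan uses a browser.

```python
import re

# Toy patterns invented for this example; NOT Wappalyzer's real fingerprints.
TOY_FINGERPRINTS = {
    "nginx": ("server", re.compile(r"nginx(?:/(?P<version>[\d.]+))?", re.I)),
    "PHP": ("x-powered-by", re.compile(r"php(?:/(?P<version>[\d.]+))?", re.I)),
}


def match_headers(headers):
    """Match response headers against the toy patterns above."""
    # Header names are case-insensitive, so normalise them first.
    lowered = {key.lower(): value for key, value in headers.items()}
    found = {}
    for tech, (header, pattern) in TOY_FINGERPRINTS.items():
        match = pattern.search(lowered.get(header, ""))
        if match:
            found[tech] = {"version": match.group("version") or ""}
    return found
```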


File details

Details for the file wappalyzer-2.0.0.tar.gz.

File metadata

- Download URL: wappalyzer-2.0.0.tar.gz

- Upload date:

- Size: 34.6 MB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f2d79e31cf390c08d3fd27a4d0257ac5198fec0f80e10e379f7b56eb6af3926d |
| MD5 | 4bb6054fe7f6c7ae3a629a39379fb7ea |
| BLAKE2b-256 | d0e34afa2f1b3d14a7f5e040b68959b7d0819f9735f92cf4493a94811287cbdf |
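A downloaded file can be checked against the published SHA256 digest before installation. The sketch below uses only the Python standard library and assumes the archive has already been saved locally. The function names and the example path are illustrative.

```python
import hashlib
import hmac


def sha256_of(path, chunk_size=1 << 20):
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # iter() with a sentinel stops when read() returns an empty bytes object.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path, expected_hex):
    """Return True when the file's SHA256 equals the published digest."""
    return hmac.compare_digest(sha256_of(path), expected_hex.strip().lower())


# Example (path is hypothetical; the digest is the SHA256 listed for the sdist):
# matches_published(
#     "wappalyzer-2.0.0.tar.gz",
#     "f2d79e31cf390c08d3fd27a4d0257ac5198fec0f80e10e379f7b56eb6af3926d",
# )
```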

Provenance

The following attestation bundles were made for wappalyzer-2.0.0.tar.gz:

Publisher: pypi.yml on s0md3v/wappalyzer-next

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: wappalyzer-2.0.0.tar.gz
  - Subject digest: f2d79e31cf390c08d3fd27a4d0257ac5198fec0f80e10e379f7b56eb6af3926d
- Sigstore transparency entry: 1422255584
- Sigstore integration time:
- Permalink: s0md3v/wappalyzer-next@6c5fa8a0032f188497e2a345288465c82282c716
- Branch / Tag: refs/tags/2.0.0
- Owner: https://github.com/s0md3v
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: pypi.yml@6c5fa8a0032f188497e2a345288465c82282c716
- Trigger Event: push

File details

Details for the file wappalyzer-2.0.0-py3-none-any.whl.

File metadata

- Download URL: wappalyzer-2.0.0-py3-none-any.whl

- Upload date:

- Size: 34.6 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 86d8085b446a401fe09d1c3ea3d542355df4f07fe98c15488107469a3fda16c8 |
| MD5 | dfd0af7b6ef337831a922415fa32139b |
| BLAKE2b-256 | f382449350e8c9a6fe0c865ad282101fa4fcd27ed9f66ad33c27809e66ea8299 |

Provenance

The following attestation bundles were made for wappalyzer-2.0.0-py3-none-any.whl:

Publisher: pypi.yml on s0md3v/wappalyzer-next

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: wappalyzer-2.0.0-py3-none-any.whl
  - Subject digest: 86d8085b446a401fe09d1c3ea3d542355df4f07fe98c15488107469a3fda16c8
- Sigstore transparency entry: 1422255674
- Sigstore integration time:
- Permalink: s0md3v/wappalyzer-next@6c5fa8a0032f188497e2a345288465c82282c716
- Branch / Tag: refs/tags/2.0.0
- Owner: https://github.com/s0md3v
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: pypi.yml@6c5fa8a0032f188497e2a345288465c82282c716
- Trigger Event: push