Latent Semantic Analysis package based on "the standard" Latent Semantic Indexing theory.

Project description

Latent Semantic Analysis (LSA) Python package

In brief

This Python package, LatentSemanticAnalyzer, has different functions for computations of

Latent Semantic Analysis (LSA) workflows

(using Sparse matrix Linear Algebra.) The package mirrors

the Mathematica implementation [AAp1].

(There is also a corresponding implementation in R; see [AAp2].)

The package provides:

- Class

LatentSemanticAnalyzer - Functions for applying Latent Semantic Indexing (LSI) functions on matrix entries

- "Data loader" function for obtaining a

pandasdata frame ~580 abstracts of conference presentations

Installation

To install from GitHub use the shell command:

python -m pip install git+https://github.com/antononcube/Python-packages.git#egg=LatentSemanticAnalyzer\&subdirectory=LatentSemanticAnalyzer

To install from PyPI:

python -m pip install LatentSemanticAnalyzer

LSA workflows

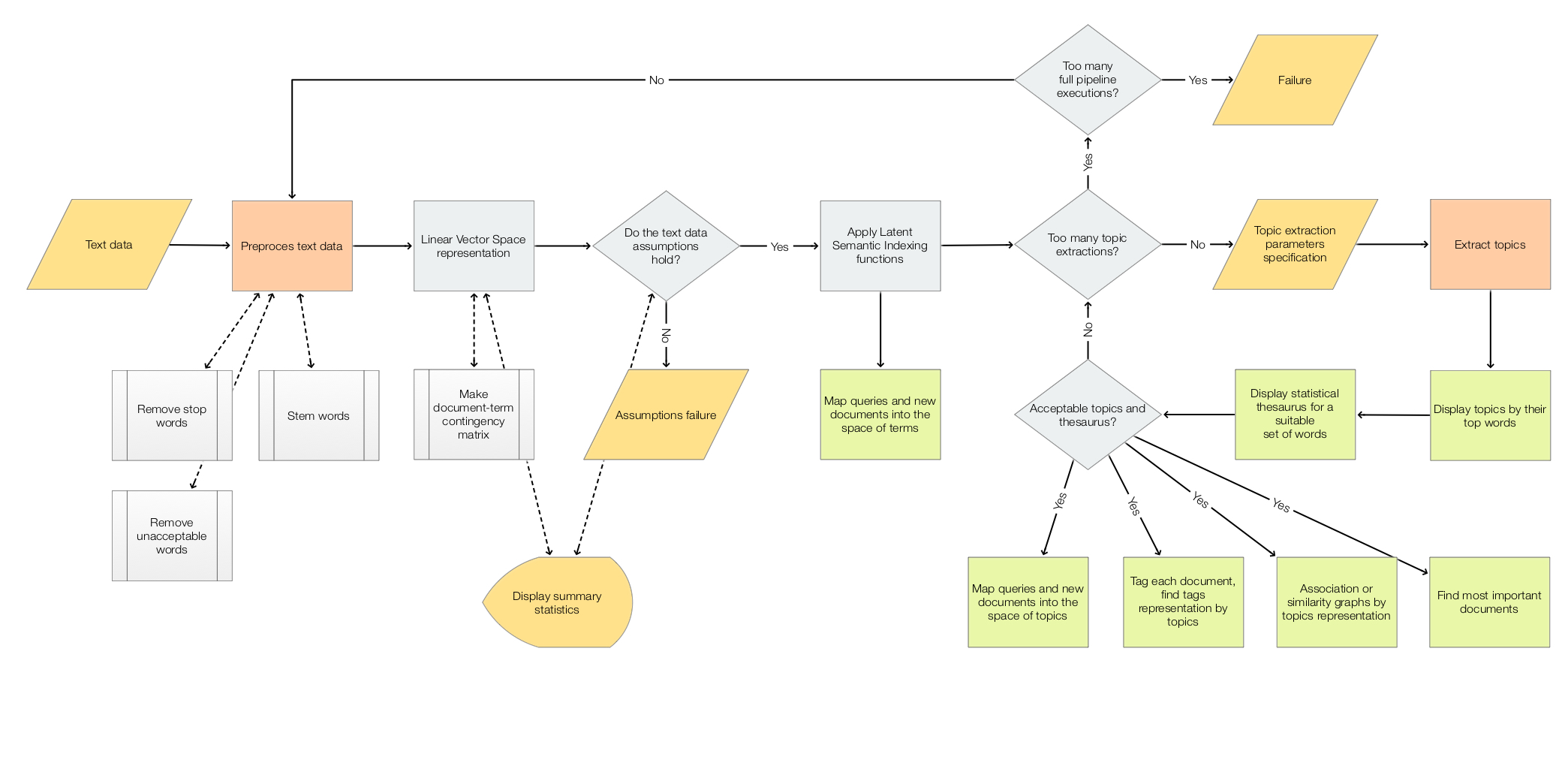

The scope of the package is to facilitate the creation and execution of the workflows encompassed in this flow chart:

For more details see the article "A monad for Latent Semantic Analysis workflows", [AA1].

Usage example

Here is an example of a LSA pipeline that:

- Ingests a collection of texts

- Makes the corresponding document-term matrix using stemming and removing stop words

- Extracts 40 topics

- Shows a table with the extracted topics

- Shows a table with statistical thesaurus entries for selected words

import random

from LatentSemanticAnalyzer.LatentSemanticAnalyzer import *

from LatentSemanticAnalyzer.DataLoaders import *

import snowballstemmer

# Collection of texts

dfAbstracts = load_abstracts_data_frame()

docs = dict(zip(dfAbstracts.ID, dfAbstracts.Abstract))

# Stemmer object (to preprocess words in the pipeline below)

stemmerObj = snowballstemmer.stemmer("english")

# Words to show statistical thesaurus entries for

words = ["notebook", "computational", "function", "neural", "talk", "programming"]

# Reproducible results

random.seed(12)

# LSA pipeline

lsaObj = (LatentSemanticAnalyzer()

.make_document_term_matrix(docs=docs,

stop_words=True,

stemming_rules=True,

min_length=3)

.apply_term_weight_functions(global_weight_func="IDF",

local_weight_func="None",

normalizer_func="Cosine")

.extract_topics(number_of_topics=40, min_number_of_documents_per_term=10, method="NNMF")

.echo_topics_interpretation(number_of_terms=12, wide_form=True)

.echo_statistical_thesaurus(terms=stemmerObj.stemWords(words),

wide_form=True,

number_of_nearest_neighbors=12,

method="cosine",

echo_function=lambda x: print(x.to_string())))

Related Python packages

This package is based on the Python package

SSparseMatrix, [AAp3]

TBF...

Related Mathematica and R packages

Mathematica

The Python pipeline above corresponds to the following pipeline for the Mathematica package [AAp1]:

lsaObj =

LSAMonUnit[aAbstracts]⟹

LSAMonMakeDocumentTermMatrix["StemmingRules" -> Automatic, "StopWords" -> Automatic]⟹

LSAMonEchoDocumentTermMatrixStatistics["LogBase" -> 10]⟹

LSAMonApplyTermWeightFunctions["IDF", "None", "Cosine"]⟹

LSAMonExtractTopics["NumberOfTopics" -> 20, Method -> "NNMF", "MaxSteps" -> 16, "MinNumberOfDocumentsPerTerm" -> 20]⟹

LSAMonEchoTopicsTable["NumberOfTerms" -> 10]⟹

LSAMonEchoStatisticalThesaurus["Words" -> Map[WordData[#, "PorterStem"]&, {"notebook", "computational", "function", "neural", "talk", "programming"}]];

R

The package

LSAMon-R,

[AAp2], implements a software monad for LSA workflows.

References

Articles

[AA1] Anton Antonov, "A monad for Latent Semantic Analysis workflows", (2019), MathematicaForPrediction at WordPress.

Mathematica and R Packages

[AAp1] Anton Antonov, Monadic Latent Semantic Analysis Mathematica package, (2017), MathematicaForPrediction at GitHub.

[AAp2] Anton Antonov, Latent Semantic Analysis Monad in R (2019), R-packages at GitHub/antononcube.

Python packages

[AAp3] Anton Antonov, SSparseMatrix Python package, (2021), PyPI.

[AAp4] Anton Antonov, SparseMatrixRecommender Python package, (2021), PyPI.

[AAp5] Anton Antonov, RandomDataGenerators Python package, (2021), PyPI.

[AAp6] Anton Antonov, RandomMandala Python package, (2021), PyPI.

[MZp1] Marinka Zitnik and Blaz Zupan, Nimfa: A Python Library for Nonnegative Matrix Factorization, (2013-2019), PyPI.

[SDp1] Snowball Developers, SnowballStemmer Python package, (2013-2021), PyPI.

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file LatentSemanticAnalyzer-0.1.1.tar.gz.

File metadata

- Download URL: LatentSemanticAnalyzer-0.1.1.tar.gz

- Upload date:

- Size: 188.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.10.2

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

aadafb1405ca35849924232b54298756ef65129e3e306506c2ab621252e38684

|

|

| MD5 |

67b093bcb61eae1d7e084c75ca0f7c81

|

|

| BLAKE2b-256 |

4521ba601c2373b83256ea28f5ceca10b3f6fd00df9a42527b6075f13bed1633

|

File details

Details for the file LatentSemanticAnalyzer-0.1.1-py3-none-any.whl.

File metadata

- Download URL: LatentSemanticAnalyzer-0.1.1-py3-none-any.whl

- Upload date:

- Size: 187.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.10.2

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

a35254c40c3b9e9c72c9872a646af7f7982a4943c5f1446db6334fdf31bb4910

|

|

| MD5 |

65e39e213da8a58aae53a5b806aa3b9f

|

|

| BLAKE2b-256 |

5e9194042d60f1fce6b4677eb5b780ff7535f559c313772f809a534d5bd4438e

|