Binary distribution of the CBC MILP solver (COIN-OR Branch and Cut)


cbcbox

cbcbox is a high-performance, self-contained Python distribution of the CBC MILP solver (COIN-OR Branch and Cut), built from the latest COIN-OR master branch.

On x86_64 (Linux, macOS, Windows) the wheel ships both a Haswell-optimised binary (AVX2/FMA) for maximum speed and a generic build with runtime CPU dispatch for compatibility with any x86_64 machine — selected automatically. All dynamic dependencies (OpenBLAS, libgfortran, etc.) are bundled; no system libraries or separate installation steps are needed.

Highlights

- Haswell-optimised and generic builds: on x86_64 Linux, macOS, and Windows the wheel ships two complete solver stacks, a Haswell build (OpenBLAS AVX2/FMA kernel) for maximum throughput and a generic build (DYNAMIC_ARCH runtime dispatch) for compatibility with any x86_64 CPU. The best available variant is selected automatically at import time (see Build variants).

- Parallel branch-and-cut: built with --enable-cbc-parallel. Use -threads=N to distribute the search tree across N threads. On multi-core machines this can give significant speedups for hard MIP instances, although the gain varies by instance (see Per-instance results).

- AMD fill-reducing ordering: SuiteSparse AMD is compiled in, enabling the high-quality UniversityOfFlorida Cholesky factorization for Clp's barrier (interior point) solver. AMD reordering produces much less fill-in on large sparse problems than the built-in native Cholesky, making barrier substantially faster. Activate with -cholesky UniversityOfFlorida -barrier (see barrier usage).

Performance (x86_64)

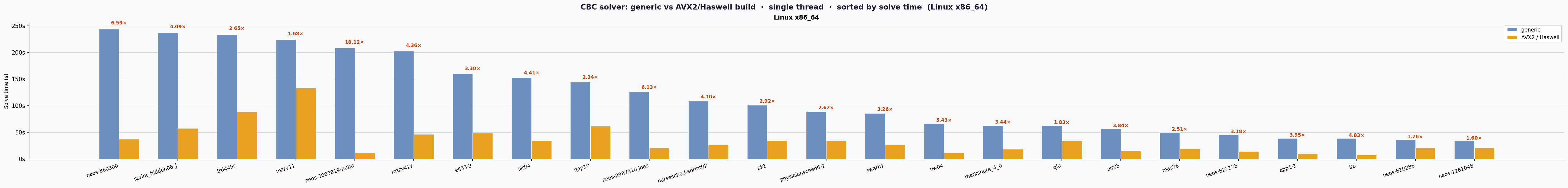

Auto-updated by CI after each successful workflow run. Single-threaded solve time — lower is better.

The AVX2/Haswell build is ~3.2× faster than the generic build on average (geometric mean across 20 instances, 2 x86_64 platforms: Darwin x86_64, Windows AMD64).

Single-threaded solve time across benchmark instances on Linux x86_64. Speedup factor shown above each pair. Lower is better.

See also: Windows AMD64 + macOS x86_64 summary

Build variants

On x86_64 Linux, macOS, and Windows, the wheel ships two complete sets of binaries:

| Variant | OpenBLAS kernel | Clp SIMD | Minimum CPU |
|---|---|---|---|
| generic | DYNAMIC_ARCH=1 (runtime dispatch, Nehalem–Zen targets) | standard | any x86_64 |
| avx2 | DYNAMIC_ARCH=1 + DYNAMIC_LIST=HASWELL SKYLAKEX | -march=haswell -DCOIN_AVX2=4 | Haswell (2013+) |

At import time cbcbox automatically selects avx2 when it is available and

the running CPU supports AVX2; otherwise it falls back to generic.

You can override this selection with the CBCBOX_BUILD environment variable:

# Force generic (portable) build

CBCBOX_BUILD=generic cbc mymodel.mps -solve -quit

# Force AVX2-optimised build (raises an error if not available)

CBCBOX_BUILD=avx2 cbc mymodel.mps -solve -quit

When CBCBOX_BUILD is set, a short summary of the selected build is printed to

stdout on every call — useful for tagging experiment results:

[cbcbox] CBCBOX_BUILD=avx2

[cbcbox] binary : .../cbcbox/cbc_dist_avx2/bin/cbc

[cbcbox] lib dir : .../cbcbox/cbc_dist_avx2/lib

[cbcbox] libs : libCbc.so.3, libClp.so.3, libopenblas.so.0
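For tagging experiment results, the summary lines can be collected into a dictionary. The following is a minimal sketch that assumes the output format matches the sample above exactly; `parse_cbcbox_summary` is a hypothetical helper, not part of cbcbox.

```python
def parse_cbcbox_summary(stdout: str) -> dict[str, str]:
    """Collect the `[cbcbox] ...` summary lines into a dict."""
    prefix = "[cbcbox]"
    info: dict[str, str] = {}
    for line in stdout.splitlines():
        line = line.strip()
        if not line.startswith(prefix):
            continue  # ordinary CBC output, not part of the summary
        rest = line[len(prefix):].strip()
        # Assumption: the first line uses KEY=value, the others "key : value".
        sep = "=" if rest.startswith("CBCBOX_BUILD=") else ":"
        key, _, value = rest.partition(sep)
        info[key.strip()] = value.strip()
    return info


sample = """\
[cbcbox] CBCBOX_BUILD=avx2
[cbcbox] binary : .../cbcbox/cbc_dist_avx2/bin/cbc
[cbcbox] lib dir : .../cbcbox/cbc_dist_avx2/lib
[cbcbox] libs : libCbc.so.3, libClp.so.3, libopenblas.so.0
"""
summary = parse_cbcbox_summary(sample)
print(summary["CBCBOX_BUILD"], summary["libs"].split(", "))
```

In practice the `stdout` argument would be the captured output of a `cbc` run made with CBCBOX_BUILD set.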

Non-x86_64 platforms (Linux aarch64, macOS arm64) ship the generic build only. CBCBOX_BUILD=avx2 will raise a RuntimeError on those platforms.
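The selection rules in this section can be summarised as a small decision function. This is an illustrative sketch only, not cbcbox's actual implementation: the function name, its parameters, and the handling of unrecognised values are assumptions.

```python
def select_build(requested, avx2_shipped, cpu_has_avx2):
    """Return 'avx2' or 'generic' following the rules described above.

    requested    -- value of CBCBOX_BUILD, or None when it is unset
    avx2_shipped -- True if the installed wheel contains the avx2 variant
    cpu_has_avx2 -- True if the running CPU supports AVX2
    """
    if requested is None:
        # Automatic choice: use avx2 only when it is present and usable.
        return "avx2" if avx2_shipped and cpu_has_avx2 else "generic"
    if requested == "generic":
        return "generic"
    if requested == "avx2":
        if not avx2_shipped:
            # Documented behaviour on platforms without the avx2 variant.
            raise RuntimeError("avx2 build is not available on this platform")
        return "avx2"
    # Assumption: how cbcbox treats other values is not documented here.
    raise ValueError(f"unknown CBCBOX_BUILD value: {requested!r}")


print(select_build(None, avx2_shipped=True, cpu_has_avx2=False))  # prints "generic"
```

A caller would typically pass `os.environ.get("CBCBOX_BUILD")` as the first argument.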

Local debug builds

The released wheels are fully optimised and stripped. To debug CBC itself

(e.g. with GDB or LLDB), use the scripts in scripts/ to build a local

debug-enabled binary. These produce the same full feature set as the release

wheels (OpenBLAS, AMD, Nauty, pthreads) but compiled with -O1 -g and, on

x86_64, with -march=haswell -DCOIN_AVX2=4 so you can debug AVX2-specific

code paths.

| Script | Platform | Environment | Output directory |
|---|---|---|---|
| scripts/build_debug.sh | Linux, macOS | native (host compiler) | cbc_dist_debug_avx2/ (x86_64) or cbc_dist_debug/ (ARM64) |
| scripts/build_debug_manylinux.sh | Linux | Docker: manylinux2014 container (exact CI parity) | same as above |
| scripts/build_debug_windows.ps1 | Windows | MSYS2 / MinGW64 | cbc_dist_debug_avx2\ |

Quick start

Linux / macOS (native build):

# x86_64 → debug + AVX2 → cbc_dist_debug_avx2/bin/cbc

# ARM64 → debug only → cbc_dist_debug/bin/cbc

./scripts/build_debug.sh

# With AddressSanitizer:

./scripts/build_debug.sh --asan

# With ThreadSanitizer:

./scripts/build_debug.sh --tsan

# Force a clean rebuild from scratch (required when switching sanitizers):

./scripts/build_debug.sh --asan --clean

Linux (manylinux2014 container — matches CI exactly):

# Requires Docker; the script prints install instructions if it is missing.

./scripts/build_debug_manylinux.sh

./scripts/build_debug_manylinux.sh --asan

./scripts/build_debug_manylinux.sh --tsan

Windows (PowerShell):

# Requires MSYS2 at C:\msys64. Note: sanitizers are not supported on Windows/MinGW.

.\scripts\build_debug_windows.ps1

.\scripts\build_debug_windows.ps1 -Clean # force full rebuild

Debugging

# GDB (Linux):

gdb cbc_dist_debug_avx2/bin/cbc

(gdb) run mymodel.mps -solve -quit

# LLDB (macOS):

lldb cbc_dist_debug/bin/cbc

(lldb) run mymodel.mps -solve -quit

Sanitizer tips

| Sanitizer | Flag | What it catches | Runtime env var |
|---|---|---|---|
| AddressSanitizer | --asan | heap/stack buffer overflows, use-after-free, memory leaks | ASAN_OPTIONS=detect_leaks=0 to suppress system-lib false positives |
| ThreadSanitizer | --tsan | data races between threads | TSAN_OPTIONS=halt_on_error=0 to log races without aborting |

ASan and TSan are mutually exclusive. Neither is available on Windows/MinGW.

Always pass --clean when switching from one sanitizer to another to avoid

linking mismatched object files.

OpenBLAS is always built without sanitizer flags to avoid false positives from hand-optimised BLAS assembly; only the COIN-OR stack is instrumented.

Note: Debug binaries are not included in the published wheels because of their size. They are intended for local development only.

Supported platforms

| Platform | Wheel tag |

|---|---|

| Linux x86_64 | manylinux2014_x86_64 |

| Linux aarch64 | manylinux2014_aarch64 |

| macOS arm64 (Apple Silicon) | macosx_11_0_arm64 |

| macOS x86_64 | macosx_10_9_x86_64 |

| Windows AMD64 | win_amd64 |

Installation

pip install cbcbox

Usage

Command line

After installation, CBC is available directly as the cbc command (pip installs

the entry point into the environment's bin/ on Linux/macOS or Scripts/ on Windows,

which is on PATH whenever that environment is active):

cbc mymodel.lp -solve -quit

cbc mymodel.mps.gz -solve -quit

cbc mymodel.mps -seconds 60 -timem elapsed -solve -quit

cbc mymodel.mps -dualp pesteep -solve -quit

Alternatively, invoke via the Python module entry point:

python -m cbcbox mymodel.lp -solve -quit

CBC accepts LP, MPS and compressed MPS (.mps.gz) files. Pass -help for the

full list of options, or -quit to exit after solving.

Parallel branch-and-cut

This build includes parallel branch-and-cut (--enable-cbc-parallel).

Use -threads=N to distribute the search tree across N threads:

cbc mymodel.mps -threads=4 -solve -quit
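When driving CBC from Python, the same command line can be assembled programmatically. The sketch below uses only the flags shown in this README; `cbc_command` is a hypothetical helper name, not part of cbcbox.

```python
def cbc_command(cbc_path, model, threads=None, seconds=None):
    """Build an argument list for a CBC run, ready for subprocess.run()."""
    cmd = [str(cbc_path), str(model)]
    if threads is not None:
        if threads < 1:
            raise ValueError("threads must be a positive integer")
        cmd.append(f"-threads={threads}")
    if seconds is not None:
        # Wall-clock time limit, as in the command-line examples above.
        cmd += ["-seconds", str(seconds), "-timem", "elapsed"]
    cmd += ["-solve", "-quit"]
    return cmd


print(cbc_command("cbc", "mymodel.mps", threads=4))
# prints ['cbc', 'mymodel.mps', '-threads=4', '-solve', '-quit']
```

With the wheel installed, the first argument would normally be `cbcbox.cbc_bin_path()` and the resulting list would be passed to `subprocess.run`.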

Barrier (interior-point) solver

Clp's barrier solver can be faster than simplex for large LP relaxations.

This build includes SuiteSparse AMD, which enables the high-quality

UniversityOfFlorida Cholesky factorization — significantly reducing fill-in

compared to the built-in native Cholesky:

# Solve LP relaxation with barrier + AMD Cholesky, then crossover to simplex basis

cbc mymodel.mps -cholesky UniversityOfFlorida -barrier -solve -quit

# Useful as a root-node strategy inside MIP (let CBC use simplex for B&B):

cbc mymodel.mps -cholesky UniversityOfFlorida -barrier -solve -quit

Without AMD, only -cholesky native (less efficient) is available.

Python API

The package exposes helpers to locate the installed files:

import cbcbox

import subprocess

# Path to the cbc binary (cbc.exe on Windows).

cbcbox.cbc_bin_path()

# e.g. '/home/user/.venv/lib/python3.13/site-packages/cbcbox/cbc_dist/bin/cbc'

# Directory containing the shared libraries.

cbcbox.cbc_lib_dir()

# e.g. '.../cbcbox/cbc_dist/lib'

# Directory containing the COIN-OR C/C++ headers.

cbcbox.cbc_include_dir()

# e.g. '.../cbcbox/cbc_dist/include/coin'

# Run CBC programmatically.

result = subprocess.run(

[cbcbox.cbc_bin_path(), "mymodel.mps", "-solve", "-quit"],

capture_output=True, text=True,

)

print(result.stdout)
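To use the result in a script, the objective value can be extracted from the captured output. This sketch assumes CBC reports the result on a line beginning with "Objective value:", which may differ between CBC versions; `parse_objective` is a hypothetical helper, not part of cbcbox.

```python
import re

# Assumption: CBC prints a line such as "Objective value:   7350.00000000".
_OBJ_RE = re.compile(r"Objective value:\s*([-+]?\d+(?:\.\d+)?(?:[eE][-+]?\d+)?)")


def parse_objective(stdout):
    """Return the reported objective value as a float, or None if absent."""
    match = _OBJ_RE.search(stdout)
    return float(match.group(1)) if match else None


sample_output = (
    "Result - Optimal solution found\n\n"
    "Objective value:                7350.00000000\n"
)
print(parse_objective(sample_output))  # prints 7350.0
```

In a real run, pass `result.stdout` from the `subprocess.run` call instead of the sample string, and treat a `None` result as "no solution reported".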

What is built

The build pipeline compiles all components from source inside the CI runner, in the following order:

| Component | Version / branch | Purpose |

|---|---|---|

| Cbc | master | Branch-and-cut MIP solver |

| Cgl | master | Cut generation library |

| Clp | master | Simplex LP solver (used as the MIP node relaxation) |

| Osi | master | Open Solver Interface |

| CoinUtils | master | Utility library (shared by all COIN-OR packages) |

| Nauty | 2.8.9 | Symmetry detection for MIP presolve |

| AMD (SuiteSparse v7.12.2) | v7.12.2 | Sparse matrix fill-reducing ordering |

| OpenBLAS | v0.3.31 | Optimised BLAS/LAPACK for LP basis factorisation |

On x86_64 Linux, macOS, and Windows the entire stack is compiled twice: once for the

generic variant (OpenBLAS DYNAMIC_ARCH=1 with a broad set of x86_64 targets for

runtime dispatch) and once for the avx2 variant (OpenBLAS DYNAMIC_ARCH=1 restricted

to Haswell/Skylake targets via DYNAMIC_LIST, COIN-OR compiled with

-march=haswell -DCOIN_AVX2=4). Both variants use NO_CBLAS=1 (COIN-OR only calls

the Fortran BLAS interface). AMD and Nauty are built only once (they are pure

combinatorial code with no BLAS dependency) and reused by both COIN-OR variants.

All COIN-OR components are built as shared (.so / .dylib / .dll)

libraries. The shared libraries are patched with

self-relative RPATHs and bundled inside the wheel, making them directly usable

via cffi or ctypes without any system installation.
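As a rough illustration of the ctypes route, the helper below builds the expected path of a bundled library from the directory returned by `cbcbox.cbc_lib_dir()`. The helper name is hypothetical, and the unversioned file names are an assumption based on the Wheel contents listing.

```python
import platform
from pathlib import Path

# File suffix per platform, following the names listed under "Wheel contents".
_SUFFIX = {"Linux": ".so", "Darwin": ".dylib", "Windows": ".dll"}


def shared_lib_path(lib_dir, stem, system=None):
    """Return the expected path of lib<stem> inside lib_dir for a platform."""
    system = system or platform.system()
    if system not in _SUFFIX:
        raise OSError(f"unsupported platform: {system}")
    return Path(lib_dir) / f"lib{stem}{_SUFFIX[system]}"


print(shared_lib_path("cbc_dist/lib", "CbcSolver", system="Linux").name)
# prints "libCbcSolver.so"
```

With the wheel installed, `ctypes.CDLL(str(shared_lib_path(cbcbox.cbc_lib_dir(), "CbcSolver")))` would be the natural next step. The actual file names may carry a version suffix (for example libCbc.so.3), so it is worth listing the directory first.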

Wheel contents

The wheel installs under cbcbox/ inside the site-packages directory.

On x86_64 Linux, macOS, and Windows it contains two dist trees; other platforms

contain only cbc_dist/:

cbc_dist/ ← generic build (all platforms)

cbc_dist_avx2/ ← AVX2-optimised build (x86_64 Linux/macOS/Windows)

├── bin/

│ ├── cbc # CBC MIP solver binary (cbc.exe on Windows)

│ └── clp # Clp LP solver binary (clp.exe on Windows)

├── lib/

│ ├── libCbc.so / libCbc.dylib / libCbc.dll # CBC solver

│ ├── libCbcSolver.so ...

│ ├── libClp.so ... # Clp LP solver

│ ├── libCgl.so ... # Cut generation

│ ├── libOsi.so ... # Solver interface

│ ├── libOsiClp.so ... # Clp OSI binding

│ ├── libOsiCbc.so ... # CBC OSI binding (where available)

│ ├── libCoinUtils.so ...

│ ├── libopenblas.so / .dylib / .dll # OpenBLAS BLAS/LAPACK

│ ├── pkgconfig/ # .pc files for all libraries

│ └── <bundled runtime shared libs> # Platform-specific — see below

└── include/

├── coin/ # COIN-OR headers (CoinUtils, Osi, Clp, Cgl, Cbc)

├── nauty/ # Nauty headers

└── *.h # SuiteSparse / AMD headers

Bundled dynamic libraries

Because OpenBLAS links to the Fortran runtime, the following shared libraries are bundled inside the wheel and their paths are rewritten so no system installation is required.

Linux (lib/ directory, RPATH set to $ORIGIN)

| Library | Description |
|---|---|
| libopenblas.so.0 | OpenBLAS BLAS/LAPACK |
| libgfortran.so.5 | GNU Fortran runtime |
| libquadmath.so.0 | Quad-precision math (dependency of libgfortran) |

macOS (lib/ directory, install names rewritten to @rpath/)

| Library | Description |
|---|---|
| libopenblas.dylib | OpenBLAS BLAS/LAPACK |
| libgfortran.5.dylib | GNU Fortran runtime |
| libgcc_s.1.1.dylib | GCC runtime |
| libquadmath.0.dylib | Quad-precision math |

Windows (bin/ directory, DLLs placed next to the executable)

| Library | Description |
|---|---|
| libopenblas.dll | OpenBLAS BLAS/LAPACK |
| libgfortran-5.dll | GNU Fortran runtime |
| libgcc_s_seh-1.dll | GCC SEH runtime |
| libquadmath-0.dll | Quad-precision math |
| libstdc++-6.dll | C++ standard library (MinGW64) |
| libwinpthread-1.dll | POSIX thread emulation |

CI / build pipeline

Wheels are built and tested automatically via GitHub Actions using

cibuildwheel. The workflow

(.github/workflows/wheel.yml) runs independent compile jobs in parallel,

then packages each platform:

| Compile jobs | Runner | Produces |
|---|---|---|
| compile-linux-x64-generic + compile-linux-x64-avx2 | ubuntu-latest | manylinux2014_x86_64 wheel |
| compile-linux-arm64-generic | ubuntu-24.04-arm | manylinux2014_aarch64 wheel |
| compile-macos-arm64-generic | macos-15 | macosx_11_0_arm64 wheel |
| compile-macos-intel-generic + compile-macos-intel-avx2 | macos-15-intel | macosx_10_9_x86_64 wheel |
| compile-windows-generic + compile-windows-avx2 | windows-latest | win_amd64 wheel |

Each platform's compile jobs run in parallel. Once all compile jobs for a

platform finish, the corresponding package-* job assembles the wheel via

cibuildwheel and runs the test suite against the installed wheel.

A final combine_reports job collects per-platform performance results and

commits the updated README.md to the repository.

Integration tests

The test suite (pytest) solves 21 MIP instances and checks the optimal

objective values, in both single-threaded and parallel (3-thread) modes.

On x86_64 Linux, macOS, and Windows each test is run twice — once against

the generic binary and once against the avx2 binary — and a side-by-side

performance comparison is recorded:

| Instance | Expected optimal | Time limit |
|---|---|---|
| pp08a | 7 350 | 2000 s |
| sprint_hidden06_j | 130 | 2000 s |
| air03 | 340 160 | 2000 s |
| air04 | 56 137 | 2000 s |
| air05 | 26 374 | 2000 s |
| nw04 | 16 862 | 2000 s |
| mzzv11 | −21 718 | 2000 s |
| trd445c | −153 419.078836 | 2000 s |
| nursesched-sprint02 | 58 | 2000 s |
| stein45 | 30 | 2000 s |
| neos-810286 | 2 877 | 2000 s |
| neos-1281048 | 601 | 2000 s |
| j3050_8 | 1 | 2000 s |
| qiu | −132.873136947 | 2000 s |
| gesa2-o | 25 779 856.3717 | 2000 s |
| pk1 | 11 | 2000 s |
| mas76 | 40 005.054142 | 2000 s |
| app1-1 | −3 | 2000 s |
| eil33-2 | 934.007916 | 2000 s |
| fiber | 405 935.18 | 2000 s |
| neos-2987310-joes | −607 702 988.291 | 2000 s |

Time limits are generous to avoid false failures on slow CI runners.
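A check of this kind can be sketched with `math.isclose`. The tolerances and the helper name below are assumptions for illustration; the tolerances used by the actual test suite are not stated here.

```python
import math

# A few expected optima copied from the table above.
EXPECTED = {
    "pp08a": 7350.0,
    "stein45": 30.0,
    "qiu": -132.873136947,
}


def objective_matches(found, expected, rel_tol=1e-6, abs_tol=1e-6):
    """True if the solver's objective agrees with the reference value."""
    return math.isclose(found, expected, rel_tol=rel_tol, abs_tol=abs_tol)


print(objective_matches(7350.0000001, EXPECTED["pp08a"]))  # prints True
print(objective_matches(7351.0, EXPECTED["pp08a"]))        # prints False
```

Combining a relative and an absolute tolerance keeps the check meaningful both for large objectives such as gesa2-o and for small ones such as j3050_8.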

Performance results

Auto-updated by CI after each successful workflow run.

Summary

Geometric mean solve time (seconds) across all test instances.

1 thread

| Platform | generic (s) | avx2 (s) | avx2 speedup |

|---|---|---|---|

| Darwin x86_64 | 55.74 | 17.44 | 3.20× |

| Darwin arm64 | 32.54 | — | — |

| Windows AMD64 | 48.17 | 15.14 | 3.18× |

3 threads

| Platform | generic (s) | avx2 (s) | avx2 speedup |

|---|---|---|---|

| Darwin x86_64 | 50.58 | 13.34 | 3.79× |

| Darwin arm64 | 35.03 | — | — |

| Windows AMD64 | 38.47 | 14.79 | 2.60× |
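For readers who want to reproduce these figures from their own timings, the two calculations involved are a geometric mean over instances and a ratio of times. A minimal sketch using Python's standard library follows; the helper name `speedup` is illustrative and not part of cbcbox.

```python
from statistics import geometric_mean


def speedup(baseline_s, candidate_s):
    """How many times faster the candidate is than the baseline."""
    return baseline_s / candidate_s


# avx2 speedup on Darwin x86_64, 1 thread, from the summary table above.
print(f"{speedup(55.74, 17.44):.2f}x")  # prints 3.20x

# Parallel speedup for pp08a (Darwin x86_64, avx2): 1 thread vs 3 threads.
print(f"{speedup(4.92, 8.97):.2f}x")  # prints 0.55x

# Geometric mean of a list of per-instance solve times (seconds).
print(geometric_mean([1.0, 4.0, 16.0]))
```

The geometric mean is used rather than the arithmetic mean so that a single slow instance does not dominate the summary.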

Per-instance results

pp08a

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 4.92 | 8.97 | 0.55× |

| Darwin x86_64 | generic | 9.01 | 8.58 | 1.05× |

| Darwin arm64 | generic | 9.05 | 14.72 | 0.62× |

| Windows AMD64 | avx2 | 4.91 | 8.14 | 0.60× |

| Windows AMD64 | generic | 12.52 | 22.82 | 0.55× |

sprint_hidden06_j

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 52.21 | 44.13 | 1.18× |

| Darwin x86_64 | generic | 167.56 | 218.53 | 0.77× |

| Darwin arm64 | generic | 116.90 | 120.40 | 0.97× |

| Windows AMD64 | avx2 | 57.59 | 54.94 | 1.05× |

| Windows AMD64 | generic | 246.50 | 210.51 | 1.17× |

air03

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 1.68 | 1.68 | 1.00× |

| Darwin x86_64 | generic | 6.03 | 7.80 | 0.77× |

| Darwin arm64 | generic | 3.86 | 3.86 | 1.00× |

| Windows AMD64 | avx2 | 2.32 | 2.39 | 0.97× |

| Windows AMD64 | generic | 5.90 | 6.08 | 0.97× |

air04

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 52.16 | 32.47 | 1.61× |

| Darwin x86_64 | generic | 113.43 | 99.69 | 1.14× |

| Darwin arm64 | generic | 101.85 | 76.95 | 1.32× |

| Windows AMD64 | avx2 | 33.51 | 27.09 | 1.24× |

| Windows AMD64 | generic | 154.46 | 88.84 | 1.74× |

air05

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 24.58 | 16.39 | 1.50× |

| Darwin x86_64 | generic | 56.03 | 53.86 | 1.04× |

| Darwin arm64 | generic | 46.16 | 47.12 | 0.98× |

| Windows AMD64 | avx2 | 17.96 | 23.19 | 0.77× |

| Windows AMD64 | generic | 57.44 | 48.23 | 1.19× |

nw04

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 13.02 | 12.41 | 1.05× |

| Darwin x86_64 | generic | 34.71 | 44.80 | 0.77× |

| Darwin arm64 | generic | 32.80 | 35.57 | 0.92× |

| Windows AMD64 | avx2 | 15.87 | 15.83 | 1.00× |

| Windows AMD64 | generic | 57.51 | 54.34 | 1.06× |

mzzv11

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 124.54 | 78.60 | 1.58× |

| Darwin x86_64 | generic | 501.14 | 475.71 | 1.05× |

| Darwin arm64 | generic | 241.20 | 166.05 | 1.45× |

| Windows AMD64 | avx2 | 118.42 | 146.32 | 0.81× |

| Windows AMD64 | generic | 254.40 | 265.07 | 0.96× |

trd445c

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 107.75 | 93.87 | 1.15× |

| Darwin x86_64 | generic | 244.45 | 258.53 | 0.95× |

| Darwin arm64 | generic | 173.44 | 194.91 | 0.89× |

| Windows AMD64 | avx2 | 100.53 | 109.16 | 0.92× |

| Windows AMD64 | generic | 229.88 | 228.59 | 1.01× |

nursesched-sprint02

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 39.21 | 29.25 | 1.34× |

| Darwin x86_64 | generic | 93.62 | 116.17 | 0.81× |

| Darwin arm64 | generic | 82.99 | 91.06 | 0.91× |

| Windows AMD64 | avx2 | 26.54 | 26.53 | 1.00× |

| Windows AMD64 | generic | 113.09 | 84.91 | 1.33× |

stein45

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 10.41 | 9.72 | 1.07× |

| Darwin x86_64 | generic | 25.82 | 22.01 | 1.17× |

| Darwin arm64 | generic | 18.54 | 13.80 | 1.34× |

| Windows AMD64 | avx2 | 8.42 | 7.49 | 1.12× |

| Windows AMD64 | generic | 26.32 | 17.88 | 1.47× |

neos-810286

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 15.12 | 11.36 | 1.33× |

| Darwin x86_64 | generic | 43.72 | 51.83 | 0.84× |

| Darwin arm64 | generic | 24.62 | 26.27 | 0.94× |

| Windows AMD64 | avx2 | 13.01 | 13.83 | 0.94× |

| Windows AMD64 | generic | 35.83 | 39.27 | 0.91× |

neos-1281048

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 23.55 | 7.22 | 3.26× |

| Darwin x86_64 | generic | 118.08 | 20.31 | 5.81× |

| Darwin arm64 | generic | 38.11 | 34.21 | 1.11× |

| Windows AMD64 | avx2 | 13.61 | 19.31 | 0.71× |

| Windows AMD64 | generic | 36.23 | 21.55 | 1.68× |

j3050_8

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 6.00 | 3.30 | 1.82× |

| Darwin x86_64 | generic | 7.64 | 9.95 | 0.77× |

| Darwin arm64 | generic | 7.41 | 6.79 | 1.09× |

| Windows AMD64 | avx2 | 2.17 | 2.33 | 0.93× |

| Windows AMD64 | generic | 8.42 | 7.00 | 1.20× |

qiu

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 47.14 | 12.33 | 3.82× |

| Darwin x86_64 | generic | 66.16 | 33.00 | 2.00× |

| Darwin arm64 | generic | 84.15 | 27.90 | 3.02× |

| Windows AMD64 | avx2 | 23.97 | 11.98 | 2.00× |

| Windows AMD64 | generic | 80.02 | 38.81 | 2.06× |

gesa2-o

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 5.17 | 4.82 | 1.07× |

| Darwin x86_64 | generic | 13.17 | 12.38 | 1.06× |

| Darwin arm64 | generic | 9.15 | 9.72 | 0.94× |

| Windows AMD64 | avx2 | 3.38 | 3.24 | 1.04× |

| Windows AMD64 | generic | 10.98 | 10.72 | 1.02× |

pk1

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 37.42 | 31.92 | 1.17× |

| Darwin x86_64 | generic | 92.01 | 83.60 | 1.10× |

| Darwin arm64 | generic | 63.56 | 52.95 | 1.20× |

| Windows AMD64 | avx2 | 33.24 | 36.52 | 0.91× |

| Windows AMD64 | generic | 102.32 | 68.82 | 1.49× |

mas76

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 20.28 | 46.06 | 0.44× |

| Darwin x86_64 | generic | 55.18 | 96.05 | 0.57× |

| Darwin arm64 | generic | 40.46 | 53.86 | 0.75× |

| Windows AMD64 | avx2 | 19.48 | 32.70 | 0.60× |

| Windows AMD64 | generic | 53.11 | 68.40 | 0.78× |

app1-1

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 6.37 | 7.42 | 0.86× |

| Darwin x86_64 | generic | 728.44 | 578.89 | 1.26× |

| Darwin arm64 | generic | 13.51 | 231.96 | 0.06× |

| Windows AMD64 | avx2 | 20.44 | 7.18 | 2.85× |

| Windows AMD64 | generic | 81.69 | 23.39 | 3.49× |

eil33-2

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 45.60 | 17.84 | 2.56× |

| Darwin x86_64 | generic | 163.15 | 72.45 | 2.25× |

| Darwin arm64 | generic | 113.45 | 75.05 | 1.51× |

| Windows AMD64 | avx2 | 30.70 | 17.46 | 1.76× |

| Windows AMD64 | generic | 168.16 | 70.78 | 2.38× |

fiber

| Platform | Build | 1 thread (s) | 3 threads (s) | parallel speedup |

|---|---|---|---|---|

| Darwin x86_64 | avx2 | 1.50 | 1.10 | 1.36× |

| Darwin x86_64 | generic | 6.79 | 7.53 | 0.90× |

| Darwin arm64 | generic | 2.07 | 2.63 | 0.79× |

| Windows AMD64 | avx2 | 1.91 | 2.06 | 0.93× |

| Windows AMD64 | generic | 3.90 | 4.20 | 0.93× |

NAQ — Never Asked Questions

Why not benchmark on the full MIPLIB 2017 library?

Several practical constraints shape the benchmark set:

- CI time limits. GitHub Actions enforces a 6-hour wall-clock limit per job. The MIPLIB 2017 benchmark set alone contains 240 instances, many of which take hours even on fast hardware. Including all of them would make every CI run time out before producing any useful measurements.

- Comparing apples to apples requires instances solved to optimality. If some instances are only solved within a time limit (i.e., a gap > 0 %), a meaningful performance comparison must account for both solve time and solution quality simultaneously. This greatly complicates analysis and makes plots harder to interpret. Restricting to instances that CBC reliably solves to proven optimality keeps the comparison clean: a single elapsed-time number per instance is all that is needed.

- The instance set is intentionally biased toward set packing / covering / partitioning structure. Many instances in the benchmark (pp08a, sprint_hidden06_j, nw04, mzzv11, nursesched-sprint02, air0x, trd445c) contain large blocks of set packing, covering, or partitioning constraints. This structure arises naturally in applications such as crew scheduling, nurse scheduling, vehicle routing, and cutting stock, which is exactly the domain where column generation is most valuable. The benchmark deliberately targets this problem class, as a use case of particular interest, rather than aiming to be a general-purpose solver survey.

License

CBC and all COIN-OR components are distributed under the Eclipse Public License 2.0. OpenBLAS is distributed under the BSD 3-Clause licence. SuiteSparse AMD is distributed under the BSD 3-Clause licence. Nauty is distributed under the Apache 2.0 licence.

