video and data IO tools for Python


daio

Video and data IO tools for Python.

Links: API documentation, GitHub repository

Installation

- via conda or mamba:

conda install conda-forge::daio

- if you prefer pip:

pip install daio
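To confirm that the installation succeeded, one option is to query the installed version through the standard library. This is only a sketch: `installed_version` is a hypothetical helper name, not part of daio.

```python
from importlib import metadata


def installed_version(package_name):
    """Return the installed version string of a package, or None if it is absent."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


# Prints None if daio is not installed in the active environment.
print("daio version:", installed_version("daio"))
```

Because the helper returns `None` rather than raising, it can also be used in setup scripts that need to decide whether to install the package.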

Use

Video IO

Write video:

import numpy as np

from daio.video import VideoReader, VideoWriter

writer = VideoWriter('/path/to/video.mp4', fps=25)

for i in range(20):

    # random single-channel 8-bit frame of shape (720, 1280)

    frame = np.random.randint(0,255,size=(720,1280), dtype='uint8')

    writer.write(frame)

writer.close()

Read video using speed-optimized array-like indexing or iteration:

reader = VideoReader('/path/to/video.mp4')

frame_7 = reader[7]

first10_frames = reader[:10]

for frame in reader:

    # process_frame stands for your own per-frame function

    process_frame(frame)

reader.close()
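For readers unfamiliar with what "array-like indexing or iteration" means in Python terms, the sketch below shows the protocol on a toy object. `ToyFrameSource` is a hypothetical stand-in that stores strings instead of decoded frames; it is not daio's implementation and says nothing about how `VideoReader` achieves its speed.

```python
class ToyFrameSource:
    """Hypothetical stand-in for a video reader; frames are plain strings here."""

    def __init__(self, n_frames):
        self._frames = [f"frame-{i}" for i in range(n_frames)]

    def __len__(self):
        return len(self._frames)

    def __getitem__(self, index):
        # An integer returns one frame, a slice returns a list of frames.
        # Without __iter__, Python iterates by calling __getitem__ with
        # 0, 1, 2, ... until an IndexError is raised.
        return self._frames[index]


source = ToyFrameSource(20)
frame_7 = source[7]                        # single frame
first10_frames = source[:10]               # slice of frames
all_frames = [frame for frame in source]   # iteration
```

Supporting `__getitem__` and `__len__` is what lets the same object be indexed, sliced, and looped over like a list or array.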

You can also use with statements to handle file closure:

with VideoWriter('/path/to/video.mp4', fps=25) as writer:

    for i in range(20):

        frame = np.random.randint(0,255,size=(720,1280), dtype='uint8')

        writer.write(frame)

# or

with VideoReader('/path/to/video.mp4') as reader:

    frame_7 = reader[7]
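The benefit of the with form is that the file is closed even if the loop body raises. The sketch below illustrates this with a toy class; `ToyWriter` is hypothetical and only mimics the `write`/`close` shape shown above, it is not daio's `VideoWriter`.

```python
class ToyWriter:
    """Hypothetical writer that records whether close() was called."""

    def __init__(self):
        self.frames = []
        self.closed = False

    def write(self, frame):
        if self.closed:
            raise ValueError("write to closed writer")
        self.frames.append(frame)

    def close(self):
        self.closed = True

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc_value, traceback):
        # Runs on normal exit and when the block raises; returning False
        # lets any exception propagate after the cleanup.
        self.close()
        return False


# Normal use: close() is called when the block ends.
with ToyWriter() as writer:
    for i in range(20):
        writer.write(i)

# Failure mid-write: close() is still called before the error propagates.
failed = ToyWriter()
try:
    with failed:
        failed.write(0)
        raise RuntimeError("simulated failure mid-write")
except RuntimeError:
    pass
```

With an explicit `close()` call instead, the second case would leave the writer open unless the call were wrapped in `try`/`finally`.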

HDF5 file IO

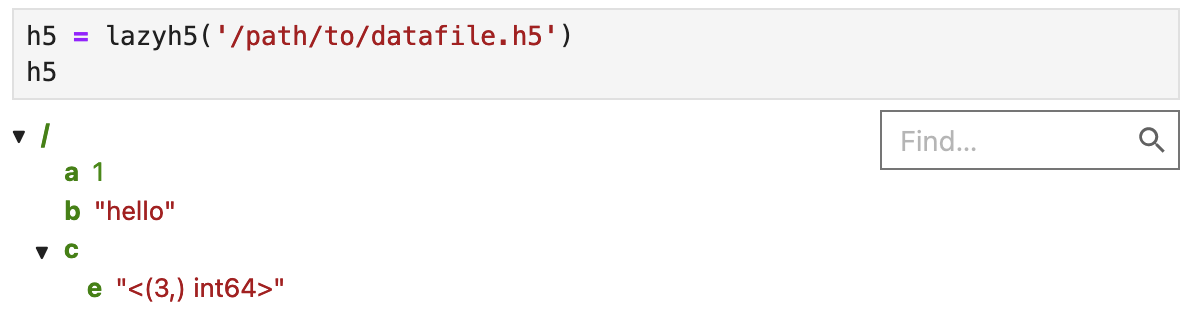

Lazily load HDF5 with a dict-like interface (contents are only loaded when accessed):

from daio.h5 import lazyh5

h5 = lazyh5('/path/to/datafile.h5')

b_loaded = h5['b']

e_loaded = h5['c']['e']

h5.keys()
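The idea of loading contents only on access can be illustrated without any HDF5 file. In the sketch below, `LazyMapping` and its `load_log` attribute are hypothetical names used for illustration; this is not how `lazyh5` is implemented, only a demonstration of the dict-like lazy pattern.

```python
from collections.abc import Mapping


class LazyMapping(Mapping):
    """Hypothetical dict-like view that calls a loader only when a key is read."""

    def __init__(self, loaders):
        self._loaders = loaders   # key -> zero-argument function
        self.load_log = []        # records which keys were actually loaded

    def __getitem__(self, key):
        self.load_log.append(key)
        return self._loaders[key]()

    def __iter__(self):
        return iter(self._loaders)

    def __len__(self):
        return len(self._loaders)


lazy = LazyMapping({
    "a": lambda: 1,
    "b": lambda: "hello",
    "big": lambda: list(range(1_000_000)),
})

names = list(lazy.keys())   # listing keys loads nothing
b_loaded = lazy["b"]        # only "b" is loaded here
```

After these two lines, `lazy.load_log` contains only `"b"`: the large `"big"` entry was never built, which is the point of a lazy interface for big data files.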

Create a new HDF5 file (or add items to an existing file by passing the argument readonly=False):

h5 = lazyh5('test.h5')

h5['a'] = 1

h5['b'] = 'hello'

h5['c'] = {} # create subgroup

h5['c']['e'] = [2,3,4]

Load an entire HDF5 file into a dict, or save a dict to an HDF5 file:

import numpy as np

# save dict to HDF5 file:

some_dict = dict(a = 1, b = np.random.randn(3,4,5), c = dict(g='nested'), d = 'some_string')

lazyh5('/path/to/datafile.h5').from_dict(some_dict)

# load dict from HDF5 file:

loaded = lazyh5('/path/to/datafile.h5').to_dict()
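HDF5 addresses groups and datasets by slash-separated paths, so a nested dict corresponds naturally to a group hierarchy. The sketch below shows that correspondence in plain Python; `flatten` and `unflatten` are hypothetical helpers, and whether daio performs the conversion this way is an assumption.

```python
def flatten(nested, prefix=""):
    """Map a nested dict to {'group/sub/name': value} pairs."""
    flat = {}
    for key, value in nested.items():
        path = f"{prefix}/{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def unflatten(flat):
    """Rebuild the nested dict from slash-separated paths."""
    nested = {}
    for path, value in flat.items():
        *groups, name = path.split("/")
        node = nested
        for group in groups:
            node = node.setdefault(group, {})
        node[name] = value
    return nested


some_dict = dict(a=1, c=dict(g="nested"), d="some_string")
flat = flatten(some_dict)      # 'c' becomes the path prefix of 'c/g'
restored = unflatten(flat)     # round trip back to the nested form
```

This toy version drops empty sub-dicts and assumes keys contain no `/` characters.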

In Jupyter, you can interactively explore the file structure.

Old interface

import numpy as np

from daio.h5 import save_to_h5, load_from_h5

# save dict to HDF5 file:

some_dict = dict(a = 1, b = np.random.randn(3,4,5), c = dict(g='nested'), d = 'some_string')

save_to_h5('/path/to/datafile.h5', some_dict)

# load dict from HDF5 file:

dict_loaded = load_from_h5('/path/to/datafile.h5')


File details

Details for the file daio-0.0.9.tar.gz.

File metadata

- Download URL: daio-0.0.9.tar.gz

- Upload date:

- Size: 11.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.22

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c782b4dd505625e77a0e1589ed3c056eb3d14c547ade7694b9a0556fb0adbb4e |
| MD5 | 8d18e45825d0f7591a59bb1cd869a732 |
| BLAKE2b-256 | f6ca96daca60f5c7ab48b4ca5e9b95c5ec3501a0ccd0cfb718cd9577fdb1a6c1 |

File details

Details for the file daio-0.0.9-py3-none-any.whl.

File metadata

- Download URL: daio-0.0.9-py3-none-any.whl

- Upload date:

- Size: 10.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.22

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 86dbc19225be04bd250a4bb97d13e159f490940bd3c2c6895e5cd1478b3b6cf6 |
| MD5 | 7e22f82abcf32eca0cd3050688cf8a6a |
| BLAKE2b-256 | d3d37185f2132d1dac5754c9d48106e1634707366f7e56a990f6e5ab2c5937c0 |
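A downloaded file can be checked against the SHA256 digests listed above with the standard library. This is a minimal sketch: `sha256_of_file` is a hypothetical helper name, and the demonstration hashes a small temporary file rather than an actual daio archive.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Demonstration on a throwaway file; for a real check, pass the path of the
# downloaded archive and compare the result with the digest in the table.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"abc")
demo_digest = sha256_of_file(tmp.name)
os.remove(tmp.name)
```

Reading in chunks keeps memory use constant regardless of file size.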