Time Robustness Audit for RL agents — measures timing reliance, deployment robustness, and stress resilience

Project description

deltatau-audit

Audited by deltatau-audit (CartPole speed-randomized GRU):

Find and fix timing failures in RL agents.

RL agents silently break when deployment timing differs from training — frame drops, variable inference latency, sensor rate changes. deltatau-audit finds these failures and fixes them in one command.

Try it in 30 seconds

pip install "deltatau-audit[demo]"

python -m deltatau_audit demo cartpole

# Faster: python -m deltatau_audit demo cartpole --workers auto

No GPU. No MuJoCo. Just pip install and run. You'll see a Before/After comparison:

| Scenario | Before (Baseline) | After (Speed-Randomized) | Change |

|---|---|---|---|

| 5x speed | 12% | 49% | +37pp |

| Speed jitter | 66% | 115% | +49pp |

| Observation delay | 82% | 95% | +13pp |

| Mid-episode spike | 23% | 62% | +39pp |

| Deployment | FAIL (0.23) | DEGRADED (0.62) | +0.39 |

The standard agent collapses under timing perturbations. Speed-randomized training dramatically improves robustness. Full HTML reports with charts are generated in demo_report/.

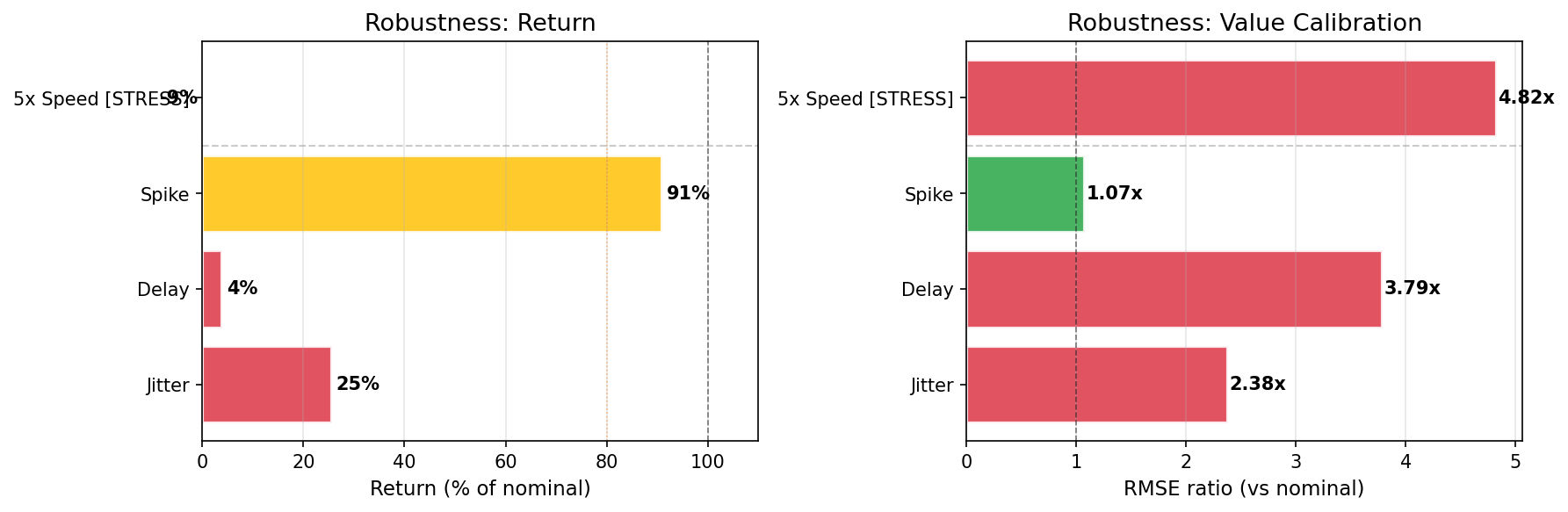

The same pattern at MuJoCo scale: HalfCheetah PPO

A PPO agent trained to reward ~990 on HalfCheetah-v5 shows even more catastrophic timing failures — all 4 scenarios statistically significant (95% bootstrap CI):

| Scenario | Return (% of nominal) | 95% CI | Drop |

|---|---|---|---|

| Observation delay (1 step) | 3.8% | [2.4%, 5.2%] | -96% |

| Speed jitter (2 +/- 1) | 25.4% | [23.5%, 27.8%] | -75% |

| 5x speed (unseen) | -9.3% | [-10.6%, -8.4%] | -109% |

| Mid-episode spike (1->5->1) | 90.9% | [86.3%, 97.8%] | -9% |

A single step of observation delay destroys 96% of performance. The agent goes negative at 5x speed.

View interactive report | Download report ZIP

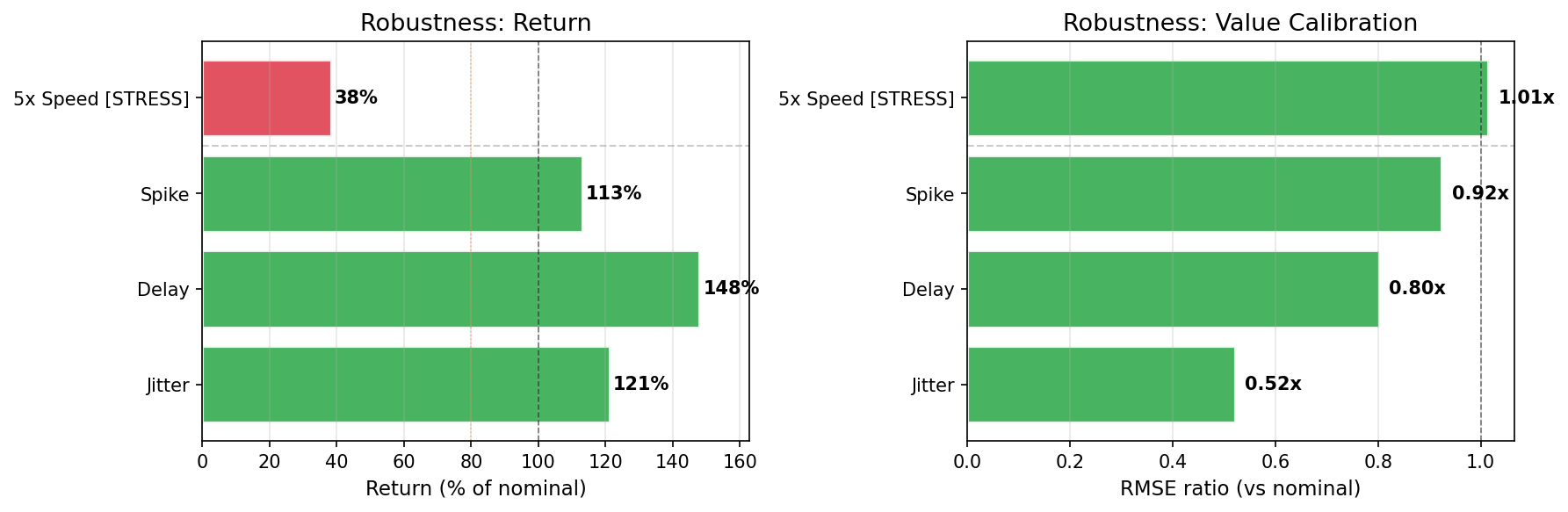

Speed-randomized training fixes the problem

| Scenario | Before (Standard) | After (Speed-Randomized) | Change |

|---|---|---|---|

| Observation delay | 2% | 148% | +146pp |

| Speed jitter | 28% | 121% | +93pp |

| 5x speed (unseen) | -12% | 38% | +50pp |

| Mid-episode spike | 100% | 113% | +13pp |

| Deployment | FAIL (0.02) | PASS (1.00) | |

| Quadrant | deployment_fragile | deployment_ready |

View Before report | View After report

Reproduce HalfCheetah results

pip install "deltatau-audit[sb3,mujoco]"

git clone https://github.com/maruyamakoju/deltatau-audit.git

cd deltatau-audit

python examples/audit_halfcheetah.py # standard PPO audit (~30 min)

python examples/train_robust_halfcheetah.py # train robust PPO (~30 min)

python examples/audit_before_after.py # Before/After comparison

Or download pre-trained models from Releases.

Install

pip install deltatau-audit # core

pip install "deltatau-audit[demo]" # + CartPole demo (recommended start)

pip install "deltatau-audit[sb3,mujoco]" # + SB3 + MuJoCo environments

Find and Fix in One Command

pip install "deltatau-audit[sb3]"

deltatau-audit fix-sb3 --algo ppo --model my_model.zip --env HalfCheetah-v5

This single command:

- Audits your model (finds timing failures)

- Retrains with speed randomization (the fix)

- Re-audits the fixed model (verifies the fix)

- Generates Before/After comparison report

BEFORE vs AFTER

Scenario Before After Change

------------ ---------- ---------- ----------

speed_5x 12.7% 76.6% + 63.9pp

jitter 43.7% 100.0% + 56.3pp

delay 100.0% 100.0% + 0.0pp

spike 26.7% 91.9% + 65.2pp

Deployment: FAIL (0.27) -> MILD (0.92)

Quadrant: deployment_fragile -> deployment_ready

Output: fixed model (.zip) + HTML reports + comparison.html (+ comparison.md).

Options: --timesteps (training budget), --speed-min/--speed-max (speed range), --workers (parallel episodes), --seed (reproducible), --ci (pipeline gate).

Audit Your Own SB3 Model

Just want the diagnosis? Use audit-sb3:

deltatau-audit audit-sb3 --algo ppo --model my_model.zip --env HalfCheetah-v5 --out my_report/

# Faster — use all CPU cores:

deltatau-audit audit-sb3 --algo ppo --model my_model.zip --env HalfCheetah-v5 --workers auto

# Reproducible:

deltatau-audit audit-sb3 --algo ppo --model my_model.zip --env HalfCheetah-v5 --seed 42

No model handy? Try with a sample:

gh release download assets -R maruyamakoju/deltatau-audit -p cartpole_ppo_sb3.zip

deltatau-audit audit-sb3 --algo ppo --model cartpole_ppo_sb3.zip --env CartPole-v1

Supported algorithms: ppo, sac, td3, a2c. Any Gymnasium environment ID works.

Python API (for custom workflows)

# Audit only

from deltatau_audit.adapters.sb3 import SB3Adapter

from deltatau_audit.auditor import run_full_audit

from deltatau_audit.report import generate_report

from stable_baselines3 import PPO

import gymnasium as gym

model = PPO.load("my_model.zip")

adapter = SB3Adapter(model)

result = run_full_audit(

adapter,

lambda: gym.make("HalfCheetah-v5"),

speeds=[1, 2, 3, 5, 8],

n_episodes=30,

n_workers=4, # parallel episode collection

seed=42, # reproducible results

)

generate_report(result, "my_audit/", title="My Agent Audit")

# Full fix pipeline

from deltatau_audit.fixer import fix_sb3_model

result = fix_sb3_model("my_model.zip", "ppo", "HalfCheetah-v5",

output_dir="fix_output/")

# result["fixed_model_path"] -> "fix_output/ppo_fixed.zip"

What It Measures

| Badge | What it tests | How |

|---|---|---|

| Reliance | Does the agent use internal timing? | Tampers with internal Δτ, measures value prediction error |

| Deployment | Does the agent survive realistic timing changes? | Speed jitter, observation delay, mid-episode spikes, sensor noise |

| Stress | Does the agent survive extreme timing changes? | 5× speed (unseen during training) |

Deployment scenarios (4): jitter (speed 2±1), delay (1-step obs lag), spike (1→5→1), obs_noise (Gaussian σ=0.1 on observations). All four run automatically.

Agents without internal timing (standard PPO, SAC, etc.) get Reliance: N/A — only Deployment and Stress are tested.

Rating Scale

| Rating | Return Ratio | Meaning |

|---|---|---|

| PASS | > 95% | Production ready |

| MILD | > 80% | Minor degradation |

| DEGRADED | > 50% | Significant loss |

| FAIL | <= 50% | Agent breaks |

All return ratios include bootstrap 95% confidence intervals with significance testing.

Performance

By default all episodes run serially. Use --workers to parallelize:

# Auto-detect CPU core count (recommended for local runs)

deltatau-audit audit-sb3 --algo ppo --model model.zip --env HalfCheetah-v5 --workers auto

# Explicit count

deltatau-audit demo cartpole --workers 4

| Workers | 30 episodes × 5 scenarios | Speedup |

|---|---|---|

| 1 (default) | ~3 min (CartPole) | — |

| 4 | ~50 sec | ~3.5× |

| auto (8 cores) | ~30 sec | ~6× |

--workers auto maps to os.cpu_count(). Works with all audit-* and demo subcommands. For reproducibility, pair with --seed 42 (parallel order is non-deterministic but per-episode seeds are fixed).

CI / Pipeline Integration

python -m deltatau_audit demo cartpole --ci --out ci_report/

# exit 0 = pass, exit 1 = warn (stress), exit 2 = fail (deployment)

Outputs ci_summary.json and ci_summary.md for pipeline gates and PR comments.

Output formats

# PR-ready markdown table (appends to $GITHUB_STEP_SUMMARY in GitHub Actions)

deltatau-audit audit-sb3 --algo ppo --model model.zip --env CartPole-v1 \

--format markdown

# Structured JSON to stdout (pipe to jq, scripts, or downstream tools)

deltatau-audit audit-sb3 --algo ppo --model model.zip --env CartPole-v1 \

--format json | jq '.summary'

# Combine JSON + CI exit codes

deltatau-audit audit-sb3 ... --format json --ci > result.json

JSON mode redirects all progress output to stderr so stdout contains only valid, parseable JSON. Reports are still generated in --out.

Markdown PR comment example

## Time Robustness Audit: PASS

| Badge | Rating | Score |

|-------|--------|-------|

| **Deployment** | **PASS** | 0.92 |

| **Stress** | **MILD** | 0.81 |

| Scenario | Category | Return | Significant |

|----------|----------|--------|-------------|

| jitter | Deployment | 95% | — |

...

GitHub Action (one line)

- uses: maruyamakoju/deltatau-audit@main

with:

command: audit-sb3

model: model.zip

algo: ppo

env: CartPole-v1

extras: sb3

Outputs status, deployment-score, stress-score for downstream steps. Exit code 0/1/2 for pass/warn/fail.

Full workflow examples

CartPole demo gate (zero config):

- uses: maruyamakoju/deltatau-audit@main

- uses: actions/upload-artifact@v4

if: always()

with:

name: timing-audit

path: audit_report/

Audit your own SB3 model:

- uses: maruyamakoju/deltatau-audit@main

id: audit

with:

command: audit-sb3

model: model.zip

algo: ppo

env: HalfCheetah-v5

extras: "sb3,mujoco"

- run: echo "Deployment score: ${{ steps.audit.outputs.deployment-score }}"

Manual install (if you prefer):

- run: pip install "deltatau-audit[sb3]"

- run: deltatau-audit audit-sb3 --algo ppo --model model.zip --env CartPole-v1 --ci

Speed-Randomized Training (the fix)

The fix for timing failures is simple: train with variable speed. Use JitterWrapper during SB3 training:

import gymnasium as gym

from stable_baselines3 import PPO

from deltatau_audit.wrappers import JitterWrapper

# Wrap env with speed randomization (speed 1-5)

env = JitterWrapper(gym.make("CartPole-v1"), base_speed=3, jitter=2)

model = PPO("MlpPolicy", env)

model.learn(total_timesteps=100_000)

model.save("robust_model")

This is exactly what fix-sb3 does under the hood. Use the wrapper directly when you want more control over training.

Available wrappers: JitterWrapper (random speed), FixedSpeedWrapper (constant speed), PiecewiseSwitchWrapper (scheduled speed changes), ObservationDelayWrapper (sensor delay), ObsNoiseWrapper (Gaussian observation noise).

Audit CleanRL Agents

CleanRL agents are plain nn.Module subclasses — no framework wrapper needed.

deltatau-audit audit-cleanrl \

--checkpoint runs/CartPole-v1/agent.pt \

--agent-module ppo_cartpole.py \

--agent-class Agent \

--agent-kwargs obs_dim=4,act_dim=2 \

--env CartPole-v1

Or via Python API:

from deltatau_audit.adapters.cleanrl import CleanRLAdapter

# Agent class must implement get_action_and_value(obs)

adapter = CleanRLAdapter(agent, lstm=False)

result = run_full_audit(adapter, env_factory, speeds=[1, 2, 3, 5, 8])

LSTM agents: pass --lstm (CLI) or CleanRLAdapter(agent, lstm=True) (API).

See examples/audit_cleanrl.py for a complete runnable example.

Sim-to-Real Transfer

Timing failures are one of the main causes of sim-to-real gaps. A policy that runs at 50 Hz in simulation may be deployed at 30 Hz or with variable latency in the real world — and collapse.

Simulation → Reality

50 Hz → 30 Hz (0.6x speed)

Fixed dt → Variable dt (jitter)

Instant obs → Observation delay (network/sensor lag)

Stable → Mid-episode spikes (system load)

deltatau-audit measures exactly these failure modes. If your agent passes Deployment ≥ MILD, it is likely to survive real-world timing variation.

IsaacLab / RSL-RL

For policies trained with IsaacLab (RSL-RL format):

from deltatau_audit.adapters.torch_policy import TorchPolicyAdapter

# Define your actor/critic architectures (same as training)

actor = MyActorNet(obs_dim=48, act_dim=12)

critic = MyCriticNet(obs_dim=48)

# Loads RSL-RL checkpoint format automatically

adapter = TorchPolicyAdapter.from_checkpoint(

"model.pt",

actor=actor,

critic=critic,

is_discrete=False, # continuous actions

)

result = run_full_audit(adapter, env_factory, speeds=[1, 2, 3, 5])

Supported checkpoint formats:

{"model_state_dict": {"actor.*": ..., "critic.*": ...}}(RSL-RL){"actor": state_dict, "critic": state_dict}(explicit split)- Raw

state_dict(actor-only)

Or use a callable — no checkpoint loading needed:

# Works with any framework's inference API

def my_act(obs):

action = runner.alg.actor_critic.act(obs)

value = runner.alg.actor_critic.evaluate(obs)

return action, value

adapter = TorchPolicyAdapter(my_act)

See examples/isaaclab_skeleton.py for a complete IsaacLab skeleton.

Custom Adapters

Implement AgentAdapter (see deltatau_audit/adapters/base.py):

from deltatau_audit.adapters.base import AgentAdapter

class MyAdapter(AgentAdapter):

def reset_hidden(self, batch=1, device="cpu"):

return torch.zeros(batch, hidden_dim)

def act(self, obs, hidden):

# Returns: (action, value, hidden_new, dt_or_None)

...

return action, value, hidden_new, None

Built-in adapters: SB3Adapter (PPO/SAC/TD3/A2C), SB3RecurrentAdapter (RecurrentPPO), CleanRLAdapter (CleanRL MLP/LSTM), TorchPolicyAdapter (IsaacLab/RSL-RL/custom), InternalTimeAdapter (Dt-GRU models).

Compare Two Audits

After auditing a fixed model, compare to a previous result in one command:

# Generate comparison.html alongside the new audit

deltatau-audit audit-sb3 --algo ppo --model fixed.zip --env HalfCheetah-v5 \

--compare before_audit/summary.json --out after_audit/

Or use the diff subcommand directly (writes both .md and .html):

python -m deltatau_audit diff before/summary.json after/summary.json --out comparison.md

Experiment Tracking

Push audit metrics to Weights & Biases or MLflow after any audit:

pip install "deltatau-audit[wandb]"

deltatau-audit audit-sb3 --model m.zip --algo ppo --env CartPole-v1 \

--wandb --wandb-project my-project --wandb-run baseline

pip install "deltatau-audit[mlflow]"

deltatau-audit audit-sb3 --model m.zip --algo ppo --env CartPole-v1 \

--mlflow --mlflow-experiment my-experiment

Or from Python:

from deltatau_audit.tracker import log_to_wandb, log_to_mlflow

result = run_full_audit(adapter, env_factory)

log_to_wandb(result, project="my-project")

log_to_mlflow(result, experiment_name="my-experiment")

Logged scalars: deployment_score, stress_score, reliance_score, per-scenario return_ratio. Logged params: deployment_rating, stress_rating, quadrant. Missing tracker packages print a warning instead of crashing.

Adaptive Sampling

For high-confidence results, use adaptive episode sampling:

deltatau-audit audit-sb3 --model m.zip --algo ppo --env HalfCheetah-v5 \

--adaptive --target-ci-width 0.05 --max-episodes 300

Instead of a fixed episode count, this keeps sampling until every scenario's 95% bootstrap CI width on the return ratio drops below --target-ci-width (default: 0.10), or until --max-episodes is reached (default: 500).

Failure Diagnostics

When scenarios fail, the audit automatically diagnoses the root cause:

Failure Analysis

FAIL jitter — Speed Jitter Sensitivity

The agent cannot handle variable-frequency control.

Root cause: Policy overfits to fixed dt → breaks when step timing varies.

Fix: Train with JitterWrapper(base_speed=3, jitter=2).

The HTML report includes a dedicated diagnostics card with per-scenario pattern matching, root cause analysis, and actionable fix recommendations.

Feature Summary

| Feature | CLI | Python API | Since |

|---|---|---|---|

| SB3 model audit | audit-sb3 |

SB3Adapter |

v0.3.0 |

| CleanRL audit | audit-cleanrl |

CleanRLAdapter |

v0.4.0 |

| HuggingFace Hub audit | audit-hf |

SB3Adapter.from_hub() |

v0.5.0 |

| IsaacLab / custom PyTorch | — | TorchPolicyAdapter |

v0.4.5 |

| One-command fix | fix-sb3, fix-cleanrl |

fix_sb3_model() |

v0.3.8 |

| Before/After comparison | --compare, diff |

generate_comparison() |

v0.4.0 |

| CI pipeline gates | --ci |

exit codes 0/1/2 | v0.3.0 |

| Markdown PR comments | --format markdown |

_print_markdown_summary() |

v0.3.9 |

| JSON output | --format json |

json.dumps(result) |

v0.5.7 |

| Failure diagnostics | automatic | generate_diagnosis() |

v0.5.2 |

| Adaptive sampling | --adaptive |

adaptive=True |

v0.5.3 |

| Type annotations (PEP 561) | — | py.typed |

v0.5.4 |

| WandB / MLflow tracking | --wandb, --mlflow |

log_to_wandb() |

v0.5.5 |

| Parallel episodes | --workers auto |

n_workers= |

v0.4.2 |

| Reproducible seeds | --seed 42 |

seed= |

v0.4.3 |

| HTML + JSON reports | --out dir/ |

generate_report() |

v0.3.0 |

| GitHub Actions | uses: maruyamakoju/deltatau-audit@main |

— | v0.5.10 |

| Colab notebook | notebooks/quickstart.ipynb |

— | v0.6.0 |

| SB3 training callback | — | TimingAuditCallback |

v0.6.1 |

| Badge SVG generation | badge summary.json |

generate_badges() |

v0.6.1 |

License

MIT

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file deltatau_audit-0.6.2.tar.gz.

File metadata

- Download URL: deltatau_audit-0.6.2.tar.gz

- Upload date:

- Size: 278.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

03b90edd917de85702f6db654d60cf13ed0a3fccf3d00eba016b261e9014387a

|

|

| MD5 |

aa6687c6b3c92cb4577f6cf3b0d39690

|

|

| BLAKE2b-256 |

101aec0115ecc3946e49a728625618e6075f21b2231037aa22d3ec30fb731bb7

|

Provenance

The following attestation bundles were made for deltatau_audit-0.6.2.tar.gz:

Publisher:

release.yml on maruyamakoju/deltatau-audit

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

deltatau_audit-0.6.2.tar.gz -

Subject digest:

03b90edd917de85702f6db654d60cf13ed0a3fccf3d00eba016b261e9014387a - Sigstore transparency entry: 970618596

- Sigstore integration time:

-

Permalink:

maruyamakoju/deltatau-audit@1dab6a44a8f471b6d90a027a98a354c9d095d74b -

Branch / Tag:

refs/tags/v0.6.2 - Owner: https://github.com/maruyamakoju

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@1dab6a44a8f471b6d90a027a98a354c9d095d74b -

Trigger Event:

push

-

Statement type:

File details

Details for the file deltatau_audit-0.6.2-py3-none-any.whl.

File metadata

- Download URL: deltatau_audit-0.6.2-py3-none-any.whl

- Upload date:

- Size: 251.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

7a4ff377c6a018ba2e73abc803f51e1c1f628b2db219680554c5db1635e4679a

|

|

| MD5 |

8f4b69c0b6356fdf41c48c3d4466e685

|

|

| BLAKE2b-256 |

39de3d8e9fede8b304ffba3da4a5ca703f37f7a2fe03cbafbc53acca6a281ed9

|

Provenance

The following attestation bundles were made for deltatau_audit-0.6.2-py3-none-any.whl:

Publisher:

release.yml on maruyamakoju/deltatau-audit

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

deltatau_audit-0.6.2-py3-none-any.whl -

Subject digest:

7a4ff377c6a018ba2e73abc803f51e1c1f628b2db219680554c5db1635e4679a - Sigstore transparency entry: 970618647

- Sigstore integration time:

-

Permalink:

maruyamakoju/deltatau-audit@1dab6a44a8f471b6d90a027a98a354c9d095d74b -

Branch / Tag:

refs/tags/v0.6.2 - Owner: https://github.com/maruyamakoju

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@1dab6a44a8f471b6d90a027a98a354c9d095d74b -

Trigger Event:

push

-

Statement type: