Time Robustness Audit for RL agents — measures timing reliance, deployment robustness, and stress resilience


deltatau-audit

Time Robustness Audit for RL agents.

Evaluates whether an RL agent breaks when the environment's timing changes — the kind of failure that silently appears in deployment but never shows up in training.

Try it in 30 seconds

```bash
pip install "deltatau-audit[demo]"
python -m deltatau_audit demo cartpole
```

No GPU. No MuJoCo. Just pip install and run. You'll see a Before/After comparison:

| Scenario | Before (Baseline) | After (Speed-Randomized) | Change |
|---|---|---|---|
| 5x speed | 12% | 49% | +37pp |
| Speed jitter | 66% | 115% | +49pp |
| Observation delay | 82% | 95% | +13pp |
| Mid-episode spike | 23% | 62% | +39pp |
| Deployment | FAIL (0.23) | DEGRADED (0.62) | +0.39 |

The baseline agent collapses under timing perturbations. Speed-randomized training improves every scenario and lifts the deployment rating from FAIL (0.23) to DEGRADED (0.62). Full HTML reports with charts are written to demo_report/.

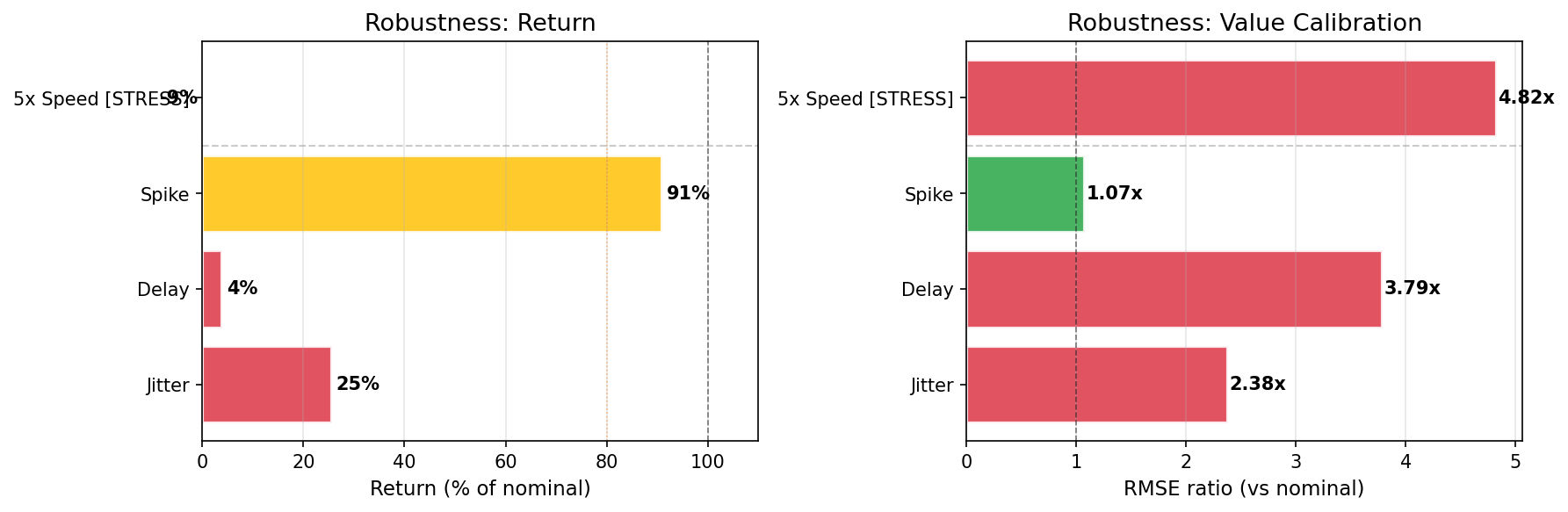

The same pattern at MuJoCo scale: HalfCheetah PPO

A PPO agent trained to a reward of about 990 on HalfCheetah-v5 fails even more severely under timing changes; the drop is statistically significant in all 4 scenarios (95% bootstrap CI):

| Scenario | Return (% of nominal) | 95% CI | Drop |
|---|---|---|---|
| Observation delay (1 step) | 3.8% | [2.4%, 5.2%] | -96% |
| Speed jitter (2 +/- 1) | 25.4% | [23.5%, 27.8%] | -75% |
| 5x speed (unseen) | -9.3% | [-10.6%, -8.4%] | -109% |
| Mid-episode spike (1->5->1) | 90.9% | [86.3%, 97.8%] | -9% |

A single step of observation delay wipes out 96% of performance, and at 5x speed the agent's return goes negative.


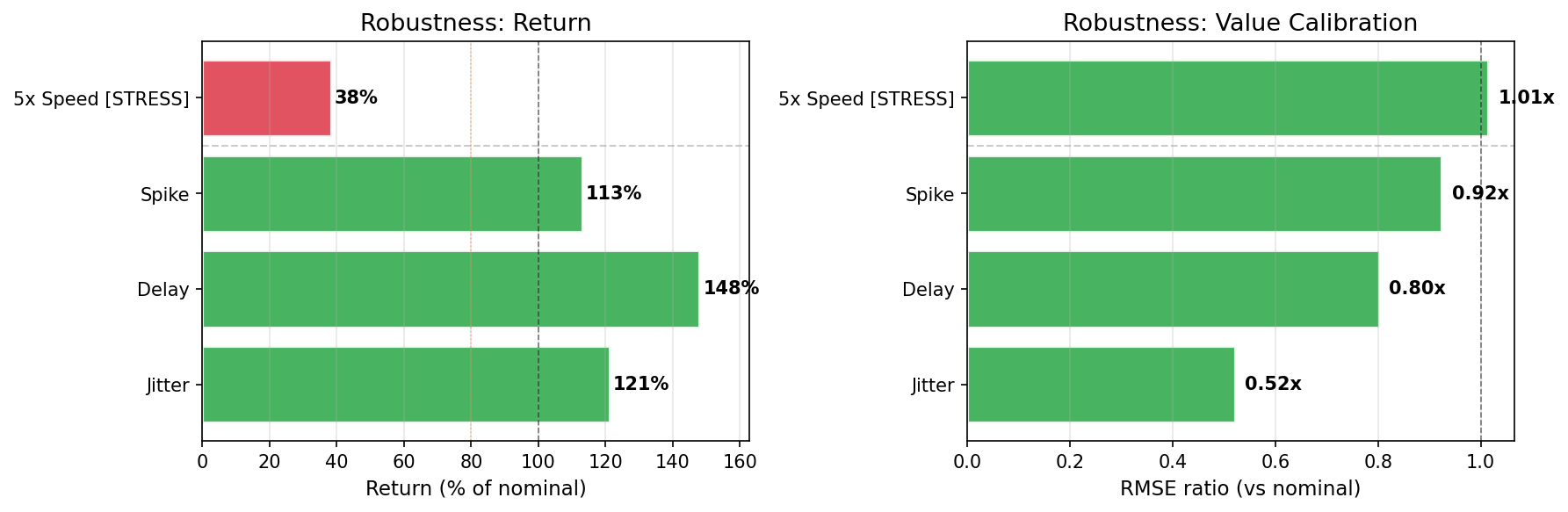

Speed-randomized training fixes the problem

| Scenario | Before (Standard) | After (Speed-Randomized) | Change |
|---|---|---|---|
| Observation delay | 2% | 148% | +146pp |
| Speed jitter | 28% | 121% | +93pp |
| 5x speed (unseen) | -12% | 38% | +50pp |
| Mid-episode spike | 100% | 113% | +13pp |
| Deployment | FAIL (0.02) | PASS (1.00) | |
| Quadrant | deployment_fragile | deployment_ready | |
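The idea behind speed-randomized training is to expose the agent to several time scales while it learns. The sketch below is one plausible way to do that and is not the code in examples/train_robust_halfcheetah.py. It assumes that "speed k" means the environment advances k steps per agent action (rewards summed), and it draws k once per episode from a hypothetical speed set that leaves out 5, matching the "5x speed (unseen)" label. SpeedRandomizedEnv and the toy CountingEnv are illustrative names; the wrapper works with any object following the Gymnasium reset()/step() five-tuple API.

```python
import random


class SpeedRandomizedEnv:
    """Hypothetical wrapper: repeats each action k times, k resampled per episode.

    Assumes the wrapped env follows the Gymnasium API:
    reset() -> (obs, info), step(a) -> (obs, reward, terminated, truncated, info).
    """

    def __init__(self, env, speeds=(1, 2, 3), seed=None):
        self.env = env
        self.speeds = tuple(speeds)  # hypothetical training speeds (must be >= 1)
        self.rng = random.Random(seed)
        self.speed = 1

    def reset(self, **kwargs):
        # Draw one time scale for the whole episode and report it in info.
        self.speed = self.rng.choice(self.speeds)
        obs, info = self.env.reset(**kwargs)
        return obs, dict(info, speed=self.speed)

    def step(self, action):
        total_reward = 0.0
        for _ in range(self.speed):
            obs, reward, terminated, truncated, info = self.env.step(action)
            total_reward += reward
            if terminated or truncated:
                break  # never step past the end of an episode
        return obs, total_reward, terminated, truncated, info


class CountingEnv:
    """Toy stand-in: the observation is the number of env steps taken so far."""

    def reset(self, **kwargs):
        self.t = 0
        return self.t, {}

    def step(self, action):
        self.t += 1
        return self.t, 1.0, False, self.t >= 100, {}
```

With a real environment you would wrap the Gymnasium env before handing it to the trainer; sampling per episode (rather than per step) keeps each rollout at a consistent time scale, which is a design choice, not something the project documents.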


Reproduce HalfCheetah results

```bash
pip install "deltatau-audit[sb3,mujoco]"
git clone https://github.com/maruyamakoju/deltatau-audit.git
cd deltatau-audit
python examples/audit_halfcheetah.py         # standard PPO audit (~30 min)
python examples/train_robust_halfcheetah.py  # train robust PPO (~30 min)
python examples/audit_before_after.py        # Before/After comparison
```

Or download the pre-trained models from the GitHub Releases page.

Install

```bash
pip install deltatau-audit                  # core
pip install "deltatau-audit[demo]"          # + CartPole demo (recommended start)
pip install "deltatau-audit[sb3,mujoco]"    # + SB3 + MuJoCo environments
```

Audit Your Own SB3 Model

```python
import gymnasium as gym
from stable_baselines3 import PPO

from deltatau_audit.adapters.sb3 import SB3Adapter
from deltatau_audit.auditor import run_full_audit
from deltatau_audit.report import generate_report

model = PPO.load("my_model.zip")
adapter = SB3Adapter(model)

result = run_full_audit(
    adapter,
    lambda: gym.make("HalfCheetah-v5"),
    speeds=[1, 2, 3, 5, 8],
    n_episodes=30,
)

generate_report(result, "my_audit/", title="My Agent Audit")
```

What It Measures

| Badge | What it tests | How |
|---|---|---|
| Reliance | Does the agent use internal timing? | Tampers with internal Dt, measures value prediction error |
| Deployment | Does the agent survive realistic timing changes? | Jitter, observation delay, mid-episode speed spikes |
| Stress | Does the agent survive extreme timing changes? | 5x speed (unseen during training) |

Agents without internal timing (standard PPO, SAC, etc.) get Reliance: N/A — only Deployment and Stress are tested.
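To make the Deployment and Stress perturbations concrete, here is a rough sketch of how they could be emulated. It is an assumption about the mechanics, not deltatau-audit's implementation: a one-step observation delay hands the agent the previous observation, and the jitter ("2 +/- 1") and spike ("1->5->1") scenarios are expressed as per-step speed schedules, where speed k means the environment advances k steps per agent action. ObservationDelay, ScheduledSpeed, jitter_speeds, spike_speeds, the spike's start/end steps, and the toy CountingEnv are all hypothetical names and parameters.

```python
import random
from collections import deque


class ObservationDelay:
    """Hypothetical wrapper: the agent sees the observation from `delay` steps ago."""

    def __init__(self, env, delay=1):
        self.env = env
        self.delay = delay
        self.buffer = deque(maxlen=delay + 1)

    def reset(self, **kwargs):
        obs, info = self.env.reset(**kwargs)
        self.buffer.clear()
        # Pre-fill so the first `delay` steps repeat the initial observation.
        for _ in range(self.delay + 1):
            self.buffer.append(obs)
        return obs, info

    def step(self, action):
        obs, reward, terminated, truncated, info = self.env.step(action)
        self.buffer.append(obs)
        return self.buffer[0], reward, terminated, truncated, info


class ScheduledSpeed:
    """Hypothetical wrapper: at agent step t, advance the env speeds[t] steps (>= 1)."""

    def __init__(self, env, speeds):
        self.env = env
        self.speeds = list(speeds)
        self.t = 0

    def reset(self, **kwargs):
        self.t = 0
        return self.env.reset(**kwargs)

    def step(self, action):
        # Past the end of the schedule, keep using its last speed.
        k = self.speeds[min(self.t, len(self.speeds) - 1)]
        self.t += 1
        total = 0.0
        for _ in range(k):
            obs, reward, terminated, truncated, info = self.env.step(action)
            total += reward
            if terminated or truncated:
                break
        return obs, total, terminated, truncated, info


def jitter_speeds(n_steps, mean=2, spread=1, seed=None):
    """Speed jitter such as "2 +/- 1": an integer speed drawn uniformly each step."""
    rng = random.Random(seed)
    return [rng.randint(mean - spread, mean + spread) for _ in range(n_steps)]


def spike_speeds(n_steps, base=1, peak=5, start=100, end=200):
    """Mid-episode spike such as "1->5->1": `peak` for start <= t < end, else `base`."""
    return [peak if start <= t < end else base for t in range(n_steps)]


class CountingEnv:
    """Toy stand-in: the observation is the number of env steps taken so far."""

    def reset(self, **kwargs):
        self.t = 0
        return self.t, {}

    def step(self, action):
        self.t += 1
        return self.t, 1.0, False, self.t >= 100, {}
```

For example, ScheduledSpeed(env, spike_speeds(1000)) would run an episode at 1x, jump to 5x for a window, then return to 1x.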

Rating Scale

| Rating | Return Ratio | Meaning |
|---|---|---|
| PASS | > 95% | Production ready |
| MILD | > 80% | Minor degradation |
| DEGRADED | > 50% | Significant loss |
| FAIL | <= 50% | Agent breaks |
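The thresholds translate directly into code. In the sketch below, rate follows the rating table exactly. deployment_score is a hypothetical helper that encodes an assumption: the Deployment rows in the result tables are consistent with taking the worst of the three deployment scenarios (jitter, observation delay, mid-episode spike) and capping it at 1.0, but that aggregation rule is not documented here.

```python
def rate(return_ratio: float) -> str:
    """Map a return ratio (perturbed return / nominal return) to a rating."""
    if return_ratio > 0.95:
        return "PASS"
    if return_ratio > 0.80:
        return "MILD"
    if return_ratio > 0.50:
        return "DEGRADED"
    return "FAIL"


def deployment_score(ratios) -> float:
    """ASSUMPTION: worst deployment scenario, capped at 1.0.

    Inferred only because it reproduces the published Deployment rows,
    e.g. min(0.66, 0.82, 0.23) = 0.23 -> FAIL for the CartPole baseline.
    """
    return min(min(ratios), 1.0)
```

Under this assumption the robust HalfCheetah agent (148%, 121%, 113%) scores min(1.13, 1.0) = 1.00, which matches the PASS (1.00) entry.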

All return ratios include bootstrap 95% confidence intervals with significance testing.
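The resampling procedure itself is not spelled out here. As an illustration only, a common choice is the percentile bootstrap: resample nominal and perturbed episode returns independently with replacement, recompute the ratio of means each time, and read the interval off the sorted ratios. Treating a drop as significant when the whole interval lies below 100% is also an assumption, though it agrees with the HalfCheetah table, where even the mid-episode spike interval [86.3%, 97.8%] excludes 100%. The function names are hypothetical.

```python
import random
import statistics


def bootstrap_ratio_ci(nominal, perturbed, n_boot=2000, alpha=0.05, seed=0):
    """Percentile-bootstrap CI for mean(perturbed) / mean(nominal).

    Episodes from each condition are resampled independently with replacement.
    Assumes the nominal mean return is positive; the ratio is meaningless otherwise.
    Returns (point_estimate, ci_low, ci_high).
    """
    nominal_mean = statistics.fmean(nominal)
    if nominal_mean <= 0:
        raise ValueError("nominal mean return must be positive")
    point = statistics.fmean(perturbed) / nominal_mean

    rng = random.Random(seed)
    ratios = []
    for _ in range(n_boot):
        nom = statistics.fmean(rng.choices(nominal, k=len(nominal)))
        per = statistics.fmean(rng.choices(perturbed, k=len(perturbed)))
        if nom > 0:  # skip degenerate resamples with a non-positive baseline
            ratios.append(per / nom)
    ratios.sort()
    lo = ratios[int(alpha / 2 * len(ratios))]
    hi = ratios[int((1 - alpha / 2) * len(ratios)) - 1]
    return point, lo, hi


def significant_drop(ci_high: float) -> bool:
    """Assumed criterion: the drop is significant if the whole CI lies below 100%."""
    return ci_high < 1.0
```

With n_episodes=30, as in the SB3 example, each list would hold 30 episode returns per condition.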

CI Mode

```bash
python -m deltatau_audit demo cartpole --ci --out ci_report/
# exit 0 = pass, exit 1 = warn (stress), exit 2 = fail (deployment)
```

Outputs ci_summary.json and ci_summary.md for pipeline gates and PR comments.
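Because the gate reports its verdict through the exit code, a pipeline can decide how strictly to treat each outcome. The sketch below wraps the documented command with Python's subprocess module. The helper names and the policy of letting stress warnings (exit 1) through while blocking deployment failures (exit 2) are hypothetical choices, not part of deltatau-audit.

```python
import subprocess
import sys

# Exit codes documented for `python -m deltatau_audit ... --ci`.
EXIT_STATUS = {0: "pass", 1: "warn (stress)", 2: "fail (deployment)"}


def gate_status(returncode: int) -> str:
    """Human-readable label; any undocumented code is treated as an error."""
    return EXIT_STATUS.get(returncode, f"error (exit {returncode})")


def should_block(returncode: int, allow_stress_warnings: bool = True) -> bool:
    """Decide whether the pipeline should fail for a given audit exit code."""
    if returncode == 0:
        return False
    if returncode == 1:
        return not allow_stress_warnings
    return True  # deployment failure or unexpected crash


def run_gate(out_dir: str = "ci_report/", allow_stress_warnings: bool = True) -> int:
    """Run the CartPole demo gate and return the exit code the CI job should use."""
    cmd = [sys.executable, "-m", "deltatau_audit", "demo", "cartpole",
           "--ci", "--out", out_dir]
    proc = subprocess.run(cmd)
    print(f"timing gate: {gate_status(proc.returncode)}")
    return 1 if should_block(proc.returncode, allow_stress_warnings) else 0
```

A CI step could then call sys.exit(run_gate()); passing allow_stress_warnings=False makes stress warnings blocking as well.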

GitHub Actions example

```yaml
- name: Install deltatau-audit
  run: pip install "deltatau-audit[demo]"

- name: Run timing robustness gate
  run: python -m deltatau_audit demo cartpole --ci --out ci_report/

- name: Upload audit report
  if: always()
  uses: actions/upload-artifact@v4
  with:
    name: timing-audit
    path: ci_report/
```

Custom Adapters

Implement AgentAdapter (see deltatau_audit/adapters/base.py):

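```python
import torch

from deltatau_audit.adapters.base import AgentAdapter


class MyAdapter(AgentAdapter):
    def __init__(self, model, hidden_dim):
        # Illustrative: `model` is your own network, `hidden_dim` its state size.
        self.model = model
        self.hidden_dim = hidden_dim

    def reset_hidden(self, batch=1, device="cpu"):
        # Initial recurrent state for a batch of episodes.
        return torch.zeros(batch, self.hidden_dim, device=device)

    def act(self, obs, hidden):
        # Returns: (action, value, hidden_new, dt_or_None)
        # Assumes your model maps (obs, hidden) -> (action, value, hidden_new).
        action, value, hidden_new = self.model(obs, hidden)
        # No internal timing here, so dt is None.
        return action, value, hidden_new, None
```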

Built-in adapters: SB3Adapter (PPO/SAC/TD3/A2C), SB3RecurrentAdapter (RecurrentPPO), InternalTimeAdapter (Dt-GRU models).

Comparing Results

```bash
python -m deltatau_audit diff before/summary.json after/summary.json --out comparison.md
```


Download files


File details

Details for the file deltatau_audit-0.3.7.tar.gz.

File metadata

- Download URL: deltatau_audit-0.3.7.tar.gz

- Upload date:

- Size: 210.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 32048141907531742b1564c8370b3ded2707cd0946aa9d4814d9461800eceefb |
| MD5 | a9b8a315c31b29652ce72b4c3244809f |
| BLAKE2b-256 | cff78823a40c6f7e12bfda9d311cbfc48ed245c4515000ad4128d1abed382be5 |

Provenance

The following attestation bundles were made for deltatau_audit-0.3.7.tar.gz:

- Publisher: release.yml on maruyamakoju/deltatau-audit
- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: deltatau_audit-0.3.7.tar.gz
- Subject digest: 32048141907531742b1564c8370b3ded2707cd0946aa9d4814d9461800eceefb
- Sigstore transparency entry: 962208494
- Sigstore integration time:
- Permalink: maruyamakoju/deltatau-audit@d048023f99c658dfa5498902850392d55bcb8008
- Branch / Tag: refs/tags/v0.3.7
- Owner: https://github.com/maruyamakoju
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: release.yml@d048023f99c658dfa5498902850392d55bcb8008
- Trigger Event: push

File details

Details for the file deltatau_audit-0.3.7-py3-none-any.whl.

File metadata

- Download URL: deltatau_audit-0.3.7-py3-none-any.whl

- Upload date:

- Size: 207.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 36196f36b425cf4bae81d7abe7a6c15da536edd673125723a5fef0530c33bb26 |
| MD5 | 63c7425a6cd5158510499cc7e7fa6d5a |
| BLAKE2b-256 | 480fa4d8429a8726a879ff16074aea7ef25802e2911cf5f7d8182b43087895a5 |

Provenance

The following attestation bundles were made for deltatau_audit-0.3.7-py3-none-any.whl:

- Publisher: release.yml on maruyamakoju/deltatau-audit
- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: deltatau_audit-0.3.7-py3-none-any.whl
- Subject digest: 36196f36b425cf4bae81d7abe7a6c15da536edd673125723a5fef0530c33bb26
- Sigstore transparency entry: 962208495
- Sigstore integration time:
- Permalink: maruyamakoju/deltatau-audit@d048023f99c658dfa5498902850392d55bcb8008
- Branch / Tag: refs/tags/v0.3.7
- Owner: https://github.com/maruyamakoju
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: release.yml@d048023f99c658dfa5498902850392d55bcb8008
- Trigger Event: push