A memory profiler for data batch processing applications.


The Fil memory profiler for Python

Your code reads some data, processes it, and uses too much memory. In order to reduce memory usage, you need to figure out:

- Where peak memory usage is, also known as the high-water mark.

- What code was responsible for allocating the memory that was present at that peak moment.

That's exactly what Fil will help you find. Fil is an open source memory profiler designed for data processing applications written in Python, and includes native support for Jupyter.

At the moment it only runs on Linux and macOS, and while it supports threading, it does not yet support multiprocessing or multiple processes in general.

"Within minutes of using your tool, I was able to identify a major memory bottleneck that I never would have thought existed. The ability to track memory allocated via the Python interface and also C allocation is awesome, especially for my NumPy / Pandas programs."

—Derrick Kondo

For more information, including an example of the output, see https://pythonspeed.com/products/filmemoryprofiler/

- Fil vs. other Python memory tools

- Installation

- Using Fil

- Reducing memory usage in your code

- How Fil works

Fil vs. other Python memory tools

There are two distinct patterns of Python usage, each with its own source of memory problems.

In a long-running server, memory usage can grow indefinitely due to memory leaks. That is, some memory is not being freed.

- If the issue is in Python code, tools like tracemalloc and Pympler can tell you which objects are leaking and what is preventing them from being freed.

- If you're leaking memory in C code, you can use tools like Valgrind.

Fil, however, is not aimed at memory leaks, but at the other use case: data processing applications. These applications load in data, process it somehow, and then finish running.

The problem with these applications is that they can, on purpose or by mistake, allocate huge amounts of memory. It might get freed soon after, but if you allocate 16GB RAM and only have 8GB in your computer, the lack of leaks doesn't help you.

Fil will therefore tell you, in an easy to understand way:

- Where peak memory usage is, also known as the high-water mark.

- What code was responsible for allocating the memory that was present at that peak moment.

- This includes C/Fortran/C++/whatever extensions that don't use Python's memory allocation API (tracemalloc only does Python memory APIs).

This allows you to optimize that code in a variety of ways.
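To make the tracemalloc limitation concrete, here is a small sketch, which is not part of Fil. It measures how much tracemalloc's traced total grows for two 10 MiB allocations: one a bytearray, the other a block obtained from the C library's malloc() through ctypes. The sketch assumes Linux or macOS, where ctypes.CDLL(None) exposes libc's malloc() and free(). The helper names are made up for this example.

```python
import ctypes
import tracemalloc

# Assumes Linux or macOS: CDLL(None) opens the running process, whose symbols include libc's malloc/free.
libc = ctypes.CDLL(None)
libc.malloc.restype = ctypes.c_void_p
libc.malloc.argtypes = [ctypes.c_size_t]
libc.free.restype = None
libc.free.argtypes = [ctypes.c_void_p]


def traced_growth_bytearray(nbytes: int) -> int:
    """Growth in tracemalloc's traced memory while a bytearray of nbytes is alive."""
    tracemalloc.start()
    try:
        before, _ = tracemalloc.get_traced_memory()
        buf = bytearray(nbytes)  # allocated through Python's memory APIs
        after, _ = tracemalloc.get_traced_memory()
        del buf
    finally:
        tracemalloc.stop()
    return after - before


def traced_growth_c_malloc(nbytes: int) -> int:
    """Growth in tracemalloc's traced memory while a raw malloc() block of nbytes is alive."""
    tracemalloc.start()
    try:
        before, _ = tracemalloc.get_traced_memory()
        ptr = libc.malloc(nbytes)  # plain C allocation, bypassing Python's allocator
        after, _ = tracemalloc.get_traced_memory()
        libc.free(ptr)
    finally:
        tracemalloc.stop()
    return after - before


size = 10 * 1024 * 1024
print("bytearray:", traced_growth_bytearray(size))
print("malloc():", traced_growth_c_malloc(size))
```

On CPython, the first number should be at least 10 MiB and the second close to zero. That second kind of allocation is the one tracemalloc misses.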

Installation

Assuming you're on macOS or Linux, and are using Python 3.6 or later, you can use either Conda or pip (or any tool that is pip-compatible and can install manylinux2010 wheels).

Conda

To install on Conda:

$ conda install -c conda-forge filprofiler

Pip

To install the latest version of Fil you'll need Pip 19 or newer. You can check like this:

$ pip --version

pip 19.3.0

If you're using something older than v19, you can upgrade by doing:

$ pip install --upgrade pip

If that doesn't work, try running your code in a virtualenv:

$ python3 -m venv venv/

$ . venv/bin/activate

(venv) $ pip install --upgrade pip

Assuming you have a new enough version of pip:

$ pip install filprofiler

Using Fil

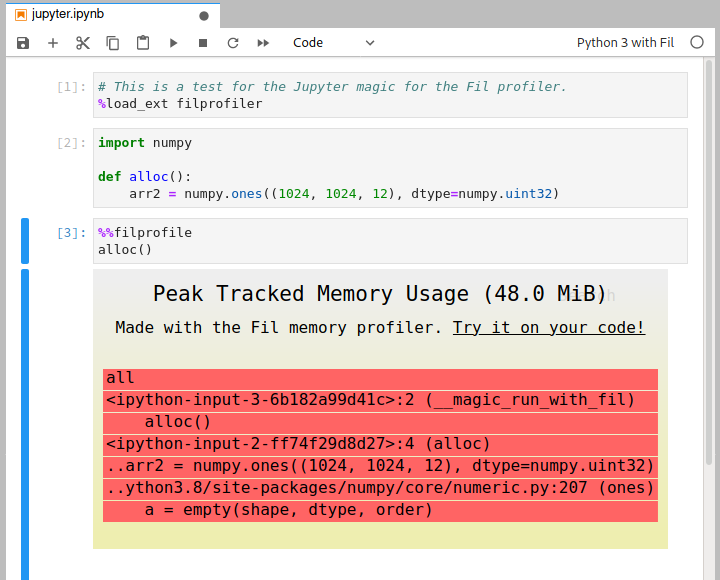

Profiling in Jupyter

To measure peak memory usage of some code in Jupyter you need to do three things:

- Use an alternative kernel, "Python 3 with Fil". You can choose this kernel when you create a new notebook, or you can switch an existing notebook in the Kernel menu; there should be a "Change Kernel" option in there in both Jupyter Notebook and JupyterLab.

- Load the extension by doing %load_ext filprofiler.

- Add the %%filprofile magic to the top of the cell with the code you wish to profile.

Profiling complete Python programs

Instead of doing:

$ python yourscript.py --input-file=yourfile

Just do:

$ fil-profile run yourscript.py --input-file=yourfile

And it will generate a report and automatically try to open it for you in a browser.

Reports will be stored in the fil-result/ directory in your current working directory.

If your program is usually run as python -m yourapp.yourmodule --args, you can do that with Fil too:

$ fil-profile run -m yourapp.yourmodule --args

As of version 0.11, you can use python -m to run Fil:

$ python -m filprofiler run yourscript.py --input-file=yourfile

As of version 2021.04.2, you can disable opening reports in a browser by using the --no-browser option (see fil-profile --help for details).

You will want to view the SVG reports in a browser, since they rely heavily on JavaScript.

If you want to serve the report files as a static directory from a web server, you can use python -m http.server.
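You may prefer to start the server from Python, for example in a script that has just produced reports. The standard library can serve a specific directory without changing into it. This sketch assumes Python 3.7 or later, which provides ThreadingHTTPServer and the directory argument. serve_reports() is a hypothetical helper name. From the command line, python -m http.server --directory fil-result does the same thing on 3.7+.

```python
import functools
import http.server
import threading


def serve_reports(directory: str, port: int = 8000) -> http.server.ThreadingHTTPServer:
    """Serve `directory` over HTTP on localhost in a background thread; call .shutdown() to stop."""
    # `directory=` (Python 3.7+) avoids having to chdir into the report directory.
    handler = functools.partial(http.server.SimpleHTTPRequestHandler, directory=directory)
    server = http.server.ThreadingHTTPServer(("127.0.0.1", port), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

For example, call server = serve_reports("fil-result"), browse to http://127.0.0.1:8000/, and call server.shutdown() when you are done. Binding to 127.0.0.1 keeps the reports visible only from your own machine.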

API for profiling specific Python functions

You can also measure memory usage in just part of your program; this requires version 0.15 or later, and involves two steps.

1. Add profiling in your code

Let's say you have some code that does the following:

def main():
    config = load_config()
    result = run_processing(config)
    generate_report(result)

You only want to get memory profiling for the run_processing() call.

You can do so in the code like so:

from filprofiler.api import profile

def main():
    config = load_config()
    result = profile(lambda: run_processing(config), "/tmp/fil-result")
    generate_report(result)

You could also make it conditional, e.g. based on an environment variable:

import os

from filprofiler.api import profile

def main():
    config = load_config()
    if os.environ.get("FIL_PROFILE"):
        result = profile(lambda: run_processing(config), "/tmp/fil-result")
    else:
        result = run_processing(config)
    generate_report(result)
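If you use the environment-variable pattern in several places, you can wrap it in a small helper. maybe_profile() is a hypothetical name and is not part of filprofiler.api. It relies only on profile(func, output_dir) returning func's result, as in the examples above. The import is lazy, so the module still works where filprofiler isn't installed, as long as FIL_PROFILE is unset.

```python
import os
from typing import Callable, TypeVar

T = TypeVar("T")


def maybe_profile(func: Callable[[], T], output_dir: str) -> T:
    """Run func() under Fil's profile() if FIL_PROFILE is set, otherwise call it directly."""
    if os.environ.get("FIL_PROFILE"):
        # Lazy import: filprofiler is only needed when profiling is actually requested.
        from filprofiler.api import profile
        return profile(func, output_dir)
    return func()
```

Usage would be result = maybe_profile(lambda: run_processing(config), "/tmp/fil-result"). The script still has to be launched under Fil as described in step 2 for profiling to happen.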

2. Run your script with Fil

You still need to run your program in a special way. If previously you did:

$ python yourscript.py --config=myconfig

Now you would do:

$ fil-profile python yourscript.py --config=myconfig

Notice that you're doing fil-profile python, rather than fil-profile run as you would if you were profiling the full script.

Only functions explicitly called via filprofiler.api.profile() will have memory profiling enabled; the rest of the code will run at (close to) normal speed and configuration.

Each call to profile() will generate a separate report.

The memory profiling report will be written to the directory specified as the output destination when calling profile(); in our example above that was "/tmp/fil-result".

Unlike full-program profiling:

- The directory you give will be used directly; there won't be timestamped sub-directories. If there are multiple calls to profile(), it is your responsibility to ensure each call writes to a unique directory.

- The report(s) will not be opened in a browser automatically, on the presumption you're running this in an automated fashion.
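One way to meet the unique-directory requirement is to build each output directory name from a timestamp, the process ID, and a per-process counter. unique_report_dir() is a hypothetical helper and is not part of filprofiler.api. It only builds a path and does not create the directory.

```python
import itertools
import os
import time

_counter = itertools.count()  # per-process sequence number


def unique_report_dir(base: str, label: str) -> str:
    """Return a fresh directory path under `base` for a single profile() call."""
    # Timestamp + PID + counter: repeated calls in one process never collide,
    # and concurrent processes differ by PID.
    stamp = time.strftime("%Y-%m-%dT%H-%M-%S")
    return os.path.join(base, f"{stamp}-{os.getpid()}-{next(_counter)}-{label}")
```

For example: profile(lambda: run_processing(config), unique_report_dir("/tmp/fil-result", "processing")). This reuses the placeholder functions from the example above.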

Debugging out-of-memory crashes

New in v0.14: just run your program under Fil, and it will generate an SVG at the point in time when memory runs out, and then exit with exit code 53:

$ fil-profile run oom.py

...

=fil-profile= Wrote memory usage flamegraph to fil-result/2020-06-15T12:37:13.033/out-of-memory.svg
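In automated runs such as CI or batch jobs, you may want to act on that exit code. The following sketch launches a script via python -m filprofiler run and treats exit code 53 as "ran out of memory". fil_command(), ran_out_of_memory() and profile_script() are hypothetical helper names. The sketch assumes filprofiler 0.11 or later is installed in the same interpreter.

```python
import subprocess
import sys
from typing import List, Sequence

# From the section above: Fil exits with code 53 when the profiled program runs out of memory.
FIL_OUT_OF_MEMORY_EXIT_CODE = 53


def fil_command(script: str, args: Sequence[str] = ()) -> List[str]:
    """Build the equivalent of `python -m filprofiler run script args...`."""
    return [sys.executable, "-m", "filprofiler", "run", script, *args]


def ran_out_of_memory(returncode: int) -> bool:
    return returncode == FIL_OUT_OF_MEMORY_EXIT_CODE


def profile_script(script: str, args: Sequence[str] = ()) -> bool:
    """Run `script` under Fil; return True if Fil reported an out-of-memory exit."""
    completed = subprocess.run(fil_command(script, args))
    return ran_out_of_memory(completed.returncode)
```

If profile_script("oom.py") returns True, the out-of-memory.svg report should be in a timestamped directory under fil-result/.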

Fil uses three heuristics to determine if the process is close to running out of memory:

- A failed allocation, indicating insufficient memory is available.

- The operating system or memory-limited cgroup (e.g. a Docker container) only has 100MB of RAM available.

- The process swap is larger than available memory, indicating heavy swapping by the process.
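The three heuristics above can be expressed as a single predicate. This sketch shows the decision logic only and is not Fil's implementation. It omits how the inputs are measured (for example from the OS or the cgroup). Treating "100MB" as 100 MiB is also an assumption.

```python
# Assumption: "100MB" interpreted as 100 MiB; Fil's exact threshold and units may differ.
LOW_MEMORY_THRESHOLD_BYTES = 100 * 1024 * 1024


def looks_out_of_memory(allocation_failed: bool, available_bytes: int, process_swap_bytes: int) -> bool:
    """Apply the three heuristics described above to already-collected measurements."""
    if allocation_failed:
        # 1. An allocation failed outright.
        return True
    if available_bytes <= LOW_MEMORY_THRESHOLD_BYTES:
        # 2. The OS or the memory-limited cgroup is nearly out of RAM.
        return True
    # 3. More of the process is swapped out than there is memory available: heavy swapping.
    return process_swap_bytes > available_bytes
```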

In general you want to avoid swapping, and e.g. explicitly use mmap() if you expect to be using disk as a backfill for memory.
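Python's standard mmap module is one way to take the explicit approach. It maps a file into memory, so the data lives in a file on disk and the OS pages it in and out as needed. disk_backed_buffer() is a hypothetical helper. For NumPy arrays, numpy.memmap applies the same idea with an array interface. As the "What Fil tracks" section notes, Fil's reports do not yet include file-backed mmap().

```python
import mmap


def disk_backed_buffer(path: str, size: int) -> mmap.mmap:
    """Create (or resize) a file of `size` bytes and map it into memory, read-write."""
    with open(path, "w+b") as f:
        f.truncate(size)  # extend the file; typically sparse rather than actually written
        # On Unix the mapping holds its own duplicate of the file descriptor,
        # so it stays valid after the `with` block closes the file.
        return mmap.mmap(f.fileno(), size)
```

Writes to the returned buffer go to the file. Call flush() to push them to disk explicitly, and close() when you are done.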

Reducing memory usage in your code

You've found where memory usage is coming from—now what?

If you're using data processing or scientific computing libraries, I have written a relevant guide to reducing memory usage.

How Fil works

Fil uses the LD_PRELOAD/DYLD_INSERT_LIBRARIES mechanism to preload a shared library at process startup.

This shared library captures all memory allocations and deallocations and keeps track of them.
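Tracking allocations and deallocations is what makes it possible to find the high-water mark. The class below is a deliberately naive sketch of that bookkeeping and is not Fil's implementation. It keeps a table of live allocations. Whenever the running total exceeds the previous peak, it records how much memory each callstack is holding. Callstacks are plain strings here, and the on_alloc/on_free event API is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Tuple


class PeakTracker:
    """Naive high-water-mark tracker: remembers who held memory at the peak."""

    def __init__(self) -> None:
        self._live: Dict[int, Tuple[str, int]] = {}  # address -> (callstack, size)
        self._current = 0
        self.peak = 0
        self.peak_by_callstack: Dict[str, int] = {}

    def on_alloc(self, address: int, size: int, callstack: str) -> None:
        self._live[address] = (callstack, size)
        self._current += size
        if self._current > self.peak:
            # New high-water mark: snapshot which callstacks hold the live memory right now.
            self.peak = self._current
            self.peak_by_callstack = self._totals_by_callstack()

    def on_free(self, address: int) -> None:
        entry = self._live.pop(address, None)
        if entry is not None:  # ignore frees of addresses we never saw
            self._current -= entry[1]

    def _totals_by_callstack(self) -> Dict[str, int]:
        totals: Dict[str, int] = defaultdict(int)
        for callstack, size in self._live.values():
            totals[callstack] += size
        return dict(totals)
```

Suppose 100 bytes come from load_config and 300 bytes from run_processing, the 300 bytes are then freed, and 50 more bytes are allocated. The peak stays at 400 bytes and is still attributed to those two callstacks. Recomputing the totals at every new peak costs time proportional to the number of live allocations, so a real profiler would need something cheaper.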

At the same time, Fil uses the Python tracing infrastructure (used e.g. by cProfile and coverage.py) to figure out which Python callstack/backtrace is responsible for each allocation.
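Here is a pure-Python sketch of the idea: a profile hook that maintains a per-thread stack of function names, which an allocation could then be attributed to. It uses sys.setprofile(), the Python-level entry point to the same hook mechanism that cProfile relies on. It is not Fil's implementation, which does this far more efficiently, and the function names are invented for this example. To cover threads started later, you would also pass the same hook to threading.setprofile().

```python
import sys
import threading

_state = threading.local()  # each Python thread gets its own callstack


def _callstack() -> list:
    if not hasattr(_state, "stack"):
        _state.stack = []
    return _state.stack


def record_call_events(frame, event, arg):
    """Profile hook: keep a per-thread stack of Python function names."""
    # "call"/"return" fire for Python functions; C-function events ("c_call", ...) are ignored.
    if event == "call":
        _callstack().append(frame.f_code.co_name)
    elif event == "return":
        stack = _callstack()
        if stack:  # the frame that installed the hook can return without a matching "call"
            stack.pop()


def current_callstack() -> list:
    """Snapshot of the current thread's stack, e.g. to attribute an allocation to it."""
    return list(_callstack())


def inner():
    return current_callstack()


def outer():
    return inner()


sys.setprofile(record_call_events)
try:
    print(outer())
finally:
    sys.setprofile(None)
```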

For performance reasons, only the largest allocations are reported, with a minimum of 99% of allocated memory reported. The remaining <1% is highly unlikely to be relevant when trying to reduce usage; it's effectively noise.
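One simple way to implement such a cutoff is to sort allocation sites by size and keep the largest until they account for at least 99% of the total. This sketch shows the selection rule only, and Fil's actual implementation may differ. The function name is hypothetical, and callstacks are represented as string keys.

```python
from typing import Dict


def largest_covering(allocations: Dict[str, int], fraction: float = 0.99) -> Dict[str, int]:
    """Keep the largest entries until they cover at least `fraction` of total bytes."""
    total = sum(allocations.values())
    if total == 0:
        return {}
    target = fraction * total
    selected: Dict[str, int] = {}
    covered = 0
    # Largest allocation sites first, so the fewest entries are needed to reach the target.
    for callstack, size in sorted(allocations.items(), key=lambda item: item[1], reverse=True):
        selected[callstack] = size
        covered += size
        if covered >= target:
            break
    return selected
```

With sizes of 900, 95, 4 and 1 bytes, the first two already cover 995 of 1000 bytes (99.5%), so the two smallest entries are dropped.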

Fil and threading, with notes on NumPy and Zarr

In general, Fil will track allocations in threads correctly.

First, if you start a thread via Python, running Python code, that thread will get its own callstack for tracking who is responsible for a memory allocation.

Second, if you start a C thread, the calling Python code is considered responsible for any memory allocations in that thread. This works fine... except for thread pools. If you start a pool of threads that are not Python threads, the Python code that created those threads will be responsible for all allocations created during the thread pool's lifetime.

Therefore, in order to ensure correct memory tracking, Fil disables thread pools in BLAS (used by NumPy), BLOSC (used e.g. by Zarr), OpenMP, and numexpr.

They are all set to use 1 thread, so calls should run in the calling Python thread and everything should be tracked correctly.

This has some costs:

- This can reduce performance in some cases, since you're doing computation with one CPU instead of many.

- Insofar as these libraries allocate memory proportional to the number of threads, the measured memory usage might be wrong.

Fil does this for the whole program when using fil-profile run.

When using the Jupyter kernel, anything run with the %%filprofile magic will have thread pools disabled, but other code should run normally.
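To compare against a normal run with the same single-threaded setup, one common approach is to start the program with each library's thread-count environment variable set to 1. This is an assumption about a reasonable way to reproduce the configuration, not a description of how Fil disables the pools. The variable names are the ones documented by OpenMP, OpenBLAS, Intel MKL, numexpr and c-blosc. Which of them matter depends on the libraries your NumPy build actually uses.

```python
from typing import Dict, Mapping

# Assumption: commonly documented per-library thread-count variables; not necessarily Fil's mechanism.
SINGLE_THREAD_VARS = {
    "OMP_NUM_THREADS": "1",       # OpenMP
    "OPENBLAS_NUM_THREADS": "1",  # OpenBLAS, a common BLAS for NumPy
    "MKL_NUM_THREADS": "1",       # Intel MKL, another BLAS
    "NUMEXPR_NUM_THREADS": "1",   # numexpr
    "BLOSC_NTHREADS": "1",        # c-blosc, used e.g. by Zarr
}


def single_threaded_env(base: Mapping[str, str]) -> Dict[str, str]:
    """Return a copy of `base` with every known thread-pool size forced to 1."""
    env = dict(base)
    env.update(SINGLE_THREAD_VARS)
    return env
```

For example: subprocess.run([sys.executable, "yourscript.py"], env=single_threaded_env(os.environ)). These variables generally need to be set before the libraries load, which is why they are passed to a fresh process rather than set from code that is already running.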

What Fil tracks

Fil will track memory allocated by:

- Normal Python code.

- C code using malloc()/calloc()/realloc()/posix_memalign().

- C++ code using new (including via aligned_alloc()).

- Anonymous mmap()s.

- Fortran 90 explicitly allocated memory (tested with gcc's gfortran).

Still not supported, but planned:

- mremap() (resizing of mmap()).

- File-backed mmap(). The semantics are somewhat different from normal allocations or anonymous mmap(), since the OS can swap it in or out from disk transparently, so supporting this will involve a different kind of resource usage and reporting.

- Other forms of shared memory; need to investigate whether any of them allow sufficient allocation.

- Anonymous mmap()s created via /dev/zero (not common, since it's not cross-platform, e.g. macOS doesn't support this).

- memfd_create(), a Linux-only mechanism for creating in-memory files.

- Possibly memalign(), valloc(), pvalloc(), reallocarray(). These are all rarely used, as far as I can tell.

License

Copyright 2020 Hyphenated Enterprises LLC

Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.


Download files


Built Distributions


File details

Details for the file filprofiler-2021.5.0-cp39-cp39-manylinux_2_12_x86_64.manylinux2010_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp39-cp39-manylinux_2_12_x86_64.manylinux2010_x86_64.whl

- Upload date:

- Size: 2.7 MB

- Tags: CPython 3.9, manylinux: glibc 2.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.8.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 18c1d5083bee532fb7ae063f6264cebb6851a558e4d6d57eeeb9f64b521627a4 |
| MD5 | c55aff920e52ae4fec89112302a0bd48 |
| BLAKE2b-256 | 6a3b3453b11dda3b3530a8f02ce8574719b6f19addf4e10b5b06195c9a785927 |

File details

Details for the file filprofiler-2021.5.0-cp39-cp39-macosx_10_14_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp39-cp39-macosx_10_14_x86_64.whl

- Upload date:

- Size: 457.4 kB

- Tags: CPython 3.9, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.9.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0bc2dc04c270408ff6502332a01dc1b07a89b20b23405a1e142d9d05139ad937 |
| MD5 | 21227cd975528c6dc89bcc36ef4b61f7 |
| BLAKE2b-256 | 0b82c1311063d68f6ed28c3c13c775a6944bb53b79127cd73f9e97d3da8fea22 |

File details

Details for the file filprofiler-2021.5.0-cp38-cp38-manylinux_2_12_x86_64.manylinux2010_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp38-cp38-manylinux_2_12_x86_64.manylinux2010_x86_64.whl

- Upload date:

- Size: 2.7 MB

- Tags: CPython 3.8, manylinux: glibc 2.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.8.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3cb8f8982df9a1c50d8a3ab2fadb56ed0330836f9f7202e11e42f053b342fcc5 |
| MD5 | 14ce0a6e03b45ce6c3276a8ff256cf19 |
| BLAKE2b-256 | 26074c60345e92d9c202c485db4d03927abbef54aa154022c646fa7905b717ee |

File details

Details for the file filprofiler-2021.5.0-cp38-cp38-macosx_10_14_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp38-cp38-macosx_10_14_x86_64.whl

- Upload date:

- Size: 457.4 kB

- Tags: CPython 3.8, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.8.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4e2d37b4d8160761800165dc304fe0931e37b52d6da2da1562723b554293daed |
| MD5 | 5948e44296165ef47cca198f80065d2f |
| BLAKE2b-256 | f79107480225a7c61a6c25a624234f77daa41a1e3ac864e0167b4a98520932a7 |

File details

Details for the file filprofiler-2021.5.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp37-cp37m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl

- Upload date:

- Size: 2.7 MB

- Tags: CPython 3.7m, manylinux: glibc 2.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.8.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a66c1d471e8b8240a2ab413a520f7594713e7867d27a9237fdfc380603be74ed |
| MD5 | 3265912257b243093c0df3638cf73759 |
| BLAKE2b-256 | f035c874f65afb92c755ac073d9abb000aef996081e3f908d362699440d92590 |

File details

Details for the file filprofiler-2021.5.0-cp37-cp37m-macosx_10_14_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp37-cp37m-macosx_10_14_x86_64.whl

- Upload date:

- Size: 457.6 kB

- Tags: CPython 3.7m, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.7.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c5f3b4200139f1dc14da39bd09056761c6ae377cd77cf620441857736cc0ffeb |
| MD5 | d012f316e5ce735af4270db211911bcb |
| BLAKE2b-256 | 9c46f4582095593f3efe40dc949c1d232f0f32880c96f965f2e16c17bb709504 |

File details

Details for the file filprofiler-2021.5.0-cp36-cp36m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp36-cp36m-manylinux_2_12_x86_64.manylinux2010_x86_64.whl

- Upload date:

- Size: 2.7 MB

- Tags: CPython 3.6m, manylinux: glibc 2.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.8.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3b42020728d424524a22cf4eede2cc3023de0f20ad3cbf067ec43e6d1838e976 |
| MD5 | d6561d905b7114a1f3acf37ea6da2897 |
| BLAKE2b-256 | 4c7abfab1e3890c39b7a10ec3566f179752fe1be82f2cf63afdc0e995af702df |

File details

Details for the file filprofiler-2021.5.0-cp36-cp36m-macosx_10_14_x86_64.whl.

File metadata

- Download URL: filprofiler-2021.5.0-cp36-cp36m-macosx_10_14_x86_64.whl

- Upload date:

- Size: 457.6 kB

- Tags: CPython 3.6m, macOS 10.14+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.4.1 importlib_metadata/4.0.1 pkginfo/1.7.0 requests/2.25.1 requests-toolbelt/0.9.1 tqdm/4.60.0 CPython/3.6.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0e6a4680124b6f75db0f1b2bc8fe6c149e68aaed800b36f3cce1ea091337c277 |
| MD5 | 60989a8205261538107224b5e42503ca |
| BLAKE2b-256 | be3cda27ed7d12bef0da88700742ccd78f418a3a1246dc3d6c239a86ecfd384f |