Declarative middleware ordering via slug-based DAG resolution for LangChain and beyond

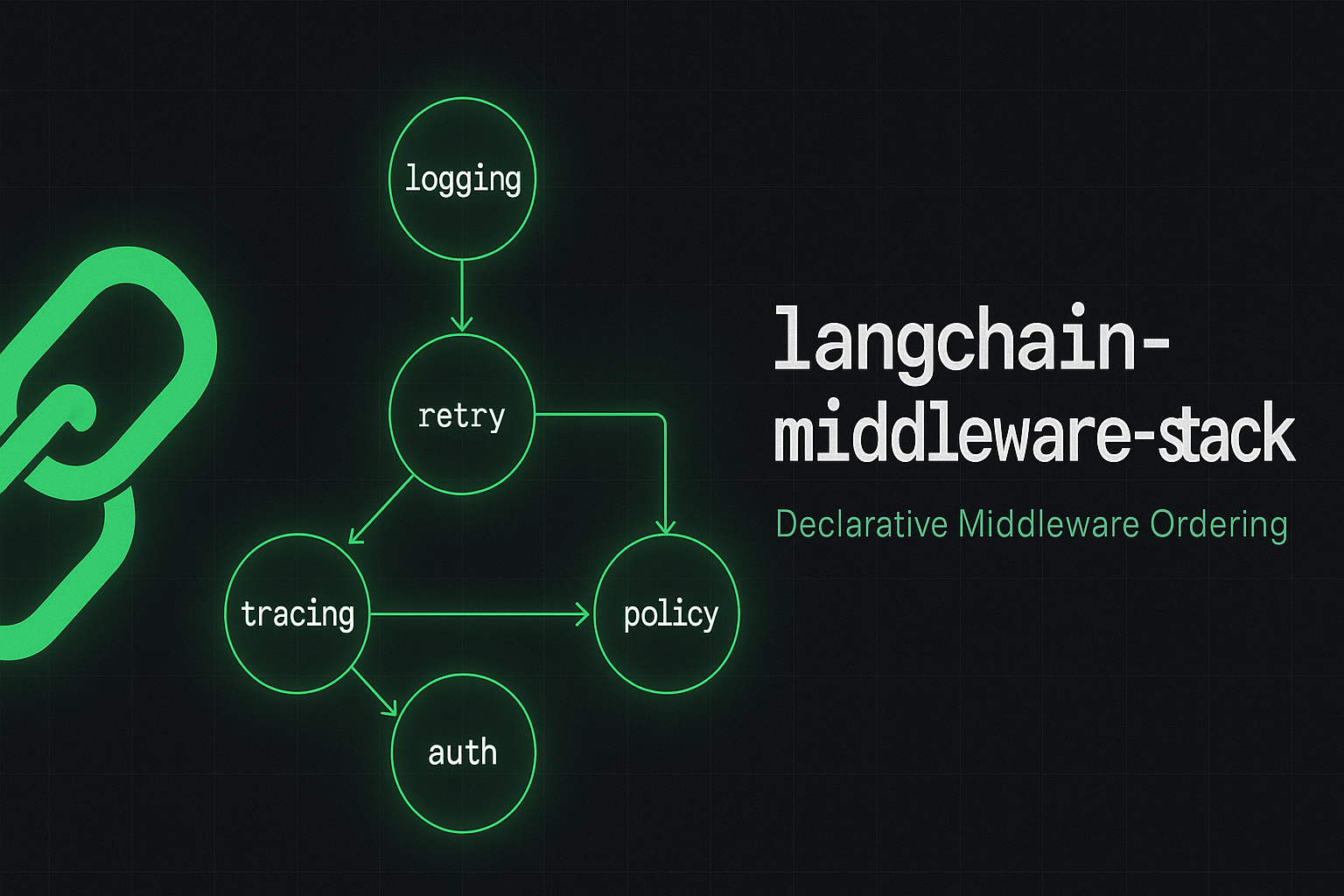

langchain-middleware-stack

Declarative middleware ordering for LangChain Deep Agents using stable slug-based DAG resolution.

Why

In LangChain Deep Agents, middleware is a first-class control layer over model calls, tools, and state. The framework still composes it as a positional middleware=[...] list on create_agent. In that model, ordering is semantics: the first entry is the outermost wrapper (for example around wrap_model_call), so reordering changes retries, timeouts, logging, and policy in non-obvious ways.
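The effect of list position can be reproduced without LangChain at all. The following is a minimal sketch in which `tag`, `compose`, and `run` are hypothetical helpers written for this illustration (they are not part of LangChain or of this package); it only assumes the rule stated above, that the first list entry becomes the outermost wrapper.

```python
from typing import Callable, List

Handler = Callable[[str], str]
Wrapper = Callable[[Handler], Handler]


def tag(name: str, log: List[str]) -> Wrapper:
    """Build a toy wrapper that records when it is entered and exited."""
    def wrapper(handler: Handler) -> Handler:
        def wrapped(request: str) -> str:
            log.append(f"enter {name}")
            result = handler(request)
            log.append(f"exit {name}")
            return result
        return wrapped
    return wrapper


def compose(wrappers: List[Wrapper], handler: Handler) -> Handler:
    """Wrap handler so that wrappers[0] ends up outermost."""
    for wrapper in reversed(wrappers):
        handler = wrapper(handler)
    return handler


def run(order: List[str]) -> List[str]:
    """Run one call through wrappers in the given positional order."""
    log: List[str] = []
    call = compose([tag(name, log) for name in order], lambda req: req.upper())
    call("ping")
    return log


print(run(["logging", "retry"]))
print(run(["retry", "logging"]))
```

Swapping the two names changes which wrapper sees the other's work: with logging outermost, the logger observes a single call that already includes every retry; with retry outermost, each attempt passes through the logger separately.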

The underlying issue is not middleware itself — it is that composition is positional instead of declarative. That breaks down in production because:

- Fragility — Inserting or reordering entries changes behavior; constraints like “after logging, before retry” are not declared on the middleware type.

- Poor composability — Separate teams or packages cannot merge contributions without one owner of the final list and implicit coordination.

- Hidden coupling — Dependencies are expressed as indices, not as explicit, reviewable constraints.

- No validated guarantees — Ordering invariants and dependency relationships are not enforced before runtime.

**langchain-middleware-stack** addresses this with constraint-based composition (DAG + topological sort, stable Kahn tie-break). You declare intent with four primitives:

| Primitive | Role |
|---|---|
| `slug` | Stable, unique identity for each middleware |
| `after` / `before` | Self-declared ordering constraints |
| `MiddlewareStack` | Topological resolver (Kahn's algorithm, stable tie-break) |
| `wires` | Cross-middleware attribute injection at resolve-time |
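To make the resolution step concrete, here is a minimal sketch of Kahn's algorithm with a stable tie-break over slugs. It is not the package's implementation: `resolve_order` and the `(slug, after, before)` tuple shape are hypothetical, the tie-break is assumed to be insertion order, constraints that name an unregistered slug are assumed to be skipped, and plain `ValueError` stands in for the package's own exceptions.

```python
import heapq
from typing import Dict, List, Sequence, Set, Tuple

# (slug, after, before): a hypothetical shape used only for this sketch.
Spec = Tuple[str, Sequence[str], Sequence[str]]


def resolve_order(specs: List[Spec]) -> List[str]:
    """Return slugs in an order that satisfies every after/before constraint."""
    index: Dict[str, int] = {}
    for position, (slug, _after, _before) in enumerate(specs):
        if slug in index:
            raise ValueError(f"duplicate slug: {slug}")
        index[slug] = position

    # successors[a] holds every slug that must come later than a.
    successors: Dict[str, Set[str]] = {slug: set() for slug in index}
    for slug, after, before in specs:
        for earlier in after:
            if earlier in index:  # assumption: unknown slugs are skipped
                successors[earlier].add(slug)
        for later in before:
            if later in index:
                successors[slug].add(later)

    indegree = {slug: 0 for slug in index}
    for targets in successors.values():
        for target in targets:
            indegree[target] += 1

    # Stable tie-break: among ready nodes, pick the one added first.
    slugs = [spec[0] for spec in specs]
    ready = [index[slug] for slug in slugs if indegree[slug] == 0]
    heapq.heapify(ready)

    ordered: List[str] = []
    while ready:
        slug = slugs[heapq.heappop(ready)]
        ordered.append(slug)
        for target in successors[slug]:
            indegree[target] -= 1
            if indegree[target] == 0:
                heapq.heappush(ready, index[target])

    if len(ordered) != len(specs):
        raise ValueError("dependency graph contains a cycle")
    return ordered


print(resolve_order([("retry", ("logging",), ()), ("logging", (), ())]))
```

Keying the ready queue on insertion position is what makes the result deterministic: two middleware with no constraint between them always come out in the order they were added, so an unrelated addition does not reshuffle the rest of the stack.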

Installation

```shell
pip install langchain-middleware-stack
```

Zero runtime dependencies. Python ≥ 3.9.

Demo notebook

[notebooks/deep-agents-middleware.ipynb](notebooks/deep-agents-middleware.ipynb) walks through baseline vs improved, both using a real [ChatOpenAI](https://python.langchain.com/docs/integrations/chat/openai/) model (OPENAI_API_KEY required for those cells):

| Scenario | Setup |
|---|---|
| Baseline | [create_agent](https://reference.langchain.com/python/langchain/agents/create_agent) with a manually ordered `middleware=[...]` list. |
| Improved | The same middleware types added to a `MiddlewareStack` in scrambled order; `resolve()` produces the LangChain list (outermost first), then `create_agent` uses that list. |

The appendices at the end cover an offline `wrap(handler)` toy stack and an optional LangChain `FakeListChatModel`; they are not the main teaching path.

```shell
make notebook  # from a dev setup with `make setup`
```

Quick start

```python
from langchain_middleware_stack import MiddlewareStack
from langchain_middleware_stack.middleware import LoggingMiddleware, RetryMiddleware

stack = MiddlewareStack()
stack.add([RetryMiddleware(max_retries=3), LoggingMiddleware()])
# or: stack.add(RetryMiddleware(...)).add(LoggingMiddleware())

ordered = stack.resolve()
# -> [LoggingMiddleware, RetryMiddleware]
# RetryMiddleware declares after=("logging",), so it is moved behind
# LoggingMiddleware and every retry is logged.
```

Writing your own middleware

```python
from typing import ClassVar

from langchain_middleware_stack import BaseMiddleware

# `tracer` is assumed to be an OpenTelemetry-style tracer created elsewhere.


class TracingMiddleware(BaseMiddleware):
    slug: ClassVar[str] = "tracing"
    after: ClassVar[tuple[str, ...]] = ("logging",)  # run after logging

    def wrap(self, handler, *args, **kwargs):
        # Open a span named after the handler around the synchronous call.
        with tracer.start_span(handler.__name__):
            return handler(*args, **kwargs)

    async def awrap(self, handler, *args, **kwargs):
        # Same span, but the wrapped handler is awaited.
        with tracer.start_span(handler.__name__):
            return await handler(*args, **kwargs)
```

BaseMiddleware is a mixin — use it with LangChain's AgentMiddleware when you pass middleware into create_agent. Subclasses must implement the agent hooks you need (typically wrap_model_call); declare tools (often ()) on the class.

```python
from typing import ClassVar

from langchain.agents.middleware import AgentMiddleware

from langchain_middleware_stack import BaseMiddleware


class MyMiddleware(AgentMiddleware, BaseMiddleware):
    slug: ClassVar[str] = "my-middleware"
    tools: ClassVar[tuple] = ()

    def wrap_model_call(self, request, handler):
        # intercept model calls; delegate with handler(request)
        return handler(request)
```

The notebook uses this pattern end-to-end for the baseline and improved scenarios.

Cross-middleware wiring

wires injects an attribute from a resolved upstream middleware into your middleware at stack build time:

```python
from typing import ClassVar

from langchain_middleware_stack import BaseMiddleware


class ConsumerMiddleware(BaseMiddleware):
    slug: ClassVar[str] = "consumer"
    after: ClassVar[tuple[str, ...]] = ("provider",)
    wires: ClassVar[dict[str, tuple[str, str]]] = {
        "_shared_fn": ("provider", "exported_fn")
    }
    # _shared_fn is injected from provider.exported_fn after resolve()
```
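One way to picture resolve-time injection is a pass over the resolved list that copies attributes between instances. The sketch below is an assumption about the mechanism, not the package's code: `apply_wires`, `Provider`, and `Consumer` are hypothetical stand-ins, and `LookupError` stands in for whatever the package raises when wiring fails.

```python
from typing import Any, Dict, List, Tuple


class Provider:
    slug = "provider"

    def exported_fn(self, text: str) -> str:
        return text.upper()


class Consumer:
    slug = "consumer"
    # target attribute -> (source slug, source attribute)
    wires: Dict[str, Tuple[str, str]] = {"_shared_fn": ("provider", "exported_fn")}


def apply_wires(ordered: List[Any]) -> None:
    """Copy each wired attribute from its source instance onto the consumer."""
    by_slug = {middleware.slug: middleware for middleware in ordered}
    for middleware in ordered:
        for target_attr, (source_slug, source_attr) in getattr(middleware, "wires", {}).items():
            if source_slug not in by_slug:
                raise LookupError(f"{middleware.slug!r} wires from unknown slug {source_slug!r}")
            source = by_slug[source_slug]
            if not hasattr(source, source_attr):
                raise LookupError(f"{source_slug!r} has no attribute {source_attr!r}")
            setattr(middleware, target_attr, getattr(source, source_attr))


provider, consumer = Provider(), Consumer()
apply_wires([provider, consumer])
print(consumer._shared_fn("wired"))
```

Because the lookup goes through the slug rather than a direct import, the consumer never needs a reference to the provider's class; it only names the slug and the attribute it expects.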

Error reference

| Exception | Raised when |
|---|---|
| `MiddlewareResolutionError` | Base class for all stack build errors |
| `MiddlewareCycleError` | Dependency graph contains a cycle |
| `MiddlewareDuplicateSlugError` | Two middleware share the same slug |
| `MiddlewareWiringError` | Cross-middleware wiring fails |
| `RetryExhaustedError` | `RetryMiddleware` runs out of attempts |
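A shared base class lets callers handle every build failure in one place. The sketch below uses locally defined stand-in classes that borrow the names from the table; it assumes the cycle and duplicate-slug errors derive from `MiddlewareResolutionError`, and the import path of the real classes is not shown here. `check_unique_slugs` and `describe_build` are hypothetical helpers.

```python
from typing import Iterable


# Stand-ins mirroring the names in the table; not imported from the package.
class MiddlewareResolutionError(Exception):
    """Base class for stack build errors."""


class MiddlewareCycleError(MiddlewareResolutionError):
    """Dependency graph contains a cycle."""


class MiddlewareDuplicateSlugError(MiddlewareResolutionError):
    """Two middleware share the same slug."""


def check_unique_slugs(slugs: Iterable[str]) -> None:
    """Raise if any slug appears more than once."""
    seen = set()
    for slug in slugs:
        if slug in seen:
            raise MiddlewareDuplicateSlugError(f"duplicate slug: {slug}")
        seen.add(slug)


def describe_build(slugs: Iterable[str]) -> str:
    """Catch any build error through the base class and report its type."""
    try:
        check_unique_slugs(slugs)
    except MiddlewareResolutionError as exc:
        return f"build failed: {type(exc).__name__}"
    return "ok"


print(describe_build(["logging", "retry"]))
print(describe_build(["logging", "logging"]))
```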

LangChain upstream and related initiatives

Pointers to declarative ordering and composition for agent middleware—the same problem this package targets (not exhaustive):

| Link | Kind | Topic |
|---|---|---|
| #33885 | issue (langchain-ai/langchain) | Middleware declaring dependencies; tracked as a possible 1.x evolution |
| #2126 | issue (langchain-ai/deepagents) | Less rigid middleware composition at the Deep Agents entry point |
| #34514 | PR (langchain-ai/langchain, open) | `depends_on` on middleware classes and topological ordering inside `create_agent` |

This package is a standalone resolver you can use with the current harness. How much it would overlap with #34514, should that PR merge, is an integration question for later. A maintainer-facing draft lives in docs/github-issue-langchain-community.md (to be filled in before opening a tracking issue).

License

Apache-2.0

Author

João Gabriel Lima — LinkedIn · joaogabriellima.eng@gmail.com · jambu.ai

