Lightly Purple is a lightweight, fast, and easy-to-use data exploration tool for data scientists and engineers.

Project description

🚀 Aloha!

We at Lightly created Lightly Purple, an open-source tool designed to supercharge your data curation workflows for computer vision datasets. Explore your data, visualize annotations and crops, tag samples, and export curated lists to improve your machine learning pipelines.

Lightly Purple runs entirely locally on your machine, keeping your data private. It consists of a Python library for indexing your data and a web-based UI for visualization and curation.

✨ Core Workflow

Using Lightly Purple typically involves these steps:

- Index Your Dataset: Run a Python script using the

lightly-purplelibrary to process your local dataset (images and annotations) and save metadata into a localpurple.dbfile. - Launch the UI: The script then starts a local web server.

- Explore & Curate: Use the UI to visualize images, annotations, and object crops. Filter and search your data (experimental text search available). Apply tags to interesting samples (e.g., "mislabeled", "review").

- Export Curated Data: Export information (like filenames) for your tagged samples from the UI to use downstream.

- Stop the Server: Close the terminal running the script (Ctrl+C) when done.

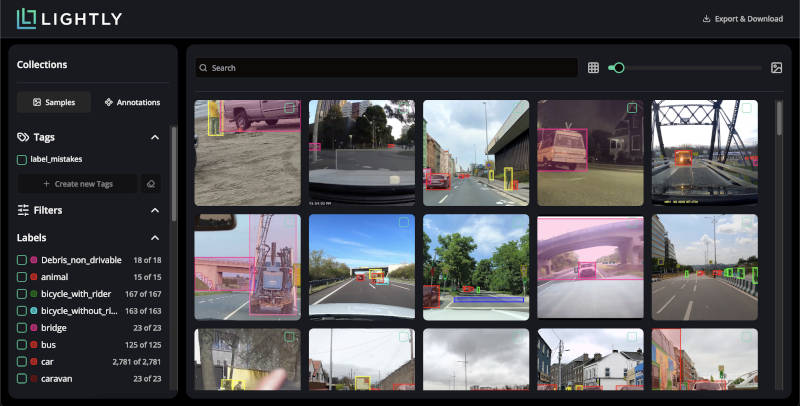

Visualize your dataset samples with annotations in the grid view.

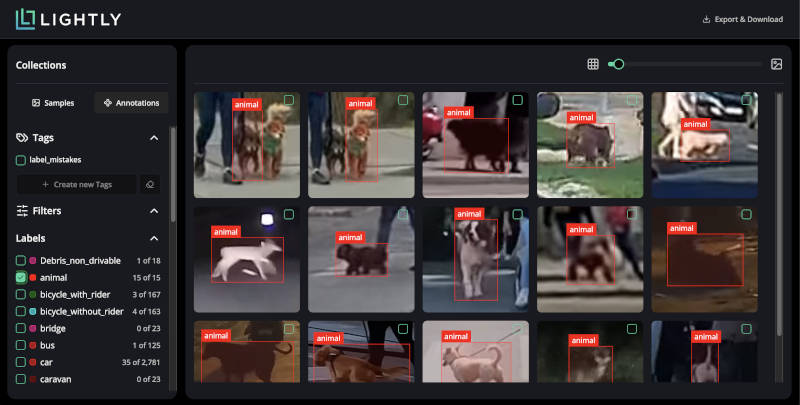

Switch to the annotation view to inspect individual object crops easily.

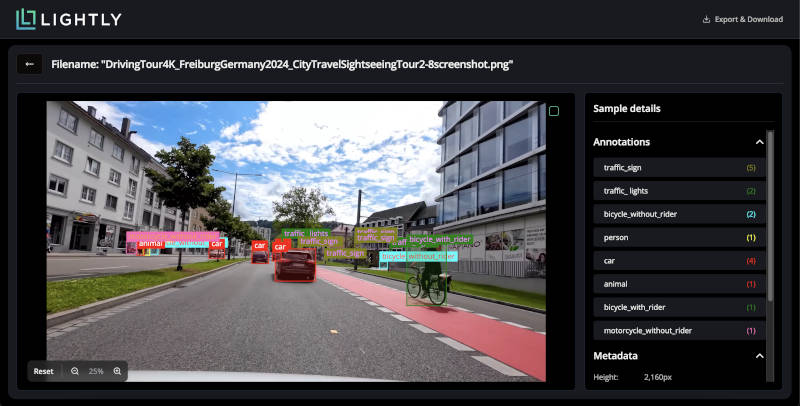

Inspect individual samples in detail, viewing all annotations and metadata.

💻 Installation

Ensure you have Python 3.8 or higher. We strongly recommend using a virtual environment.

The library is OS-independent and works on Windows, Linux, and macOS.

# 1. Create and activate a virtual environment (Recommended)

# On Linux/macOS:

python3 -m venv venv

source venv/bin/activate

# On Windows:

python -m venv venv

.\venv\Scripts\activate

# 2. Install Lightly Purple

pip install lightly-purple

# 3. Verify installation (Optional)

pip show lightly-purple

Quickstart

Download the dataset and run a quickstart script to load your dataset and launch the app.

YOLO Object Detection

To run an example using a yolo dataset, clone the example repository and run the example script:

git clone https://github.com/lightly-ai/datasets_examples_purple dataset_examples_purple

python dataset_examples_purple/road_signs_yolo/example_yolo.py

The YOLO format details:

road_signs_yolo/

├── train/

│ ├── images/

│ │ ├── image1.jpg

│ │ ├── image2.jpg

│ │ └── ...

│ └── labels/

│ ├── image1.txt

│ ├── image2.txt

│ └── ...

├── valid/ (optional)

│ ├── images/

│ │ └── ...

│ └── labels/

│ └── ...

└── data.yaml

Each label file should contain YOLO format annotations (one per line):

<class> <x_center> <y_center> <width> <height>

Where coordinates are normalized between 0 and 1.

Let's break down the `example_yolo.py` script to explore the dataset:

# We import the DatasetLoader class from the lightly_purple module

from lightly_purple import DatasetLoader

from pathlib import Path

# Create a DatasetLoader instance

loader = DatasetLoader()

data_yaml_path = Path(__file__).resolve().parent / "data.yaml"

loader.from_yolo(

data_yaml_path=str(data_yaml_path),

input_split="test",

)

# We start the UI application on port 8001

loader.launch()

COCO Instance Segmentation

To run an instance segmentation example using a COCO dataset, clone the example repository and run the example script:

git clone https://github.com/lightly-ai/datasets_examples_purple dataset_examples_purple

python dataset_examples_purple/coco_subset_128_images/example_coco.py

The COCO format details:

coco_subset_128_images/

├── images/

│ ├── image1.jpg

│ ├── image2.jpg

│ └── ...

└── instances_train2017.json # Single JSON file containing all annotations

COCO uses a single JSON file containing all annotations. The format consists of three main components:

- Images: Defines metadata for each image in the dataset.

- Categories: Defines the object classes.

- Annotations: Defines object instances.

Let's break down the `example_coco.py` script to explore the dataset:

# We import the DatasetLoader class from the lightly_purple module

from lightly_purple import DatasetLoader

from pathlib import Path

# Create a DatasetLoader instance

loader = DatasetLoader()

current_dir = Path(__file__).resolve().parent

loader.from_coco_instance_segmentations(

annotations_json_path=str(current_dir / "instances_train2017.json"),

input_images_folder=str(current_dir / "images"),

)

# We start the UI application on port 8001

loader.launch()

🔍 How It Works

- Your Python script uses the

lightly-purpleDataset Loader. - The Loader reads your images and annotations, calculates embeddings, and saves metadata to a local

purple.dbfile (using DuckDB). loader.launch()starts a local Backend API server.- This server reads from

purple.dband serves data to the UI Application running in your browser (http://localhost:8001). - Images are streamed directly from your disk for display in the UI.

📦 Supported Dataset Formats & Annotations

The DatasetLoader currently supports:

- YOLOv8 Object Detection: Reads

.yamlfile. Supports bounding boxes ✅. - COCO Object Detection: Reads

.jsonannotations. Supports bounding boxes ✅. - COCO Instance Segmentation: Reads

.jsonannotations. Supports instance masks in RLE (Run-Length Encoding) format ✅.

Limitations:

- Requires datasets with annotations. Cannot index image folders alone ❌.

- No direct support for classification datasets yet ❌.

- Cannot add custom metadata during the loading step ❌.

📚 FAQ

Are the datasets persistent?

Yes, the information about datasets is persistent and stored in the db file. You can see it after the dataset is processed. If you rerun the loader it will create a new dataset representing the same dataset, keeping the previous dataset information untouched.

Can I change the database path?

Not yet. The database is stored in the working directory by default.

Can I launch in another Python script or do I have to do it in the same script?

It is possible to use only one script at the same time because we lock the db file for the duration of the script.

Can I change the API backend port?

Currently, the API always runs on port 8001, and this cannot be changed yet.

Can I process datasets that do not have annotations?

No, we support only datasets with annotations now.

What dataset annotations are supported?

Bounding boxes are supported ✅

Instance segmentation is supported ✅

Custom metadata is NOT yet supported ❌

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file lightly_purple-0.2.20.tar.gz.

File metadata

- Download URL: lightly_purple-0.2.20.tar.gz

- Upload date:

- Size: 1.3 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.5.4

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

b3b66c2f655156d33e92d29f627d64928e4e8015e2aaf86267ca9687ed7f8f6b

|

|

| MD5 |

864d30b59a34f079c4fa09c3f6675424

|

|

| BLAKE2b-256 |

212cf34e07cd43d933e8405c521fe3ff8ef1192fdd2cf6b37a1e3ef6d36e8674

|

File details

Details for the file lightly_purple-0.2.20-py3-none-any.whl.

File metadata

- Download URL: lightly_purple-0.2.20-py3-none-any.whl

- Upload date:

- Size: 1.4 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.5.4

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

31f15b1d33fe06c47cbc443a3d94d6c1a07bdc992a1acf5af0188c852bf7302c

|

|

| MD5 |

4a3ba465c040ff35cd7d66062e99f233

|

|

| BLAKE2b-256 |

3432d24f15e830b7a5b3c95883367fed5e1ade225845c696673dfedb7f618813

|