Static scanning library for detecting malicious code, backdoors, and other security risks in ML model files

ModelAudit

Secure your AI models before deployment. Detects malicious code, backdoors, and security vulnerabilities in ML model files.

📖 Full Documentation | 🎯 Usage Examples | 🔍 Supported Formats

🚀 Quick Start

Install and scan in 30 seconds:

    # Install ModelAudit with all ML framework support
    pip install modelaudit[all]

    # Scan a model file
    modelaudit model.pkl

    # Scan a directory
    modelaudit ./models/

    # Export results for CI/CD
    modelaudit model.pkl --format json --output results.json

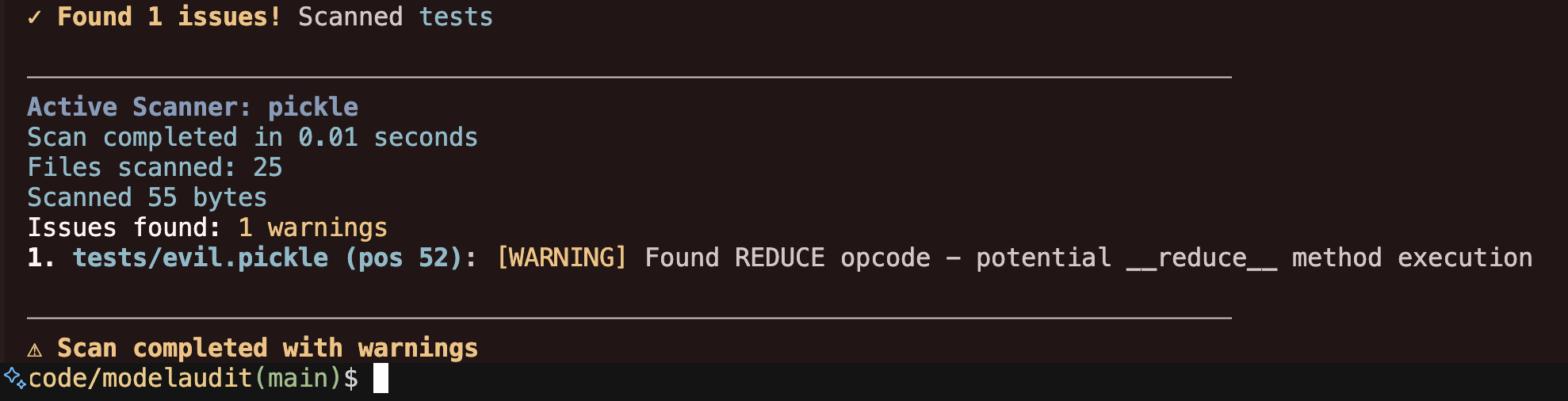

Example output:

    $ modelaudit suspicious_model.pkl

    ✓ Scanning suspicious_model.pkl
    Files scanned: 1 | Issues found: 2 critical, 1 warning

    1. suspicious_model.pkl (pos 28): [CRITICAL] Malicious code execution attempt
       Why: Contains os.system() call that could run arbitrary commands

    2. suspicious_model.pkl (pos 52): [WARNING] Dangerous pickle deserialization
       Why: Could execute code when the model loads

    ✗ Security issues found - DO NOT deploy this model
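To illustrate the kind of finding shown above, here is a minimal Python sketch of static pickle inspection. It is not ModelAudit's implementation: `find_imports` and `Payload` are hypothetical names, and the string tracking used for `STACK_GLOBAL` is a simplifying heuristic. The sketch walks the opcode stream with the standard library's `pickletools.genops`, so the payload is never loaded or executed.

```python
import os
import pickle
import pickletools

# Opcodes that push a text string onto the pickle stack.
STRING_OPCODES = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}


def find_imports(data: bytes):
    """Return (module, name) pairs a pickle would import, without loading it.

    Heuristic: for STACK_GLOBAL, assume the two most recently pushed strings
    are the module and attribute name. Real scanners track the stack fully.
    """
    found = []
    recent_strings = []
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name == "GLOBAL":
            # Older protocols store "module name" as one space-separated arg.
            module, name = arg.split(" ", 1)
            found.append((module, name))
        elif opcode.name in STRING_OPCODES:
            recent_strings.append(arg)
        elif opcode.name == "STACK_GLOBAL" and len(recent_strings) >= 2:
            found.append((recent_strings[-2], recent_strings[-1]))
    return found


class Payload:
    """Hypothetical malicious object: unpickling it would call os.system."""

    def __reduce__(self):
        return (os.system, ("echo pwned",))


# Serializing does not run the command; only pickle.loads() would.
malicious = pickle.dumps(Payload())
benign = pickle.dumps({"weights": [0.1, 0.2]})

print("malicious imports:", find_imports(malicious))
print("benign imports:", find_imports(benign))
```

The module reported for `os.system` depends on the platform (for example `posix` or `nt`), so a check on the attribute name alone is more portable than matching the full pair.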

🛡️ What Problems It Solves

Prevents Code Execution Attacks

Flags models that would run arbitrary commands when loaded, a common risk in pickle-based PyTorch .pt files

Detects Model Backdoors

Identifies trojaned models with hidden functionality or suspicious weight patterns

Ensures Supply Chain Security

Validates model integrity and prevents tampering in your ML pipeline

Enforces License Compliance

Checks for license violations that could expose your company to legal risk

📊 Supported Model Formats

ModelAudit scans all major ML model formats with specialized security analysis for each:

| Format | Extensions | Risk Level | Notes |
|---|---|---|---|
| PyTorch | .pt, .pth, .ckpt, .bin | 🔴 HIGH | Contains pickle serialization - always scan |
| Pickle | .pkl, .pickle, .dill | 🔴 HIGH | Avoid in production - convert to SafeTensors |
| Joblib | .joblib | 🔴 HIGH | Can contain pickled objects |
| SafeTensors | .safetensors | 🟢 SAFE | Preferred secure format |
| GGUF/GGML | .gguf, .ggml | 🟢 SAFE | LLM standard, binary format |
| ONNX | .onnx | 🟢 SAFE | Industry standard, good interoperability |
| TensorFlow | .pb, SavedModel | 🟠 MEDIUM | Scan for dangerous operations |
| Keras | .h5, .keras, .hdf5 | 🟠 MEDIUM | Check for executable layers |
| JAX/Flax | .msgpack, .flax, .orbax, .jax | 🟡 LOW | Validate transforms |

Plus 10+ additional formats including ExecuTorch, TensorFlow Lite, Core ML, and more.
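As a rough triage aid, the table above can be turned into a lookup keyed on file extension. This is a minimal sketch, not how ModelAudit classifies files: `RISK_BY_EXTENSION` and `risk_for` are hypothetical names, the mapping only mirrors the rows listed in this README, and an extension is just a hint since a file's content can differ from its name.

```python
from pathlib import Path

# Risk levels copied from the table in this README (extension -> level).
# "SavedModel" is a directory layout rather than an extension, so it is omitted.
RISK_BY_EXTENSION = {
    ".pt": "HIGH", ".pth": "HIGH", ".ckpt": "HIGH", ".bin": "HIGH",
    ".pkl": "HIGH", ".pickle": "HIGH", ".dill": "HIGH",
    ".joblib": "HIGH",
    ".safetensors": "SAFE",
    ".gguf": "SAFE", ".ggml": "SAFE",
    ".onnx": "SAFE",
    ".pb": "MEDIUM",
    ".h5": "MEDIUM", ".keras": "MEDIUM", ".hdf5": "MEDIUM",
    ".msgpack": "LOW", ".flax": "LOW", ".orbax": "LOW", ".jax": "LOW",
}


def risk_for(path):
    """Return the table's risk level for a path, or "UNKNOWN" if unlisted."""
    return RISK_BY_EXTENSION.get(Path(path).suffix.lower(), "UNKNOWN")


for candidate in ["model.pt", "weights.safetensors", "notes.txt"]:
    print(candidate, "->", risk_for(candidate))
```

A lookup like this could help decide which files to scan first, but it does not replace scanning: a "SAFE" extension says nothing about what the file actually contains.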

View complete format documentation →

🎯 Common Use Cases

Pre-Deployment Security Checks

    modelaudit production_model.safetensors --format json --output security_report.json

CI/CD Pipeline Integration

    modelaudit models/ --exit-code-on-issues --timeout 300
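For pipelines driven from Python rather than a shell step, the command above could be wrapped roughly as follows. This is a sketch under assumptions: `build_scan_command` and `run_scan` are hypothetical helpers, the flags are the ones shown in this README, and combining them in a single invocation is assumed to be valid. The meaning of specific exit codes is not documented in this section, so the wrapper returns the code unchanged.

```python
import subprocess


def build_scan_command(target, report_path=None, timeout_seconds=None,
                       fail_on_issues=False):
    """Assemble a modelaudit command line from flags shown in this README."""
    cmd = ["modelaudit", target]
    if report_path is not None:
        cmd += ["--format", "json", "--output", report_path]
    if fail_on_issues:
        # Flag taken from the CI example; its exit codes are not specified here.
        cmd.append("--exit-code-on-issues")
    if timeout_seconds is not None:
        cmd += ["--timeout", str(timeout_seconds)]
    return cmd


def run_scan(target, **options):
    """Run the scan and return the process exit code as-is."""
    completed = subprocess.run(build_scan_command(target, **options), check=False)
    return completed.returncode


# Show the command that would be executed, without running it.
print(" ".join(build_scan_command("models/", fail_on_issues=True,
                                  timeout_seconds=300)))
```

Calling `run_scan("models/", fail_on_issues=True, timeout_seconds=300)` requires the `modelaudit` executable to be installed and on `PATH`.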

Third-Party Model Validation

    # Scan models from HuggingFace or cloud storage
    modelaudit https://huggingface.co/gpt2
    modelaudit s3://my-bucket/downloaded-model.pt

Compliance & Audit Reporting

    modelaudit model_package.zip --sbom compliance_report.json --verbose

View advanced usage examples →

⚙️ Installation Options

Full installation with all ML framework support (recommended for most users):

    pip install modelaudit[all]

Minimal installation with specific formats:

    # Basic installation
    pip install modelaudit

    # Add specific format support as needed
    pip install modelaudit[tensorflow]  # TensorFlow SavedModel
    pip install modelaudit[pytorch]     # PyTorch models
    pip install modelaudit[onnx]        # ONNX models

NumPy compatibility:

    # For full compatibility with all ML frameworks
    pip install modelaudit[numpy1]

    # Check scanner compatibility status
    modelaudit doctor --show-failed

Docker installation:

    docker pull ghcr.io/promptfoo/modelaudit:latest
    docker run --rm -v $(pwd):/data ghcr.io/promptfoo/modelaudit:latest model.pkl

📋 Output Formats

Human-readable output (default):

    $ modelaudit model.pkl

    ✓ Scanning model.pkl
    Files scanned: 1 | Issues found: 1 critical

    1. model.pkl (pos 28): [CRITICAL] Malicious code execution attempt
       Why: Contains os.system() call that could run arbitrary commands

JSON output for automation:

    {
      "files_scanned": 1,
      "issues": [
        {
          "message": "Malicious code execution attempt",
          "severity": "critical",
          "location": "model.pkl (pos 28)"
        }
      ]
    }
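A report in this shape can be consumed by a short script that decides whether a build should fail. The sketch below assumes only the three fields shown in the sample (`message`, `severity`, `location`); real reports may contain more. `blocking_issues` is a hypothetical helper, and treating only `critical` as blocking is an illustrative policy choice.

```python
import json

# The sample report from this README, embedded so the sketch is self-contained.
SAMPLE_REPORT = """
{
  "files_scanned": 1,
  "issues": [
    {
      "message": "Malicious code execution attempt",
      "severity": "critical",
      "location": "model.pkl (pos 28)"
    }
  ]
}
"""


def blocking_issues(report, blocking=("critical",)):
    """Return the issues whose severity is in the blocking set."""
    return [
        issue for issue in report.get("issues", [])
        if issue.get("severity") in blocking
    ]


report = json.loads(SAMPLE_REPORT)
for issue in blocking_issues(report):
    print(f'{issue["location"]}: {issue["message"]}')
```

In a pipeline, the same function could be applied to the file written by `--output`, with the script exiting non-zero when the returned list is not empty.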

🔧 Getting Help

- Documentation: promptfoo.dev/docs/model-audit/

- Troubleshooting: promptfoo.dev/docs/model-audit/troubleshooting/

- Issues: github.com/promptfoo/modelaudit/issues

For scanner compatibility issues:

    modelaudit doctor --show-failed

📝 License

This project is licensed under the MIT License - see the LICENSE file for details.

File details

Details for the file modelaudit-0.2.0.tar.gz.

File metadata

- Download URL: modelaudit-0.2.0.tar.gz

- Upload date:

- Size: 8.6 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 407e02f6633eb46f81903f6ca36a5849c105c54dfb0f49ef234f5993a7273171 |
| MD5 | 9c02212a639da73dce977baad06ffecd |
| BLAKE2b-256 | a5e09e7a23f8ae81cb663dc4d36d4be07425fc5ec0c93b2381fc8900a111d7a1 |

File details

Details for the file modelaudit-0.2.0-py3-none-any.whl.

File metadata

- Download URL: modelaudit-0.2.0-py3-none-any.whl

- Upload date:

- Size: 145.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 222ba84dcc869a37f0b5c079792808f1a640cc86dd2f9c01350ac75b7a88707e |
| MD5 | 6c6c267573a66b688cdfc9f477dd5592 |
| BLAKE2b-256 | e0a43194e8d6b1229fa5d6a4800862f7aa6ec8eda199ce9c561cf82ebdb06b52 |
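The SHA256 digests listed for each file can be checked after a manual download. The following is a minimal sketch using the standard library's `hashlib`; `sha256_of` and `matches_published_digest` are hypothetical helper names, and the two constants are copied from the hash tables on this page.

```python
import hashlib

# SHA256 digests copied from the "File hashes" tables on this page.
SDIST_SHA256 = "407e02f6633eb46f81903f6ca36a5849c105c54dfb0f49ef234f5993a7273171"
WHEEL_SHA256 = "222ba84dcc869a37f0b5c079792808f1a640cc86dd2f9c01350ac75b7a88707e"


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path, expected_hex):
    """Compare a file's SHA256 with a published hex digest, ignoring case."""
    return sha256_of(path) == expected_hex.strip().lower()
```

For example, `matches_published_digest("modelaudit-0.2.0.tar.gz", SDIST_SHA256)` should return `True` for an untampered copy of the source distribution, assuming the file sits in the current directory.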