Static scanning library for detecting malicious code, backdoors, and other security risks in ML model files


ModelAudit

Static security scanner for AI/ML model files. It detects malicious code, dangerous deserialization, risky module usage, and embedded secrets—all without loading or executing the model.

Documentation | Usage Examples | Supported Formats

Quick Start

# Install with all supported ML framework dependencies

pip install modelaudit[all]

# Scan a model file

modelaudit model.pkl

# Scan a directory

modelaudit ./models/

# Export results for automation

modelaudit model.pkl --format json --output results.json

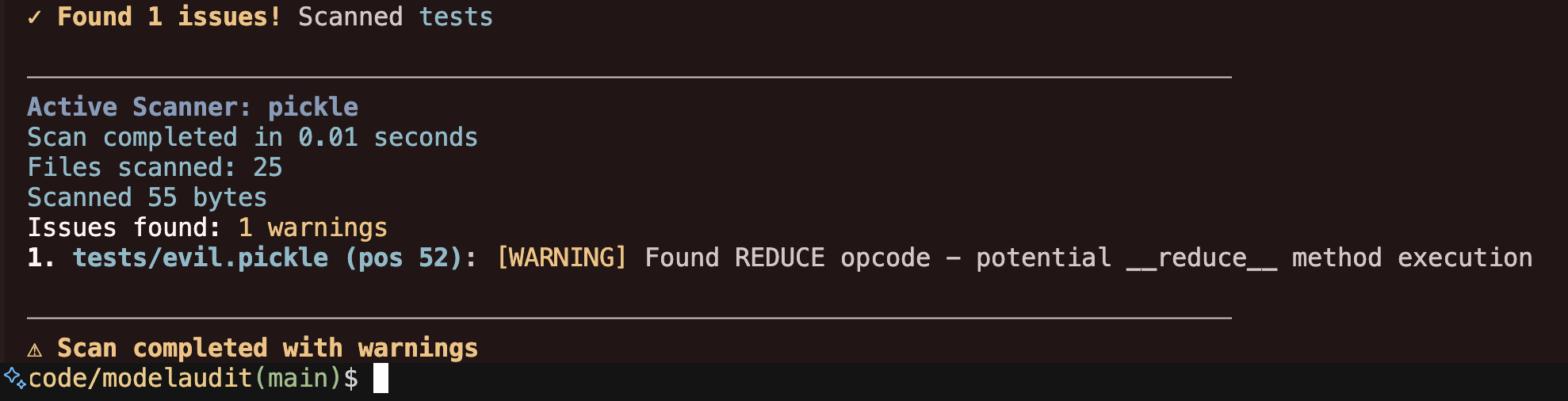

Example output:

$ modelaudit suspicious_model.pkl

✓ Scanning suspicious_model.pkl

Files scanned: 1 | Issues found: 1 critical, 1 warning

1. suspicious_model.pkl (pos 28): [CRITICAL] Malicious code execution attempt

Why: Contains os.system() call that could run arbitrary commands

2. suspicious_model.pkl (pos 52): [WARNING] Dangerous pickle deserialization

Why: Could execute code when the model loads

✗ 2 security issues found. See details above.

Installation

Recommended (includes common ML frameworks):

pip install modelaudit[all]

Basic installation:

# Core functionality only (pickle, numpy, archives)

pip install modelaudit

Specific frameworks:

pip install modelaudit[tensorflow] # TensorFlow (.pb)

pip install modelaudit[pytorch] # PyTorch (.pt, .pth)

pip install modelaudit[h5] # Keras (.h5, .keras)

pip install modelaudit[onnx] # ONNX (.onnx)

pip install modelaudit[safetensors] # SafeTensors (.safetensors)

# Multiple frameworks

pip install modelaudit[tensorflow,pytorch,h5]

Additional features:

pip install modelaudit[cloud] # S3, GCS, Azure storage

pip install modelaudit[coreml] # Apple Core ML

pip install modelaudit[flax] # JAX/Flax models

pip install modelaudit[mlflow] # MLflow registry

pip install modelaudit[huggingface] # Hugging Face integration

Compatibility:

# NumPy 1.x compatibility (some frameworks require NumPy < 2.0)

pip install modelaudit[numpy1]

# For CI/CD environments (omits dependencies like TensorRT that may not be available)

pip install modelaudit[all-ci]

Docker:

docker pull ghcr.io/promptfoo/modelaudit:latest

# Linux/macOS

docker run --rm -v "$(pwd)":/app ghcr.io/promptfoo/modelaudit:latest model.pkl

# Windows

docker run --rm -v "%cd%":/app ghcr.io/promptfoo/modelaudit:latest model.pkl

Security Checks

Code Execution Detection

- Dangerous Python modules and builtins: os, sys, subprocess, eval, exec

- Pickle opcodes: REDUCE, GLOBAL, INST, OBJ, NEWOBJ, STACK_GLOBAL, BUILD, NEWOBJ_EX

- Embedded executable file detection
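The opcode check can be illustrated with Python's standard `pickletools` module, which walks a pickle stream without unpickling it. This is only a minimal sketch of the general idea, not ModelAudit's actual scanner: the function name `find_suspicious_opcodes` and the `Payload` class are hypothetical, and the opcode set is copied from the list above.

```python
import pickle
import pickletools

# Opcode names copied from the list above.
SUSPICIOUS_OPCODES = {
    "REDUCE", "GLOBAL", "INST", "OBJ",
    "NEWOBJ", "STACK_GLOBAL", "BUILD", "NEWOBJ_EX",
}


def find_suspicious_opcodes(data: bytes) -> list[tuple[int, str]]:
    """Return (byte offset, opcode name) pairs without unpickling the data."""
    hits = []
    # genops() only parses the opcode stream; it never imports or calls anything.
    for opcode, _arg, pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            hits.append((pos, opcode.name))
    return hits


class Payload:
    """Hypothetical object whose pickle asks the loader to call a function."""

    def __reduce__(self):
        return (print, ("this would run at load time",))


benign = pickle.dumps([1, 2, 3])
risky = pickle.dumps(Payload())  # dumps() only serializes; print is not called

print(find_suspicious_opcodes(benign))
print(find_suspicious_opcodes(risky))
```

A plain list of integers produces no hits, while the `__reduce__` payload produces a global-lookup opcode followed by `REDUCE`. Opcodes such as `BUILD` also appear in many harmless pickles, which is one reason a real scanner needs more context than a simple set membership test.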

Embedded Data Extraction

- API keys, tokens, and credentials in model weights/metadata

- URLs, IP addresses, and network endpoints

- Suspicious configuration properties
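To make the URL and IP address check concrete, the sketch below searches a raw byte blob with regular expressions. It is an assumption-laden illustration rather than ModelAudit's implementation: the patterns, the `find_endpoints` helper, and the sample blob are all hypothetical, and a pattern this simple will also match harmless dotted version strings.

```python
import re

# Deliberately simple patterns; real scanners need stricter rules.
URL_RE = re.compile(rb"https?://[\x21-\x7e]+")
IPV4_RE = re.compile(rb"(?<![\d.])(?:\d{1,3}\.){3}\d{1,3}(?![\d.])")


def find_endpoints(blob: bytes) -> dict[str, list[str]]:
    """Collect URL-like and IPv4-like strings from raw bytes."""
    urls = [m.decode("ascii") for m in URL_RE.findall(blob)]
    ips = [
        m.decode("ascii")
        for m in IPV4_RE.findall(blob)
        # Discard matches whose octets exceed 255.
        if all(int(part) <= 255 for part in m.split(b"."))
    ]
    return {"urls": urls, "ips": ips}


# Hypothetical model bytes with an embedded URL and IP address.
blob = b"\x00\x01weights\x00http://evil.example/payload\x00connect 10.0.0.5\xff"
result = find_endpoints(blob)
print(result)
```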

Archive Security

- Path traversal attacks in ZIP/TAR archives

- Executable files within model packages

- Malicious filenames and directory structures
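A path traversal check on a ZIP archive can be sketched with the standard `zipfile` module by inspecting member names before anything is extracted. The helpers `is_unsafe_member` and `unsafe_members` are hypothetical names for this example and do not reflect ModelAudit's internals.

```python
import io
import zipfile
from pathlib import PurePosixPath


def is_unsafe_member(name: str) -> bool:
    """Flag absolute paths and names containing '..' components."""
    path = PurePosixPath(name.replace("\\", "/"))
    return path.is_absolute() or ".." in path.parts


def unsafe_members(archive: zipfile.ZipFile) -> list[str]:
    """Return member names that would escape the extraction directory."""
    return [name for name in archive.namelist() if is_unsafe_member(name)]


# Build a small in-memory archive with one benign and one traversal entry.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as zf:
    zf.writestr("weights/layer0.bin", b"\x00" * 4)
    zf.writestr("../../outside.sh", b"echo hi")

with zipfile.ZipFile(buffer) as zf:
    flagged = unsafe_members(zf)

print(flagged)
```

Only the names are read here; no member is written to disk, which matches the static, no-execution approach described at the top of this README.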

ML Framework Analysis

- TensorFlow operations: PyFunc, PyFuncStateless

- Keras unsafe layers and custom objects

- Template injection in model configurations

Context-Aware Analysis

- Distinguishes legitimate ML framework patterns from genuine threats, reducing false positives in complex model files

Supported Formats

ModelAudit includes 29 specialized scanners for ML model formats (see complete list):

| Format | Extensions | Security Focus |
|---|---|---|
| Pickle | .pkl, .pickle, .dill, .pt, .pth | Code execution, malicious opcodes, deserialization |
| Archives | .zip, .tar, .gz, .7z, .bz2 | Path traversal, embedded executables |
| TensorFlow | .pb, SavedModel directories | Dangerous operations, custom ops |
| Keras | .h5, .keras, .hdf5 | Unsafe layers, custom objects |
| ONNX | .onnx | Custom operators, metadata |
| SafeTensors | .safetensors | Header validation, metadata |
| GGUF/GGML | .gguf, .ggml | Header validation, metadata |
| Joblib | .joblib | Pickled objects, scikit-learn |
| JAX/Flax | .msgpack, .flax, .orbax | Serialized transforms |
| NumPy | .npy, .npz | Array metadata, pickle objects |
| Core ML | .mlmodel | Custom layers, metadata |
| ExecuTorch | .ptl, .pte | Mobile model validation |

Plus scanners for TensorFlow Lite, TensorRT, PaddlePaddle, OpenVINO, text files, and configuration formats.

Complete format documentation →

Usage Examples

Basic Scanning

# Scan single file

modelaudit model.pkl

# Scan directory

modelaudit ./models/

# Strict mode (fail on warnings)

modelaudit model.pkl --strict

CI/CD Integration

# JSON output for automation

modelaudit models/ --format json --output results.json

# Generate SBOM report

modelaudit model.pkl --sbom compliance_report.json

# Disable colors in CI

NO_COLOR=1 modelaudit models/

Remote Sources

# Hugging Face models (via direct URL or hf:// scheme)

modelaudit https://huggingface.co/gpt2

modelaudit hf://microsoft/DialoGPT-medium

# Cloud storage

modelaudit s3://bucket/model.pt

modelaudit gs://bucket/models/

modelaudit https://account.blob.core.windows.net/container/model.pt

# MLflow registry

modelaudit models:/MyModel/Production

# JFrog Artifactory

modelaudit https://company.jfrog.io/repo/model.pt

Command Options

- --format - Output format: text, json, sarif

- --output - Write results to file

- --verbose - Detailed output

- --quiet - Minimal output

- --strict - Fail on warnings, scan all files

- --timeout - Override scan timeout

- --max-size - Set size limits (e.g., 10 GB)

- --dry-run - Preview without scanning

- --progress - Force progress display

- --sbom - Generate CycloneDX SBOM

- --blacklist - Additional patterns to flag

- --no-cache - Disable result caching

Output Formats

Text (default)

$ modelaudit model.pkl

✓ Scanning model.pkl

Files scanned: 1 | Issues found: 1 critical

1. model.pkl (pos 28): [CRITICAL] Malicious code execution attempt

Why: Contains os.system() call that could run arbitrary commands

JSON (for automation)

modelaudit model.pkl --format json

{

"files_scanned": 1,

"issues": [

{

"message": "Malicious code execution attempt",

"severity": "critical",

"location": "model.pkl (pos 28)"

}

]

}
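For automation, a report shaped like the one above can be summarized with a few lines of Python. This sketch assumes only the fields visible in the sample (`files_scanned`, `issues`, `message`, `severity`, `location`); a real report may carry additional fields, and `count_by_severity` is a hypothetical helper name.

```python
import json
from collections import Counter

# Sample report copied from the JSON output shown above.
SAMPLE = """
{
  "files_scanned": 1,
  "issues": [
    {
      "message": "Malicious code execution attempt",
      "severity": "critical",
      "location": "model.pkl (pos 28)"
    }
  ]
}
"""


def count_by_severity(report: dict) -> Counter:
    """Tally issues per severity, tolerating missing keys."""
    return Counter(
        issue.get("severity", "unknown") for issue in report.get("issues", [])
    )


report = json.loads(SAMPLE)
counts = count_by_severity(report)

print(f"files scanned: {report['files_scanned']}")
for severity, total in counts.items():
    print(f"{severity}: {total}")
```

In a pipeline, the same function could be pointed at the file written by `--output results.json` by replacing `json.loads(SAMPLE)` with `json.load()` on that file.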

SARIF (for security tools)

modelaudit model.pkl --format sarif --output results.sarif

Troubleshooting

Check scanner availability

modelaudit doctor --show-failed

NumPy compatibility issues

# Use NumPy 1.x compatibility mode

pip install modelaudit[numpy1]

Missing dependencies

# ModelAudit shows exactly what to install

modelaudit your-model.onnx

# Output: "Install with 'pip install modelaudit[onnx]'"

Exit Codes

- 0 - No security issues found

- 1 - Security issues detected

- 2 - Scan errors occurred
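A wrapper script can branch on these exit codes. The sketch below is one possible approach, assuming `modelaudit` is on the PATH; the `interpret_exit_code` and `scan` helpers and the status labels are hypothetical names chosen for this example.

```python
import subprocess

# Meanings taken from the exit code list above.
EXIT_MEANINGS = {
    0: "clean",   # no security issues found
    1: "issues",  # security issues detected
    2: "error",   # scan errors occurred
}


def interpret_exit_code(code: int) -> str:
    """Map a modelaudit exit code to a short status label."""
    return EXIT_MEANINGS.get(code, "unknown")


def scan(path: str) -> str:
    """Run the CLI on one path and return the status label."""
    completed = subprocess.run(
        ["modelaudit", path],
        capture_output=True,
        text=True,
    )
    return interpret_exit_code(completed.returncode)
```

A CI job might call `scan("model.pkl")` and fail the build on any status other than `"clean"`. Treating `"error"` as a failure too avoids passing a model that was never actually scanned.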

Authentication

ModelAudit uses environment variables for authenticating to remote services:

# JFrog Artifactory

export JFROG_API_TOKEN=your_token

# MLflow

export MLFLOW_TRACKING_URI=http://localhost:5000

# AWS, Google Cloud, and Azure

# Authentication is handled automatically by the respective client libraries

# (e.g., via IAM roles, `aws configure`, `gcloud auth login`, or environment variables).

# For specific env var setup, refer to the library's documentation.

export AWS_ACCESS_KEY_ID=your_access_key

export AWS_SECRET_ACCESS_KEY=your_secret_key

export GOOGLE_APPLICATION_CREDENTIALS=/path/to/service-account.json

# Hugging Face

export HF_TOKEN=your_token

Documentation

- Documentation: promptfoo.dev/docs/model-audit/

- Usage Examples: promptfoo.dev/docs/model-audit/usage/

- Report Issues: Contact support at promptfoo.dev

📝 License

This project is licensed under the MIT License - see the LICENSE file for details.


File details

Details for the file modelaudit-0.2.5.tar.gz.

File metadata

- Download URL: modelaudit-0.2.5.tar.gz

- Upload date:

- Size: 9.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 424b9f9c54f1e44294bbf31f186a8fed943b430e0c6d6b93cf7e7d7ce7b2bb77 |
| MD5 | 3c30ab6f7563b751c693862893fe0ad1 |
| BLAKE2b-256 | 05d581328d18bb4446a3d965927fa2a88a58fbd4adaa3d56e1381dde18aa46ec |

File details

Details for the file modelaudit-0.2.5-py3-none-any.whl.

File metadata

- Download URL: modelaudit-0.2.5-py3-none-any.whl

- Upload date:

- Size: 416.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 446aec92fdf7d4effa517f132a7b04aa7397a621f5bd3ee64d4dbd3a0e2d6bab |
| MD5 | 75a3118f2817931b374fab4451500958 |
| BLAKE2b-256 | f2385c92d96ab1516f5d46e06d1b8d5f7a21e570c5aebf6313cafa8f94e6375c |