Using OME specifications with Apache Arrow for fast, queryable, and language-agnostic bioimage data.

Project description

Open, interoperable, and queryable microscopy images with OME Arrow

OME-Arrow uses Open Microscopy Environment (OME) specifications through Apache Arrow for fast, queryable, and language-agnostic bioimage data.

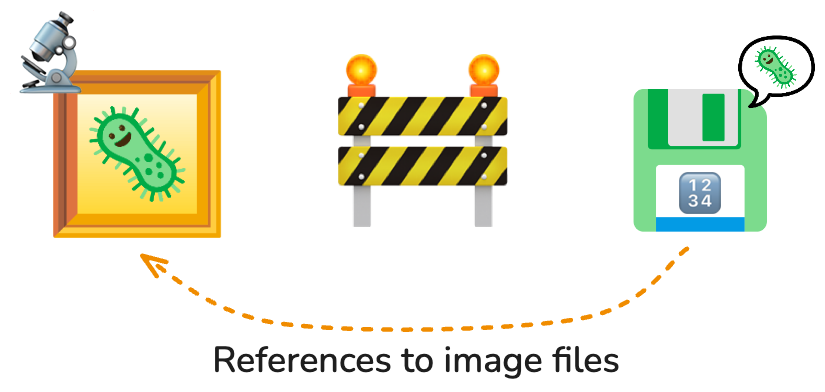

Images are often left behind from the data model, referenced but excluded from databases.

OME-Arrow brings images back into the story.

OME Arrow enables image data to be stored alongside metadata or derived data such as single-cell morphology features. Images in OME Arrow are composed of multilayer structs so they may be stored as values within tables. This means you can store, query, and build relationships on data from the same location using any system that is compatible with Apache Arrow (including Parquet) through common data interfaces (such as SQL and DuckDB).

Project focus

This package is intentionally focused on per-image work rather than large-batch handling (though users or other projects may use it for those purposes).

- For visualizing OME Arrow and OME Parquet data in Napari, please see the napari-ome-arrow Napari plugin.

- For more comprehensive handling of many images and features in the context of the OME Parquet format, please see the CytoDataFrame project (and relevant example notebook).

Installation

Install OME Arrow from PyPI or from source:

# install from pypi

pip install ome-arrow

# install directly from source

pip install git+https://github.com/wayscience/ome-arrow.git

Quick start

See below for a quick start guide. Please also refer to the example notebook: Learning to fly with OME-Arrow.

from ome_arrow import OMEArrow

# Ingest a tif image through a convenient OME Arrow class

# We can also ingest OME-Zarr or NumPy arrays.

oa_image = OMEArrow(

    data="your_image.tif"

)

# Access the OME Arrow struct itself

# (compatible with Arrow-compliant data storage).

oa_image.data

# Show information about the image.

oa_image.info()

# Display the image with matplotlib.

oa_image.view(how="matplotlib")

# Display the image with pyvista

# (great for ZYX 3D images; install extras: `pip install 'ome-arrow[viz]'`).

oa_image.view(how="pyvista")

# Export to OME-Parquet.

# We can also export OME-TIFF, OME-Zarr or NumPy arrays.

oa_image.export(how="ome-parquet", out="your_image.ome.parquet")

# Export to Vortex (install extras: `pip install 'ome-arrow[vortex]'`).

oa_image.export(how="vortex", out="your_image.vortex")

Tensor view (DLPack)

For tensor-focused workflows (PyTorch/JAX), use tensor_view and DLPack export.

from ome_arrow import OMEArrow

oa = OMEArrow("your_image.ome.parquet")

# Spatial ROI per plane (YX convention)

view = oa.tensor_view(t=0, z=0, roi=(32, 32, 128, 128), layout="CYX")

# Convenience 3D ROI (x, y, z, w, h, d)

view3d = oa.tensor_view(roi3d=(32, 32, 2, 128, 128, 4), layout="TZCYX")

# 3D tiled iteration over (z, y, x)

for cap in view3d.iter_tiles_3d(tile_size=(2, 64, 64), mode="numpy"):

    pass
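To make the ROI and tiling conventions above more concrete, the following pure-Python sketch shows how an ROI could map to YX slices and how a (z, y, x) tile size could partition a volume. The helper names roi_to_yx_slices and iter_tile_bounds_3d are hypothetical and not part of ome-arrow. Reading the 2D ROI as (x, y, w, h) is an assumption inferred from the 3D (x, y, z, w, h, d) comment, and clipping edge tiles to the volume bounds is also an assumption.

```python
from itertools import product
from typing import Iterator, Tuple


def roi_to_yx_slices(roi: Tuple[int, int, int, int]) -> Tuple[slice, slice]:
    """Convert an assumed (x, y, w, h) ROI into (Y, X) slices."""
    x, y, w, h = roi
    return slice(y, y + h), slice(x, x + w)


def iter_tile_bounds_3d(
    shape_zyx: Tuple[int, int, int],
    tile_size: Tuple[int, int, int],
) -> Iterator[Tuple[slice, ...]]:
    """Yield (Z, Y, X) slices that cover a volume tile by tile.

    Tiles at the far edges are clipped to the volume bounds (an assumption).
    """
    starts = [range(0, dim, step) for dim, step in zip(shape_zyx, tile_size)]
    for origin in product(*starts):
        yield tuple(
            slice(start, min(start + step, dim))
            for start, step, dim in zip(origin, tile_size, shape_zyx)
        )


# ROI from the example above, interpreted as (x, y, w, h).
y_slice, x_slice = roi_to_yx_slices((32, 32, 128, 128))

# A toy 4 x 128 x 128 (ZYX) volume split into 2 x 64 x 64 tiles.
tiles = list(iter_tile_bounds_3d((4, 128, 128), (2, 64, 64)))
```

The slices can index any array-like object that follows NumPy-style slicing, which keeps the tiling logic independent of how pixels are stored.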

Lazy scan-style convention (Polars-like):

from ome_arrow import OMEArrow

oa = OMEArrow.scan("your_image.ome.parquet") # deferred load

# First: queue lazy spatial/index slicing

lazy_crop = oa.slice_lazy(0, 512, 0, 512).slice_lazy(64, 256, 64, 256)

cropped = lazy_crop.collect()

# slice_lazy returns a new OMEArrow plan; collect does not mutate `oa`.

# Build tensor_view from the returned sliced object to reuse that plan.

tensor_view_result = cropped.tensor_view(t=0, z=slice(0, 4), roi=(0, 0, 192, 192))

arr = tensor_view_result.to_numpy()
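The deferred pattern above (each slice returns a new plan, and nothing is read until collect) can be sketched in plain Python. LazyCrop below is a hypothetical stand-in written for illustration, not the ome-arrow implementation. The argument order (x_min, x_max, y_min, y_max) and the rule that a chained slice is relative to the previous crop are both assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LazyCrop:
    """Hypothetical deferred crop plan; no pixels are touched until collect()."""

    source: List[List[int]]
    # (x_min, x_max, y_min, y_max) expressed in source coordinates.
    bounds: Tuple[int, int, int, int]

    def slice_lazy(self, x_min: int, x_max: int, y_min: int, y_max: int) -> "LazyCrop":
        """Return a NEW plan; the requested window is relative to the current crop."""
        cur_x_min, cur_x_max, cur_y_min, cur_y_max = self.bounds
        new_bounds = (
            cur_x_min + x_min,
            min(cur_x_min + x_max, cur_x_max),
            cur_y_min + y_min,
            min(cur_y_min + y_max, cur_y_max),
        )
        return LazyCrop(self.source, new_bounds)

    def collect(self) -> List[List[int]]:
        """Materialize the crop described by the accumulated bounds."""
        x_min, x_max, y_min, y_max = self.bounds
        return [row[x_min:x_max] for row in self.source[y_min:y_max]]


# Toy 8 x 8 image where each value encodes its position as 10 * y + x.
grid = [[10 * y + x for x in range(8)] for y in range(8)]
plan = LazyCrop(grid, (0, 8, 0, 8))

# Chain two slices, then materialize once.
nested = plan.slice_lazy(0, 6, 0, 6).slice_lazy(1, 4, 2, 5)
cropped_rows = nested.collect()
```

Because each call returns a new frozen plan, the original plan stays unchanged and can be reused for other crops.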

Advanced options:

- chunk_policy="auto" | "combine" | "keep" controls ChunkedArray handling.

- channel_policy="error" | "first" controls behavior when dropping C from layout.

See full docs: docs/src/dlpack.md

Tensor ingest (PyTorch/JAX)

You can ingest torch or JAX arrays directly with OMEArrow(...).

You can also use explicit helper functions from ome_arrow.ingest.

Why this is useful:

- It reduces compute overhead by removing boilerplate conversion code from separate model/data pipelines that already use torch or JAX tensors (i.e., it provides a direct port of OME-Arrow into popular deep learning libraries).

- However, this is more about clean interoperability than dramatic end-to-end speedups (although we expect fewer handoffs to result in speedups). Specifically:

- It makes it easier for a user to update dimension ordering input in the same place without requiring separate functionality (see argument dim_order).

- This smooths handoffs and reduces mistakes when moving between tensor layouts and OME-Arrow records. For example, CPU torch tensors often expose a NumPy view without an extra copy.

- Ingest still materializes OME-Arrow planes/chunks.

from ome_arrow import OMEArrow

# Direct constructor support:

# inferred defaults are rank-based:

# 2D -> "YX", 3D -> "ZYX", 4D -> "TCYX", 5D -> "TCZYX"

oa_torch = OMEArrow(torch_tensor)

oa_jax = OMEArrow(jax_array)

# Optional: override dim order when shape is ambiguous

oa_zyx = OMEArrow(torch_volume, dim_order="ZYX")

from ome_arrow.ingest import from_torch_array, from_jax_array

scalar_torch = from_torch_array(torch_tensor, dim_order="TCYX")

scalar_jax = from_jax_array(jax_array, dim_order="TCYX")
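The rank-based defaults in the comments above, and the reason an explicit dim_order helps with ambiguous shapes, can be sketched without any array library. The helpers infer_dim_order and axis_sizes are hypothetical names used only for this illustration; they are not ome-arrow functions, and filling missing axes with size 1 is an assumption.

```python
from typing import Dict, Optional, Sequence

# Rank-based defaults as listed in the comments above.
DEFAULT_DIM_ORDER = {2: "YX", 3: "ZYX", 4: "TCYX", 5: "TCZYX"}


def infer_dim_order(ndim: int) -> str:
    """Return the default dimension order for a given array rank."""
    if ndim not in DEFAULT_DIM_ORDER:
        raise ValueError(f"unsupported rank: {ndim}")
    return DEFAULT_DIM_ORDER[ndim]


def axis_sizes(shape: Sequence[int], dim_order: Optional[str] = None) -> Dict[str, int]:
    """Map a shape to per-axis sizes, filling missing T/C/Z axes with 1."""
    order = dim_order or infer_dim_order(len(shape))
    if len(order) != len(shape) or not set(order) <= set("TCZYX"):
        raise ValueError(f"dim_order {order!r} does not match shape {tuple(shape)}")
    sizes = dict.fromkeys("TCZYX", 1)
    sizes.update(zip(order, shape))
    return sizes


# The same 3D shape means different things depending on dim_order.
as_volume = axis_sizes((5, 64, 64))                     # default "ZYX"
as_channels = axis_sizes((5, 64, 64), dim_order="CYX")  # explicit override
```

Here the default reading treats the leading axis as five Z planes, while the override treats it as five channels, which is the kind of ambiguity the dim_order argument is meant to resolve.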

Notes:

- Torch/JAX support is optional.

- Install extras as needed: pip install "ome-arrow[dlpack-torch]" or pip install "ome-arrow[dlpack-jax]".

- Torch tensors are detached and converted on CPU for ingest.

- dim_order is accepted only for NumPy/torch/JAX array inputs.

- Ingest now passes flattened NumPy pixel buffers directly to Arrow.

- This avoids materializing Python list payloads per plane/chunk.

Benchmarking lazy reads

Use the lightweight benchmark utility in benchmarks/ to compare lazy tensor read paths (TIFF source-backed, Parquet planes, Parquet chunks):

uv run python benchmarks/benchmark_lazy_tensor.py --repeats 5 --warmup 1

Notes:

- This benchmark is for local iteration and relative comparisons.

- It is not part of CI pass/fail checks.

- CI also runs this benchmark in a dedicated benchmark_canary job and uploads benchmark-results.json as a workflow artifact.
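For readers unfamiliar with the repeats and warmup options, here is a minimal timing harness in the same spirit, using only the standard library. It is a generic sketch and not the code in benchmarks/benchmark_lazy_tensor.py; the function name benchmark and the min_ms and max_ms keys are assumptions, while median_ms mirrors the field named in this section.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(fn: Callable[[], object], repeats: int = 5, warmup: int = 1) -> Dict[str, float]:
    """Time fn() and report summary statistics in milliseconds."""
    # Warmup calls are executed but not timed (caches, lazy imports, etc.).
    for _ in range(warmup):
        fn()

    samples_ms = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)

    return {
        "median_ms": statistics.median(samples_ms),
        "min_ms": min(samples_ms),
        "max_ms": max(samples_ms),
    }


calls = []
result = benchmark(lambda: calls.append(sum(range(10_000))), repeats=5, warmup=1)
```

Reporting the median rather than the mean makes the result less sensitive to a single slow run, which suits relative comparisons on a local machine.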

Recalibrating benchmarks/ci-baseline.json:

- Run the benchmark on main a few times (for example 3-5 runs): uv run python benchmarks/benchmark_lazy_tensor.py --repeats 7 --warmup 2 --json-out benchmark-results.json

- For each case, collect the observed median_ms values.

- Update benchmarks/ci-baseline.json with stable medians from those runs (prefer a conservative value near the slower side, not the fastest sample).

- Keep CI canary tolerance (regression_factor + absolute_slack_ms) unchanged unless you have repeated false positives.
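The recalibration steps above can be sketched as a small calculation. Everything here is illustrative: the helper names, the choice of the upper quartile as the "conservative" value, and the way regression_factor and absolute_slack_ms are combined are assumptions, not the project's actual CI logic.

```python
import math
from typing import Sequence


def conservative_baseline(median_ms_runs: Sequence[float], quantile: float = 0.75) -> float:
    """Pick a baseline near the slower side of the observed medians."""
    if not median_ms_runs:
        raise ValueError("need at least one run")
    ordered = sorted(median_ms_runs)
    index = math.ceil(quantile * (len(ordered) - 1))
    return ordered[index]


def is_regression(
    observed_ms: float,
    baseline_ms: float,
    regression_factor: float,
    absolute_slack_ms: float,
) -> bool:
    """Assumed canary rule: flag runs slower than baseline * factor + slack."""
    return observed_ms > baseline_ms * regression_factor + absolute_slack_ms


# median_ms values for one benchmark case across five runs on main (made up).
runs = [10.0, 12.0, 11.0, 15.0, 11.5]
baseline = conservative_baseline(runs)

flagged = is_regression(20.0, baseline, regression_factor=1.5, absolute_slack_ms=1.0)
accepted = is_regression(18.0, baseline, regression_factor=1.5, absolute_slack_ms=1.0)
```

Choosing a value above the middle of the observed medians, rather than the fastest sample, leaves headroom for normal runner noise before the tolerance is applied.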

Contributing, Development, and Testing

Please see our contributing documentation for more details on contributions, development, and testing.

Related projects

OME Arrow is used by, or inspired by, the following projects. Check them out!

- napari-ome-arrow: enables you to view OME Arrow and related images.

- nViz: focuses on ingesting and visualizing various 3D image data.

- CytoDataFrame: provides a DataFrame-like experience for viewing feature and microscopy image data within Jupyter notebook interfaces and creating OME Parquet files.

- coSMicQC: performs quality control on microscopy feature datasets, visualized using CytoDataFrames.

