Tiny CLI for OpenAI image generation. Prompt in, PNG out. Model-agnostic.


open-image


Why another CLI?

Every serious image-gen workflow needs a stable, forgettable command — one you can pipe into, script around, and re-run six months later without rewriting. The official SDKs are fine for apps; they're heavy for "just give me a PNG."

open-image is one file, ~290 lines, pure stdlib + openai. No framework, no config, no lock-in to a specific model.

pip install open-image

export OPENAI_API_KEY=sk-...

open-image --prompt "a red fox in a snowy forest, cinematic"

# → /abs/path/output/20260423-223012-a1b2c3d4.png

That's it.

Features

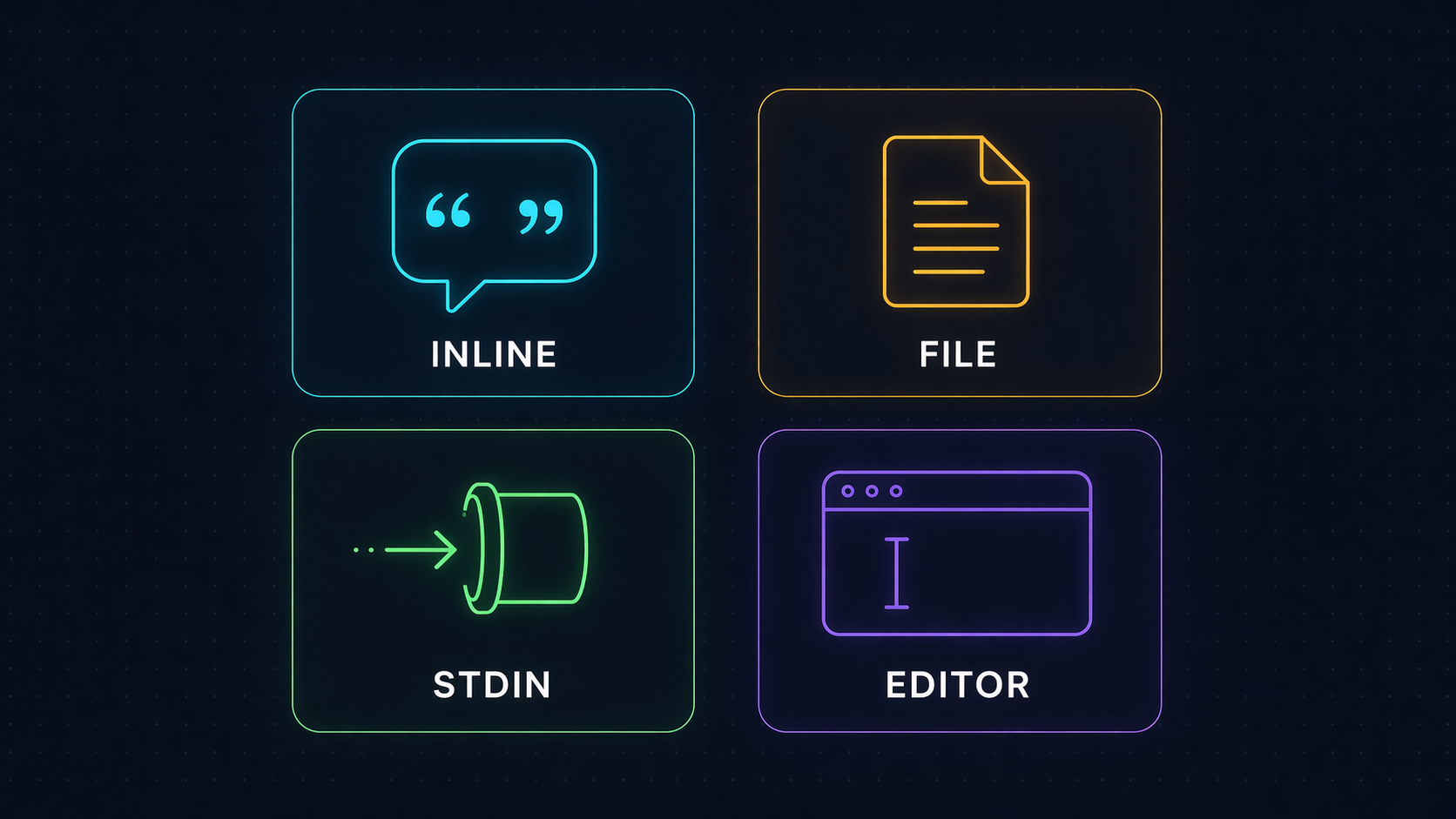

Four ways to feed a prompt

| Method | Example |
|---|---|
| Inline | open-image --prompt "a red fox in snow" |
| File | open-image --prompt-file prompts/scene.txt |
| Stdin | echo "a blue cat" \| open-image |
| Editor | open-image (no args in a TTY → opens $EDITOR if set; otherwise notepad on Windows, vi elsewhere) |

The resolver tries them in that order and uses the first source that is present. Lines starting with # in the editor buffer are stripped, so you can write notes to yourself without polluting the prompt.
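A minimal Python sketch of that priority order. The helper names (resolve_prompt, strip_comment_lines, edit_in_editor) are hypothetical and this is not the package's actual source; it assumes stdin counts as a source whenever it is not a TTY, and that comment stripping applies only to the editor buffer.

```python
import os
import shlex
import subprocess
import sys
import tempfile
from typing import Callable, Optional, TextIO


def strip_comment_lines(text: str) -> str:
    """Remove lines that start with '#', then trim surrounding whitespace."""
    kept = [line for line in text.splitlines() if not line.startswith("#")]
    return "\n".join(kept).strip()


def edit_in_editor() -> str:
    """Open an empty temp file in $EDITOR (notepad on Windows, vi otherwise)."""
    editor = os.environ.get("EDITOR") or ("notepad" if os.name == "nt" else "vi")
    fd, path = tempfile.mkstemp(suffix=".txt")
    os.close(fd)
    try:
        # shlex.split lets EDITOR carry arguments, e.g. "code --wait".
        cmd = shlex.split(editor, posix=(os.name != "nt")) + [path]
        subprocess.run(cmd, check=True)
        with open(path, encoding="utf-8") as fh:
            return fh.read()
    finally:
        os.remove(path)


def resolve_prompt(
    prompt: Optional[str] = None,
    prompt_file: Optional[str] = None,
    stdin: TextIO = sys.stdin,
    editor: Callable[[], str] = edit_in_editor,
) -> str:
    """Pick the first available prompt source: inline, file, stdin, editor."""
    if prompt:                                   # 1. --prompt
        text = prompt
    elif prompt_file:                            # 2. --prompt-file
        with open(prompt_file, encoding="utf-8") as fh:
            text = fh.read()
    elif not stdin.isatty():                     # 3. something was piped in
        text = stdin.read()
    else:                                        # 4. interactive TTY: ask the user
        text = strip_comment_lines(editor())
    text = text.strip()
    if not text:
        raise SystemExit("ERROR: Empty prompt.")
    return text
```

Passing stdin and the editor function as parameters keeps the logic testable without a real terminal; a CLI entry point would simply call resolve_prompt(args.prompt, args.prompt_file).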

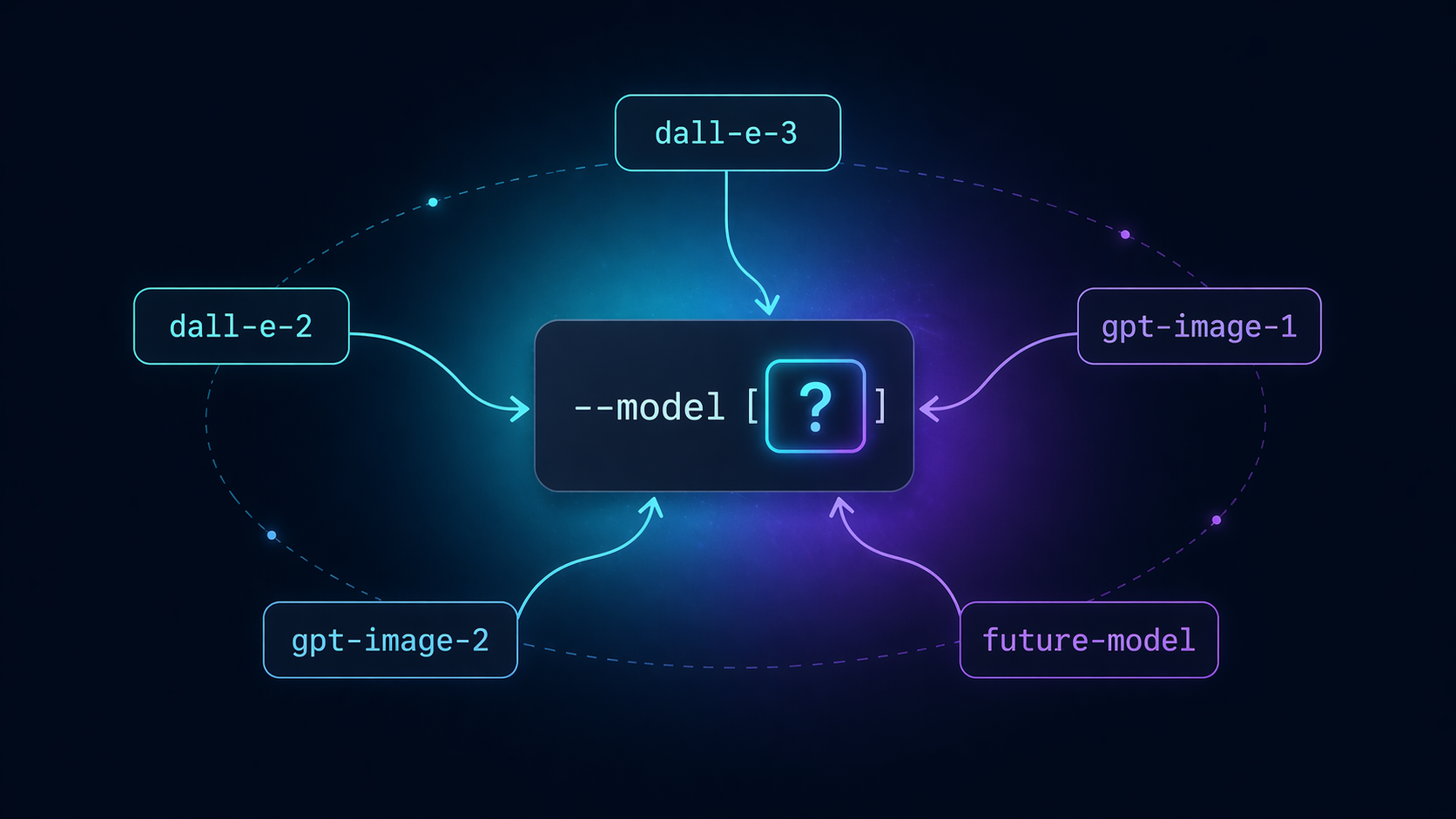

Model-agnostic by design

--model is a flag, not a constant. The day a new image model ships, swap the string — no code change, no version bump, no fork:

open-image --model gpt-image-2 --prompt "..." # default; requires org verification

open-image --model gpt-image-1 --prompt "..." # transparency, output_format support

open-image --model future-model --prompt "..." # whenever it arrives

Default is gpt-image-2. Change per call, or alias open-image='open-image --model gpt-image-1' in your shell if you prefer a different default.

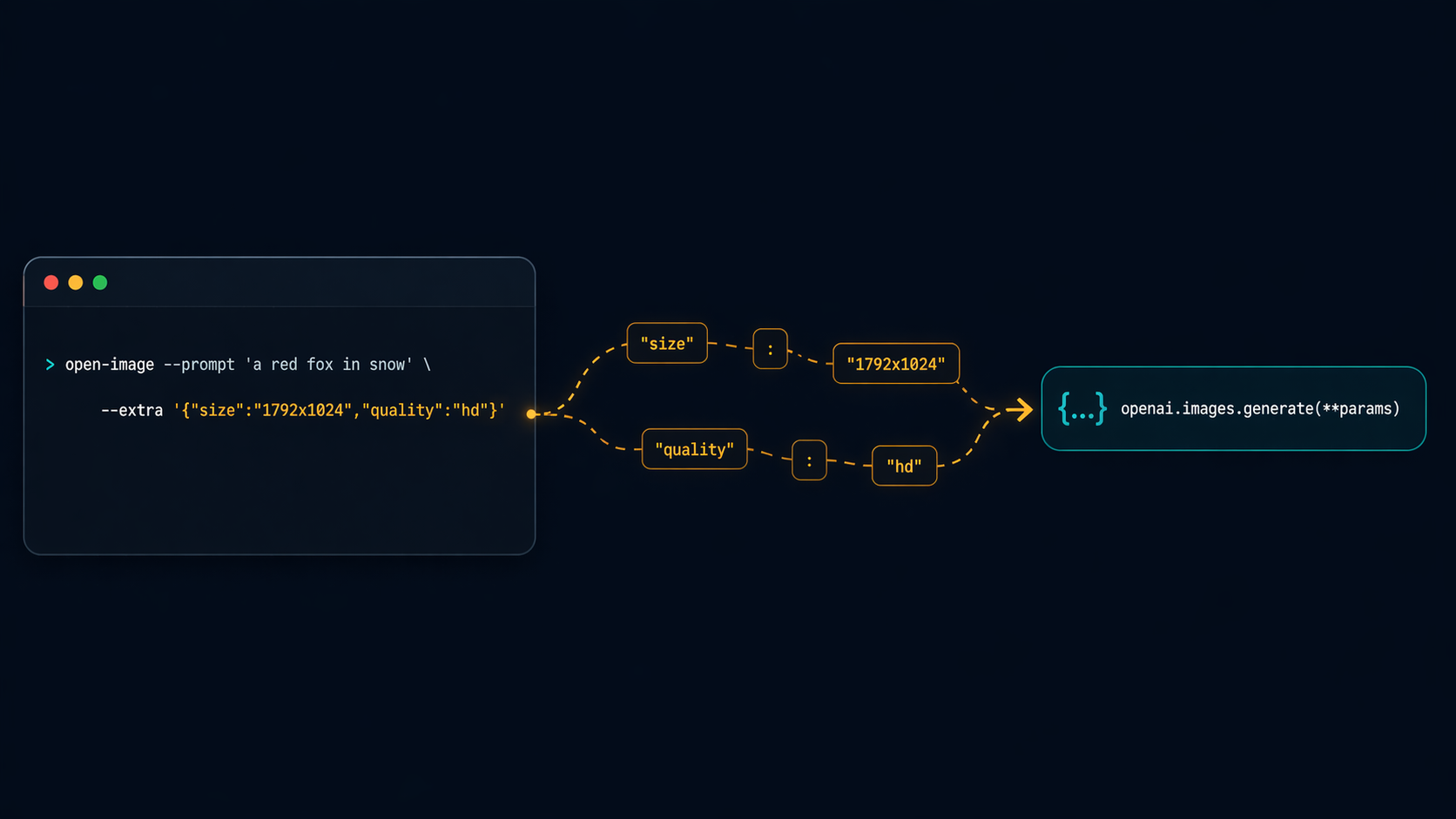

--extra escape hatch

--extra forwards any keyword the API accepts verbatim to openai.images.generate(**params). There is zero client-side validation; the API is the source of truth:

open-image \
--model gpt-image-2 \
--extra '{"size":"1024x1024","quality":"high"}' \
--prompt "a lone surfer at dawn, Hokusai woodblock style"

open-image \
--model gpt-image-1 \
--extra '{"size":"1024x1024","output_format":"png","background":"transparent"}' \
--prompt "a minimalist cat icon on a transparent background"

If you pass a wrong key, the API error surfaces verbatim — exactly what you want for debugging. No wrapper in the way.
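Conceptually, --extra is a dict merge in front of the SDK call. Below is a sketch with a hypothetical build_params helper; two details are assumptions rather than documented behavior: a JSON value that is not an object is rejected, and --extra keys override model/prompt on conflict.

```python
import json
from typing import Any, Dict


def build_params(model: str, prompt: str, extra: str = "{}") -> Dict[str, Any]:
    """Merge the --extra JSON object into the images.generate keyword arguments."""
    parsed = json.loads(extra)  # malformed JSON raises json.JSONDecodeError
    if not isinstance(parsed, dict):
        raise SystemExit("ERROR: --extra must be a JSON object")
    params: Dict[str, Any] = {"model": model, "prompt": prompt}
    # No key checks on purpose: unknown or misspelled keys reach the API untouched.
    params.update(parsed)
    return params


# The SDK call would then be: openai.images.generate(**params)
```

Because nothing between the flag and the SDK inspects the keys, a typo like "qualty" travels all the way to the API, which is what makes its error message the one you see.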

Install

From PyPI (recommended)

pip install open-image

With pipx (isolated global command)

pipx install open-image

From source

git clone https://github.com/tvtdev94/open-image

cd open-image

pip install -e .

Setup

Set your OpenAI API key (must have image-generation credit):

# Option A — environment variable (recommended)

export OPENAI_API_KEY=sk-...

# Option B — per-call flag

open-image --api-key sk-... --prompt "..."
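The key lookup order (flag first, then environment) can be sketched as follows. resolve_api_key is a hypothetical name and exit code 1 is an assumption; the error text is the one the tool prints for a missing key.

```python
import os
import sys
from typing import Mapping, Optional


def resolve_api_key(flag_value: Optional[str], environ: Mapping[str, str] = os.environ) -> str:
    """Prefer --api-key, fall back to OPENAI_API_KEY, otherwise exit with an error."""
    key = flag_value or environ.get("OPENAI_API_KEY")
    if not key:
        print("ERROR: No API key. Set OPENAI_API_KEY env or pass --api-key.", file=sys.stderr)
        raise SystemExit(1)
    return key
```

Taking the environment as a parameter means the precedence can be checked without touching the real OPENAI_API_KEY.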

Flags

| Flag | Default | Purpose |
|---|---|---|
| --prompt | (none) | Inline prompt text |
| --prompt-file | (none) | Path to a file containing the prompt |
| --model | gpt-image-2 | Any OpenAI image model (gpt-image-2, gpt-image-1, dall-e-3, dall-e-2, …) |
| --extra | {} | JSON object forwarded to images.generate |
| --out-dir | ./output | Where to save PNGs (auto-created) |
| --api-key | $OPENAI_API_KEY | Override via flag if not in env |
| --keep | 50 | Keep only the N newest PNGs in --out-dir after saving; 0 disables pruning |
| --list-models | (none) | List known OpenAI image models with notes, then exit |
| --install-skill | (none) | Re-install the Claude Code skill at ~/.claude/skills/open-image/ (overwrites) |
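A sketch of what --keep pruning could look like. prune_pngs is hypothetical; it assumes "newest" means most recent modification time and that every *.png in --out-dir counts, neither of which the flag description spells out.

```python
from pathlib import Path
from typing import List


def prune_pngs(out_dir: Path, keep: int) -> List[Path]:
    """Delete all but the `keep` newest *.png files in out_dir; return the removed paths."""
    if keep <= 0:
        return []  # --keep 0 disables pruning
    pngs = sorted(out_dir.glob("*.png"), key=lambda p: p.stat().st_mtime, reverse=True)
    stale = pngs[keep:]
    for path in stale:
        path.unlink()
    return stale
```

Sorting by file name would also work for the timestamped names the tool writes, but modification time stays correct for PNGs saved within the same second.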

Output

./output/{YYYYMMDD-HHMMSS}-{uuid8}.png

One PNG per response.data item (so n=4 → four files). Absolute path(s) printed to stdout, one per line — friendly to xargs, fzf, wl-copy, whatever you pipe into.

open-image --prompt "a corgi" | tee -a log.txt

open-image --prompt "a corgi" | head -n1 | xargs -I{} open {} # macOS preview
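Saving is a base64 decode per response.data item plus the {YYYYMMDD-HHMMSS}-{uuid8} naming. save_b64_images is a hypothetical helper, not the package's code, and it assumes every item carries a b64_json payload.

```python
import base64
import uuid
from datetime import datetime
from pathlib import Path
from typing import Iterable, List


def save_b64_images(b64_items: Iterable[str], out_dir: Path) -> List[Path]:
    """Decode each base64 payload to {YYYYMMDD-HHMMSS}-{uuid8}.png; return absolute paths."""
    out_dir.mkdir(parents=True, exist_ok=True)  # --out-dir is auto-created
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    saved = []
    for b64 in b64_items:
        path = (out_dir / f"{stamp}-{uuid.uuid4().hex[:8]}.png").resolve()
        path.write_bytes(base64.b64decode(b64))
        saved.append(path)
    return saved


# With an SDK response, roughly:
#   for p in save_b64_images((d.b64_json for d in response.data), Path("output")):
#       print(p)  # one absolute path per line, ready for xargs / fzf
```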

Gallery

All generated by open-image with gpt-image-2:

- A close-up cinematic macro of a bee hovering over a lotus at sunrise.
- A bustling night market in a cyberpunk Hanoi alleyway.

Error handling

Every error path exits with a clear, actionable message:

- No API key → ERROR: No API key. Set OPENAI_API_KEY env or pass --api-key.
- --extra not valid JSON → parser error with column offset
- Empty prompt → ERROR: Empty prompt.
- API failure (auth, model access, invalid params) → API error string forwarded verbatim
- Unwritable --out-dir → PermissionError surfaced with the path
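One way to turn those failures into one-line messages from a single except block is a hypothetical format_error helper like the one below. The wording for the JSON and permission cases is illustrative, not copied from the tool.

```python
import json


def format_error(exc: BaseException) -> str:
    """Turn an exception into the one-line message printed before exiting."""
    if isinstance(exc, json.JSONDecodeError):
        # JSONDecodeError records where parsing stopped.
        return f"ERROR: --extra is not valid JSON: {exc.msg} (line {exc.lineno}, column {exc.colno})"
    if isinstance(exc, PermissionError):
        return f"ERROR: cannot write to {exc.filename}: {exc.strerror}"
    # Anything else (e.g. an SDK error) is shown as-is.
    return f"ERROR: {exc}"
```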

Models supported

The CLI is model-agnostic — --model accepts any string. These are the models known at write time; pass any future model ID without a code change.

| Model | Notes |
|---|---|
| gpt-image-2 | Default. Requires org verification on the OpenAI dashboard. Returns b64_json. |
| gpt-image-1 | Newer GPT image model. Supports input_fidelity, transparency, output_format. |
| dall-e-3 | n=1 only. Sizes: 1024x1024 / 1792x1024 / 1024x1792. quality: standard / hd. style: vivid / natural. Pass response_format=b64_json via --extra for offline storage. |
| dall-e-2 | n>1 supported. Sizes: 256x256 / 512x512 / 1024x1024. |

Run open-image --list-models to print this table at any time.

Claude Code integration

If you use Claude Code, open-image ships a Claude skill that teaches the agent how to use this CLI — no manual prompt setup.

- Auto-install: the first time you run any open-image command, the skill is silently installed at ~/.claude/skills/open-image/SKILL.md (only if ~/.claude/ exists; existing customizations are never overwritten).
- Re-install / update: after upgrading the package, refresh the skill content:

open-image --install-skill

Once installed, Claude Code knows when to call open-image, which models exist, how --extra works, and how to capture the stdout paths. If you don't use Claude Code, nothing happens — the auto-install gracefully no-ops when ~/.claude/ is absent.
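The install rules boil down to two guards. Here is a sketch with a hypothetical install_skill(home, content, force) helper and a placeholder SKILL_TEXT; whether --install-skill creates ~/.claude/ when it is missing is not stated, so this version simply does nothing in that case.

```python
from pathlib import Path


def install_skill(home: Path, content: str, force: bool = False) -> bool:
    """Write ~/.claude/skills/open-image/SKILL.md; return True if a file was written."""
    claude_dir = home / ".claude"
    if not claude_dir.is_dir():
        return False  # Claude Code not present: quietly do nothing
    target = claude_dir / "skills" / "open-image" / "SKILL.md"
    if target.exists() and not force:
        return False  # keep the user's existing (possibly customized) copy
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(content, encoding="utf-8")
    return True


# Auto-install on any run:  install_skill(Path.home(), SKILL_TEXT)
# --install-skill:          install_skill(Path.home(), SKILL_TEXT, force=True)
```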

Philosophy

Three principles, one file:

- YAGNI — no MCP server, no HTTP wrapper, no runtime plugins. The optional Claude Code skill is just markdown — Claude reads it, no daemon, no IPC. If your agent has a shell, it can use this.

- KISS — argparse + stdlib + one SDK call. Zero abstractions between you and the API.

- DRY —

  --extra means the tool never needs a new flag per new API param.

The whole tool fits in your head. When a future model adds a parameter, you already know how to use it.

License

MIT © 2026 tvtdev94

Download files

File details

Details for the file open_image-0.3.2.tar.gz.

File metadata

- Download URL: open_image-0.3.2.tar.gz
- Size: 11.9 kB
- Tags: Source
- Uploaded using Trusted Publishing? No
- Uploaded via: twine/6.2.0 CPython/3.11.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 087fd86791e8e9ef6c8843d2cb76f3b23c26042e738d4f287910c9d4bd8d7b13 |
| MD5 | d5d180235314dec39f40d43264283955 |
| BLAKE2b-256 | 624c59be28874b468dec9e33f5fc6c6fde8e0044a51c293f92668d79a1933a37 |

File details

Details for the file open_image-0.3.2-py3-none-any.whl.

File metadata

- Download URL: open_image-0.3.2-py3-none-any.whl
- Size: 12.3 kB
- Tags: Python 3
- Uploaded using Trusted Publishing? No
- Uploaded via: twine/6.2.0 CPython/3.11.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 52cbfe8ec6b3f9de5463d86c3b006c5083704985bff82c5924c147e9d41f69f5 |
| MD5 | b147a107da96cf9c254d782b0596d687 |
| BLAKE2b-256 | 7ee1a490abc76353370eb6852bcdaf91740edcc893205640445887b8574e0430 |