The terminal client for Ollama, OpenAI, Anthropic, and any pydantic-ai-supported provider.

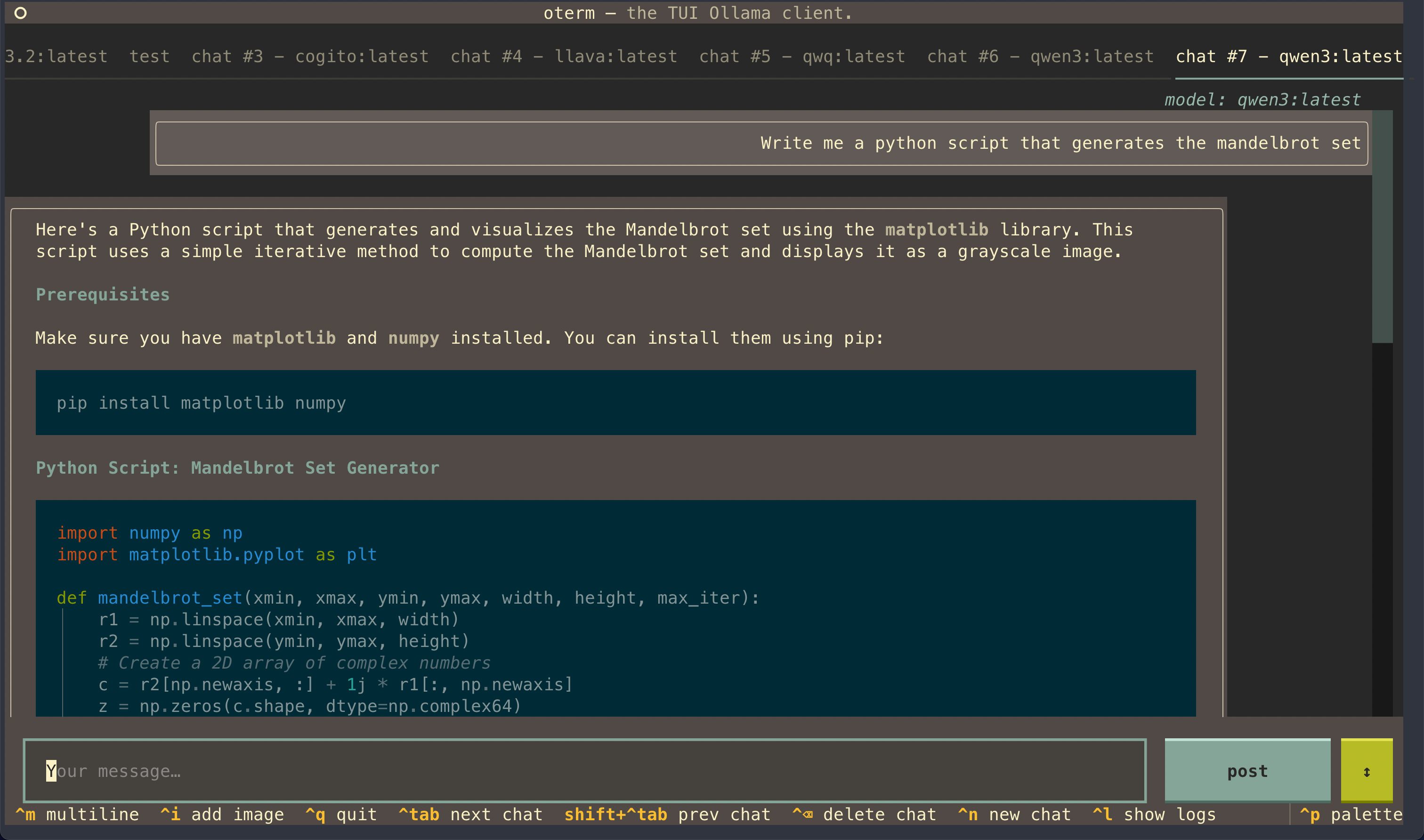

oterm

🚀 oterm is now multi-provider! Alongside Ollama, oterm drives OpenAI, Anthropic, Google (AI / Vertex), Groq, Mistral, Cohere, AWS Bedrock, DeepSeek, Cerebras, Grok, Hugging Face, and any OpenAI-compatible endpoint (vLLM, LM Studio, llama.cpp, OpenRouter, LiteLLM, …). See What's new below for the full set of changes.

Features

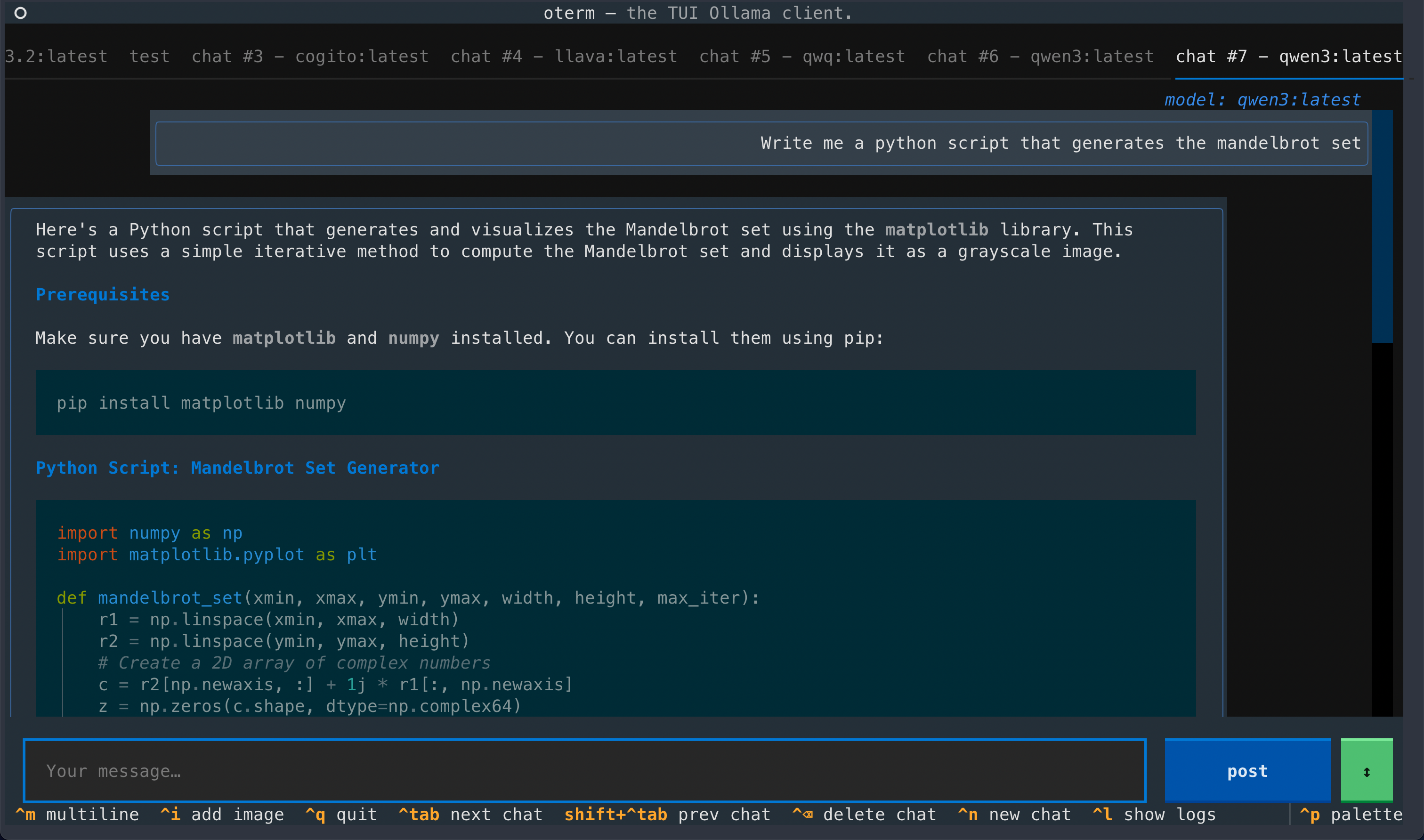

- intuitive and simple terminal UI: no need to run servers or frontends, just type `oterm` in your terminal.

- supports Linux, macOS, and Windows and most terminal emulators.

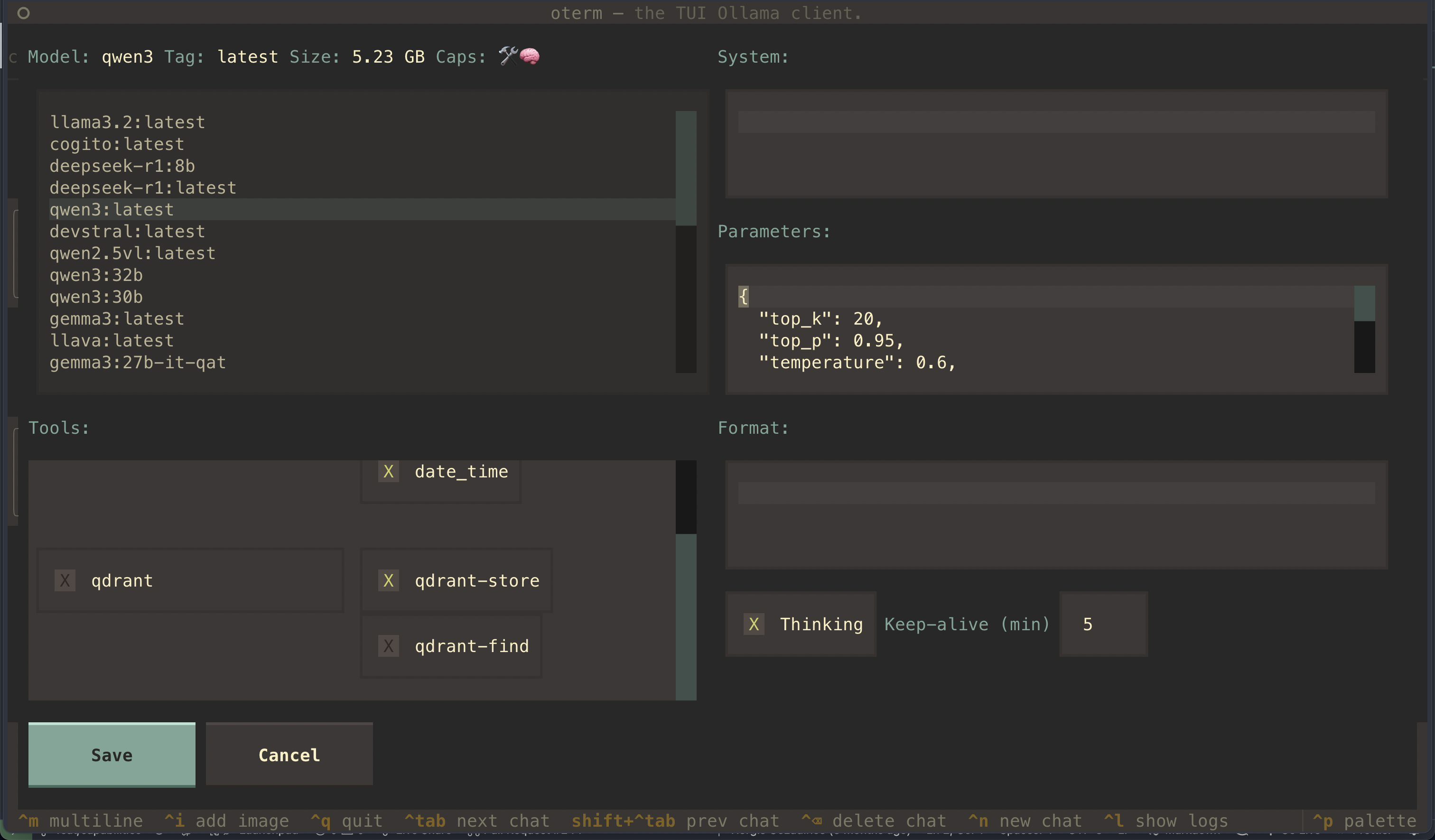

- multiple persistent chat sessions, stored together with system prompt & parameter customizations in sqlite.

- talks to Ollama, OpenAI, Anthropic, Google (AI / Vertex), Groq, Mistral, Cohere, AWS Bedrock, DeepSeek, Cerebras, Grok, Hugging Face, and any OpenAI-compatible endpoint — local (vLLM, LM Studio, llama.cpp, …) or hosted (OpenRouter, LiteLLM, …).

- tools: built-in (`shell`, `date_time`, `think`), custom Python plugins via entry points, and any Model Context Protocol (MCP) server.

- allows for easy customization of the model's system prompt and parameters.

Quick install

uvx oterm

See Installation for more details.
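After installation, a hosted provider shows up in the new-chat dropdown once its API key is set (see What's new). The sketch below illustrates that idea by checking a few conventional API-key environment variables. The variable list and the helper `configured_providers` are assumptions for illustration; this is not how `oterm` itself detects providers.

```python
import os
from collections.abc import Mapping

# Conventional API-key variable names for a few providers.
# Which variables oterm actually checks is an assumption here.
PROVIDER_ENV_VARS = {
    "openai": "OPENAI_API_KEY",
    "anthropic": "ANTHROPIC_API_KEY",
    "groq": "GROQ_API_KEY",
    "mistral": "MISTRAL_API_KEY",
}


def configured_providers(env: Mapping[str, str] = os.environ) -> list[str]:
    """Return providers whose API-key variable is set to a non-empty value."""
    return [name for name, var in PROVIDER_ENV_VARS.items() if env.get(var)]


if __name__ == "__main__":
    print(configured_providers())
```

Running this in the shell where you intend to start `oterm` is a quick way to confirm that the keys you exported are visible to child processes.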

Documentation

What's new

- Multi-provider, via pydantic-ai (breaking).

otermis no longer Ollama-only — it drives any pydantic-ai-supported provider: OpenAI, Anthropic, Google (AI / Vertex), Groq, Mistral, Cohere, AWS Bedrock, DeepSeek, Cerebras, Grok, Hugging Face, OpenAI-compatible endpoints (vLLM, LM Studio, llama.cpp, OpenRouter, LiteLLM, …), and Ollama. Set the matching API key and the provider appears in the new-chat dropdown. - Refreshed chat UI. Borderless accent-driven layout, auto-growing prompt, inline

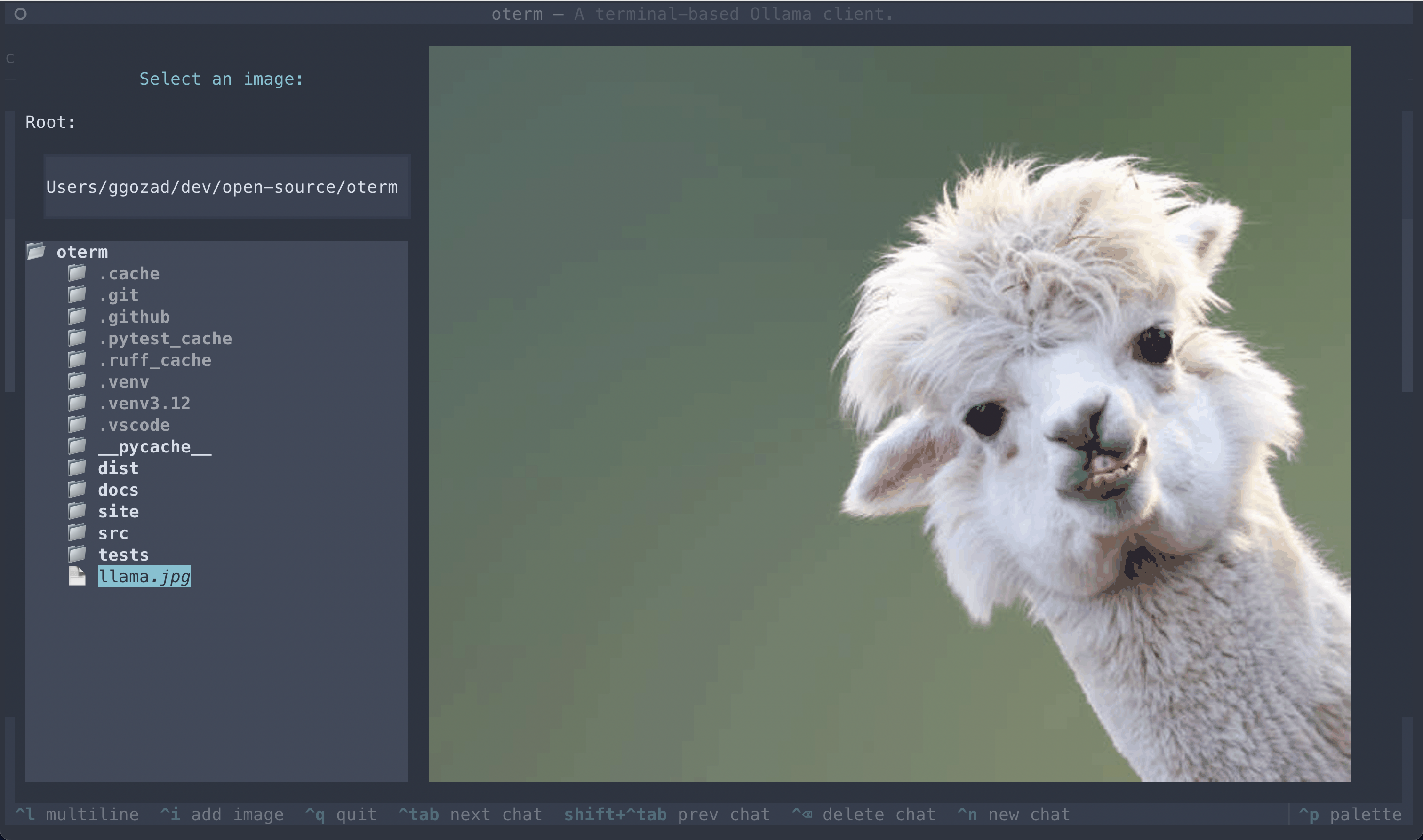

[Image #N]attachment tokens, a collapsing thinking section, and a live token-usage footer in place of the spinner. - Faster streaming. Markdown is now updated as deltas arrive instead of being re-rendered on every token, so long responses don't slow the terminal as they grow.

- MCP rewrite (breaking). The `mcpServers` config block adopts pydantic-ai's standard schema (compatible with Claude Desktop / Cursor). See docs/mcp for the full migration notes.
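To make the `mcpServers` layout concrete, here is a minimal sketch that parses a Claude Desktop-style config and lists the command line for each server. The `git` entry, the `CONFIG_TEXT` constant, and the helper `list_stdio_servers` are made up for illustration. The exact fields `oterm` accepts are described in docs/mcp, not here.

```python
import json

# Illustrative config in the Claude Desktop-style layout: a top-level
# "mcpServers" object mapping a server name to how it is launched.
# The "git" entry is a made-up example, not taken from oterm's docs.
CONFIG_TEXT = """
{
  "mcpServers": {
    "git": {
      "command": "uvx",
      "args": ["mcp-server-git"]
    }
  }
}
"""


def list_stdio_servers(config_text: str) -> dict[str, list[str]]:
    """Map each server name to the full command line that would start it."""
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})
    return {
        name: [spec["command"], *spec.get("args", [])]
        for name, spec in servers.items()
        if "command" in spec  # skip entries that are not stdio-launched
    }


if __name__ == "__main__":
    print(list_stdio_servers(CONFIG_TEXT))
```

A check like this can help when migrating an older config: any server missing from the result has no `command` field in the new layout.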

Screenshots

License

This project is licensed under the MIT License.

File details

Details for the file oterm-0.16.0.tar.gz.

File metadata

- Download URL: oterm-0.16.0.tar.gz

- Upload date:

- Size: 8.4 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `be2c79db9b4134a879ecc0c21d9fcbc06316fdac29cdd512989d7cdcad934afa` |
| MD5 | `e07812c3b69de4896205c99018099269` |
| BLAKE2b-256 | `7894c4037182321b67bbb08d609131f7b3331a59e53697317e6f12c0fea23082` |

File details

Details for the file oterm-0.16.0-py3-none-any.whl.

File metadata

- Download URL: oterm-0.16.0-py3-none-any.whl

- Upload date:

- Size: 50.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `8dc021504519f9201070c084ea050dd9d870f25b80a3ebe45329e6ddaa71d9b6` |
| MD5 | `8a2d144ae29f376ea95024183c1981ef` |
| BLAKE2b-256 | `8a51ea6864a905ada4589de77b710a92695f0cc061c95f004844e71c49d9e3bb` |