Automating calcium imaging analysis


photon-mosaic

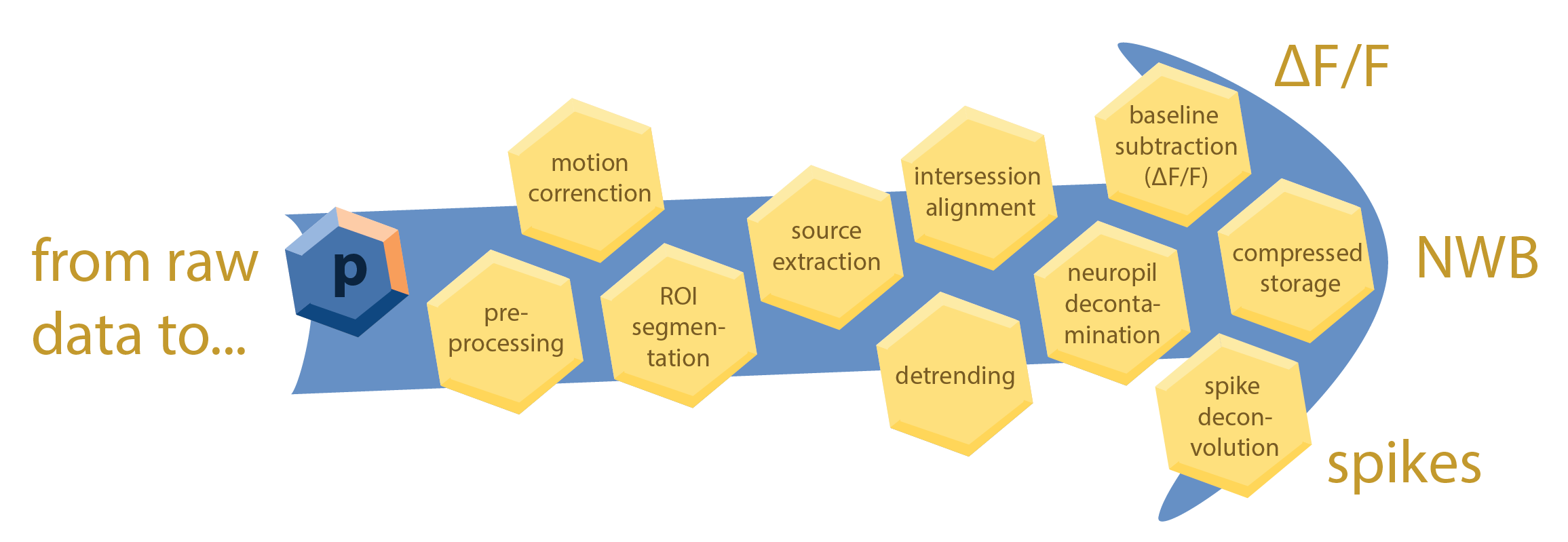

photon-mosaic is a Snakemake-based toolkit for processing multiphoton datasets. It orchestrates a curated collection of algorithms to transform your raw data (e.g., TIFF files) into analysis-ready outputs, such as ΔF/F traces, NWB files, or inferred spikes.

Each analysis step is integrated into an automated workflow, allowing you to chain preprocessing, registration, signal extraction, and post-processing steps into a single, reproducible pipeline. The design prioritizes usability for labs that process many imaging sessions and need to scale across an HPC cluster.

This is made possible by Snakemake, a workflow management system for defining and executing complex data processing pipelines. Snakemake builds a directed acyclic graph (DAG) of all the steps in your analysis, so each step runs only after the steps it depends on, and steps whose outputs already exist and are up to date are skipped rather than recomputed. photon-mosaic also includes a SLURM executor plugin for Snakemake to scale your analysis across an HPC cluster. To ensure consistency and reproducibility, photon-mosaic writes processed data according to the NeuroBlueprint data standard for organizing and storing multiphoton imaging data.
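To make the DAG idea concrete, here is a minimal sketch in plain Python. It is not photon-mosaic or Snakemake code: the step names are hypothetical labels for the stages described above, and the `plan` helper is an illustration only. It uses the standard-library `graphlib` module to order steps by their dependencies and drops steps that are marked as already done. Snakemake's real logic is richer (it works from output files and also reruns downstream steps when an upstream result changes), which this sketch deliberately ignores.

```python
from graphlib import TopologicalSorter

# Hypothetical step names based on the stages described above. Each key maps
# a step to the set of steps whose outputs it needs.
dependencies = {
    "preprocessing": set(),
    "registration": {"preprocessing"},
    "signal_extraction": {"registration"},
    "post_processing": {"signal_extraction"},
}


def plan(dependencies, cached):
    """Return steps in dependency order, skipping those already cached."""
    order = TopologicalSorter(dependencies).static_order()
    return [step for step in order if step not in cached]


# A fresh run schedules every step; a resumed run schedules only what is missing.
full_run = plan(dependencies, cached=set())
resumed_run = plan(dependencies, cached={"preprocessing", "registration"})
print(full_run)
print(resumed_run)
```

Because the example graph is a simple chain, the order is fully determined by the dependencies. In a branching graph several valid orders can exist, and a workflow manager is free to run independent steps in parallel.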

The goal of photon-mosaic is to provide a modular, extensible, and user-friendly framework for multiphoton data analysis that can be easily adapted to different experimental designs and analysis requirements. For each processing step, we aim to vet and integrate the best available open-source tools, providing sensible defaults tailored to the specific data type and experimental modality.

Roadmap

Current features

- Preprocessing: derotation and contrast enhancement (see photon_mosaic/preprocessing).

- Registration & source extraction using Suite2p.

- Cell detection / anatomical ROI extraction using Cellpose (v3 or v4, including Cellpose-SAM).

Planned additions

- Registration using NoRMCorre for non-rigid motion correction.

- ROI matching using ROICat for inter-session / inter-plane ROI matching.

- Neuropil subtraction / decontamination: methods from the AllenSDK and AST-model.

- Spike deconvolution: OASIS and CASCADE.

See the issues on GitHub and the project board for more details and to take part in planning. Please refer to our guidelines to understand how to contribute.

Installation

photon-mosaic requires Python 3.11 or 3.12.

conda create -n photon-mosaic python=3.12

conda activate photon-mosaic

pip install photon-mosaic

To install with developer tools (e.g., testing and linting):

pip install 'photon-mosaic[dev]'
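After installing, a quick sanity check can confirm that the active interpreter matches the supported versions and that the package is visible to it. The sketch below relies only on the standard library (`sys` and `importlib.metadata`). The helper names `python_is_supported` and `installed_version` are made up for this example and are not part of photon-mosaic.

```python
import sys
from importlib import metadata

# Supported interpreter versions, taken from the requirement stated above.
SUPPORTED = {(3, 11), (3, 12)}


def python_is_supported(version_info=sys.version_info):
    """Return True if the (major, minor) version is one of the supported ones."""
    return (version_info[0], version_info[1]) in SUPPORTED


def installed_version(dist_name="photon-mosaic"):
    """Return the installed version string, or None if the package is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


print("Python supported:", python_is_supported())
print("photon-mosaic version:", installed_version())
```

If the second line prints `None`, the package is not installed in the environment that is currently active, which usually means the conda environment was not activated before running `pip install`.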


File details

Details for the file photon_mosaic-0.2.2.tar.gz.

File metadata

- Download URL: photon_mosaic-0.2.2.tar.gz

- Upload date:

- Size: 39.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 949cd8e28ca3d46037f2cb83b5067119a2bd6f31a0f6d16cc83c927f9b749190 |
| MD5 | f6ddddf6712fc3a58c0497080069cbe8 |
| BLAKE2b-256 | 0977c358378fe60ad097dda08189f9c13c5c08ae3641b6c9cc04294915b35ce0 |

File details

Details for the file photon_mosaic-0.2.2-py3-none-any.whl.

File metadata

- Download URL: photon_mosaic-0.2.2-py3-none-any.whl

- Upload date:

- Size: 40.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0410b179dd6a5c334ec39fbff9b3a1e8c40eed642ed0f0a2ce065fd1695318aa |
| MD5 | ae91cb19f71687d94f8d20f620d56c24 |
| BLAKE2b-256 | 090cd5b11a6ecdf287ac4acb3240e852fe5edbd8562f2f56a75ab5a5ddb3511b |