High-performance, non-blocking profiler for Python web apps.


Profilis

A high-performance, non-blocking profiler for Python web applications.

Overview

Profilis provides drop-in observability across APIs, functions, and database queries with minimal performance impact. It's designed to be:

- Non-blocking: Async collection with configurable batching and backpressure handling

- Framework-agnostic: Flask, FastAPI, and Sanic, with optional ASGI middleware for any ASGI app

- Database-aware: SQLAlchemy (sync & async), MongoDB (PyMongo), Neo4j, and pyodbc

- Production-ready: Configurable sampling, error tracking, and multiple export formats
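The non-blocking collection described above follows a common pattern: callers push events onto a bounded queue, and a background thread exports them in batches. The stdlib-only sketch below illustrates that pattern under assumptions. `BatchingCollector`, its parameters, and its drop-when-full behaviour are hypothetical and are not Profilis's `AsyncCollector` implementation.

```python
import queue
import threading
from typing import Callable


class BatchingCollector:
    """Hypothetical sketch: callers enqueue without blocking; a worker thread exports batches."""

    def __init__(self, export: Callable[[list], None], queue_size: int = 1024,
                 batch_max: int = 64, flush_interval: float = 0.1) -> None:
        self._export = export
        self._q: queue.Queue = queue.Queue(maxsize=queue_size)
        self._batch_max = batch_max
        self._flush_interval = flush_interval
        self._stop = threading.Event()
        self.dropped = 0
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def enqueue(self, event: dict) -> None:
        # Never block the caller: when the queue is full, count the event as dropped.
        try:
            self._q.put_nowait(event)
        except queue.Full:
            self.dropped += 1

    def _drain(self) -> list:
        # Take up to batch_max events that are already queued.
        batch = []
        while len(batch) < self._batch_max:
            try:
                batch.append(self._q.get_nowait())
            except queue.Empty:
                break
        return batch

    def _flush_all(self) -> None:
        # Export non-empty batches until the queue is empty.
        while batch := self._drain():
            self._export(batch)

    def _run(self) -> None:
        # Event.wait returns False on timeout and True once close() sets the flag.
        while not self._stop.wait(self._flush_interval):
            self._flush_all()

    def close(self) -> None:
        # Stop the worker, then flush anything still queued on the calling thread.
        self._stop.set()
        self._worker.join()
        self._flush_all()


# Usage: five events, exported in batches of at most three.
batches: list = []
collector = BatchingCollector(batches.append, queue_size=8, batch_max=3, flush_interval=0.05)
for i in range(5):
    collector.enqueue({"event": i})
collector.close()
print(batches)
```

Because `enqueue` uses `put_nowait`, a slow exporter can only cause events to be dropped (counted in `dropped`). It never stalls the code that produces the events.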

Star This Repository

If you find Profilis helpful for your projects, please consider giving it a star! It helps others discover this tool and motivates continued development.

Features

- Request Profiling: Automatic HTTP request/response timing and status tracking

- Frameworks: Flask, FastAPI (ASGI middleware), and Sanic with built-in dashboard (Flask blueprint, FastAPI router, Sanic blueprint)

- Function Profiling: Decorator-based function timing with exception tracking

- Database Instrumentation: SQLAlchemy (sync & async), MongoDB (PyMongo), Neo4j, pyodbc with query/command monitoring

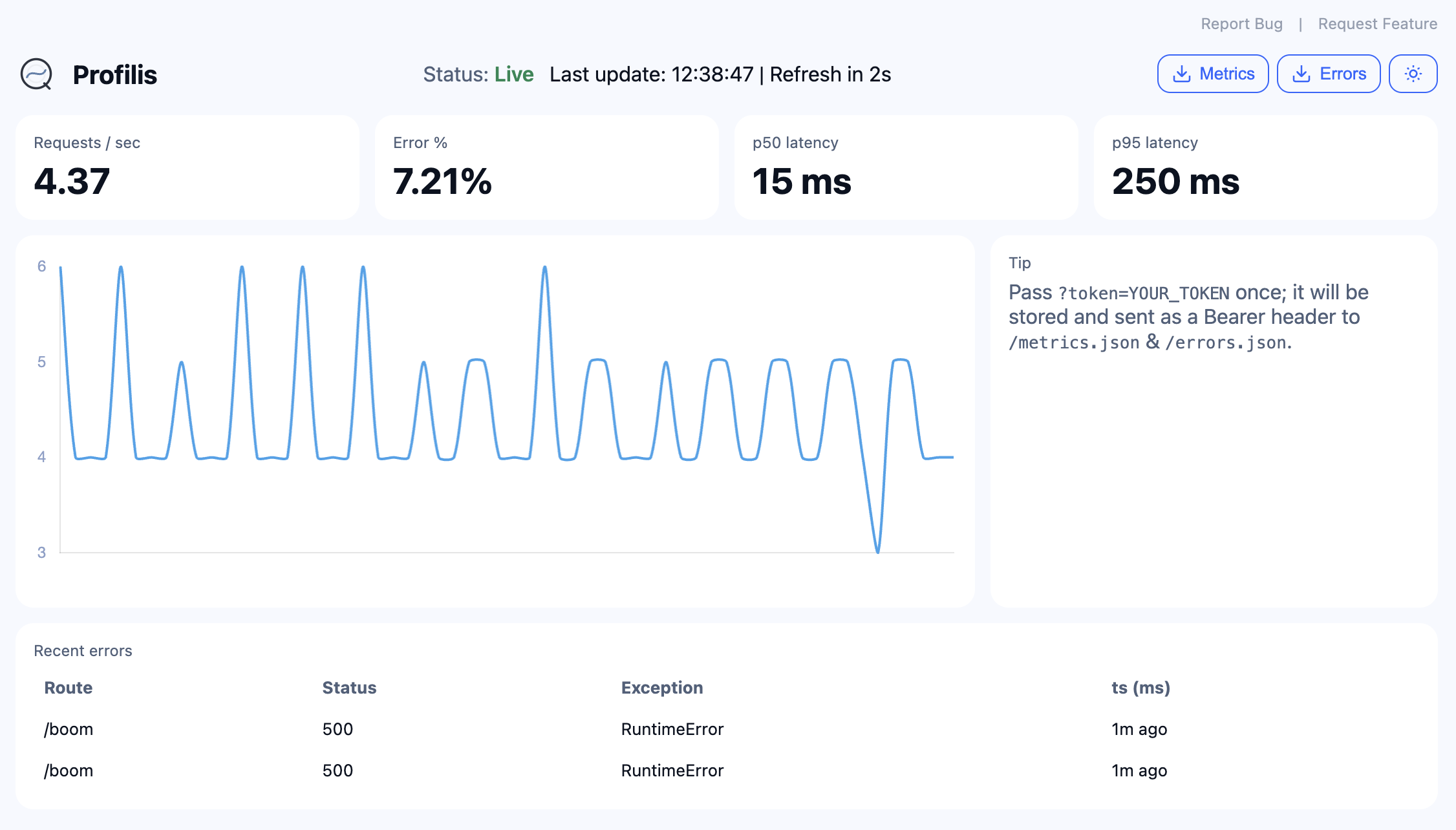

- Built-in UI: Real-time dashboard for monitoring and debugging

- Multiple Exporters: JSONL (with rotation), Console

- Runtime Context: Distributed tracing with trace/span ID management

- Configurable Sampling: Control data collection volume (Flask, ASGI, Sanic)

Installation

Install the core package with optional dependencies for your specific needs:

Option 1: Using pip with extras (Recommended)

# Core package only
pip install profilis

# With Flask support
pip install "profilis[flask]"

# With FastAPI support
pip install "profilis[fastapi]"

# With Sanic support
pip install "profilis[sanic]"

# With Flask and SQLAlchemy (database) support
pip install "profilis[flask,sqlalchemy]"

# With all integrations
pip install "profilis[all]"

Option 2: Using requirements files

# Minimal setup (core only)

pip install -r requirements-minimal.txt

# Flask integration

pip install -r requirements-flask.txt

# SQLAlchemy integration

pip install -r requirements-sqlalchemy.txt

# All integrations

pip install -r requirements-all.txt

Option 3: Manual installation

# Core dependencies
pip install "typing_extensions>=4.0"

# Flask support
pip install "flask[async]>=3.0"

# FastAPI support
pip install "fastapi>=0.110" "starlette>=0.37" "httpx>=0.24.0"

# Sanic support
pip install "sanic>=23.0"

# SQLAlchemy support
pip install "sqlalchemy>=2.0" aiosqlite greenlet

# Performance optimization
pip install "orjson>=3.8"

Quick Start

Flask Integration

from flask import Flask
from profilis.flask.adapter import ProfilisFlask
from profilis.exporters.jsonl import JSONLExporter
from profilis.core.async_collector import AsyncCollector

# Setup exporter and collector
exporter = JSONLExporter(dir="./logs", rotate_bytes=1024*1024, rotate_secs=3600)
collector = AsyncCollector(exporter, queue_size=2048, batch_max=128, flush_interval=0.1)

# Create Flask app and integrate Profilis
app = Flask(__name__)
profilis = ProfilisFlask(
    app,
    collector=collector,
    exclude_routes=["/health", "/metrics"],
    sample=1.0,  # 100% sampling
)

@app.route('/api/users')
def get_users():
    return {"users": ["alice", "bob"]}

# Visit /_profilis for the dashboard (if you mount the UI blueprint)

if __name__ == "__main__":
    app.run(debug=True)
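Flask apps are WSGI applications, so the core of request timing can be illustrated with a plain WSGI middleware that wraps the app, captures the status passed to `start_response`, and measures the call. This is a hypothetical, stdlib-only sketch of that general technique. The `TimingMiddleware` name, the `record` callback, and the default of 500 when no status is sent are assumptions, not how `ProfilisFlask` is implemented.

```python
import time
from typing import Callable, Iterable


class TimingMiddleware:
    """Hypothetical WSGI middleware: records route, status code, and duration per request."""

    def __init__(self, app: Callable, record: Callable[[dict], None],
                 exclude: Iterable[str] = ()) -> None:
        self.app = app
        self.record = record
        self.exclude = set(exclude)

    def __call__(self, environ: dict, start_response: Callable):
        path = environ.get("PATH_INFO", "")
        if path in self.exclude:
            return self.app(environ, start_response)
        captured = {}

        def capturing_start_response(status: str, headers: list, exc_info=None):
            # WSGI status strings look like "200 OK"; keep the numeric code.
            captured["status"] = int(status.split(" ", 1)[0])
            return start_response(status, headers, exc_info)

        start = time.perf_counter_ns()
        try:
            # Note: this times the app call, not the streaming of the response body.
            return self.app(environ, capturing_start_response)
        finally:
            self.record({"route": path,
                         "status": captured.get("status", 500),  # assume 500 if none was sent
                         "dur_ns": time.perf_counter_ns() - start})


# Usage with a tiny WSGI app and a stub start_response.
def hello_app(environ, start_response):
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


def noop_start_response(status, headers, exc_info=None):
    return lambda data: None


events: list = []
wrapped = TimingMiddleware(hello_app, events.append, exclude=["/health"])
body = wrapped({"PATH_INFO": "/api/users"}, noop_start_response)
wrapped({"PATH_INFO": "/health"}, noop_start_response)
print(body, events)
```

With a real Flask app, the usual way to add WSGI middleware is `app.wsgi_app = TimingMiddleware(app.wsgi_app, events.append)`.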

FastAPI Integration

from fastapi import FastAPI
from profilis.fastapi.adapter import instrument_fastapi
from profilis.fastapi.ui import make_ui_router
from profilis.exporters.jsonl import JSONLExporter
from profilis.core.async_collector import AsyncCollector
from profilis.core.emitter import Emitter
from profilis.core.stats import StatsStore

exporter = JSONLExporter(dir="./logs", rotate_bytes=1024*1024, rotate_secs=3600)
collector = AsyncCollector(exporter, queue_size=2048, batch_max=128, flush_interval=0.1)
emitter = Emitter(collector)
stats = StatsStore()

app = FastAPI()
instrument_fastapi(app, emitter, route_excludes=["/profilis"])
app.include_router(make_ui_router(stats, prefix="/profilis"))

@app.get("/api/users")
async def get_users():
    return {"users": ["alice", "bob"]}

# Run with: uvicorn your_module:app --reload
# Visit http://localhost:8000/profilis for the dashboard
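The ASGI middleware mentioned in the overview rests on a standard ASGI technique: wrap the app, intercept the `http.response.start` message to read the status code, and time the call. The stdlib-only sketch below shows that technique. `ASGITimingMiddleware`, the `record` callback, and the default status of 500 are hypothetical choices, not Profilis's implementation.

```python
import asyncio
import time
from typing import Awaitable, Callable

Message = dict
Receive = Callable[[], Awaitable[Message]]
Send = Callable[[Message], Awaitable[None]]


class ASGITimingMiddleware:
    """Hypothetical ASGI middleware: records route, status code, and duration per HTTP request."""

    def __init__(self, app: Callable[..., Awaitable[None]], record: Callable[[dict], None]) -> None:
        self.app = app
        self.record = record

    async def __call__(self, scope: dict, receive: Receive, send: Send) -> None:
        if scope["type"] != "http":
            # Lifespan and websocket traffic pass through untouched.
            await self.app(scope, receive, send)
            return
        status = 500  # assumed if the app fails before starting a response

        async def send_wrapper(message: Message) -> None:
            nonlocal status
            if message["type"] == "http.response.start":
                status = message["status"]
            await send(message)

        start = time.perf_counter_ns()
        try:
            await self.app(scope, receive, send_wrapper)
        finally:
            self.record({"route": scope["path"], "status": status,
                         "dur_ns": time.perf_counter_ns() - start})


# Usage with a bare ASGI app and stub receive/send callables.
async def demo_app(scope, receive, send):
    await send({"type": "http.response.start", "status": 201, "headers": []})
    await send({"type": "http.response.body", "body": b"created"})


async def main() -> tuple:
    events: list = []
    sent: list = []

    async def receive() -> Message:
        return {"type": "http.request", "body": b"", "more_body": False}

    async def send(message: Message) -> None:
        sent.append(message)

    app = ASGITimingMiddleware(demo_app, events.append)
    await app({"type": "http", "path": "/api/users"}, receive, send)
    return events, sent


events, sent = asyncio.run(main())
print(events[0]["status"], len(sent))
```

In a Starlette or FastAPI app, a class with this constructor shape should be attachable with `app.add_middleware(ASGITimingMiddleware, record=events.append)`.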

Function Profiling

from profilis.decorators.profile import profile_function
from profilis.core.emitter import Emitter
from profilis.exporters.console import ConsoleExporter
from profilis.core.async_collector import AsyncCollector

# Setup profiling
exporter = ConsoleExporter(pretty=True)
collector = AsyncCollector(exporter, queue_size=128, flush_interval=0.2)
emitter = Emitter(collector)

@profile_function(emitter)
def expensive_calculation(n: int) -> int:
    """This function will be automatically profiled."""
    result = sum(i * i for i in range(n))
    return result

@profile_function(emitter)
async def async_operation(data: list) -> list:
    """Async functions are also supported."""
    processed = [item * 2 for item in data]
    return processed

# Use the profiled functions
result = expensive_calculation(1000)

# Close the collector to flush remaining events before exiting
collector.close()
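Decorator-based profiling of both sync and async functions generally comes down to wrapping each call with a monotonic clock and noting whether it raised. The stdlib-only sketch below shows that pattern. The `timed` decorator, the `record` callback, and the event fields are hypothetical names and are not the `profile_function` implementation.

```python
import asyncio
import functools
import inspect
import time
from typing import Callable, Optional


def timed(record: Callable[[dict], None]):
    """Hypothetical decorator factory: records name, duration, and exception type per call."""
    def decorator(fn: Callable) -> Callable:
        def emit(start: int, error: Optional[BaseException]) -> None:
            record({"fn": fn.__name__,
                    "dur_ns": time.perf_counter_ns() - start,
                    "error": type(error).__name__ if error is not None else None})

        if inspect.iscoroutinefunction(fn):
            @functools.wraps(fn)
            async def async_wrapper(*args, **kwargs):
                start = time.perf_counter_ns()
                try:
                    result = await fn(*args, **kwargs)
                except BaseException as exc:
                    emit(start, exc)  # record the failure, then re-raise unchanged
                    raise
                emit(start, None)
                return result
            return async_wrapper

        @functools.wraps(fn)
        def sync_wrapper(*args, **kwargs):
            start = time.perf_counter_ns()
            try:
                result = fn(*args, **kwargs)
            except BaseException as exc:
                emit(start, exc)
                raise
            emit(start, None)
            return result
        return sync_wrapper
    return decorator


# Usage
events: list = []


@timed(events.append)
def expensive_calculation(n: int) -> int:
    return sum(i * i for i in range(n))


@timed(events.append)
async def async_operation(data: list) -> list:
    return [item * 2 for item in data]


@timed(events.append)
def failing() -> None:
    raise ValueError("boom")


total = expensive_calculation(1000)
doubled = asyncio.run(async_operation([1, 2, 3]))
try:
    failing()
except ValueError:
    pass
print(total, doubled, [(e["fn"], e["error"]) for e in events])
```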

Manual Event Emission

from profilis.core.emitter import Emitter
from profilis.exporters.jsonl import JSONLExporter
from profilis.core.async_collector import AsyncCollector
from profilis.runtime import use_span, span_id

# Setup
exporter = JSONLExporter(dir="./logs")
collector = AsyncCollector(exporter)
emitter = Emitter(collector)

# Create a trace context
with use_span(trace_id=span_id()):
    # Emit custom events
    emitter.emit_req("/api/custom", 200, dur_ns=15_000_000)  # 15ms
    emitter.emit_fn("custom_function", dur_ns=5_000_000)  # 5ms
    emitter.emit_db("SELECT * FROM users", dur_ns=8_000_000, rows=100)  # 8ms

# Close collector to flush remaining events
collector.close()

Built-in Dashboard

The dashboard is available for each framework:

- Flask: make_ui_blueprint(stats, ui_prefix="/_profilis") → app.register_blueprint(ui_bp)
- FastAPI: make_ui_router(stats, prefix="/profilis") → app.include_router(router)
- Sanic: make_ui_blueprint(stats, ui_prefix="/profilis") → app.blueprint(bp)

# Example: Flask
from flask import Flask
from profilis.flask.ui import make_ui_blueprint
from profilis.core.stats import StatsStore

app = Flask(__name__)
stats = StatsStore()
ui_bp = make_ui_blueprint(stats, ui_prefix="/_profilis")
app.register_blueprint(ui_bp)

# Visit http://localhost:5000/_profilis
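This section does not say what the dashboard computes from `StatsStore`. As an assumption-labelled sketch of the kind of aggregation a request dashboard typically shows, the hypothetical `RouteStats` class below keeps per-route durations and reports call counts, server-error counts, and nearest-rank latency percentiles. Treating only 5xx responses as errors is also an assumption.

```python
import math
from collections import defaultdict


class RouteStats:
    """Hypothetical per-route aggregator: call counts, 5xx errors, and latency percentiles."""

    def __init__(self) -> None:
        self._durations: defaultdict = defaultdict(list)
        self._errors: defaultdict = defaultdict(int)

    def record(self, route: str, dur_ns: int, status: int) -> None:
        self._durations[route].append(dur_ns)
        if status >= 500:  # assumption: only server errors count as errors
            self._errors[route] += 1

    def summary(self, route: str) -> dict:
        data = sorted(self._durations.get(route, []))
        if not data:
            return {"count": 0, "errors": 0, "p50_ms": None, "p95_ms": None}

        def pct(p: float) -> float:
            # Nearest-rank percentile: smallest sample with at least p% of samples at or below it.
            rank = max(1, math.ceil(p / 100 * len(data)))
            return data[rank - 1] / 1e6  # ns -> ms

        return {"count": len(data), "errors": self._errors.get(route, 0),
                "p50_ms": pct(50), "p95_ms": pct(95)}


stats = RouteStats()
for ms in [10, 12, 11, 50, 13, 9, 14, 10, 200, 12]:
    stats.record("/api/users", ms * 1_000_000, 200)
stats.record("/api/users", 30 * 1_000_000, 503)
print(stats.summary("/api/users"))
```

A single slow request (200 ms) dominates p95 here while leaving p50 at 12 ms. This is why dashboards usually show both percentiles.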

Advanced Usage

Custom Exporters

from profilis.core.async_collector import AsyncCollector
from profilis.exporters.base import BaseExporter

class CustomExporter(BaseExporter):
    def export(self, events: list[dict]) -> None:
        for event in events:
            # Custom export logic
            print(f"Custom export: {event}")

# Use custom exporter
exporter = CustomExporter()
collector = AsyncCollector(exporter)
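As a further, hypothetical example of custom export logic, the class below keeps only slow events and writes them as JSON lines to any text stream. It does not subclass `BaseExporter`, so it runs standalone. In a real project you would subclass `BaseExporter` as in the example above. The `dur_ns` field name is borrowed from the manual emission example, while the `kind` field and the threshold parameter are assumptions about the event shape.

```python
import io
import json
from typing import TextIO


class SlowEventExporter:
    """Hypothetical exporter: writes only events at or above a duration threshold as JSON lines."""

    def __init__(self, stream: TextIO, min_dur_ns: int = 10_000_000) -> None:
        self.stream = stream
        self.min_dur_ns = min_dur_ns

    def export(self, events: list) -> None:
        for event in events:
            # Events without a duration are treated as instantaneous and skipped.
            if event.get("dur_ns", 0) >= self.min_dur_ns:
                self.stream.write(json.dumps(event, sort_keys=True) + "\n")
        self.stream.flush()


buffer = io.StringIO()
exporter = SlowEventExporter(buffer, min_dur_ns=10_000_000)  # 10 ms threshold
exporter.export([
    {"kind": "req", "route": "/api/custom", "dur_ns": 15_000_000},
    {"kind": "fn", "fn": "custom_function", "dur_ns": 5_000_000},
])
print(buffer.getvalue(), end="")
```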

Runtime Context Management

from profilis.runtime import use_span, span_id, get_trace_id, get_span_id

# Create distributed trace context
with use_span(trace_id="trace-123", span_id="span-456"):
    current_trace = get_trace_id()  # "trace-123"
    current_span = get_span_id()  # "span-456"

    # Nested spans inherit trace context
    with use_span(span_id="span-789"):
        nested_span = get_span_id()  # "span-789"
        parent_trace = get_trace_id()  # "trace-123"
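Nested span handling like this is commonly built on `contextvars`, which keeps per-request state correct across threads and asyncio tasks. The sketch below shows that approach under the assumption that Profilis works similarly. `use_span_sketch`, `current_trace_id`, and `current_span_id` are hypothetical stand-ins for `use_span`, `get_trace_id`, and `get_span_id`, not their actual implementation.

```python
import contextvars
import uuid
from contextlib import contextmanager
from typing import Iterator, Optional

_trace_id = contextvars.ContextVar("trace_id", default=None)
_span_id = contextvars.ContextVar("span_id", default=None)


def new_id() -> str:
    return uuid.uuid4().hex[:16]


def current_trace_id() -> Optional[str]:
    return _trace_id.get()


def current_span_id() -> Optional[str]:
    return _span_id.get()


@contextmanager
def use_span_sketch(trace_id: Optional[str] = None, span_id: Optional[str] = None) -> Iterator[None]:
    # Inherit the enclosing trace unless a new one is given; mint a span id if none is given.
    trace_token = _trace_id.set(trace_id or _trace_id.get() or new_id())
    span_token = _span_id.set(span_id or new_id())
    try:
        yield
    finally:
        # Restore the outer context on exit, even if the block raised.
        _span_id.reset(span_token)
        _trace_id.reset(trace_token)


with use_span_sketch(trace_id="trace-123", span_id="span-456"):
    with use_span_sketch(span_id="span-789"):
        inner = (current_trace_id(), current_span_id())
    outer = (current_trace_id(), current_span_id())
after = (current_trace_id(), current_span_id())

with use_span_sketch():
    auto_trace = current_trace_id()  # a fresh trace id is minted at the root

print(inner, outer, after, auto_trace)
```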

Performance Tuning

from profilis.core.async_collector import AsyncCollector

# `exporter` is any exporter instance, e.g. the JSONLExporter from the examples above

# High-throughput configuration
collector = AsyncCollector(
    exporter,
    queue_size=8192,  # Large queue for high concurrency
    batch_max=256,  # Larger batches for efficiency
    flush_interval=0.05,  # More frequent flushing
    drop_oldest=True,  # Drop events under backpressure
)

# Low-latency configuration
collector = AsyncCollector(
    exporter,
    queue_size=512,  # Smaller queue for lower latency
    batch_max=32,  # Smaller batches for faster processing
    flush_interval=0.01,  # Very frequent flushing
    drop_oldest=False,  # Don't drop events
)
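With `drop_oldest=True`, a full queue makes room for new events by discarding the oldest ones. The hypothetical `DropOldestBuffer` below sketches that policy with `collections.deque(maxlen=...)`. It illustrates the semantics only and is not the collector's code. What `drop_oldest=False` does internally is not covered here.

```python
from collections import deque
from typing import Any


class DropOldestBuffer:
    """Hypothetical sketch of drop-oldest backpressure on a bounded buffer."""

    def __init__(self, capacity: int) -> None:
        # deque(maxlen=...) silently discards from the left when an append overflows it.
        self.items: deque = deque(maxlen=capacity)
        self.dropped = 0

    def put(self, event: Any) -> None:
        if len(self.items) == self.items.maxlen:
            self.dropped += 1  # the append below evicts the oldest event
        self.items.append(event)


buf = DropOldestBuffer(capacity=3)
for i in range(5):
    buf.put({"seq": i})
print([e["seq"] for e in buf.items], buf.dropped)  # → [2, 3, 4] 2
```

The trade-off is that producers never wait and the buffer always holds the most recent events, at the cost of silently losing older ones during bursts.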

Configuration

Environment Variables

# Note: Environment variable support is planned for future releases

# Currently, all configuration is done programmatically

Sampling Strategies

# Random sampling
profilis = ProfilisFlask(app, collector=collector, sample=0.1)  # 10% of requests

# Route exclusion: these routes are never profiled; all others are sampled at 100%
profilis = ProfilisFlask(
    app,
    collector=collector,
    exclude_routes=["/health", "/metrics", "/static"],
    sample=1.0,
)
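The two strategies combine into one per-request decision: excluded routes are never recorded, and the remaining requests are kept with probability `sample`. The sketch below shows that decision. The `should_record` name and the prefix matching (where an excluded route also covers its sub-paths) are assumptions, and the adapters may match routes differently.

```python
import random
from typing import Iterable, Optional


def should_record(path: str, sample: float, exclude_routes: Iterable[str],
                  rng: Optional[random.Random] = None) -> bool:
    """Hypothetical sampling decision for one request."""
    # Assumption: an excluded route also covers its sub-paths (e.g. /static/app.js).
    for route in exclude_routes:
        if path == route or path.startswith(route.rstrip("/") + "/"):
            return False
    if sample >= 1.0:
        return True
    # Keep the request with probability `sample`.
    return (rng or random).random() < sample


rng = random.Random(42)  # seeded so the run is reproducible
kept = sum(should_record("/api/users", 0.1, ["/health"], rng) for _ in range(10_000))
print(kept)  # roughly 1,000 of 10,000 requests
```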

Exporters

JSONL Exporter

from profilis.exporters.jsonl import JSONLExporter

# With rotation
exporter = JSONLExporter(
    dir="./logs",
    rotate_bytes=1024*1024,  # 1 MiB per file
    rotate_secs=3600,  # Rotate every hour
)
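With both limits set, a new file starts when the current one would exceed `rotate_bytes` or has been open for `rotate_secs`, whichever happens first. The stdlib-only sketch below implements that rule. The `RotatingJSONLWriter` class and its `events-NNNN.jsonl` naming scheme are hypothetical and are not JSONLExporter's internals.

```python
import json
import os
import tempfile
import time


class RotatingJSONLWriter:
    """Hypothetical JSONL writer that starts a new file when either limit is reached."""

    def __init__(self, directory: str, rotate_bytes: int, rotate_secs: float) -> None:
        os.makedirs(directory, exist_ok=True)
        self.directory = directory
        self.rotate_bytes = rotate_bytes
        self.rotate_secs = rotate_secs
        self._index = 0
        self._file = None
        self._open_next()

    def _open_next(self) -> None:
        if self._file is not None:
            self._file.close()
        path = os.path.join(self.directory, f"events-{self._index:04d}.jsonl")
        self._index += 1
        self._file = open(path, "wb")
        self._opened_at = time.monotonic()
        self._written = 0

    def write(self, event: dict) -> None:
        line = (json.dumps(event) + "\n").encode("utf-8")
        # Size limit: rotate before the line would push the file past rotate_bytes
        # (a file always gets at least one line, even if that line alone is larger).
        too_big = self._written > 0 and self._written + len(line) > self.rotate_bytes
        # Age limit: rotate once the current file has been open for rotate_secs.
        too_old = time.monotonic() - self._opened_at >= self.rotate_secs
        if too_big or too_old:
            self._open_next()
        self._file.write(line)
        self._written += len(line)

    def close(self) -> None:
        self._file.close()


with tempfile.TemporaryDirectory() as tmp:
    writer = RotatingJSONLWriter(tmp, rotate_bytes=100, rotate_secs=3600)
    for i in range(10):
        writer.write({"seq": i, "route": "/api/users"})  # 34 bytes per line
    writer.close()
    files = sorted(os.listdir(tmp))
    sizes = [os.path.getsize(os.path.join(tmp, name)) for name in files]
print(files, sizes)
```

With a 100-byte limit and 34-byte lines, each file holds two lines (68 bytes), so ten events produce five files.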

Console Exporter

from profilis.exporters.console import ConsoleExporter

# Pretty-printed output for development

exporter = ConsoleExporter(pretty=True)

# Compact output for production

exporter = ConsoleExporter(pretty=False)

Performance Characteristics

- Event Creation: ≤15µs per event

- Memory Overhead: ~100 bytes per event

- Throughput: 100K+ events/second on modern hardware

- Latency: Sub-millisecond collection overhead
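These figures depend on hardware and workload. To get a rough baseline on your own machine, you can time event construction with `timeit`. The sketch below only measures building a plain dict (the `make_event` helper and its fields are hypothetical stand-ins for the real event structure). Treat the result as a lower-bound comparison, not a reproduction of the numbers above.

```python
import time
import timeit


def make_event() -> dict:
    # Hypothetical event shape, loosely modelled on the manual emission example.
    return {"kind": "REQ", "route": "/api/users", "status": 200,
            "ts_ns": time.time_ns(), "dur_ns": 15_000_000}


def per_event_us(number: int = 100_000, repeat: int = 5) -> float:
    """Best-of-`repeat` average cost of one make_event() call, in microseconds."""
    # Taking the minimum filters out runs disturbed by other processes.
    best = min(timeit.repeat(make_event, number=number, repeat=repeat))
    return best / number * 1e6


print(f"~{per_event_us():.3f} µs per event")
```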

Documentation

Full documentation is available at: Profilis Docs

Docs are written in Markdown under docs/ and built with MkDocs Material.

Available Documentation

- Getting Started - Quick setup and basic usage

- Configuration - Tuning and customization

- Flask Integration - Flask adapter

- FastAPI Integration - FastAPI/ASGI adapter

- Sanic Integration - Sanic adapter

- SQLAlchemy Support - Database instrumentation

- MongoDB · Neo4j · pyodbc - Additional databases

- JSONL Exporter - Log file output

- Built-in UI - Dashboard documentation

- Architecture - System design

To preview locally:

pip install mkdocs mkdocs-material mkdocs-mermaid2-plugin

mkdocs serve

Development

Setting up the project

- Clone and enter the repo

  git clone https://github.com/ankan97dutta/profilis.git
  cd profilis

- Create a virtual environment and install in editable mode with dev dependencies

  python -m venv .venv
  source .venv/bin/activate  # Windows: .venv\Scripts\activate
  pip install -e ".[dev]"

- Install pre-commit hooks (optional but recommended)

  pre-commit install

- Run the test suite

  pytest

Use pytest -v for verbose output, pytest path/to/test_file.py to run a single file, or pytest -k "test_name" to run tests matching a pattern. For coverage, run pytest --cov=profilis --cov-report=term-missing.

Working with TDD

We encourage test-driven development (TDD):

- Red — Write a failing test that describes the behaviour you want.

- Green — Implement the minimum code to make the test pass.

- Refactor — Improve the implementation while keeping tests green.

Run tests frequently (e.g. pytest or pytest tests/ -q) as you work. See Development Guidelines for the full TDD workflow and test layout.
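As an illustration of the red-green cycle, the tests below would be written first, fail because the helper does not yet exist (red), and then pass once the minimal implementation is added (green). The `format_duration` helper is hypothetical and not part of Profilis. pytest collects `test_*` functions like these automatically from test files.

```python
def format_duration(dur_ns: int) -> str:
    """Green step: the minimal implementation that satisfies the tests below."""
    if dur_ns < 1_000_000:
        return f"{dur_ns / 1_000:.1f}µs"
    return f"{dur_ns / 1_000_000:.1f}ms"


def test_formats_milliseconds() -> None:
    # Red step: written before format_duration existed, so it failed first.
    assert format_duration(15_000_000) == "15.0ms"


def test_formats_microseconds() -> None:
    assert format_duration(15_000) == "15.0µs"
```

A refactor step might then add a seconds branch, with a new failing test written first.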

Branching and commits

- See Contributing and Development Guidelines.

- Branch strategy: trunk-based (feat/*, fix/*, perf/*, chore/*).

- Commits follow Conventional Commits.

Roadmap

See Profilis – v0 Roadmap Project and docs/overview/roadmap.md.

License

Contact

- Email: connect@ankandutta.in

- Website: https://www.ankandutta.in

- Blog: Signals & Noise

- GitHub: @ankan97dutta

Feel free to reach out if you have questions, suggestions, or would like to contribute to Profilis!


File details

Details for the file profilis-0.3.0.tar.gz.

File metadata

- Download URL: profilis-0.3.0.tar.gz

- Upload date:

- Size: 56.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c83e58c54d24af22215b15e323a8140b831fdd0846efc29ffb7fb00e665b02a4 |
| MD5 | 03ec2ac4d29fd575244da18ef28082a4 |
| BLAKE2b-256 | a3600c86ad4d0a5df73e05cff6e57a8be905975fee298ace7570d557ea27d581 |

File details

Details for the file profilis-0.3.0-py3-none-any.whl.

File metadata

- Download URL: profilis-0.3.0-py3-none-any.whl

- Upload date:

- Size: 47.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.13.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b9645bad1497aafd32d5de0faccfa1659100e160246b37417a220de8730a035b |
| MD5 | be4e14457e7e255f0c45e707ebe5f138 |
| BLAKE2b-256 | 8ebcf03a76d83d2edb6237091eb23a3326d6fe3ae387aac19e210cf6c8ceed90 |