A ``py.test`` fixture for benchmarking code.


A py.test fixture for benchmarking code. It will group the tests into rounds that are calibrated to the chosen timer. See calibration and FAQ.
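To make the idea of rounds "calibrated to the chosen timer" more concrete, here is a minimal sketch of one way such calibration could work. It is not the plugin's actual algorithm: the helper names `timer_resolution` and `calibrate` and the `min_ticks` parameter are hypothetical, chosen only for illustration. The assumption is that a round should run the function enough times to span several timer ticks, so the timer's granularity does not dominate the measurement.

```python
import time


def timer_resolution(timer=time.perf_counter, samples=100):
    """Estimate the smallest non-zero step the timer reports (illustrative helper)."""
    smallest = float("inf")
    for _ in range(samples):
        start = timer()
        end = timer()
        while end == start:  # spin until the clock visibly advances
            end = timer()
        smallest = min(smallest, end - start)
    return smallest


def calibrate(func, timer=time.perf_counter, min_ticks=10):
    """Double the calls per round until one round spans at least min_ticks timer steps.

    Returns (iterations_per_round, elapsed_seconds_of_that_round).
    """
    target = timer_resolution(timer) * min_ticks
    iterations = 1
    while True:
        start = timer()
        for _ in range(iterations):
            func()
        elapsed = timer() - start
        if elapsed >= target:
            return iterations, elapsed
        iterations *= 2


if __name__ == "__main__":
    iterations, elapsed = calibrate(lambda: None)
    print("iterations per round:", iterations, "round duration:", elapsed)
```

Very fast functions end up with many iterations per round, while slow functions are measured with a single call per round. See the calibration documentation for what the plugin really does.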

Free software: BSD license

Installation

pip install pytest-benchmark

Documentation

Examples

This plugin provides a benchmark fixture. This fixture is a callable object that will benchmark any function passed to it.

Example:

    import time

    def something(duration=0.000001):
        # Code to be measured
        return time.sleep(duration)

    def test_my_stuff(benchmark):
        # benchmark something
        result = benchmark(something)

        # Extra code, to verify that the run completed correctly.
        # Note: this code is not measured.
        assert result is None

You can also pass extra arguments:

    def test_my_stuff(benchmark):
        result = benchmark(time.sleep, 0.02)

Screenshots

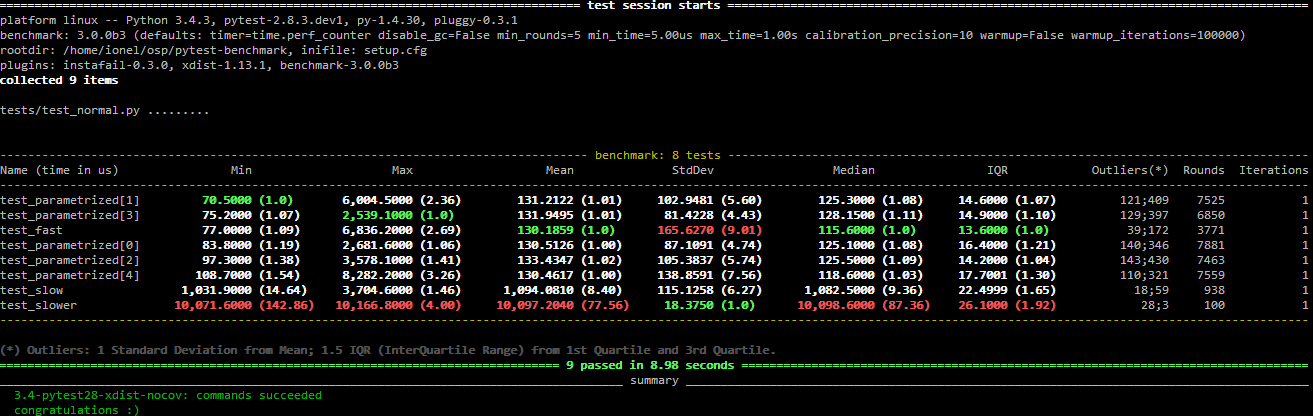

Normal run:

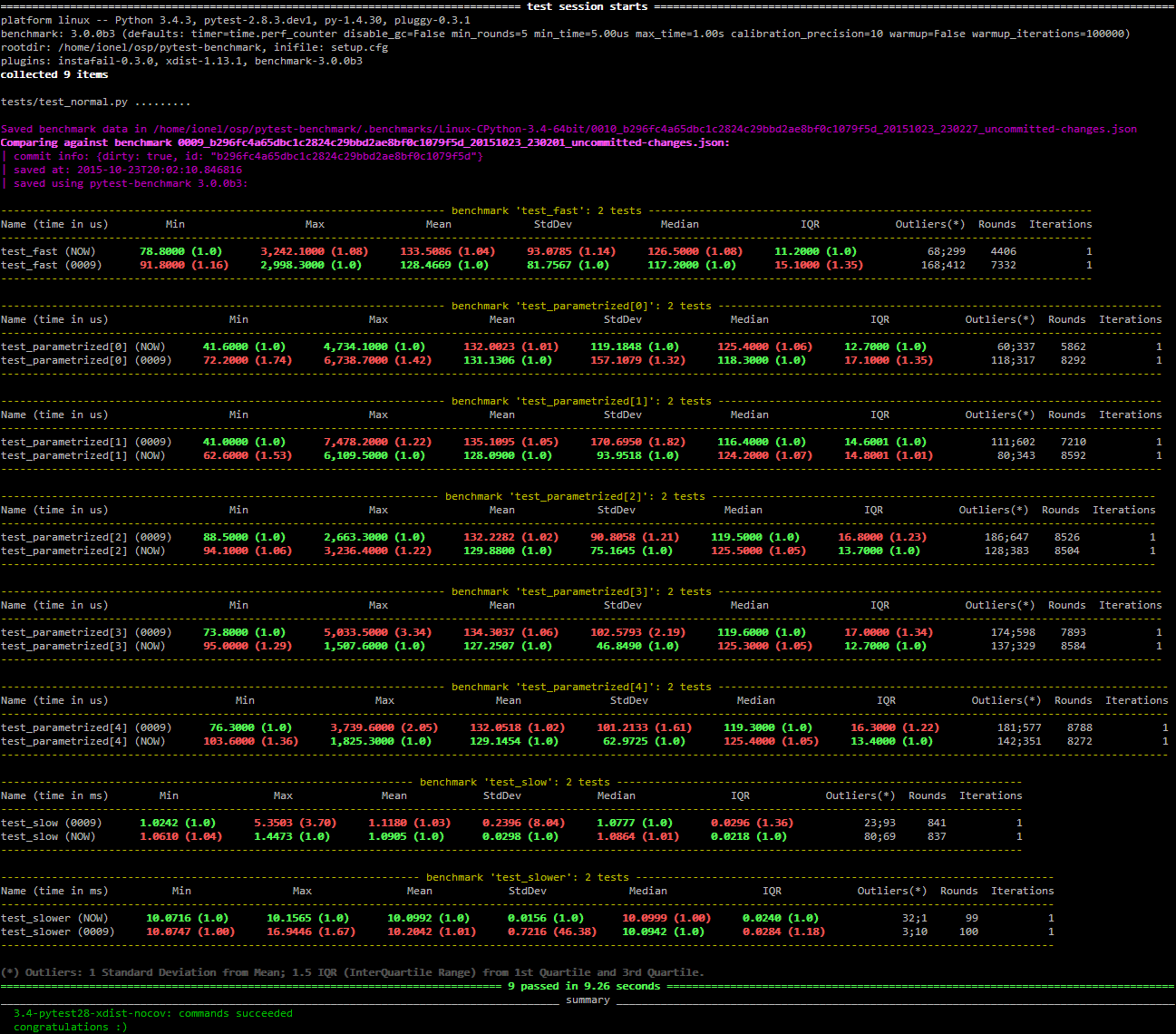

Compare mode (--benchmark-compare):

Histogram (--benchmark-histogram):

Also, it has nice tooltips.

Development

To run all the tests, run:

tox

Credits

Timing code and ideas taken from: https://bitbucket.org/haypo/misc/src/tip/python/benchmark.py

Changelog

3.0.0b1 (2015-10-13)

Tests are sorted alphabetically in the results table.

Failing to import statistics doesn’t create hard failures anymore. Benchmarks are automatically skipped if import failure occurs. This would happen on Python 3.2 (or earlier Python 3).

3.0.0a4 (2015-10-08)

Changed how failures to get commit info are handled: now they are soft failures. Previously it made the whole test suite fail, just because you didn’t have git/hg installed.

3.0.0a3 (2015-10-02)

Added progress indication when computing stats.

3.0.0a2 (2015-09-30)

Fixed accidental output capturing caused by capturemanager misuse.

3.0.0a1 (2015-09-13)

Added JSON report saving (the --benchmark-json command line argument).

Added benchmark data storage (the --benchmark-save and --benchmark-autosave command line arguments).

Added comparison to previous runs (the --benchmark-compare command line argument).

Added performance regression checks (the --benchmark-compare-fail command line argument).

Added the possibility to group by various parts of the test name (the --benchmark-compare-group-by command line argument).

Added historical plotting (the --benchmark-histogram command line argument).

Added option to fine tune the calibration (the --benchmark-calibration-precision command line argument and calibration_precision marker option).

Changed benchmark_weave to no longer be a context manager. Cleanup is performed automatically. BACKWARDS INCOMPATIBLE

Added benchmark.weave method (alternative to benchmark_weave fixture).

Added new hooks to allow customization:

pytest_benchmark_generate_machine_info(config)

pytest_benchmark_update_machine_info(config, info)

pytest_benchmark_generate_commit_info(config)

pytest_benchmark_update_commit_info(config, info)

pytest_benchmark_group_stats(config, benchmarks, group_by)

pytest_benchmark_generate_json(config, benchmarks, include_data)

pytest_benchmark_update_json(config, benchmarks, output_json)

pytest_benchmark_compare_machine_info(config, benchmarksession, machine_info, compared_benchmark)

Changed the timing code to:

Tracers are automatically disabled when running the test function (like coverage tracers).

Fixed an issue with calibration code getting stuck.

Added pedantic mode via benchmark.pedantic(). This mode disables calibration and allows a setup function.

2.5.0 (2015-06-20)

Improved test suite a bit (not using cram anymore).

Improved help text on the --benchmark-warmup option.

Made warmup_iterations available as a marker argument (e.g. @pytest.mark.benchmark(warmup_iterations=1234)).

Fixed --benchmark-verbose’s printouts to work properly with output capturing.

Changed how warmup iterations are computed (now number of total iterations is used, instead of just the rounds).

Fixed a bug where calibration would run forever.

Disabled red/green coloring (it was kinda random) when there’s a single test in the results table.

2.4.1 (2015-03-16)

Fix regression: the plugin was raising ValueError: no option named 'dist' when xdist wasn’t installed.

2.4.0 (2015-03-12)

Add a benchmark_weave experimental fixture.

Fix internal failures when xdist plugin is active.

Automatically disable benchmarks if xdist is active.

2.3.0 (2014-12-27)

Moved the warmup into the calibration phase. Solves issues with benchmarking on PyPy.

Added a --benchmark-warmup-iterations option to fine-tune that.

2.2.0 (2014-12-26)

Make the default rounds smaller (so that variance is more accurate).

Show the defaults in the --help section.

2.1.0 (2014-12-20)

Simplify the calibration code so that the round is smaller.

Add diagnostic output for calibration code (--benchmark-verbose).

2.0.0 (2014-12-19)

Replace the context-manager based API with a simple callback interface. BACKWARDS INCOMPATIBLE

Implement timer calibration for precise measurements.

1.0.0 (2014-12-15)

Use a precise default timer for PyPy.

? (?)

Readme and styling fixes (contributed by Marc Abramowitz)

Lots of wild changes.


Download files


Source Distribution

Built Distribution


File details

Details for the file pytest-benchmark-3.0.0b1.tar.gz.

File metadata

- Download URL: pytest-benchmark-3.0.0b1.tar.gz

- Upload date:

- Size: 215.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4ac6492f38da485b114a8a05e7779a72733e39cc85f57fe9b5866124a399c11e |
| MD5 | e8075c73e1aa490b1e71f953351b287f |
| BLAKE2b-256 | 21a622ba11a9bfb5dadaaab1d9ef893e89b70f8d2cb56e7f22aaa1475a40d4ee |

File details

Details for the file pytest_benchmark-3.0.0b1-py2.py3-none-any.whl.

File metadata

- Download URL: pytest_benchmark-3.0.0b1-py2.py3-none-any.whl

- Upload date:

- Size: 32.2 kB

- Tags: Python 2, Python 3

- Uploaded using Trusted Publishing? No

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 52c7e9f0d5e8c1df1318a85a9ddcc52470121cb774f6f61340a0775e5df5d956 |
| MD5 | 20324d96829048f573130d747a9a4e5d |
| BLAKE2b-256 | 2c89bf19bfd587d3bcc07514bee7399ad512e26ea15ea616358fa19e0460711b |