Modifies OpenAI's Whisper to produce more reliable timestamps.


Stabilizing Timestamps for Whisper

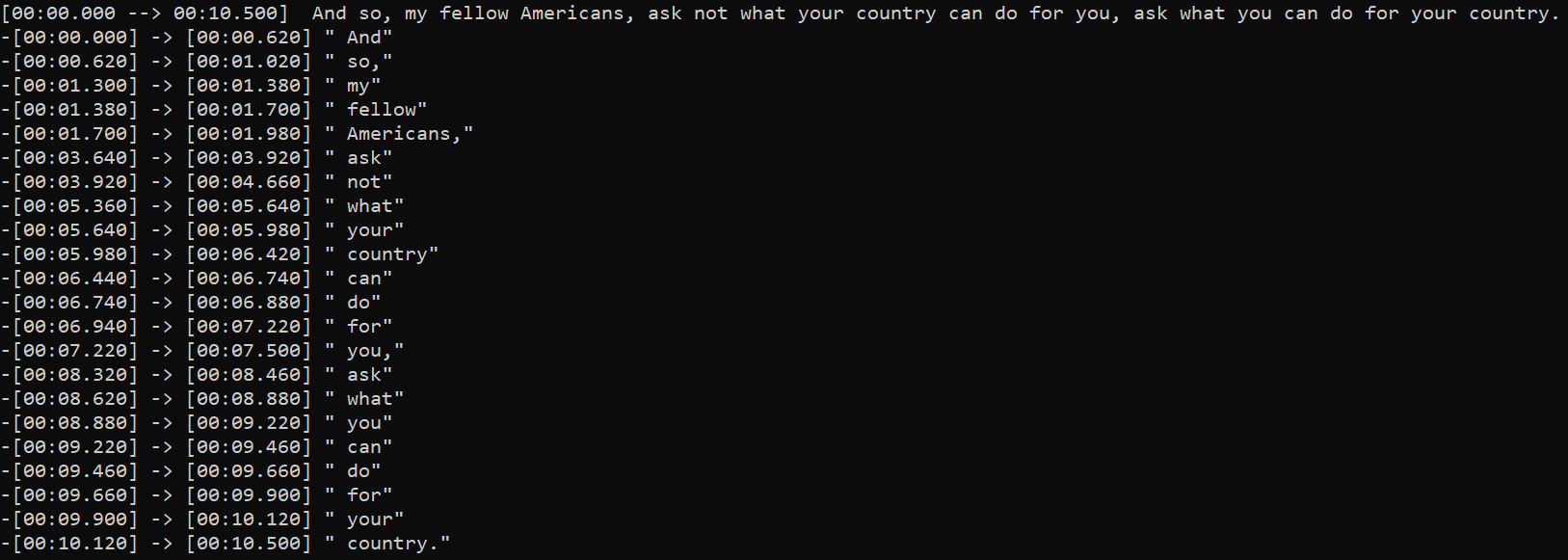

This script modifies OpenAI's Whisper to produce more reliable timestamps.

What's new in 2.0.0?

- updated to use Whisper's more reliable word-level timestamps method.

- the more reliable word timestamps allow all words to be regrouped into segments with more natural boundaries.

- can now suppress silence with Silero VAD (requires PyTorch 1.12.0+)

- non-VAD silence suppression is also more robust

- see Quick 1.X → 2.X Guide

Features

- more control over the timestamps than default Whisper

- supports direct preprocessing with Demucs to isolate voice

- supports dynamic quantization to decrease memory usage for inference on CPU

- lower memory usage than default Whisper when transcribing very long input audio tracks

Setup

pip install -U stable-ts

To install the latest commit:

pip install -U git+https://github.com/jianfch/stable-ts.git

Command-line usage

Transcribe audio, then save the result as a JSON file that contains the original inference results.

This allows the results to be reprocessed differently without having to redo inference.

Change audio.json to audio.srt to process it directly into SRT.

stable-ts audio.mp3 -o audio.json

Process the JSON file of the results into SRT.

stable-ts audio.json -o audio.srt

Transcribe multiple audio files then process the results directly into SRT files.

stable-ts audio1.mp3 audio2.mp3 audio3.mp3 -o audio1.srt audio2.srt audio3.srt
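When the batch is assembled from a script, the same command can be built programmatically. The sketch below is only an illustration: it assumes, as the example above suggests, that the paths after -o pair with the inputs by position, and the helper name build_stable_ts_command is hypothetical rather than part of stable-ts.

```python
import shlex


def build_stable_ts_command(inputs, outputs):
    """Build the argument list for one `stable-ts` call (hypothetical helper).

    Assumes each output path corresponds to the input at the same position.
    """
    if len(inputs) != len(outputs):
        raise ValueError("each input needs exactly one output path")
    return ["stable-ts", *inputs, "-o", *outputs]


audio_files = ["audio1.mp3", "audio2.mp3", "audio3.mp3"]
# Derive one .srt path per input by swapping the file extension.
srt_files = [name.rsplit(".", 1)[0] + ".srt" for name in audio_files]

command = build_stable_ts_command(audio_files, srt_files)
print(shlex.join(command))
# To actually run the transcription: subprocess.run(command, check=True)
```

Building the call as a list rather than a single string avoids quoting problems when file names contain spaces.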

Python usage

import stable_whisper

model = stable_whisper.load_model('base')

# this modified model runs just like the original model but accepts additional arguments

result = model.transcribe('audio.mp3')

result.to_srt_vtt('audio.srt')

result.to_ass('audio.ass')

# word_level=False : use only segment timestamps (i.e. without the green highlight)

# segment_level=False : use only word timestamps

result.save_as_json('audio.json')

# save inference result for later processing
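For orientation, the kind of conversion a segment-level SRT export involves can be sketched in plain Python. This is not stable-ts code: the helper names are hypothetical, and the segment dictionaries with start, end and text keys are an assumption modeled on Whisper's segment output.

```python
def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def segments_to_srt(segments):
    """Turn a list of segment dicts into the text of an SRT file."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        start = srt_timestamp(seg["start"])
        end = srt_timestamp(seg["end"])
        cues.append(f"{index}\n{start} --> {end}\n{seg['text'].strip()}\n")
    # SRT cues are separated by a blank line.
    return "\n".join(cues)


# Assumed segment layout; real results may carry more fields.
example_segments = [
    {"start": 0.0, "end": 1.25, "text": " Hello world."},
    {"start": 1.5, "end": 3.0, "text": " This is a test."},
]
print(segments_to_srt(example_segments))
```

Because the saved JSON keeps the timestamps as plain numbers, this sort of formatting can be redone at any time without running inference again.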

Tips

- for reliable segment timestamps, do not disable word timestamps with word_timestamps=False, because word timestamps are also used to correct segment timestamps

- use demucs=True and vad=True for music

- if audio is not transcribing properly compared to Whisper, try mel_first=True at the cost of more memory usage for long audio tracks

Quick 1.X → 2.X Guide

results_to_sentence_srt(result, 'audio.srt') → result.to_srt_vtt('audio.srt', word_level=False)

results_to_word_srt(result, 'audio.srt') → result.to_srt_vtt('output.srt', segment_level=False)

results_to_sentence_word_ass(result, 'audio.srt') → result.to_ass('output.ass')

- there's no need to stabilize segments after inference because they're already stabilized during inference

transcribe() returns a WhisperResult object which can be converted to dict with .to_dict(), e.g. result.to_dict()

Regrouping Words

Stable-ts has a preset for regrouping words into different segments with more natural boundaries.

This preset is enabled by regroup=True, but there are other built-in regrouping methods that allow you to customize the regrouping logic.

The preset is just a predefined combination of those methods.

result0 = model.transcribe('audio.mp3', regroup=True) # regroup is True by default

# regroup=True is same as below

result1 = model.transcribe('audio.mp3', regroup=False)

(

result1

.split_by_punctuation([('.', ' '), '。', '?', '？', ',', '，'])

.split_by_gap(.5)

.merge_by_gap(.15, max_words=3)

.split_by_punctuation([('.', ' '), '。', '?', '？'])

)

# result0 == result1
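To make the idea behind two of these steps concrete, here is a small stand-alone sketch of gap-based and punctuation-based splitting on lists of word dictionaries. It is an illustration only and does not reproduce the stable-ts implementation: the functions share names with the methods above but are hypothetical re-implementations, the word layout (word, start, end) is assumed, and the tuple form ('.', ' ') is not handled.

```python
def split_by_gap(segments, max_gap):
    """Split a segment wherever the pause between two words exceeds max_gap seconds."""
    result = []
    for words in segments:
        if not words:
            continue
        current = [words[0]]
        for prev, word in zip(words, words[1:]):
            if word["start"] - prev["end"] > max_gap:
                result.append(current)
                current = []
            current.append(word)
        result.append(current)
    return result


def split_by_punctuation(segments, marks):
    """Split a segment after every word that ends with one of the given marks."""
    result = []
    for words in segments:
        current = []
        for word in words:
            current.append(word)
            if word["word"].strip().endswith(tuple(marks)):
                result.append(current)
                current = []
        if current:
            result.append(current)
    return result


def texts(segments):
    """Join the words of each segment back into readable text."""
    return ["".join(w["word"] for w in seg).strip() for seg in segments]


words = [
    {"word": " Hello,", "start": 0.0, "end": 0.4},
    {"word": " world.", "start": 0.5, "end": 0.9},
    {"word": " Next", "start": 2.0, "end": 2.3},
    {"word": " sentence", "start": 2.35, "end": 2.9},
]

by_gap = split_by_gap([words], 0.5)
by_comma = split_by_punctuation(by_gap, [".", "?", ","])
print(texts(by_gap))
print(texts(by_comma))
```

Chaining the steps, as the preset does, lets coarse splits on long pauses be refined by punctuation afterwards.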

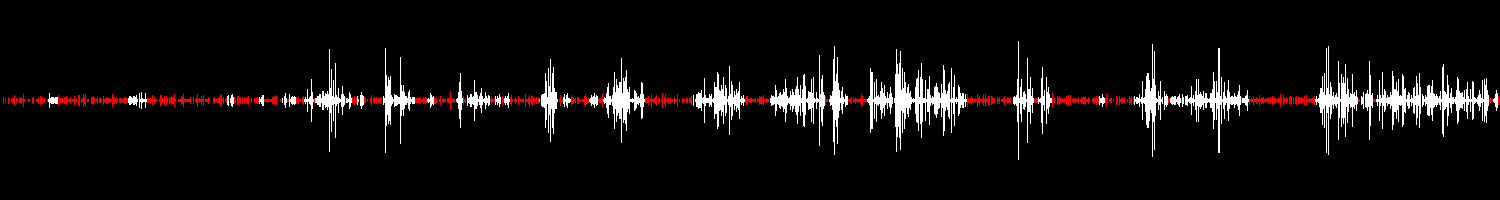

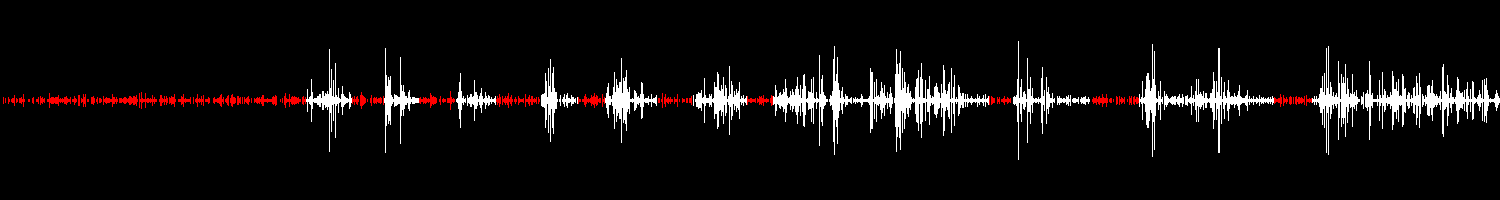

Visualizing Suppression

- Requirement: Pillow or opencv-python

Non-VAD Suppression

import stable_whisper

# regions of the waveform colored red are where the audio will likely be suppressed and marked as silent

# [q_levels=20] and [k_size=5] are the defaults for non-VAD.

stable_whisper.visualize_suppression('audio.mp3', 'image.png', q_levels=20, k_size=5)

VAD Suppression

# [vad_threshold=0.35] is the default for VAD.

stable_whisper.visualize_suppression('audio.mp3', 'image.png', vad=True, vad_threshold=0.35)
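As a rough intuition for the non-VAD parameters, the sketch below marks quiet regions of a signal by smoothing its loudness and quantizing the result. It is a simplified illustration under stated assumptions, not the stable-ts algorithm: reading q_levels as the number of quantization levels and k_size as the width of the smoothing window is an assumption, and silence_mask is a hypothetical name.

```python
def silence_mask(samples, q_levels=20, k_size=5):
    """Return one boolean per sample, True where the sample is treated as silent.

    Assumed interpretation: loudness is averaged over a window of k_size
    samples, scaled to the peak, and quantized into q_levels steps; anything
    that rounds to level 0 counts as silence.
    """
    loudness = [abs(s) for s in samples]
    peak = max(loudness, default=0.0)
    if peak == 0:
        return [True] * len(samples)
    half = k_size // 2
    mask = []
    for i in range(len(loudness)):
        window = loudness[max(0, i - half): i + half + 1]
        smoothed = sum(window) / len(window)
        mask.append(round(smoothed / peak * q_levels) == 0)
    return mask


# Ten silent samples, a short burst of sound, then ten silent samples.
samples = [0.0] * 10 + [0.8, -0.9, 0.7, -0.8] + [0.0] * 10
mask = silence_mask(samples)
print("".join("S" if silent else "." for silent in mask))
```

In this toy version a larger k_size widens the non-silent region around each burst, while a smaller q_levels makes more low-level audio round down to silence.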

Encode Comparison

import stable_whisper

stable_whisper.encode_video_comparison(

'audio.mp3',

['audio_sub1.srt', 'audio_sub2.srt'],

output_videopath='audio.mp4',

labels=['Example 1', 'Example 2']

)

License

This project is licensed under the MIT License. See the LICENSE file for details.

Acknowledgments

Includes a slight modification of the original work: Whisper


Source Distribution

File details

Details for the file stable-ts-2.1.2.tar.gz.

File metadata

- Download URL: stable-ts-2.1.2.tar.gz

- Upload date:

- Size: 32.2 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.8.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9c566b34fd0cefd683e8fa5a37a72f58261cd55dbaeb7950e447f37adc7db148 |
| MD5 | bbdf43c583b269b74bf285363819cc4c |
| BLAKE2b-256 | 2930b3ee0a104eac0bbcfe8a03f117bac50a7586eab5f91b410dae0ac56fb627 |