Embedded vector database using the TurboQuant algorithm (arXiv:2504.19874) — zero training, 2-4 bit compression, fast inner-product search

TurboQuantDB

An embedded vector database with a Python API, built around the TurboQuant algorithm (arXiv:2504.19874) — two-stage quantization that achieves near-optimal vector compression with zero training time.

Goal: make massive embedding datasets practical on lightweight hardware. A 100k-vector, 1536-dim collection that would occupy 586 MB as raw float32 fits in 108 MB on disk with TQDB b=4, or just 59 MB with b=2 — enabling laptop-scale RAG over millions of documents without a dedicated server.
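The raw-size and compression figures can be checked with a few lines of arithmetic. This is a minimal sketch: the helper name `raw_float32_mib` is made up for illustration, the on-disk sizes are simply the numbers quoted above, and "MB" is assumed to mean MiB (which is what reproduces the 586 figure).

```python
def raw_float32_mib(n_vectors: int, dim: int) -> float:
    """Size of an uncompressed float32 collection in MiB (4 bytes per component)."""
    return n_vectors * dim * 4 / (1024 ** 2)


raw = raw_float32_mib(100_000, 1536)

# On-disk sizes as reported in the text above (copied, not computed).
reported_mb = {4: 108, 2: 59}

# Compression ratio = raw size / reported on-disk size.
ratios = {bits: raw / size for bits, size in reported_mb.items()}

print(f"raw float32: {raw:.0f} MiB")  # raw float32: 586 MiB
for bits, ratio in ratios.items():
    print(f"b={bits}: {ratio:.1f}x smaller")  # 5.4x for b=4, 9.9x for b=2
```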

Two deployment modes:

- Embedded: the tqdb Python package (pip install tqdb), which runs in-process (no daemon)

- Server: an Axum HTTP service in server/, with multi-tenancy, RBAC, quotas, and async jobs

Key Properties

- Zero training: no train() step. Vectors are quantized and stored immediately on insert.

- 5–10× compression: b=4 reduces 1536-dim float32 embeddings from 586 MB to 108 MB (5.4×); b=2 reaches 59 MB (9.9×) at 100k vectors.

- Two quantizer modes: default (dense, best recall) and a faster ingest variant (srht) for streaming/high-d workloads. See docs/QUANTIZER_MODES.md for a full breakdown.

- Optional ANN index: build an HNSW graph after loading data for fast approximate search.

- Metadata filtering: MongoDB-style filter operators on any metadata field.

- Crash recovery: a write-ahead log (WAL) ensures durability without explicit flushing.

- Python native: pip install tqdb; no server or sidecar required.
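For readers unfamiliar with write-ahead logging, the general idea behind the crash-recovery property can be sketched in a few lines. This is a generic toy illustration, not tqdb's on-disk format or recovery code: the class `TinyWalStore`, the JSON-lines record layout, and the file name are all hypothetical.

```python
import json
import os
import tempfile


class TinyWalStore:
    """Toy key-value store: each write is logged to disk before it is applied in memory."""

    def __init__(self, wal_path):
        self.wal_path = wal_path
        self.data = {}
        self._replay()
        self._wal = open(wal_path, "a", encoding="utf-8")

    def _replay(self):
        # Recovery: re-apply every complete record left behind by a previous run.
        if not os.path.exists(self.wal_path):
            return
        good_bytes = 0
        with open(self.wal_path, "rb") as f:
            for raw in f:
                try:
                    record = json.loads(raw)
                except json.JSONDecodeError:
                    break  # torn final record from a crash mid-write
                self.data[record["id"]] = record["value"]
                good_bytes += len(raw)
        # Cut off the torn tail so later appends start on a clean record boundary.
        with open(self.wal_path, "r+b") as f:
            f.truncate(good_bytes)

    def put(self, key, value):
        # 1) append to the log and force it to disk, 2) only then update memory.
        self._wal.write(json.dumps({"id": key, "value": value}) + "\n")
        self._wal.flush()
        os.fsync(self._wal.fileno())
        self.data[key] = value

    def close(self):
        self._wal.close()


wal_file = os.path.join(tempfile.mkdtemp(), "demo.wal")
store = TinyWalStore(wal_file)
store.put("doc-1", [0.1, 0.2])
store.put("doc-2", [0.3, 0.4])
store.close()

# Simulate a crash in the middle of a third write: a partial record reaches the file.
with open(wal_file, "a", encoding="utf-8") as f:
    f.write('{"id": "doc-3", "val')

recovered = TinyWalStore(wal_file)
print(sorted(recovered.data))  # ['doc-1', 'doc-2']
recovered.close()
```

A production WAL would typically also checksum records and periodically fold the log into compacted segments, which is the role db.checkpoint() is described as playing later in this document.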

Installation

pip install tqdb

Building from source (Rust toolchain required): see DEVELOPMENT.md.

Config Advisor

The interactive Config Advisor selects the best configuration for your embedding dimension and use case (RAG, search-at-scale, edge deployment, etc.), scored against real benchmark data with adjustable priority weights for recall, compression, and speed.

Recommended Setup

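rerank=True stores INT8 copies of the vectors alongside the compressed codes and uses them for second-pass rescoring of the top candidates (set rerank_precision to "f16" or "f32" for higher-precision rescoring). fast_mode=True (default) uses MSE-only quantization, which is optimal for d < 1536.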

from tqdb import Database

# Best recall, any dimension — brute-force

db = Database.open(path, dimension=DIM, bits=4, rerank=True) # INT8 rerank storage

results = db.search(query, top_k=10)

# GloVe-200 (d=200): R@1 ≈ 1.00 | ~30 MB disk

# arXiv-768 (d=768): R@1 ≈ 0.98 | ~116 MB disk

# DBpedia-1536 (d=1536): R@1 ≈ 0.95 | ~231 MB disk

# Best recall, high-d (d ≥ 1536) — also enable QJL residuals

db = Database.open(path, dimension=1536, bits=4, rerank=True, fast_mode=False)

# Minimum disk — MSE codes only (library default, no extra vector storage)

db = Database.open(path, dimension=DIM, bits=4)

# Low latency at N ≥ 100k — HNSW index

db = Database.open(path, dimension=DIM, bits=4, rerank=True)

db.create_index()

results = db.search(query, top_k=10, _use_ann=True) # p50 < 10ms

# Tune rerank oversampling at query time (default 10×)

results = db.search(query, top_k=10, rerank_factor=20) # higher recall, higher latency

Full configuration guide: docs/CONFIGURATION.md | Python API: docs/PYTHON_API.md
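The two-pass pattern behind rerank=True and rerank_factor can be illustrated without the library. The sketch below is an assumption-laden toy, not tqdb's implementation: the sign-only `coarse_code` is a hypothetical stand-in for the compressed codes (it is not the TurboQuant quantizer), and `quantize_int8` shows one common way to do per-vector-scaled INT8 storage.

```python
import random


def quantize_int8(vec):
    """Per-vector-scaled INT8: one float scale plus one integer in [-127, 127] per component."""
    scale = max(abs(x) for x in vec) / 127.0 or 1.0
    return scale, [round(x / scale) for x in vec]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def coarse_code(vec):
    """Hypothetical stand-in for compressed codes: keep only the sign of each component."""
    return [1.0 if x >= 0 else -1.0 for x in vec]


def search(query, coarse, int8_store, top_k=10, rerank_factor=10):
    # Pass 1: cheap scores from the coarse codes; keep top_k * rerank_factor candidates.
    n_candidates = min(len(coarse), top_k * rerank_factor)
    order = sorted(range(len(coarse)), key=lambda i: dot(query, coarse[i]), reverse=True)
    candidates = order[:n_candidates]
    # Pass 2: rescore only those candidates with the more precise INT8 copies.
    rescored = [(int8_store[i][0] * dot(query, int8_store[i][1]), i) for i in candidates]
    rescored.sort(reverse=True)
    return [i for _, i in rescored[:top_k]]


rng = random.Random(0)
dim, n = 32, 500
vectors = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n)]
coarse = [coarse_code(v) for v in vectors]
int8_store = [quantize_int8(v) for v in vectors]

query = [rng.gauss(0, 1) for _ in range(dim)]
exact_best = max(range(n), key=lambda i: dot(query, vectors[i]))
hits = search(query, coarse, int8_store, top_k=5, rerank_factor=10)
print("true nearest neighbour in reranked top-5:", exact_best in hits)
```

A larger rerank_factor widens the candidate pool that reaches the second pass, which is why it tends to raise recall and latency together.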

Quick Start

import numpy as np

from tqdb import Database

db = Database.open("./my_db", dimension=1536, bits=4, metric="ip", rerank=True)

db.insert("doc-1", np.random.randn(1536).astype("f4"), metadata={"topic": "ml"}, document="Machine learning intro")

db.insert("doc-2", np.random.randn(1536).astype("f4"), metadata={"topic": "systems"}, document="Rust memory model")

results = db.search(np.random.randn(1536).astype("f4"), top_k=5)

for r in results:
    print(r["id"], r["score"], r["document"])

Python API

Full reference: docs/PYTHON_API.md

# Open / create

db = Database.open(path, dimension, bits=4, seed=42, metric="ip",
                   rerank=True, fast_mode=False, rerank_precision=None,
                   collection=None, wal_flush_threshold=None,
                   quantizer_type=None)  # None/"dense" = default (Haar QR + Gaussian); "srht" = fast O(d log d) ingest

# NOTE: rerank=True with rerank_precision=None uses per-vector-scaled INT8 reranking (default),

# which is approximate. Use rerank_precision="f16" or "f32" for higher-precision rescoring.

# rerank_factor (default 10× brute / 20× ANN) controls oversampling.

# Write

db.insert(id, vector, metadata=None, document=None)

db.insert_batch(ids, vectors, metadatas=None, documents=None, mode="insert") # "insert"|"upsert"|"update"

db.upsert(id, vector, metadata=None, document=None)

db.update(id, vector, metadata=None, document=None) # RuntimeError if not found

db.update_metadata(id, metadata=None, document=None) # RuntimeError if not found

# Delete & retrieve

db.delete(id) # → bool

db.delete_batch(ids) # → int (count deleted)

db.get(id) # → {id, metadata, document} | None

db.get_many(ids) # → list[dict | None]

db.list_all() # → list[str]

db.list_ids(where_filter=None, limit=None, offset=0) # paginated

db.count(filter=None) # → int

db.stats() # → dict

len(db) / "id" in db # container protocol

# Search — brute-force by default; pass _use_ann=True to use HNSW index

results = db.search(query, top_k=10, filter=None, _use_ann=False,
                    ann_search_list_size=None, rerank_factor=None, include=None)

# include: list of "id"|"score"|"metadata"|"document" (default all)

# ann_search_list_size: HNSW ef_search override (only used when _use_ann=True)

# rerank_factor: candidate oversampling multiplier (default 10 brute / 20 ANN)

all_results = db.query(query_embeddings, n_results=10, where_filter=None,
                       rerank_factor=None, include=None)

# query_embeddings: np.ndarray (N, D) — returns list[list[dict]]

# Manual maintenance checkpoint (WAL flush + segment compaction)

db.checkpoint()

# Index

db.create_index(max_degree=32, ef_construction=200, n_refinements=5,
                search_list_size=128, alpha=1.2)

# Metadata filter operators

# $eq $ne $gt $gte $lt $lte $in $nin $exists $and $or

db.search(query, top_k=5, filter={"year": {"$gte": 2023}})

db.search(query, top_k=5, filter={"$and": [{"topic": "ml"}, {"year": {"$gte": 2023}}]})
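To make the filter syntax concrete, here is a small pure-Python matcher for the operators listed above. It is a sketch of how MongoDB-style filters are commonly evaluated, not tqdb's filtering code: the function names are hypothetical, and the handling of missing fields (only $ne and $nin match) is an assumption that may differ from the library's behaviour.

```python
_MISSING = object()

_COMPARE = {
    "$eq": lambda value, arg: value == arg,
    "$ne": lambda value, arg: value != arg,
    "$gt": lambda value, arg: value > arg,
    "$gte": lambda value, arg: value >= arg,
    "$lt": lambda value, arg: value < arg,
    "$lte": lambda value, arg: value <= arg,
    "$in": lambda value, arg: value in arg,
    "$nin": lambda value, arg: value not in arg,
}


def _check(metadata, field, op, arg):
    present = field in metadata
    if op == "$exists":
        return present == bool(arg)
    if not present:
        # Assumption: a missing field satisfies only the negative operators.
        return op in ("$ne", "$nin")
    return _COMPARE[op](metadata[field], arg)


def matches(metadata, flt):
    """Return True if `metadata` satisfies a MongoDB-style filter document."""
    for key, cond in flt.items():
        if key == "$and":
            if not all(matches(metadata, sub) for sub in cond):
                return False
        elif key == "$or":
            if not any(matches(metadata, sub) for sub in cond):
                return False
        elif isinstance(cond, dict):
            # {"year": {"$gte": 2023, "$lt": 2025}}: every operator must hold.
            if not all(_check(metadata, key, op, arg) for op, arg in cond.items()):
                return False
        elif metadata.get(key, _MISSING) != cond:
            # A bare value is shorthand for $eq.
            return False
    return True


doc = {"topic": "ml", "year": 2024}
print(matches(doc, {"year": {"$gte": 2023}}))  # True
print(matches(doc, {"$and": [{"topic": "ml"}, {"year": {"$gte": 2023}}]}))  # True
print(matches(doc, {"topic": "systems"}))  # False
```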

Benchmarks

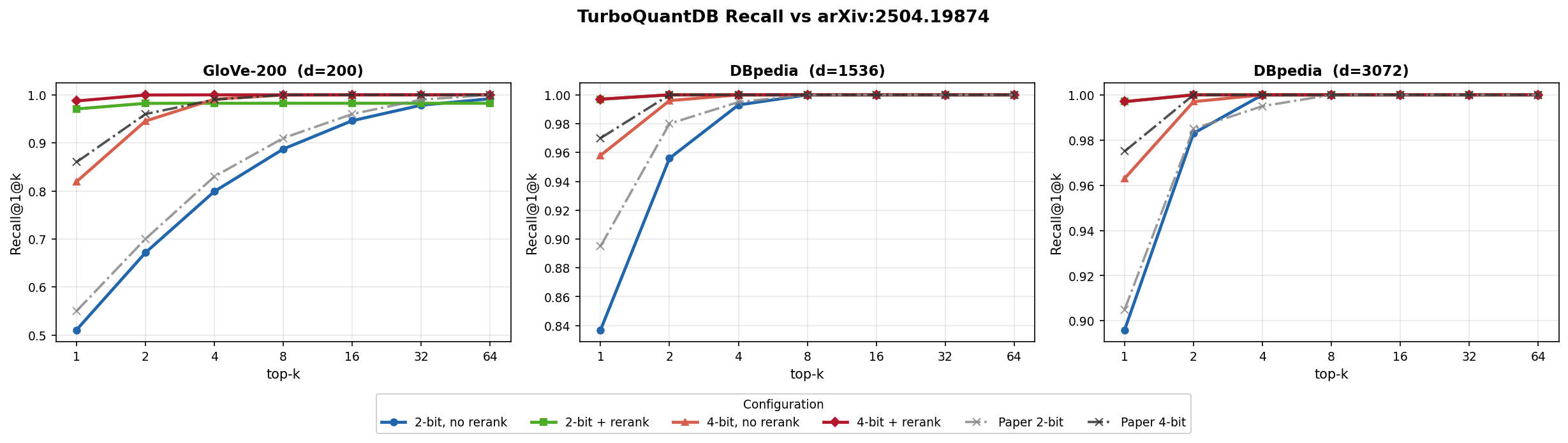

Three datasets, 100k vectors each, matching arXiv:2504.19874 Figure 5. Benchmark config: quantizer_type=None (dense), fast_mode=True, rerank=True (MSE-only, matching paper Figure 5 bit allocation).

Key results at 100k × d=1536 (DBpedia), brute-force, b=4, rerank=True:

| Metric | Value |

|---|---|

| Recall@1 | 92.2% |

| Recall@4 | 99.9% |

| Disk | 108 MB (5.4× compression) |

| p50 latency | ~51ms |

Full tables (all 8 configs × 3 datasets), ANN guidance, and reproduction steps: docs/BENCHMARKS.md
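For reference, the recall numbers can be read as follows, assuming Recall@k means the fraction of queries whose true nearest neighbour appears among the first k returned results (this definition is an assumption here; docs/BENCHMARKS.md is the authority). The data below is a made-up toy example, not benchmark output.

```python
def recall_at_k(true_nearest, retrieved, k):
    """Fraction of queries whose true nearest neighbour is among the first k retrieved ids."""
    hits = sum(1 for truth, ids in zip(true_nearest, retrieved) if truth in ids[:k])
    return hits / len(true_nearest)


# Hypothetical toy data: 4 queries, each with its ground-truth nearest id
# and the ranked ids that a search returned.
true_nearest = ["a", "b", "c", "d"]
retrieved = [
    ["a", "x", "y", "z"],  # hit at rank 1
    ["x", "b", "y", "z"],  # hit at rank 2
    ["x", "y", "z", "c"],  # hit at rank 4
    ["x", "y", "z", "w"],  # miss
]

print(recall_at_k(true_nearest, retrieved, 1))  # 0.25
print(recall_at_k(true_nearest, retrieved, 4))  # 0.75
```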

Rerank unlocks recall at any bit depth

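With rerank=True, bits=2 matches the recall of bits=4 while using ~10% less disk. Both rerank configurations far outperform bits=4, rerank=False, at the cost of a larger on-disk footprint for the stored rerank vectors. Cells show R@1 with disk size in parentheses. (bit_sweep, n=10k, brute-force, fast_mode=True)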

| Dataset | b=2, no rerank | b=4, no rerank | b=2 + rerank | b=4 + rerank |

|---|---|---|---|---|

| GloVe-200 (d=200) | 0.528 (1.8 MB) | 0.822 (2.3 MB) | 0.992 (3.8 MB) | 0.992 (4.2 MB) |

| arXiv-768 (d=768) | 0.426 (7.4 MB) | 0.696 (9.2 MB) | 0.978 (14.7 MB) | 0.978 (16.6 MB) |

| GIST-960 (d=960) | 0.294 (10.4 MB) | 0.566 (12.7 MB) | 0.974 (19.6 MB) | 0.974 (21.9 MB) |

Coverage across d=65–3072

R@1 ≥ 0.87 across all 9 benchmark datasets at b=4, rerank=True, brute-force, fast_mode=True, n=10k:

| Dataset | d | R@1 | Disk | p50 |

|---|---|---|---|---|

| lastfm-64 | 65 | 0.874 | 2.0 MB | 1.1 ms |

| deep-96 | 96 | 0.980 | 2.5 MB | 1.2 ms |

| glove-100 | 100 | 0.990 | 2.6 MB | 1.4 ms |

| glove-200 | 200 | 0.992 | 4.2 MB | 1.7 ms |

| nytimes-256 | 256 | 0.992 | 5.2 MB | 2.0 ms |

| arXiv-768 | 768 | 0.978 | 16.6 MB | 7.6 ms |

| GIST-960 | 960 | 0.974 | 21.9 MB | 7.3 ms |

| DBpedia-1536 | 1536 | 0.998 | 41.1 MB | 10.3 ms |

| DBpedia-3072 | 3072 | 1.000 | 117.0 MB | 46.8 ms |

RAG Integration

from tqdb.rag import TurboQuantRetriever

retriever = TurboQuantRetriever(db_path="./rag_db", dimension=1536, bits=4)

retriever.add_texts(texts=texts, embeddings=embeddings, metadatas=metadatas)

results = retriever.similarity_search(query_embedding=query_vec, k=5)

for r in results:
    print(r["score"], r["text"])

Server Mode

An optional Axum HTTP server in server/ adds multi-tenancy, RBAC, and async jobs. See docs/SERVER_API.md for setup, launch, and the full API reference.

Research Basis

This is an independent implementation of ideas from the TurboQuant paper. The algorithm itself was authored by the original researchers.

Zandieh, A., Daliri, M., Hadian, M., & Mirrokni, V. (2025). TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate. arXiv:2504.19874

@article{zandieh2025turboquant,

title={TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate},

author={Zandieh, Amir and Daliri, Majid and Hadian, Majid and Mirrokni, Vahab},

journal={arXiv preprint arXiv:2504.19874},

year={2025}

}

License

Apache License 2.0 — see LICENSE.
