An agentic orchestration framework for building agent networks that handle task automation without human interaction.

Overview

An agentic orchestration framework for deploying agent networks and handling complex task automation.

Table of Contents

- Key Features

- Quick Start

- Technologies Used

- Project Structure

- Setup

- Contributing

- Troubleshooting

- Frequently Asked Questions (FAQ)

Key Features

versionhq is a Python framework for agent networks that handle complex task automation without human interaction.

Agents are model-agnostic. They improve task output while optimizing token cost and job latency by sharing their memory, knowledge base, and RAG tools with other agents in the network.

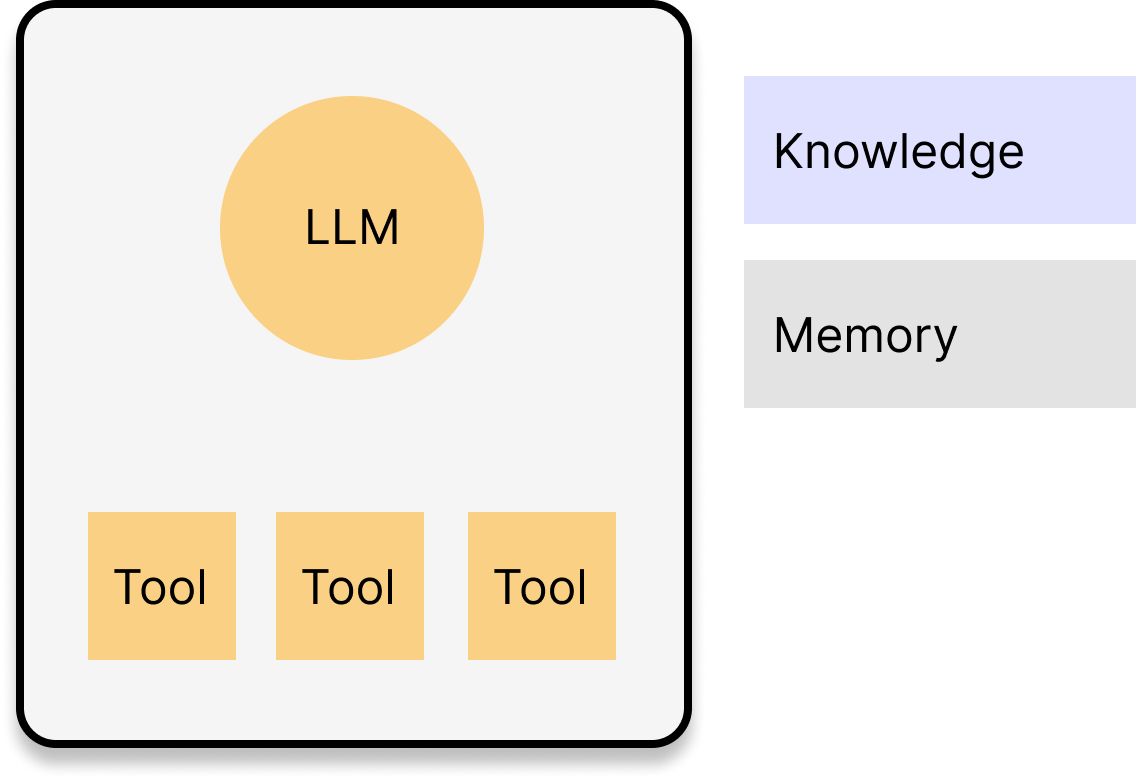

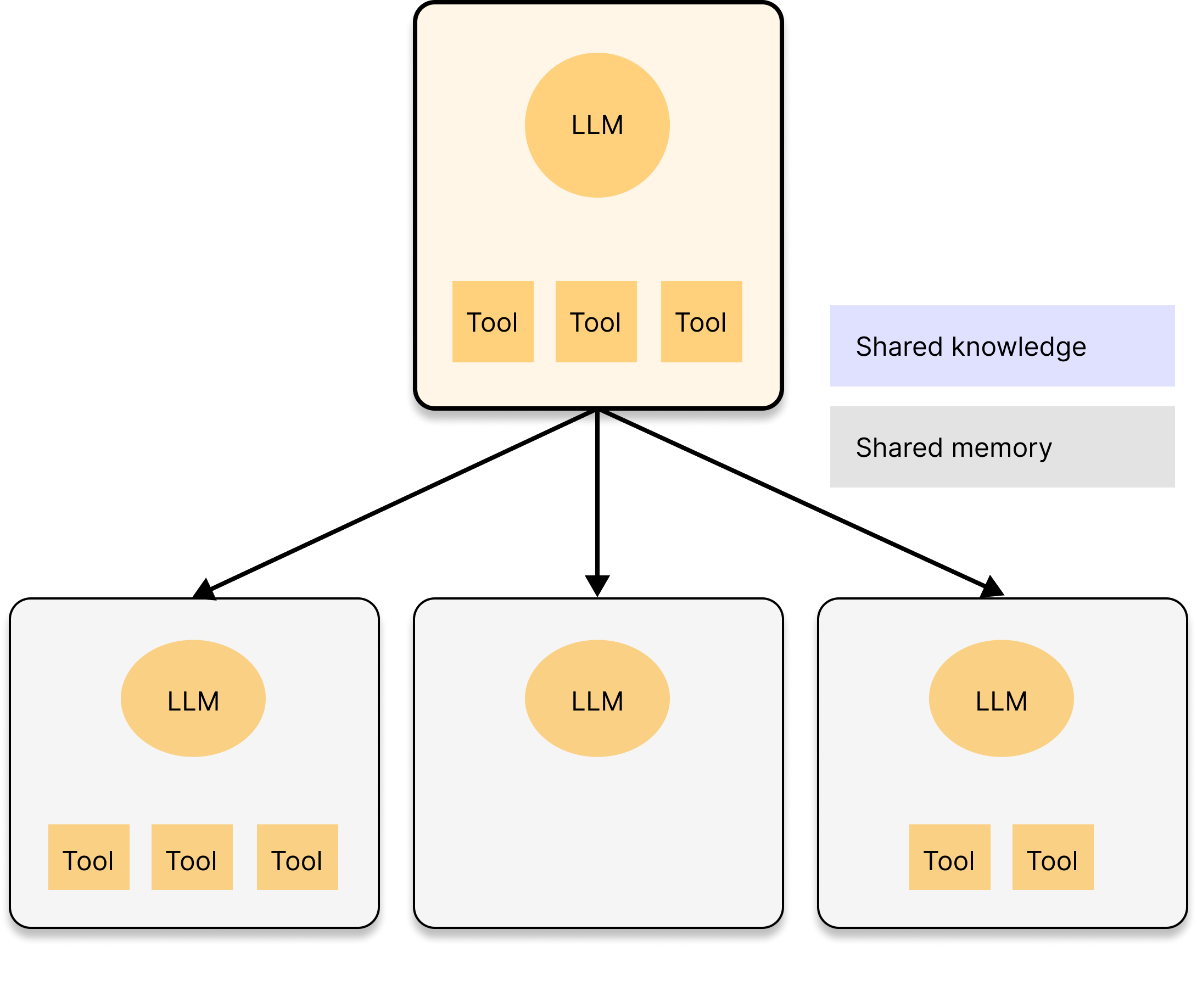

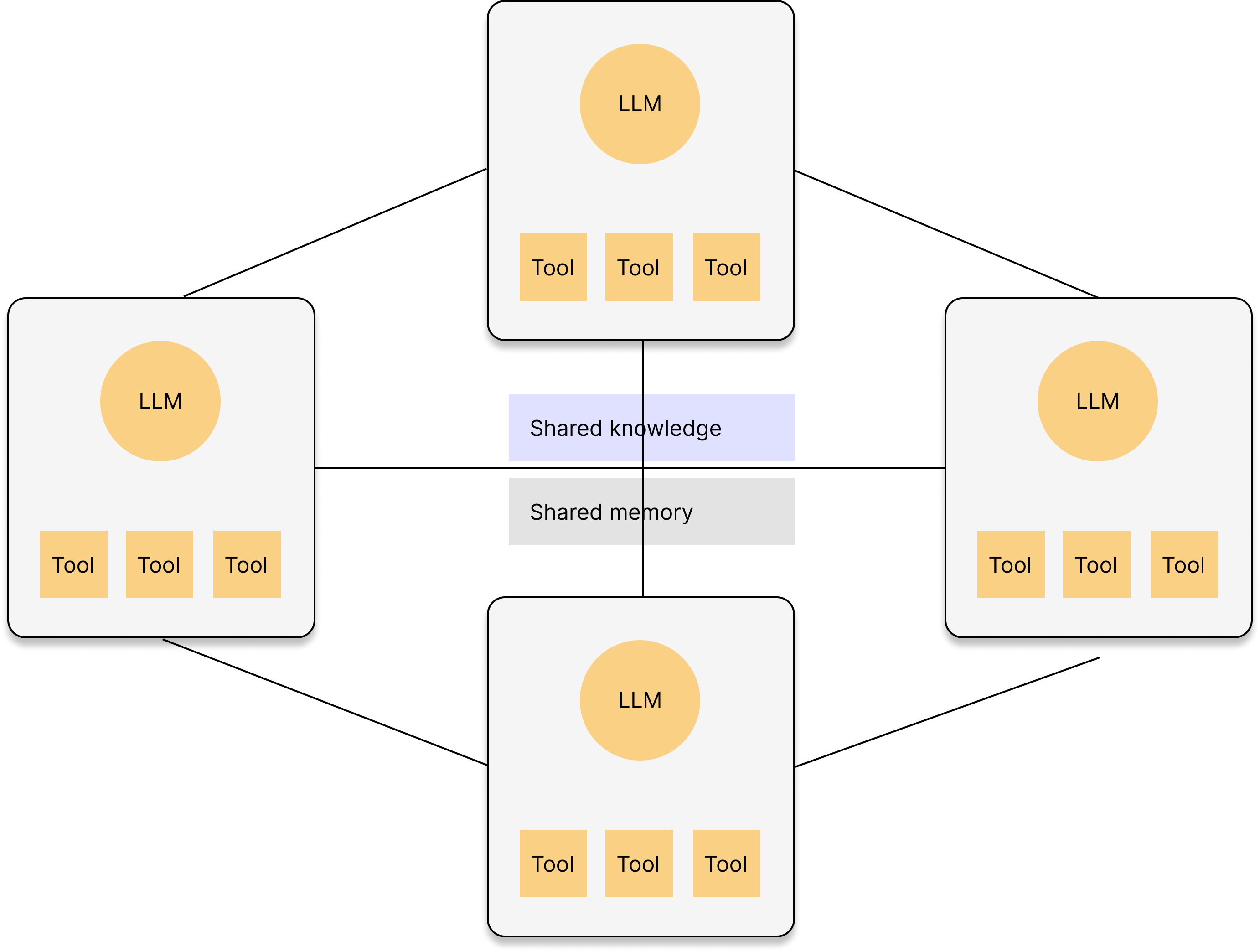

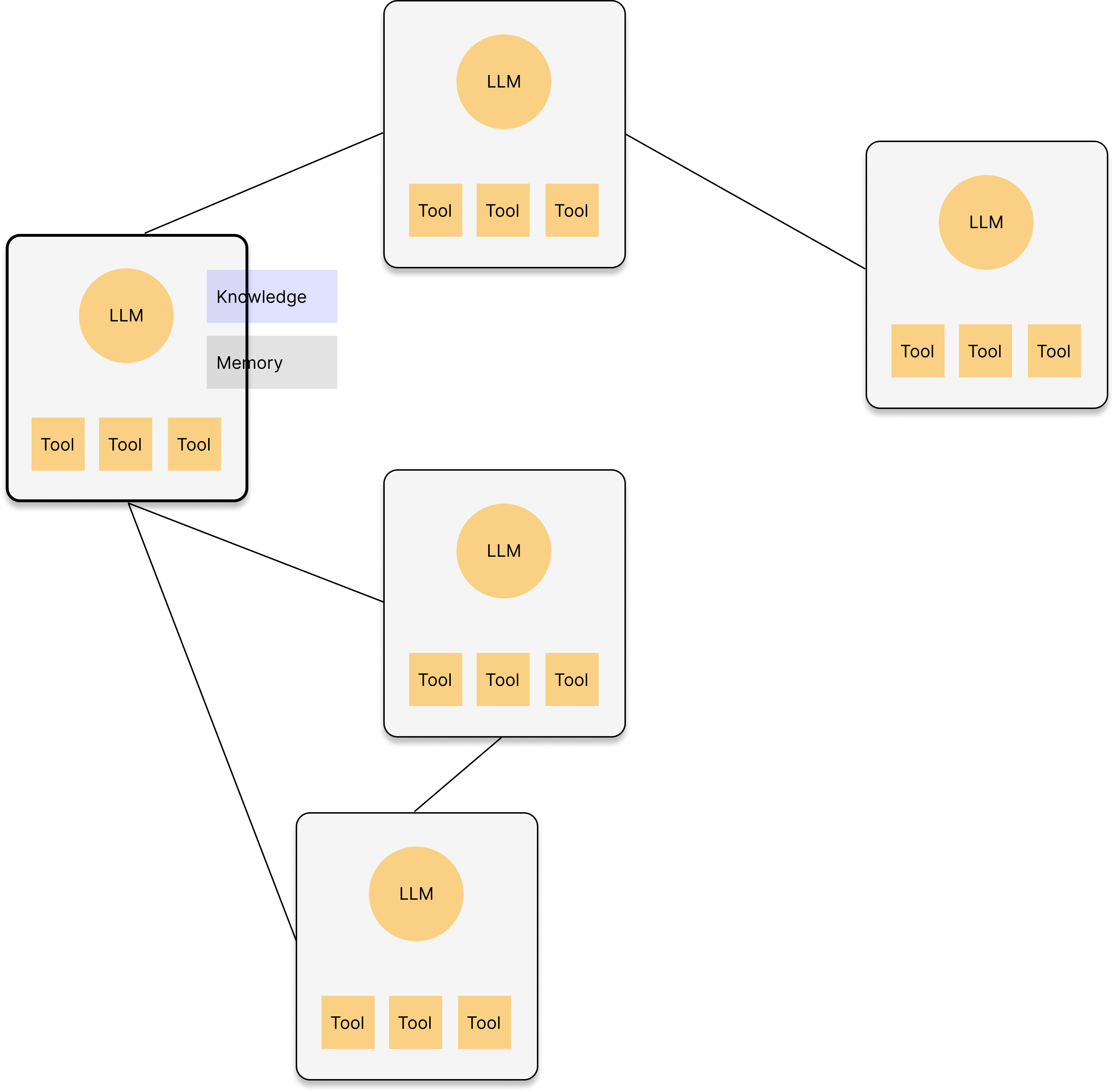

Agent formation

Agents adapt their formation based on task complexity.

You can specify a desired formation or allow the agents to determine it autonomously (default).

| | Solo Agent | Supervising | Network | Random |
|---|---|---|---|---|
| Formation | | | | |
| Usage | | | | |
| Use case | An email agent drafts a promo message for the given audience. | The leader agent strategizes an outbound campaign plan and assigns components such as media mix or message creation to subordinate agents. | An email agent and a social media agent share the product knowledge and deploy a multi-channel outbound campaign. | 1. An email agent drafts a promo message for the given audience, asking for insights on tone from other email agents that oversee other clusters. 2. An agent calls the external agent to deploy the campaign. |
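As a rough illustration of how a formation could be picked from task traits, the sketch below maps a few coarse signals to the four formations named in the table. The `Formation` enum, the `choose_formation` function, and its rules are assumptions made for this example; the source does not describe how versionhq decides autonomously.

```python
from enum import Enum


class Formation(Enum):
    """The four formations named in the table above."""
    SOLO = "Solo Agent"
    SUPERVISING = "Supervising"
    NETWORK = "Network"
    RANDOM = "Random"


def choose_formation(
    subtasks: int,
    shared_knowledge: bool,
    needs_external_agent: bool = False,
) -> Formation:
    """Toy heuristic (an assumption, not the framework's logic)."""
    if needs_external_agent:
        # Ad hoc calls to agents outside the team.
        return Formation.RANDOM
    if subtasks <= 1:
        # A single self-contained task needs only one agent.
        return Formation.SOLO
    if shared_knowledge:
        # Peers that share knowledge and work side by side.
        return Formation.NETWORK
    # Several subtasks without shared state: a leader assigns the work.
    return Formation.SUPERVISING


print(choose_formation(subtasks=1, shared_knowledge=False).value)
print(choose_formation(subtasks=3, shared_knowledge=True).value)
```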

Quick Start

Install versionhq package:

```bash
pip install versionhq
```

(Python 3.11 or higher)

Generate agent networks and launch task execution:

```python
from versionhq import form_agent_network

network = form_agent_network(
    task="YOUR AMAZING TASK OVERVIEW",
    expected_outcome="YOUR OUTCOME EXPECTATION",
)
res = network.launch()
```

This will form a network of multiple agents based on the formation and return a TaskOutput object with the output in JSON, plain text, and Pydantic model formats, along with an evaluation.

Solo Agent:

You can build a single agent using the Agent model.

By default, the agent prioritizes JSON-serializable output.

You can also add a plain text summary of the structured output by using callbacks.

```python
from pydantic import BaseModel
from versionhq import Agent, Task


# Schema for the structured output the task should produce.
class CustomOutput(BaseModel):
    test1: str
    test2: list[str]


# Callback that turns the structured output into a plain text summary.
# Single quotes inside the f-string keep it valid on Python 3.11.
def dummy_func(message: str, test1: str, test2: list[str]) -> str:
    return f"{message}{test1}, {', '.join(test2)}"


agent = Agent(role="demo", goal="amazing project goal")

task = Task(
    description="Amazing task",
    pydantic_output=CustomOutput,
    callback=dummy_func,
    callback_kwargs=dict(message="Hi! Here is the result: "),
)

res = task.execute_sync(agent=agent, context="amazing context to consider.")
print(res)
```

This will return a TaskOutput object that stores the response in plain text, JSON, and Pydantic model (CustomOutput) formats, along with the callback result, tool output (if given), and evaluation results (if given).

```python
res == TaskOutput(
    task_id=UUID('<TASK UUID>'),
    raw='{\"test1\":\"random str\", \"test2\":[\"str item 1\", \"str item 2\", \"str item 3\"]}',
    json_dict={'test1': 'random str', 'test2': ['str item 1', 'str item 2', 'str item 3']},
    pydantic=<class '__main__.CustomOutput'>,
    tool_output=None,
    callback_output='Hi! Here is the result: random str, str item 1, str item 2, str item 3',  # returned a plain text summary
    evaluation=None
)
```
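The relationship between `raw`, `json_dict`, and `callback_output` can be reproduced with the standard library alone. This is a sketch under one assumption: that the callback is called with `callback_kwargs` merged with the parsed output fields. The source shows the resulting values but not how the framework invokes the callback.

```python
import json


def dummy_func(message: str, test1: str, test2: list[str]) -> str:
    """Callback in the same shape as the example: builds a plain text summary."""
    return f"{message}{test1}, {', '.join(test2)}"


# The raw string response, as it appears in TaskOutput.raw.
raw = '{"test1": "random str", "test2": ["str item 1", "str item 2", "str item 3"]}'

# Parsing the raw response yields the json_dict field.
json_dict = json.loads(raw)

callback_kwargs = dict(message="Hi! Here is the result: ")

# Assumption: the callback receives callback_kwargs plus the parsed fields.
callback_output = dummy_func(**callback_kwargs, **json_dict)
print(callback_output)
# -> Hi! Here is the result: random str, str item 1, str item 2, str item 3
```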

Supervising:

```python
from versionhq import Agent, Task, ResponseField, Team, TeamMember

agent_a = Agent(role="agent a", goal="My amazing goals", llm="llm-of-your-choice")
agent_b = Agent(role="agent b", goal="My amazing goals", llm="llm-of-your-choice")

task_1 = Task(
    description="Analyze the client's business model.",
    response_fields=[ResponseField(title="test1", data_type=str, required=True)],
    allow_delegation=True,
)

task_2 = Task(
    description="Define the cohort.",
    response_fields=[ResponseField(title="test1", data_type=int, required=True)],
    allow_delegation=False,
)

team = Team(
    members=[
        TeamMember(agent=agent_a, is_manager=False, task=task_1),
        TeamMember(agent=agent_b, is_manager=True, task=task_2),
    ],
)
res = team.kickoff()
```

This will return a list of dictionaries whose keys are defined in the ResponseField entries of each task.

Tasks can be delegated to a team manager, to peers in the team, or to a completely new agent.
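When consuming the returned dictionaries, it can help to check them against the fields each task declared. The sketch below does this with the standard library only. `FieldSpec` and `validate` are hypothetical helpers that mirror the `title`, `data_type`, and `required` arguments shown above; they are not versionhq classes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """Hypothetical stand-in for ResponseField: title, expected type, required flag."""
    title: str
    data_type: type
    required: bool = True


def validate(result: dict, fields: list[FieldSpec]) -> list[str]:
    """Return a list of problems; an empty list means the dict conforms."""
    problems = []
    for spec in fields:
        if spec.title not in result:
            if spec.required:
                problems.append(f"missing required key: {spec.title}")
            continue
        value = result[spec.title]
        if not isinstance(value, spec.data_type):
            problems.append(
                f"{spec.title}: expected {spec.data_type.__name__}, "
                f"got {type(value).__name__}"
            )
    return problems


task_1_fields = [FieldSpec("test1", str)]
task_2_fields = [FieldSpec("test1", int)]

print(validate({"test1": "B2B SaaS"}, task_1_fields))  # -> []
print(validate({"test1": "twelve"}, task_2_fields))    # -> ['test1: expected int, got str']
```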

Technologies Used

Schema, Data Validation

- Pydantic: Data validation and serialization library for Python.

- Upstage: Document processor for ML tasks. (Use the Document Parser API to extract data from documents.)

- Docling: Document parsing

Storage

- mem0ai: Agents' memory storage and management.

- Chroma DB: Vector database for storing and querying usage data.

- SQLite: C-language library that implements a small SQL database engine.

LLM-curation

- LiteLLM: Curation platform to access LLMs

Tools

- Composio: Connect RAG agents with external tools, apps, and APIs to perform actions and receive triggers. We use tools and RAG tools from the Composio toolset.

Deployment

- Python: Primary programming language. v3.13 is recommended.

- uv: Python package installer and resolver

- pre-commit: Manage and maintain pre-commit hooks

- setuptools: Build python modules

Project Structure

```
.
.github
└── workflows/          # GitHub actions
│
docs/                   # Documentation built by MkDocs
│
src/
└── versionhq/          # Orchestration framework package
│     ├── agent/        # Core components
│     └── llm/
│     └── task/
│     └── tool/
│     └── ...
│
└── tests/              # Pytest - by core component and use cases in the docs
│     └── agent/
│     └── llm/
│     └── ...
│
└── uploads/            # Local directory that stores uploaded files
```

Setup

- Install `uv` package manager:

    For MacOS:

    ```bash
    brew install uv
    ```

    For Ubuntu/Debian:

    ```bash
    sudo apt-get install uv
    ```

- Install dependencies:

    ```bash
    uv venv
    source .venv/bin/activate
    uv lock --upgrade
    uv sync --all-extras
    ```

    - In case of AssertionError/module mismatch, run Python version control using `.pyenv`:

    ```bash
    pyenv install 3.12.8
    pyenv global 3.12.8  # optional: `pyenv global system` to get back to the system default ver.
    uv python pin 3.12.8
    echo 3.12.8 > .python-version
    ```

- Set up environment variables:

    Create a `.env` file in the project root and add the following:

    ```
    LITELLM_API_KEY=your-litellm-api-key
    OPENAI_API_KEY=your-openai-api-key
    COMPOSIO_API_KEY=your-composio-api-key
    COMPOSIO_CLI_KEY=your-composio-cli-key
    [LLM_INTERFACE_PROVIDER_OF_YOUR_CHOICE]_API_KEY=your-api-key
    ```
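Before running anything that calls an LLM, a quick check that the variables listed above are actually set can save a confusing failure later. The following is a minimal sketch using only the standard library. `REQUIRED_KEYS` and `missing_keys` are illustrative names, and the sketch assumes the values from `.env` have already been loaded into the process environment.

```python
import os
from collections.abc import Mapping

# Variable names taken from the .env example above.
REQUIRED_KEYS: tuple[str, ...] = (
    "LITELLM_API_KEY",
    "OPENAI_API_KEY",
    "COMPOSIO_API_KEY",
    "COMPOSIO_CLI_KEY",
)


def missing_keys(
    env: Mapping[str, str],
    required: tuple[str, ...] = REQUIRED_KEYS,
) -> list[str]:
    """Return the required variable names that are unset or blank in env."""
    return [key for key in required if not env.get(key, "").strip()]


missing = missing_keys(os.environ)
if missing:
    print("Missing environment variables:", ", ".join(missing))
else:
    print("All required environment variables are set.")
```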

Contributing

- Create your feature branch (`git checkout -b feature/your-amazing-feature`)

- Create amazing features

- Test the features using the `tests` directory.

    - Add a test function to the respective components in the `tests` directory.
    - Add your `LITELLM_API_KEY`, `OPENAI_API_KEY`, `COMPOSIO_API_KEY`, and `DEFAULT_USER_ID` to the GitHub `repository secrets` located at settings > secrets & variables > Actions.
    - Run a test.

    ```bash
    uv run pytest tests -vv --cache-clear
    ```

    pytest

    - When adding a new file to `tests`, give the file a name that ends with `_test.py`.
    - When adding a new feature to the file, give the feature a name that starts with `test_`.

- Pull the latest version of the source code from the main branch (`git pull origin main`). Address conflicts if any.

- Commit your changes (`git add .` / `git commit -m 'Add your-amazing-feature'`)

- Push to the branch (`git push origin feature/your-amazing-feature`)

- Open a pull request
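The naming rules for tests can be illustrated with a tiny module. The file path and the `format_role` helper below are hypothetical and exist only to show the `_test.py` file suffix and the `test_` function prefix, both of which pytest collects by default.

```python
# Hypothetical file: tests/agent/role_format_test.py (the file name ends with _test.py)


def format_role(role: str) -> str:
    """Hypothetical helper under test; it stands in for real package code."""
    return role.strip().lower().replace(" ", "_")


def test_format_role_normalizes_whitespace_and_case():
    # The function name starts with test_ so that pytest collects it.
    assert format_role("  Email Agent ") == "email_agent"
```

With the file in place, the `uv run pytest tests -vv --cache-clear` command from the steps above would pick it up along with the existing tests.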

Optional

- Flag with `#! REFINEME` for any improvements needed and `#! FIXME` for any errors.

- `production` use case is available at https://versi0n.io. Currently, we are running alpha test.

Documentation

- To edit the documentation, see `docs` repository and edit the respective component.

- We use `mkdocs` to update the docs. You can run the doc locally at http://127.0.0.1:8000/:

    ```bash
    uv run python3 -m mkdocs serve --clean
    ```

- To add a new page, update `mkdocs.yml` in the root. Refer to MkDocs official docs for more details.

Customizing AI Agent

To add an agent, use the sample directory to add a new project. You can define an agent with a specific role, goal, and set of tools.

Your new agent needs to follow the Agent model defined in versionhq.agent.model.py.

You can also add fields and functions to the Agent model universally by modifying versionhq.agent.model.py.

Modifying RAG Functionality

The RAG system uses Chroma DB to store and query past campaign dataset. To update the knowledge base:

- Add new files to the `uploads/` directory. (This will not be pushed to Github.)

- Modify the `tools.py` file to update the ingestion process if necessary.

- Run the ingestion process to update the Chroma DB.
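The first step of any ingestion run is finding the files to ingest. The sketch below only covers that step, using the standard library. The `list_upload_files` function and the suffix list are assumptions for illustration; the source does not say which file types the project ingests or how `tools.py` loads them into Chroma DB.

```python
import tempfile
from pathlib import Path

# Assumption: file types worth ingesting; adjust to what the pipeline supports.
SUPPORTED_SUFFIXES = (".pdf", ".csv", ".txt")


def list_upload_files(upload_dir: str, suffixes: tuple[str, ...] = SUPPORTED_SUFFIXES) -> list[Path]:
    """Return supported files under upload_dir, sorted for a stable ingestion order."""
    root = Path(upload_dir)
    if not root.is_dir():
        return []
    return sorted(
        path for path in root.rglob("*")
        if path.is_file() and path.suffix.lower() in suffixes
    )


# Demo against a throwaway directory so the sketch runs anywhere.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "campaign_2024.csv").write_text("channel,ctr\nemail,0.12\n")
    (Path(tmp) / "notes.md").write_text("not ingested")
    found = [path.name for path in list_upload_files(tmp)]
    print(found)  # -> ['campaign_2024.csv']
```

In the project itself the directory would be `uploads/`, and each returned path would be handed to the ingestion code.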

Package Management with uv

- Add a package: `uv add <package>`

- Remove a package: `uv remove <package>`

- Run a command in the virtual environment: `uv run <command>`

- After updating dependencies, update `requirements.txt` accordingly or run `uv pip freeze > requirements.txt`

Pre-Commit Hooks

- Install pre-commit hooks:

    ```bash
    uv run pre-commit install
    ```

- Run pre-commit checks manually:

    ```bash
    uv run pre-commit run --all-files
    ```

Pre-commit hooks help maintain code quality by running checks for formatting, linting, and other issues before each commit.

- To skip pre-commit hooks (NOT RECOMMENDED)

    ```bash
    git commit --no-verify -m "your-commit-message"
    ```

Troubleshooting

Common issues and solutions:

- API key errors: Ensure all API keys in the `.env` file are correct and up to date. Make sure to add `load_dotenv()` at the top of the Python file to apply the latest environment values.

- Database connection issues: Check if the Chroma DB is properly initialized and accessible.

- Memory errors: If processing large contracts, you may need to increase the available memory for the Python process.

- Issues related to the Python version: Docling/PyTorch is not ready for Python 3.13 as of Jan 2025. Use Python 3.12.x as default by running `uv venv --python 3.12.8` and `uv python pin 3.12.8`.

- Issues related to dependencies: run `rm -rf uv.lock`, `uv cache clean`, `uv venv`, and then `uv pip install -r requirements.txt -v`.

- Issues related to the AI agents or RAG system: Check the `output.log` file for detailed error messages and stack traces.

- Issues related to `Python quit unexpectedly`: Check this stackoverflow article.

- `reportMissingImports` error from pyright after installing the package: This might occur when installing new libraries while VSCode is running. Open the command palette (ctrl + shift + p) and run the Python: Restart language server task.
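For the Python version issue, a small guard at startup can flag an unsupported interpreter before dependencies fail in less obvious ways. This is a sketch based on the constraints stated in this document (3.11 or higher to install, 3.13 not yet usable with Docling/PyTorch as of Jan 2025). The `is_supported` helper and its bounds are illustrative and should be updated as upstream support changes.

```python
import sys


def is_supported(
    version: tuple[int, int],
    minimum: tuple[int, int] = (3, 11),
    below: tuple[int, int] = (3, 13),
) -> bool:
    """True when minimum <= version < below, i.e. Python 3.11.x or 3.12.x."""
    return minimum <= version < below


current = sys.version_info[:2]
if is_supported(current):
    print(f"Python {current[0]}.{current[1]} is within the supported range.")
else:
    print(
        f"Python {current[0]}.{current[1]} is outside the supported range; "
        "consider pinning 3.12.x with `uv python pin 3.12.8`."
    )
```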

Frequently Asked Questions (FAQ)

Q. Where can I see if the agent is working?

A. Visit playground.

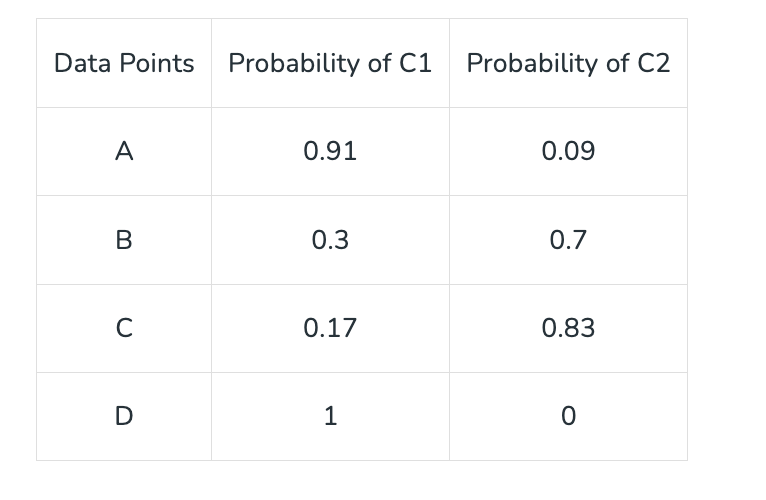

Q. How do you analyze the customer?

A. We employ soft clustering for each customer.
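Soft clustering assigns each customer a degree of membership in every cluster rather than a single label. The sketch below is a generic illustration of that idea, not the project's actual method, which the source does not describe. It converts squared distances to cluster centroids into weights that sum to 1. The `soft_memberships` function, the `temperature` parameter, and the sample numbers are assumptions.

```python
import math


def soft_memberships(
    point: tuple[float, ...],
    centroids: list[tuple[float, ...]],
    temperature: float = 1.0,
) -> list[float]:
    """Return one weight per centroid; closer centroids get larger weights."""
    distances = [
        sum((p - c) ** 2 for p, c in zip(point, centroid))
        for centroid in centroids
    ]
    # Shift by the smallest distance so the exponentials stay numerically stable.
    d_min = min(distances)
    weights = [math.exp(-(d - d_min) / temperature) for d in distances]
    total = sum(weights)
    return [w / total for w in weights]


# A customer described by two illustrative features, scored against two clusters.
customer = (1.0, 1.0)
clusters = [(0.0, 0.0), (2.0, 3.0)]
memberships = soft_memberships(customer, clusters)
print([round(m, 3) for m in memberships])
```

A higher `temperature` spreads membership more evenly across clusters, while a lower one approaches a hard assignment.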

