Library to easily interface with LLM API providers


🚅 LiteLLM

Call all LLM APIs using the OpenAI format [Anthropic, Huggingface, Cohere, Azure OpenAI etc.]

100+ Supported Models | Docs | Demo Website

LiteLLM manages

- Translating inputs to the provider's completion and embedding endpoints

- Guaranteeing consistent output: text responses will always be available at ['choices'][0]['message']['content']

- Exception mapping: common exceptions across providers are mapped to the OpenAI exception types
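As a minimal sketch of the consistent-output guarantee, the helper below reads the reply text from a response shaped like the OpenAI chat format. The `extract_text` name and the hand-written `sample_response` dict are illustrative assumptions, not part of LiteLLM; a real response would come from `completion(...)`.

```python
def extract_text(response):
    """Return the reply text from an OpenAI-format chat response."""
    return response["choices"][0]["message"]["content"]


# Hand-written stand-in for a provider response (assumption: real responses
# returned by completion() expose the same nested keys).
sample_response = {
    "choices": [
        {"message": {"role": "assistant", "content": "I'm doing well, thanks!"}}
    ]
}

print(extract_text(sample_response))
```

Because the lookup path is the same for every provider, a helper like this should not need provider-specific branches.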

Usage

pip install litellm

import os

from litellm import completion

## set ENV variables

os.environ["OPENAI_API_KEY"] = "openai key"

os.environ["COHERE_API_KEY"] = "cohere key"

os.environ["ANTHROPIC_API_KEY"] = "anthropic key"

messages = [{ "content": "Hello, how are you?","role": "user"}]

# openai call

response = completion(model="gpt-3.5-turbo", messages=messages)

# cohere call

response = completion(model="command-nightly", messages=messages)

# anthropic

response = completion(model="claude-2", messages=messages)
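Because every provider is called through the same function, the same messages can be sent to several models in a loop. The sketch below uses a hypothetical helper, `ask_all`, that takes the completion function as a parameter so it can be tried without API keys. `fake_completion` is only a stand-in; in real use you would presumably pass `litellm.completion` instead.

```python
def ask_all(models, messages, completion_fn):
    """Send the same messages to each model and collect the reply text."""
    replies = {}
    for model in models:
        response = completion_fn(model=model, messages=messages)
        replies[model] = response["choices"][0]["message"]["content"]
    return replies


def fake_completion(model, messages):
    """Stand-in for litellm.completion (assumption: same keyword arguments
    and the same OpenAI-format response shape)."""
    text = f"{model} received: {messages[-1]['content']}"
    return {"choices": [{"message": {"role": "assistant", "content": text}}]}


messages = [{"content": "Hello, how are you?", "role": "user"}]
models = ["gpt-3.5-turbo", "command-nightly", "claude-2"]
replies = ask_all(models, messages, fake_completion)

for model, text in replies.items():
    print(model, "->", text)
```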

Stable version

pip install litellm==0.1.424

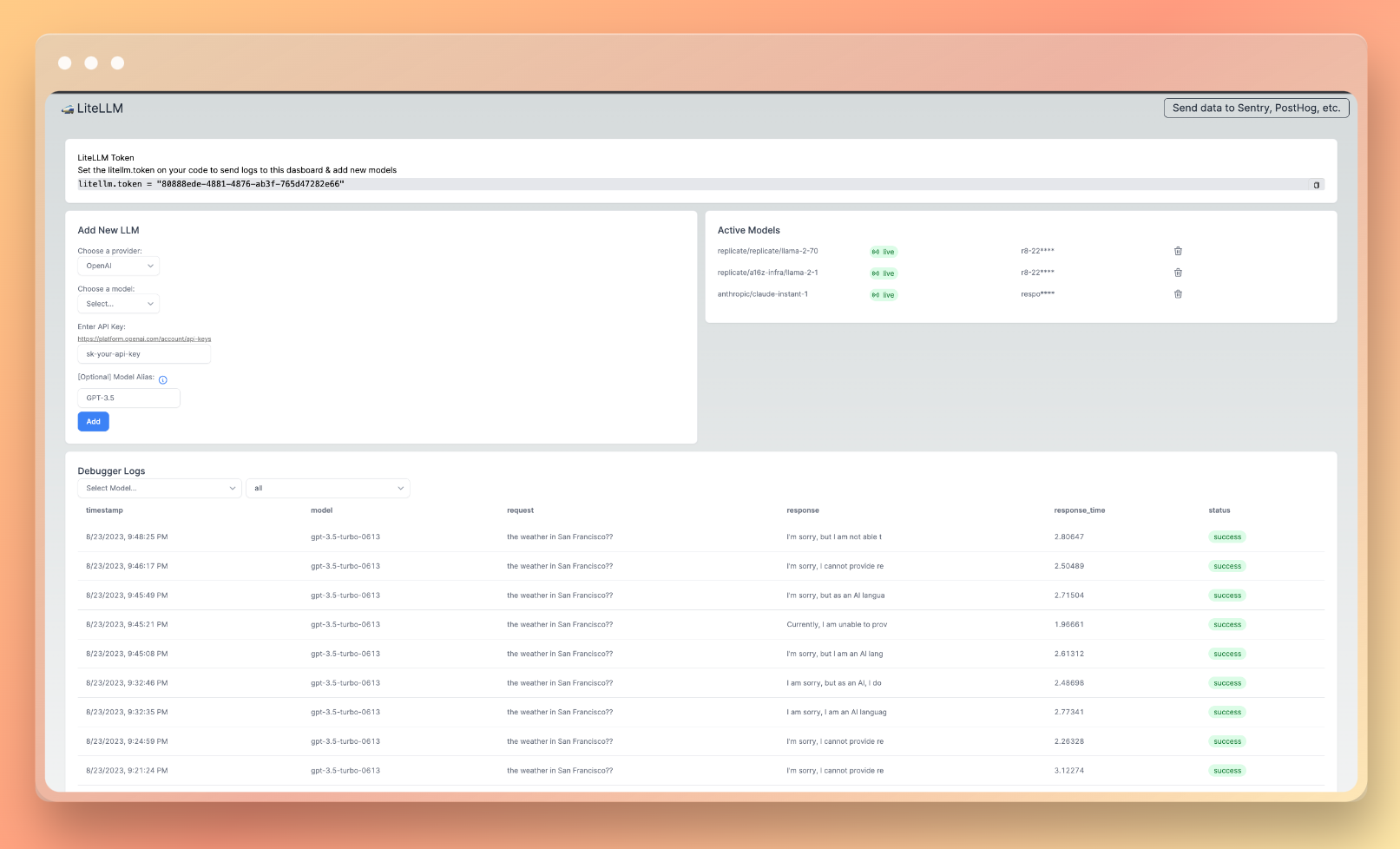

LiteLLM Client - debugging & 1-click add new LLMs

Debugging Dashboard 👉 https://docs.litellm.ai/docs/debugging/hosted_debugging

Streaming

LiteLLM supports streaming the model response back. Pass stream=True to get a streaming iterator in response.

Streaming is supported for OpenAI, Azure, Anthropic, and Huggingface models.

response = completion(model="gpt-3.5-turbo", messages=messages, stream=True)

for chunk in response:
    print(chunk['choices'][0]['delta'])

# claude 2

result = completion('claude-2', messages, stream=True)

for chunk in result:
    print(chunk['choices'][0]['delta'])
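To rebuild the full reply from a stream, the deltas can be concatenated. This is a sketch that assumes each delta is dict-like and carries an optional "content" key, as in the OpenAI streaming format. `collect_stream` and the `fake_chunks` list are hypothetical and only stand in for the iterator returned with stream=True.

```python
def collect_stream(chunks):
    """Join the text pieces from an iterable of OpenAI-format stream chunks."""
    parts = []
    for chunk in chunks:
        delta = chunk["choices"][0]["delta"]
        # Some deltas carry only a role or are empty, so skip missing content.
        piece = delta.get("content")
        if piece:
            parts.append(piece)
    return "".join(parts)


# Hand-written chunks for illustration; real chunks come from completion().
fake_chunks = [
    {"choices": [{"delta": {"role": "assistant"}}]},
    {"choices": [{"delta": {"content": "Hello"}}]},
    {"choices": [{"delta": {"content": ", world"}}]},
    {"choices": [{"delta": {}}]},
]

print(collect_stream(fake_chunks))
```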

support / talk with founders

- Schedule Demo 👋

- Community Discord 💭

- Our numbers 📞 +1 (770) 8783-106 / +1 (412) 618-6238

- Our emails ✉️ ishaan@berri.ai / krrish@berri.ai

why did we build this

- Need for simplicity: Our code started to get extremely complicated managing & translating calls between Azure, OpenAI, Cohere


Download files


File details

Details for the file litellm-0.1.492.tar.gz.

File metadata

- Download URL: litellm-0.1.492.tar.gz

- Upload date:

- Size: 62.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.8.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 73eec37467635dd4f2fe5cb07838f3b4391a252b40f0413abdd128c54d3e789f |
| MD5 | 385e907d6aa9e56ad5c262ae08a95fbd |
| BLAKE2b-256 | 691a546392604fb96dfc4216b03ea67ebd8f91c933bf6b2dab18e4bb64c929b4 |

File details

Details for the file litellm-0.1.492-py3-none-any.whl.

File metadata

- Download URL: litellm-0.1.492-py3-none-any.whl

- Upload date:

- Size: 72.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.8.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ab46af5b8dadd5835ea5d6609a9cc8f162e4cc181bd4a6cd1c31436e42e35a8d |
| MD5 | eb9b6208f518b6f49e12e5deadae229c |
| BLAKE2b-256 | bbe43135e27e7bb466e6db575ab7c49a51c4d79632be845996d6682b9a3c58de |