A command-line interface for the Ollama API

mdllama

A CLI tool that lets you chat with Ollama and OpenAI models right from your terminal, with built-in Markdown rendering.

mdllama lets you interact with AI models directly from the command line and renders their Markdown output in real time.

Features

- Chat with Ollama models from the terminal

- Built-in Markdown rendering

- Simple installation and removal (see below)

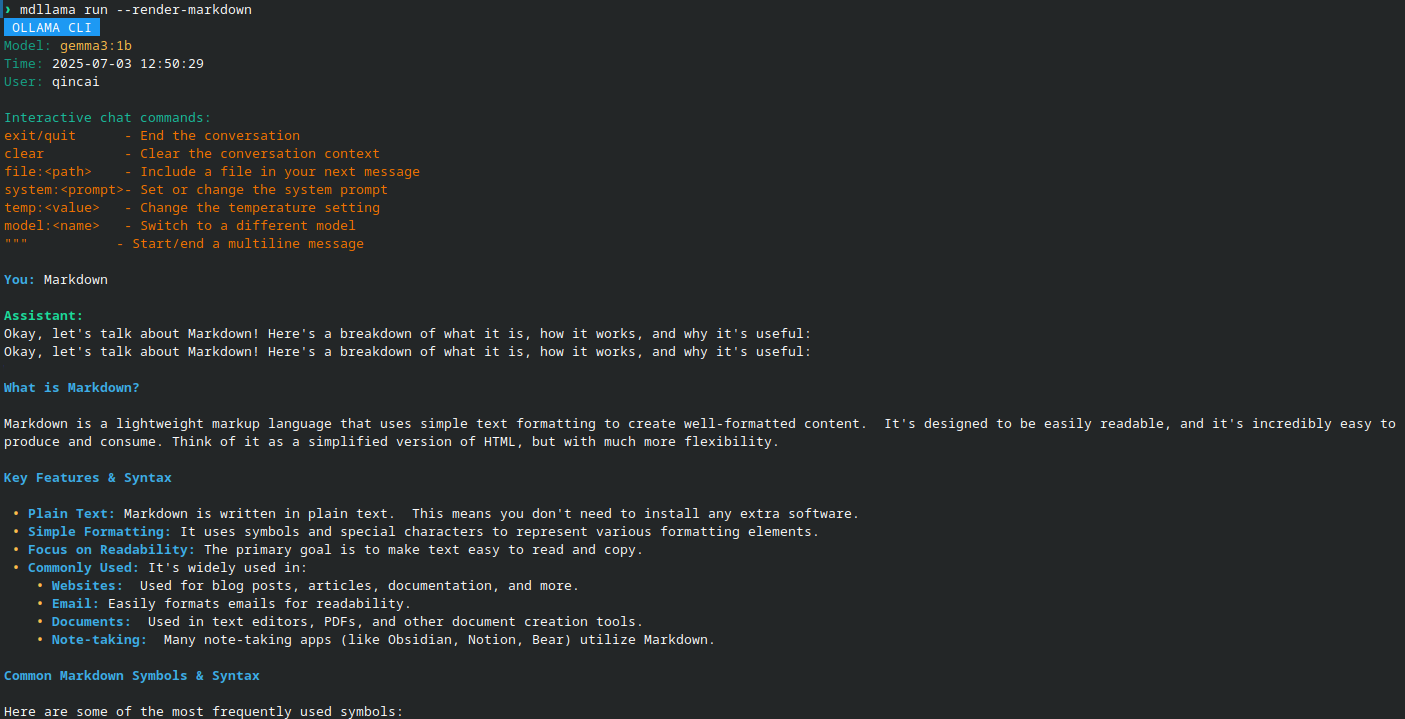

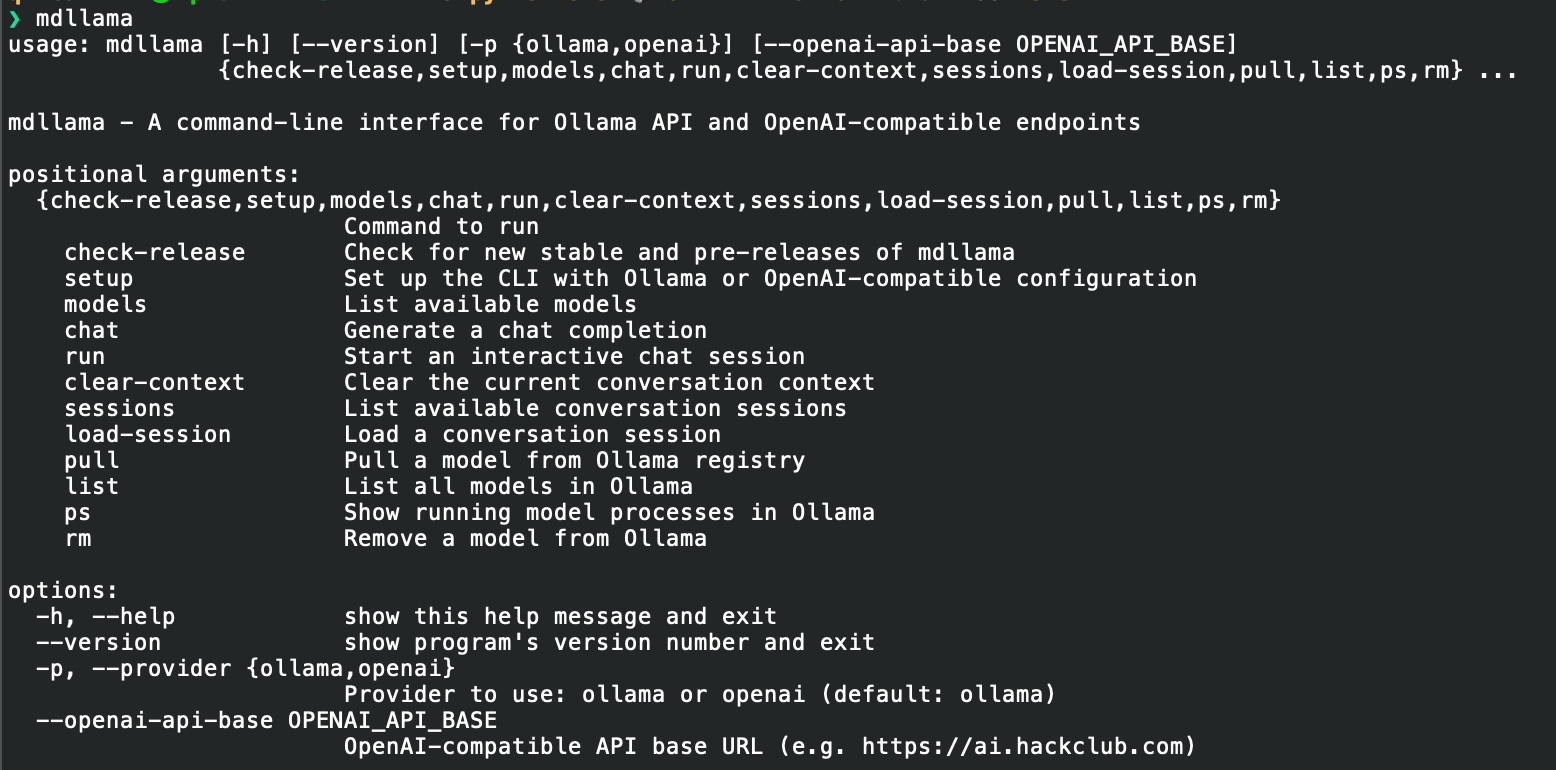

Screenshots

Chat Interface

Help

Live Demo

Go to this mdllama demo to try it out live in your browser. The API key is 9c334d5a0863984b641b1375a850fb5d

[!NOTE] Try asking the model for some Markdown-formatted text, such as:

Give me a markdown-formatted text about the history of AI.

So try it out and see how it works!

Installation

Install using package manager (recommended)

Debian/Ubuntu Installation

1. Add the PPA to your sources list:

echo 'deb [trusted=yes] https://packages.qincai.xyz/debian stable main' | sudo tee /etc/apt/sources.list.d/qincai-ppa.list

sudo apt update

2. Install mdllama:

sudo apt install python3-mdllama

Fedora Installation

1. Download the latest RPM from: https://packages.qincai.xyz/fedora/

Or, to install directly:

sudo dnf install https://packages.qincai.xyz/fedora/mdllama-<version>.noarch.rpm

Replace <version> with the latest version number.

2. (Optional, highly recommended) To enable as a repository for updates, create /etc/yum.repos.d/qincai-ppa.repo:

[qincai-ppa]
name=Raymont's Personal RPMs
baseurl=https://packages.qincai.xyz/fedora/
enabled=1
metadata_expire=0
gpgcheck=0

Then install with:

sudo dnf install mdllama

3. Install the ollama library from pip:

pip install ollama

You can also install it globally with:

sudo pip install ollama

[!NOTE] The ollama library is not installed by default in the RPM package because there is no system ollama package available (python3-ollama). You need to install it manually using pip in order to use mdllama with Ollama models.
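The optional repository step above asks you to create a small configuration file by hand. As a minimal sketch, the same file contents can be printed by a shell function and piped into place. The function name `render_qincai_repo` is a hypothetical helper chosen for illustration and is not part of mdllama; the file contents are copied from the step above.

```shell
#!/usr/bin/env bash
# render_qincai_repo: hypothetical helper (not shipped with mdllama).
# Prints the repository definition from the Fedora instructions to stdout,
# so it can be inspected before it is written anywhere.
render_qincai_repo() {
  # Quoted 'EOF' keeps the text literal (no variable or quote expansion).
  cat <<'EOF'
[qincai-ppa]
name=Raymont's Personal RPMs
baseurl=https://packages.qincai.xyz/fedora/
enabled=1
metadata_expire=0
gpgcheck=0
EOF
}
```

To write the file, pipe the output through tee with root privileges: `render_qincai_repo | sudo tee /etc/yum.repos.d/qincai-ppa.repo`. Printing to stdout first keeps the privileged step down to a single command.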

Traditional Bash Script Installation (Linux)

To install mdllama using the traditional bash script, run:

bash <(curl -fsSL https://raw.githubusercontent.com/QinCai-rui/mdllama/refs/heads/main/install.sh)

To uninstall mdllama, run:

bash <(curl -fsSL https://raw.githubusercontent.com/QinCai-rui/mdllama/refs/heads/main/uninstall.sh)

Windows & macOS Installation

Install via pip (recommended for Windows/macOS):

pip install mdllama

License

This project is licensed under the GNU General Public License v3.0. See the LICENSE file for details.

File details

Details for the file mdllama-4.2.0.tar.gz.

File metadata

- Download URL: mdllama-4.2.0.tar.gz

- Upload date:

- Size: 43.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 77482b08babf426d498cce4659a2713ecbfe0dfbf6d70df890cecde9c071262c |
| MD5 | 865020e9eb37ff9801e88bf04dcda95f |
| BLAKE2b-256 | 05aeb8111a1e74364028eaadd01274bf2f7186c3cfa193ec0fee4ec697047479 |
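A downloaded file can be checked against the SHA256 digest listed in its hash table. The following is a minimal sketch, assuming either `sha256sum` (GNU coreutils) or `shasum` (common on macOS) is available. The function name `verify_sha256` is a hypothetical helper for illustration, not something mdllama provides.

```shell
#!/usr/bin/env bash
# verify_sha256 FILE EXPECTED_HEX
# Hypothetical helper: returns 0 when the SHA256 digest of FILE equals
# EXPECTED_HEX (lowercase hex), and a non-zero status otherwise.
verify_sha256() {
  local file="$1" expected="$2" actual
  if command -v sha256sum >/dev/null 2>&1; then
    # GNU coreutils: output is "<digest>  <filename>"
    actual="$(sha256sum "$file" | awk '{print $1}')"
  else
    # Fallback for systems that ship shasum instead
    actual="$(shasum -a 256 "$file" | awk '{print $1}')"
  fi
  [ "$actual" = "$expected" ]
}
```

For example, after downloading the source distribution: `verify_sha256 mdllama-4.2.0.tar.gz 77482b08babf426d498cce4659a2713ecbfe0dfbf6d70df890cecde9c071262c && echo OK`. The same function works for the wheel with its own SHA256 digest.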

Provenance

The following attestation bundles were made for mdllama-4.2.0.tar.gz:

Publisher: publish-to-pypi.yml on QinCai-rui/mdllama

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: mdllama-4.2.0.tar.gz
  - Subject digest: 77482b08babf426d498cce4659a2713ecbfe0dfbf6d70df890cecde9c071262c
- Sigstore transparency entry: 361157550
- Sigstore integration time:
- Permalink: QinCai-rui/mdllama@3beeeb600a52969da1fdb1d3b9f6d8dd47a32744
- Branch / Tag: refs/heads/main
- Owner: https://github.com/QinCai-rui
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish-to-pypi.yml@3beeeb600a52969da1fdb1d3b9f6d8dd47a32744
- Trigger Event: workflow_run

File details

Details for the file mdllama-4.2.0-py3-none-any.whl.

File metadata

- Download URL: mdllama-4.2.0-py3-none-any.whl

- Upload date:

- Size: 47.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 207907a0ef2e6a818883d969a03ce97395f310fa9552e9b7a242cb6754ed9dd5 |
| MD5 | 1db96d7f598fb4b9b6f50c5daa3f63bf |
| BLAKE2b-256 | 42be4c2f2d180b4cced4b281e7869a6cc2ac727909ee50d629aa67552cad0203 |

Provenance

The following attestation bundles were made for mdllama-4.2.0-py3-none-any.whl:

Publisher: publish-to-pypi.yml on QinCai-rui/mdllama

- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: mdllama-4.2.0-py3-none-any.whl
  - Subject digest: 207907a0ef2e6a818883d969a03ce97395f310fa9552e9b7a242cb6754ed9dd5
- Sigstore transparency entry: 361157580
- Sigstore integration time:
- Permalink: QinCai-rui/mdllama@3beeeb600a52969da1fdb1d3b9f6d8dd47a32744
- Branch / Tag: refs/heads/main
- Owner: https://github.com/QinCai-rui
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: publish-to-pypi.yml@3beeeb600a52969da1fdb1d3b9f6d8dd47a32744
- Trigger Event: workflow_run