OIKAN: Optimized Interpretable Kolmogorov-Arnold Networks


Overview

OIKAN (Optimized Interpretable Kolmogorov-Arnold Networks) is a neuro-symbolic ML framework that combines modern neural networks with classical Kolmogorov-Arnold representation theory. It provides interpretable machine learning solutions through automatic extraction of symbolic mathematical formulas from trained models.

Key Features

- 🧠 Neuro-Symbolic ML: Combines neural network learning with symbolic mathematics

- 📊 Automatic Formula Extraction: Generates human-readable mathematical expressions

- 🎯 Scikit-learn Compatible: Familiar .fit() and .predict() interface

- 🚀 Production-Ready: Export symbolic formulas for lightweight deployment

- 📈 Multi-Task: Supports both regression and classification problems

Scientific Foundation

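OIKAN is based on Kolmogorov's superposition theorem (also known as the Kolmogorov-Arnold representation theorem), which states that any continuous multivariate function on a bounded domain can be written as a finite composition of continuous single-variable functions and addition. We leverage this theory by: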

- Using neural networks to learn optimal basis functions through interpretable edge transformations

- Combining transformed features using learnable weights

- Automatically extracting human-readable symbolic formulas
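For reference, the theorem is usually stated as follows for a continuous function f on the unit cube [0, 1]^n. The symbols \Phi_q and \phi_{q,p} are the standard textbook notation and are not names taken from OIKAN's code.

```latex
f(x_1, \dots, x_n) \;=\; \sum_{q=0}^{2n} \Phi_q\!\left( \sum_{p=1}^{n} \phi_{q,p}(x_p) \right)
```

Each inner function \phi_{q,p} and each outer function \Phi_q is a continuous function of a single variable. Loosely speaking, the edge transformations in the list above play the role of the inner functions, and the learnable weights handle the summation; this mapping is an interpretation, not a formal equivalence.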

Quick Start

Installation

Method 1: Via PyPI (Recommended)

pip install -qU oikan

Method 2: Local Development

git clone https://github.com/silvermete0r/OIKAN.git

cd OIKAN

pip install -e . # Install in development mode

Regression Example

from oikan.model import OIKANRegressor
from sklearn.model_selection import train_test_split

# X, y: your feature matrix and target vector (not defined in this snippet)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Initialize the model
model = OIKANRegressor(
    hidden_dims=[16, 8],  # Network architecture
    dropout=0.1           # Regularization
)

# Fit model (sklearn-style)
model.fit(X_train, y_train, epochs=100, lr=0.01)

# Get predictions
y_pred = model.predict(X_test)

# Save interpretable formula to file with auto-generated guidelines
# The output file will contain:
# - Detailed symbolic formulas for each feature
# - Instructions for practical implementation
# - Recommendations for production deployment
model.save_symbolic_formula("regression_formula.txt")

Example of the saved symbolic formula instructions: outputs/regression_symbolic_formula.txt

Classification Example

from oikan.model import OIKANClassifier

# Similar sklearn-style interface for classification
# (X_train, y_train, X_test are assumed to come from a train/test split of your data)
model = OIKANClassifier(hidden_dims=[16, 8])
model.fit(X_train, y_train, epochs=100, lr=0.01)
probas = model.predict_proba(X_test)

# Save classification formulas with implementation guidelines
# The output file will contain:
# - Decision boundary formulas for each class
# - Softmax application instructions
# - Production deployment recommendations
model.save_symbolic_formula("classification_formula.txt")

Example of the saved symbolic formula instructions: outputs/classification_symbolic_formula.txt
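The comments above mention softmax application instructions in the saved file. As a rough illustration of that step, and not the exact procedure OIKAN writes out, the sketch below assumes each class formula yields one raw score and converts the scores to probabilities using only the standard library. The `softmax` helper, `class_scores`, and the score values are hypothetical.

```python
import math

def softmax(scores):
    """Convert raw per-class scores into probabilities that sum to 1."""
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores, one per class formula (illustrative values only).
class_scores = [2.0, 0.5, -1.0]
probs = softmax(class_scores)

# The predicted class is the index with the highest probability.
predicted_class = probs.index(max(probs))
```

Because softmax preserves the ordering of the scores, the predicted class is the one whose formula gives the largest raw value; the probabilities only matter when calibrated confidence is needed.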

Architecture Details

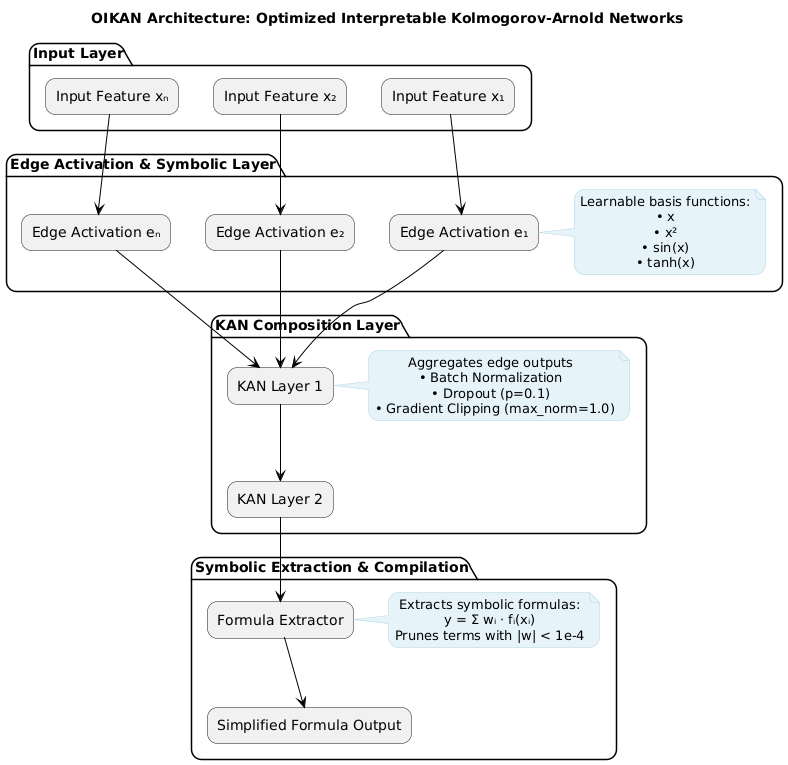

OIKAN implements a novel neuro-symbolic architecture based on Kolmogorov-Arnold representation theory through three specialized components:

- Edge Symbolic Layer: Learns interpretable single-variable transformations

  - Adaptive basis function composition using 9 core functions:

    import numpy as np

    ADVANCED_LIB = {
        'x': ('x', lambda x: x),
        'x^2': ('x^2', lambda x: x**2),
        'x^3': ('x^3', lambda x: x**3),
        'exp': ('exp(x)', lambda x: np.exp(x)),
        'log': ('log(x)', lambda x: np.log(abs(x) + 1)),  # evaluates log(|x| + 1), defined for all real x
        'sqrt': ('sqrt(x)', lambda x: np.sqrt(abs(x))),   # evaluates sqrt(|x|), defined for all real x
        'tanh': ('tanh(x)', lambda x: np.tanh(x)),
        'sin': ('sin(x)', lambda x: np.sin(x)),
        'abs': ('abs(x)', lambda x: np.abs(x))
    }

  - Each input feature is transformed through these basis functions

  - Learnable weights determine the optimal combination

- Neural Composition Layer: Multi-layer feature aggregation

  - Direct feature-to-feature connections through KAN layers

  - Dropout regularization (p=0.1 default) for robust learning

  - Gradient clipping (max_norm=1.0) for stable training

  - User-configurable hidden layer dimensions

- Symbolic Extraction Layer: Generates production-ready formulas

  - Weight-based term pruning (threshold=1e-4)

  - Automatic coefficient optimization

  - Human-readable mathematical expressions

  - Exportable to lightweight production code
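To make the edge symbolic layer more concrete, here is a minimal framework-free sketch of how one input value could pass through the nine basis functions and be combined with weights. It is an illustration under stated assumptions, not OIKAN's implementation: `BASIS` mirrors the ADVANCED_LIB entries using the standard `math` module, while `edge_output` and the weight values are hypothetical.

```python
import math

# Pure-Python mirror of the nine basis functions listed in ADVANCED_LIB.
BASIS = {
    "x": lambda x: x,
    "x^2": lambda x: x ** 2,
    "x^3": lambda x: x ** 3,
    "exp": lambda x: math.exp(x),
    "log": lambda x: math.log(abs(x) + 1),
    "sqrt": lambda x: math.sqrt(abs(x)),
    "tanh": lambda x: math.tanh(x),
    "sin": lambda x: math.sin(x),
    "abs": lambda x: abs(x),
}

def edge_output(x, weights):
    """Weighted sum of basis functions applied to a single input value."""
    return sum(w * BASIS[name](x) for name, w in weights.items())

# Hypothetical weights; in a trained model these would be learned.
weights = {"x": 0.5, "x^2": 2.0, "sin": -1.0}

# Computes 0.5 * 3 + 2.0 * 3**2 - sin(3) for the input value 3.0.
value = edge_output(3.0, weights)
```

Basis functions that are absent from `weights` contribute nothing, which is the same effect that pruning near-zero weights has on the extracted formula.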


Key Design Principles

- Interpretability First: All transformations maintain clear mathematical meaning

- Scikit-learn Compatibility: Familiar .fit() and .predict() interface

- Production Ready: Export formulas as lightweight mathematical expressions

- Automatic Simplification: Remove insignificant terms (|w| < 1e-4)

Model Components

- Symbolic Edge Functions

  # Simplified excerpt: __init__ (which sets up weights and num_basis) is omitted,
  # and `basis` stands for the list of basis functions.
  import torch.nn as nn

  class EdgeActivation(nn.Module):
      """Learnable edge activation with basis functions"""
      def forward(self, x):
          return sum(self.weights[i] * basis[i](x) for i in range(self.num_basis))

- KAN Layer Implementation

  # Simplified excerpt: one edge per input feature, then a combination step.
  class KANLayer(nn.Module):
      """Kolmogorov-Arnold Network layer"""
      def forward(self, x):
          edge_outputs = [self.edges[i](x[:, i]) for i in range(self.input_dim)]
          return self.combine(edge_outputs)

- Formula Extraction

  # Simplified excerpt: `threshold` is the pruning cutoff (1e-4 in the architecture notes).
  def get_symbolic_formula(self):
      """Extract interpretable mathematical expression"""
      terms = []
      for i, edge in enumerate(self.edges):
          if abs(self.weights[i]) > threshold:
              terms.append(f"{self.weights[i]:.4f} * {edge.formula}")
      return " + ".join(terms)
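The formula extraction step can be tried in isolation. The following self-contained sketch follows the same idea as the excerpt above (keep terms whose absolute weight exceeds the threshold, then join them), but it is a stand-alone illustration and not the library's method: `extract_formula`, its dictionary input, and the weight values are assumptions made for this example.

```python
def extract_formula(weights, threshold=1e-4):
    """Join terms whose absolute weight exceeds the pruning threshold."""
    terms = [
        f"{w:.4f} * {name}"
        for name, w in weights.items()
        if abs(w) > threshold
    ]
    # Fall back to "0" when every term has been pruned.
    return " + ".join(terms) if terms else "0"

# Hypothetical learned weights; the tiny exp(x) weight falls below 1e-4 and is pruned.
weights = {"x": 0.5, "x^2": 2.0, "exp(x)": 3e-5, "sin(x)": -1.25}
formula = extract_formula(weights)
# formula == "0.5000 * x + 2.0000 * x^2 + -1.2500 * sin(x)"
```

Negative coefficients appear as `+ -1.2500` because the terms are joined with a fixed separator, as in the excerpt; a production exporter might format signs more carefully.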

Key Design Principles

- Modular Architecture: Each component is independent and replaceable

- Interpretability First: All transformations maintain symbolic representations

- Automatic Simplification: Removes insignificant terms and combines similar expressions

- Production Ready: Export formulas for lightweight deployment

Contributing

We welcome contributions! Key areas of interest:

- Model architecture improvements

- Novel basis function implementations

- Improved symbolic extraction algorithms

- Real-world case studies and applications

- Performance optimizations

Please see CONTRIBUTING.md for guidelines.

Citation

If you use OIKAN in your research, please cite:

@software{oikan2025,

title = {OIKAN: Optimized Interpretable Kolmogorov-Arnold Networks},

author = {Zhalgasbayev, Arman},

year = {2025},

url = {https://github.com/silvermete0r/OIKAN}

}

License

This project is licensed under the MIT License - see the LICENSE file for details.

