Fractal memory system for Claude Code

Project description

Oubli

Oubli: French for "forgetting." (Also a West African fruit so sweet it makes babies forget their mother's milk.)

A memory system that never forgets. Persistent fractal memory for Claude Code.

Quickstart: Bootstrap Your AI's Memory in 5 Minutes

1. Install Oubli

pip install oubli

cd /your/project

oubli setup

Restart Claude Code.

2. Export what your AI already knows about you

Go to ChatGPT, Gemini, or Claude.ai and ask:

"Give me a complete dump of everything you know about me — preferences, facts, work, family, interests and any interesting memories from our conversations."

Copy the output.

3. Import into Oubli

Paste into Claude Code and say:

"Import this into my memory"

Claude parses and stores each fact as a searchable memory.

4. Synthesize into insights

Run /synthesize to consolidate raw memories into a hierarchy — and generate your Core Memory (the essential "you" that loads in every conversation).

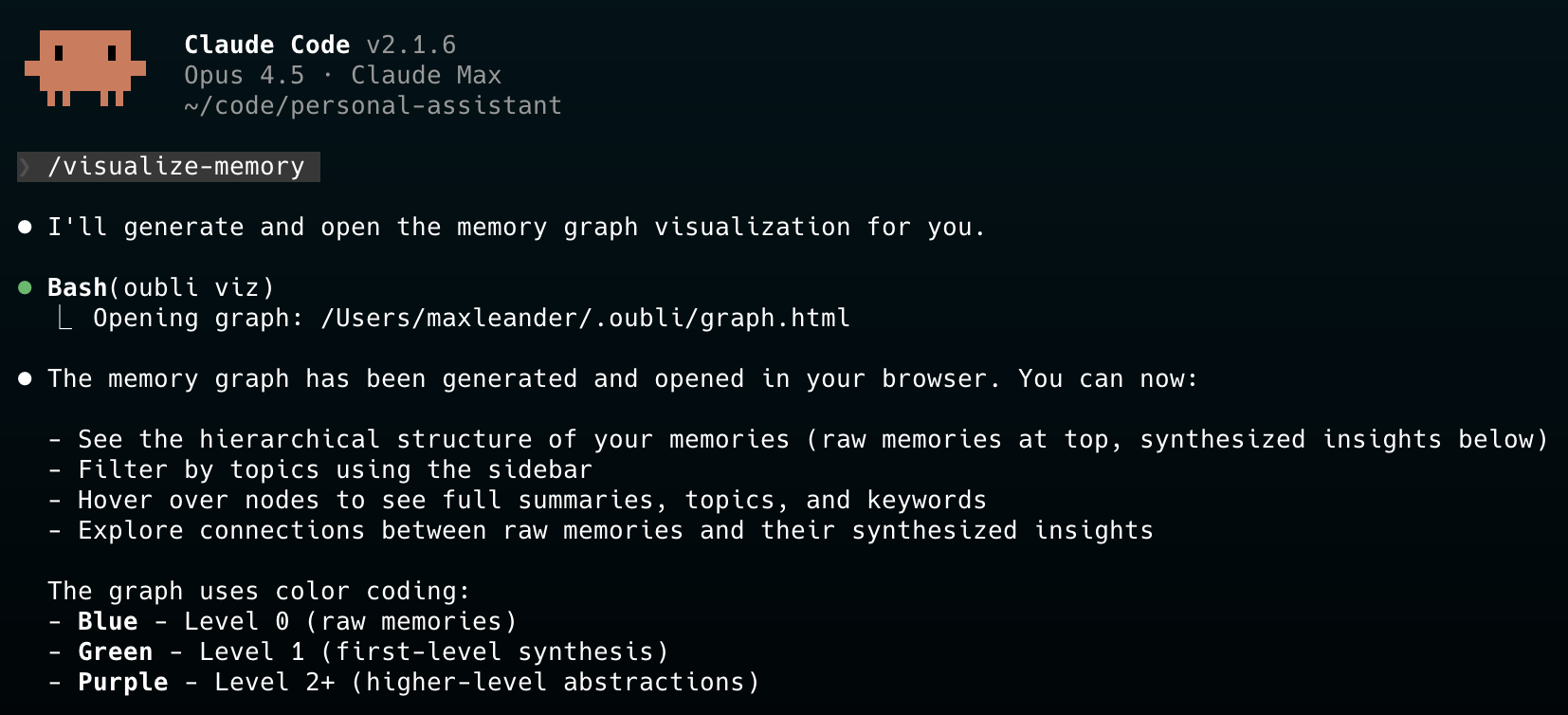

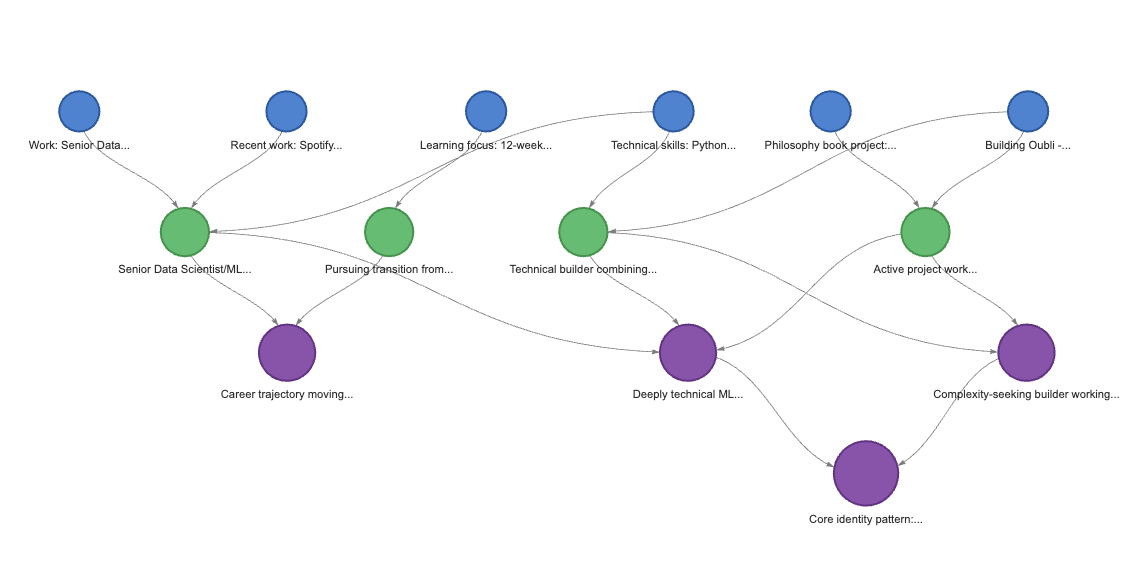

5. Visualize your memory graph

/visualize-memory

Your memories, organized. Raw facts at the top, synthesized insights below. Filter by topic, hover for details.

Features

- Fractal in Both Directions - Synthesize raw memories into insights, drill down from insights to source details

- Hybrid Search - Combines BM25 keyword search + semantic embeddings for intelligent retrieval

- Core Memory - Essential facts about you (~2K tokens), loaded in every conversation

- Proactive Memory - Claude searches and saves automatically, no prompting needed

- Immediate Updates - Family, work, and identity changes update Core Memory instantly

- Quiet Operation - Memory operations happen silently in the background

- Local-First - All data stays on your machine, no external services

- Visual Graph - Interactive visualization of your memory hierarchy (

oubli viz)

Installation

pip install oubli

cd /path/to/your/project

oubli setup # Installs everything locally in this project

Then restart Claude Code. The embedding model (~80MB) downloads on first use.

Everything is project-local by default:

.mcp.json- MCP server registration.claude/- Hooks, commands, instructions.oubli/- Your memories and Core Memory

This means each project has its own isolated Oubli installation and memories.

Global Installation (Optional)

To install globally (shared across all projects):

oubli setup --global

This registers the MCP server globally and puts everything in ~/.claude/ and ~/.oubli/.

Requirements

- Python 3.10+

- Claude Code CLI installed

Uninstall

oubli uninstall # Removes local installation from current project

oubli uninstall --global # Removes global installation

pip uninstall oubli

How It Works

Fractal Memory Hierarchy

The memory system is fractal in both directions:

┌─────────────────────────────────────────┐

│ CORE MEMORY │

│ (~2K tokens, always in context) │

│ │

│ Identity, family, work, preferences │

└─────────────────────┬───────────────────┘

│

▲ synthesis │ drill-down ▼

│

Level 2 ○ "Deeply technical, values efficiency"

╲

Level 1 ○ ○ "Loves jazz fusion" "Python expert"

╲│

Level 0 ○○○○ Raw memories with full conversation text

- Upward (Synthesis): Raw memories consolidate into higher-level insights

- Downward (Drill-down): From any insight, retrieve its source memories for full detail

| Level | Contains | Use Case |

|---|---|---|

| Core Memory | Essential identity (~2K tokens) | Always loaded, answers most questions |

| Level 1+ | Synthesized insights | Quick context without full details |

| Level 0 | Raw memories + full conversation | When you need exact quotes or specifics |

Hybrid Search

Oubli uses LanceDB's hybrid search combining:

- BM25 Full-Text Search - Finds keyword matches

- Semantic Embeddings - Finds conceptually related content (via sentence-transformers)

- RRF Reranking - Merges both result sets intelligently

Example: Searching "jazz music" finds memories about "Pat Metheny" and "fusion harmonies" even without exact keyword matches.

Synthesis (Bottom-Up)

Run /synthesize to consolidate raw memories into insights:

- Merge duplicates - Similar memories at each level are combined

- Group by topic - Related memories clustered together

- Create insights - Level 1+ memories synthesize the patterns

- Update Core Memory - Incrementally updated (additions from insights, removals only with contradicting evidence)

Drill-Down Retrieval (Top-Down)

When you need more detail than a high-level insight provides:

- Search returns synthesized insights first (compact, high-signal)

- Get parents retrieves the source memories that formed an insight

- Get full text retrieves the complete conversation from a Level 0 memory

Nothing is ever lost - every insight links back to its source memories.

Usage

Natural Interaction

Just talk naturally. Claude handles memory operations silently:

- "I prefer TypeScript over JavaScript" → Saved automatically

- "What do you know about my work?" → Searches memory

- "I no longer work at Spotify" → Deletes old, saves new, updates Core Memory

Import Existing Memories

Paste your Claude.ai memory export and ask:

"Import this into my memory"

Claude parses it into structured memories and optionally creates your Core Memory.

Slash Commands

/synthesize- Run full synthesis: merge duplicates, create insights, update Core Memory/clear-memories- Clear all memories (requires confirmation)

CLI Commands

oubli viz # Open interactive memory graph in browser

oubli viz --no-open # Generate graph.html without opening

The visualization shows:

- Hierarchical tree - Raw memories at top, synthesized insights below

- Topic sidebar - Filter by topic to focus on specific areas

- Color coding - Blue (L0 raw), Green (L1), Purple (L2+)

- Tooltips - Hover for full summary, topics, keywords

Data Storage

Data is stored in .oubli/ (local install) or ~/.oubli/ (global install):

| File | Description |

|---|---|

memories.lance/ |

LanceDB database with vector embeddings |

core_memory.md |

Your Core Memory (human-readable, editable) |

graph.html |

Memory visualization (generated by oubli viz) |

What Gets Installed

Local Installation (Default)

| Component | Location | Description |

|---|---|---|

| MCP Server | .mcp.json |

15 memory tools |

| Hooks | .claude/settings.local.json |

UserPromptSubmit, PreCompact, Stop |

| Commands | .claude/commands/ |

/clear-memories, /synthesize, /visualize-memory |

| Instructions | .claude/CLAUDE.md |

How Claude uses the memory system |

| Data | .oubli/ |

Memories and Core Memory |

Global Installation (--global)

| Component | Location | Description |

|---|---|---|

| MCP Server | claude mcp registry |

15 memory tools |

| Hooks | ~/.claude/settings.json |

UserPromptSubmit, PreCompact, Stop |

| Commands | ~/.claude/commands/ |

/clear-memories, /synthesize, /visualize-memory |

| Instructions | ~/.claude/CLAUDE.md |

How Claude uses the memory system |

| Data | ~/.oubli/ |

Memories and Core Memory |

MCP Tools

Retrieval

| Tool | Description |

|---|---|

memory_search |

Hybrid search (BM25 + semantic) |

memory_get |

Get full details including conversation text |

memory_get_parents |

Drill down from synthesis to source memories |

memory_list |

List memories by level |

memory_stats |

Get memory statistics |

Storage

| Tool | Description |

|---|---|

memory_save |

Save a new memory (auto-embeds) |

memory_import |

Bulk import memories |

memory_update |

Update an existing memory |

memory_delete |

Delete a memory |

Synthesis

| Tool | Description |

|---|---|

memory_synthesis_needed |

Check if synthesis should run (threshold: 5) |

memory_prepare_synthesis |

Merge duplicates, return groups for synthesis |

memory_synthesize |

Create Level 1+ insight from parent memories |

memory_dedupe |

Manual duplicate cleanup |

Core Memory

| Tool | Description |

|---|---|

core_memory_get |

Get Core Memory content |

core_memory_save |

Save Core Memory content |

Development

git clone https://github.com/dremok/oubli.git

cd oubli

pip install -e .

oubli setup

# Test storage

python -c "

from oubli.storage import MemoryStore

store = MemoryStore()

print('Embeddings:', store.embeddings_enabled())

store.add(summary='Test memory', topics=['test'])

print(store.search('test'))

"

License

MIT

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file oubli-0.4.3.tar.gz.

File metadata

- Download URL: oubli-0.4.3.tar.gz

- Upload date:

- Size: 29.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

3d09d3d675e37a393db308c8744e938b68502aae78953ca8889c2f00fd7ab5f1

|

|

| MD5 |

efcd4857b2bad432625282920d2df275

|

|

| BLAKE2b-256 |

6a08f6bb66048e67ee6684dc6fe9c0cd5156b4722782285df2c77dc94fdf807e

|

File details

Details for the file oubli-0.4.3-py3-none-any.whl.

File metadata

- Download URL: oubli-0.4.3-py3-none-any.whl

- Upload date:

- Size: 33.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.4

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

8ce2c26f2cf4ecccaa7c4bd906107a4163572dbfbe2a94b8b0f69e44526bb37a

|

|

| MD5 |

bc509c6f33e2f4dc2f010f0c9a06ec6a

|

|

| BLAKE2b-256 |

571b631dad92c24c7a7eec46b0826a2dc620fe603c4643787cc74d9d4e3274c5

|