Task-oriented AI agent framework for digital workers and vertical AI agents


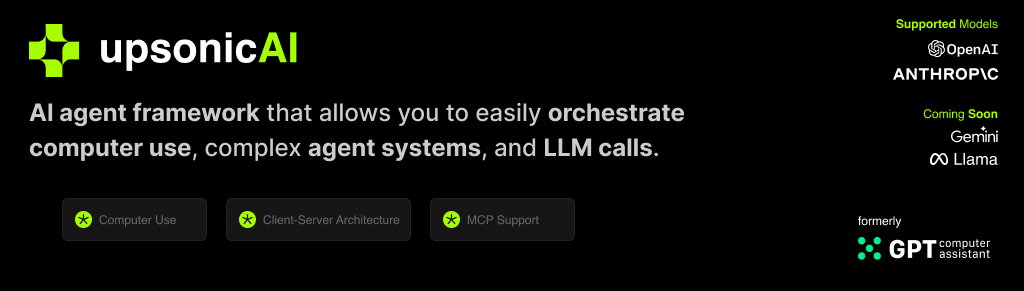

What is Upsonic?

Upsonic is an enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides the reliability, scalability, and task-oriented structure you need for real-world use cases.

Key features:

- Production-Ready Scalability: Deploy seamlessly on AWS, GCP, or locally using Docker.

- Task-Centric Design: Focus on practical task execution, with options for:

  - Basic tasks via LLM calls.

  - Advanced tasks with V1 agents.

  - Complex automation using V2 agents with MCP integration.

- MCP Server Support: Connect agents to Model Context Protocol (MCP) servers for high-performance tasks.

- Tool-Calling Server: Exception-secure tool management with robust server API interactions.

- Computer Use Integration: Execute human-like tasks using Anthropic’s ‘Computer Use’ capabilities.

- Easy tool integration: Add your custom tools and MCP tools with a single line of code.

- Client-server architecture: A stateless, production-ready system for enterprise use.

📙 Documentation

You can access our documentation at docs.upsonic.ai. All concepts and examples are available there.

🛠️ Getting Started

Prerequisites

- Python 3.10 or higher

- An OpenAI or Anthropic API key (Azure and Bedrock are also supported)

Installation

pip install upsonic

Basic Example

Set your OPENAI_API_KEY

export OPENAI_API_KEY=sk-***

Start the agent

from upsonic import Task, Agent

task = Task("Who developed you?")

agent = Agent("Coder")

agent.print_do(task)

Features

Direct LLM Call

Not every task needs a full agent, and Upsonic lets you choose between an agent and a direct LLM call for each task. The direct call works like an agent: it is based on tasks, so moving from an LLM call to an agent is a smooth transition, and you can pick whichever meets your cost and latency requirements. Focus on the task instead of building a complex architecture.

from upsonic import Direct

Direct.do(task1)

Response Format

A well-defined output is essential when you deploy an AI agent across apps or as a service. In Upsonic, you describe the response format with Pydantic-based classes (ObjectResponse) and pass it to the task system. This lets you shape the output exactly as you need it, for example a list of news items, each with a title, body, and URL. The result is a flexible yet robust output mechanism that improves interoperability between the agent and your app.

from upsonic import ObjectResponse

# Example ObjectResponse usage
class News(ObjectResponse):
    title: str
    body: str
    url: str
    tags: list[str]

class ResponseFormat(ObjectResponse):
    news_list: list[News]
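
To illustrate why a typed response format helps the code that consumes an agent's output, here is a minimal sketch that uses only the standard library. The dataclasses stand in for the ObjectResponse classes above; the `parse_response` helper and the sample payload are hypothetical and are not part of Upsonic.

```python
from dataclasses import dataclass


@dataclass
class News:
    title: str
    body: str
    url: str
    tags: list[str]


@dataclass
class ResponseFormat:
    news_list: list[News]


def parse_response(payload: dict) -> ResponseFormat:
    """Build a typed ResponseFormat from a plain dict (hypothetical helper).

    Raises KeyError if "news_list" is missing and TypeError if a news
    item lacks a required field or carries an unknown one.
    """
    return ResponseFormat(news_list=[News(**item) for item in payload["news_list"]])


# Made-up payload shaped like the ResponseFormat class.
payload = {
    "news_list": [
        {"title": "Example", "body": "Text", "url": "https://example.com", "tags": ["ai"]}
    ]
}
result = parse_response(payload)
print(result.news_list[0].title)
```

Because every field is declared up front, a malformed response fails at the boundary instead of deep inside your application.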

Tool Integration

Upsonic officially supports the Model Context Protocol (MCP) and custom tools. You can use the hundreds of MCP servers listed at https://glama.ai/mcp/servers or https://smithery.ai/. Python functions defined inside a class are also supported as tools, which makes it easy to build your own integrations.

from upsonic.client.tools import Search

# MCP Tool
class HackerNewsMCP:
    command = "uvx"
    args = ["mcp-hn"]

# Custom Tool
class MyTools:
    def our_server_status():
        return True

tools = [Search, MyTools] # HackerNewsMCP
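
As a rough picture of how functions inside a class can be exposed as tools, the sketch below collects the public functions of a class with the standard `inspect` module. The `collect_tools` helper is hypothetical; it shows one possible approach and is not Upsonic's implementation.

```python
import inspect


class MyTools:
    # Same shape as the custom tool class above: a plain function, no self.
    def our_server_status():
        return True


def collect_tools(tool_class) -> dict:
    """Map public function names to the plain functions defined on a class."""
    return {
        name: func
        for name, func in inspect.getmembers(tool_class, inspect.isfunction)
        if not name.startswith("_")
    }


tools_by_name = collect_tools(MyTools)
print(sorted(tools_by_name))
print(tools_by_name["our_server_status"]())
```

Looking functions up on the class rather than on an instance is what allows them to be called without a `self` argument.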

Other LLMs

agent = Agent("Coder", llm_model="openai/gpt-4o")

See the docs for the other supported LLMs.

Memory

Humans carry a large amount of context from one task to the next, and that context awareness leads to better results. Upsonic's memory system gives agents the same advantage in complex workflows: it remembers prior tasks and preferences and uses them to deliver more personalized outcomes. You enable memory in the Agent settings, and it relies on the agent_id system. Each agent, with its own personality, is uniquely identified by its ID.

agent_id_ = "product_manager_agent"

agent = Agent(
    ...,
    agent_id_=agent_id_,
    memory=True,
)

Knowledge Base

The Knowledge Base provides private or public content to your agent so that tasks are completed accurately and with the right context. For example, you can give the agent a PDF file and a URL. The Knowledge Base integrates with the task system: pass it as context to the tasks that need these sources.

from upsonic import KnowledgeBase

my_knowledge_base = KnowledgeBase(files=["sample.pdf", "https://upsonic.ai"])

task1 = Task(
    ...,
    context=[my_knowledge_base],
)

Connecting Task Outputs

Chaining tasks is essential for complex workflows where one task's output informs the next. You can pass one task to another as context, which makes the response of task1 available to task2.

task1 = Task(
    ...
)

task2 = Task(
    ...,
    context=[task1],
)
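
Conceptually, using a task as context means that the earlier task's response becomes part of the input of the later one. The following sketch shows that idea with a hypothetical `SketchTask` dataclass and `build_prompt` helper; neither is part of Upsonic, and the real framework may combine context differently.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SketchTask:
    """Hypothetical stand-in for a task; not the Upsonic Task class."""
    description: str
    context: list = field(default_factory=list)
    response: Optional[str] = None


def build_prompt(task: SketchTask) -> str:
    """Prepend the responses of finished context tasks to the description."""
    parts = [
        f"Context: {item.response}"
        for item in task.context
        if isinstance(item, SketchTask) and item.response is not None
    ]
    parts.append(f"Task: {task.description}")
    return "\n".join(parts)


task1 = SketchTask("List three product ideas")
task1.response = "A, B, C"  # pretend task1 has already run
task2 = SketchTask("Pick the best idea", context=[task1])
print(build_prompt(task2))
```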

Be a Human

An agent's character comes from the LLM itself, shaped into roles such as developer, product manager, or marketer. Some tasks, such as sending personalized messages or outreach, also need a human name. For these cases, Agent has name and contact settings, and the agent presents itself as the person you specify.

product_manager_agent = Agent(
    ...,
    name="John Walk",
    contact="john@upsonic.ai",
)

Multi Agent

Distribute tasks across agents with the automated task distribution mechanism. It matches each task to an agent based on the relationship between the two, so that several agents can work together on a set of tasks.

from upsonic import MultiAgent

MultiAgent.do([agent2, agent1], [task1, task2])
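
The source does not describe how the relationship between an agent and a task is measured. As one simple illustration, the sketch below assigns each task to the agent whose role description shares the most words with it. The `overlap` and `assign` functions, the role descriptions, and the scoring rule are all assumptions made for this example.

```python
def overlap(a: str, b: str) -> int:
    """Count the distinct lowercase words two strings have in common."""
    return len(set(a.lower().split()) & set(b.lower().split()))


def assign(agents: dict[str, str], tasks: list[str]) -> dict[str, str]:
    """Map each task to the agent whose role description overlaps it most."""
    return {
        task: max(agents, key=lambda name: overlap(agents[name], task))
        for task in tasks
    }


# Hypothetical agents, described by a short role text.
agents = {
    "developer": "writes and reviews python code",
    "marketer": "writes marketing copy and campaign emails",
}
tasks = ["review this python code", "draft campaign emails"]
assignment = assign(agents, tasks)
print(assignment)
```

A production system would more likely ask an LLM or compare embeddings to score the match; word overlap only keeps the example self-contained.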

Reliable Computer Use

Computer use lets an agent perform tasks the way a person does: moving the mouse, clicking, typing, scrolling, and so on. This means you can build tasks on top of systems that have no API, such as LinkedIn workflows or internal tools. Computer use is currently supported only with Claude.

from upsonic.client.tools import ComputerUse

...

tools = [ComputerUse]

...

Reflection

LLMs are by nature oriented toward finishing the process, which means you can sometimes get an empty result. That affects your business logic and the progress of your application. Upsonic supports a reflection mechanism that checks whether the result is satisfying and, if it is not, gives feedback. You can use reflection to prevent blank messages and similar problems.

product_manager_agent = Agent(
    ...,
    reflection=True,
)
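
The check-and-feedback idea can be sketched as a small retry loop. The `run_with_reflection` function, its parameters, and the fake model below are hypothetical; the sketch shows the general pattern and makes no claim about how Upsonic implements reflection.

```python
from typing import Callable


def run_with_reflection(
    generate: Callable[[str], str],
    is_satisfying: Callable[[str], bool],
    prompt: str,
    max_attempts: int = 3,
) -> str:
    """Call generate until the result passes the check or attempts run out."""
    result = ""
    for _ in range(max_attempts):
        result = generate(prompt)
        if is_satisfying(result):
            return result
        # Feed the failure back so the next attempt can improve on it.
        prompt = f"{prompt}\nFeedback: the previous answer was not acceptable."
    return result


# Fake model for the example: an empty answer first, then a real one.
answers = iter(["", "Paris"])
result = run_with_reflection(
    generate=lambda prompt: next(answers),
    is_satisfying=lambda text: bool(text.strip()),
    prompt="What is the capital of France?",
)
print(result)
```

The attempt limit matters: without it, a model that never produces a satisfying answer would loop forever.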

Compress Context

Context windows can be small, as with OpenAI models. For these situations, Upsonic has a mechanism that compresses the message, the system_message, and the contexts. compress_context is a good fit for work such as deep searching, or writing long content and passing it as context to another task. The mechanism runs only when the context overflows; otherwise everything works as usual.

product_manager_agent = Agent(
    ...,
    compress_context=True,
)
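
The "only on overflow" behavior can be pictured with the sketch below. It is built on loud assumptions: length is counted in characters rather than tokens, and "compression" is plain proportional truncation, where a real system would more likely summarize. The `compress_if_needed` function is hypothetical and not part of Upsonic.

```python
def compress_if_needed(messages: list[str], limit: int) -> list[str]:
    """Return messages unchanged if they fit the limit, else shrink them.

    Length is measured in characters as a stand-in for tokens, and
    shrinking is simple truncation in proportion to each message's size.
    """
    total = sum(len(message) for message in messages)
    if total <= limit:
        return messages  # no overflow: leave everything as it is
    ratio = limit / total
    return [message[: int(len(message) * ratio)] for message in messages]


system_message = "s" * 100
context = "c" * 300
fits = compress_if_needed([system_message, context], limit=1000)
shrunk = compress_if_needed([system_message, context], limit=200)
print([len(m) for m in fits], [len(m) for m in shrunk])
```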

Telemetry

We collect anonymous usage telemetry to help us prioritize development. You can disable it by setting the UPSONIC_TELEMETRY environment variable to False.

import os

os.environ["UPSONIC_TELEMETRY"] = "False"

Coming Soon

- Dockerized Server Deploy

- Verifiers For Computer Use


File details

Details for the file upsonic-0.42.0a1739099062.tar.gz.

File metadata

- Download URL: upsonic-0.42.0a1739099062.tar.gz

- Upload date:

- Size: 199.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.5.29

File hashes

| Algorithm | Hash digest |

|---|---|

| SHA256 | 1758d7c5a893fcba1265377d167bc39b58bf2ff7f4d50c19a591246be7a78213 |

| MD5 | 610f00487140bedb2b22d78b1ddf043e |

| BLAKE2b-256 | 58dc3b4ddf8ea8377289bed3e55d7ece0a270e3fcc0de0fe838e8d6232311fff |

File details

Details for the file upsonic-0.42.0a1739099062-py3-none-any.whl.

File metadata

- Download URL: upsonic-0.42.0a1739099062-py3-none-any.whl

- Upload date:

- Size: 75.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: uv/0.5.29

File hashes

| Algorithm | Hash digest |

|---|---|

| SHA256 | 56203539bc6173da2371106f4120d19b038dae90e1a1fac9e1d4ba10b2a7ee17 |

| MD5 | 75bcc1013f0425110f16ff7f51ef169a |

| BLAKE2b-256 | 163aa7485a536ddf6f66e3c0d548347f58c2d3507785bd092b97d93b75c90d02 |