A toolset for compressing, deploying and serving LLM

Project description

Latest News 🎉

2026

- [2026/04] The LMDeploy project on PyPI has reached its storage quota, so pre-built wheels for new releases cannot be uploaded for the time being. You can download packages from the GitHub Releases page or install from source instead. We will update this notice when wheel uploads to PyPI resume. Affected versions: >=0.12.2

- [2026/02] Support Qwen3.5

- [2026/02] Support vllm-project/llm-compressor 4bit symmetric/asymmetric quantization. Refer here for detailed guide

2025

- [2025/09] TurboMind supports MXFP4 on NVIDIA GPUs starting from V100, achieving 1.5x the performmance of vLLM on H800 for openai gpt-oss models!

- [2025/06] Comprehensive inference optimization for FP8 MoE Models

- [2025/06] DeepSeek PD Disaggregation deployment is now supported through integration with DLSlime and Mooncake. Huge thanks to both teams!

- [2025/04] Enhance DeepSeek inference performance by integration deepseek-ai techniques: FlashMLA, DeepGemm, DeepEP, MicroBatch and eplb

- [2025/01] Support DeepSeek V3 and R1

2024

- [2024/11] Support Mono-InternVL with PyTorch engine

- [2024/10] PyTorchEngine supports graph mode on ascend platform, doubling the inference speed

- [2024/09] LMDeploy PyTorchEngine adds support for Huawei Ascend. See supported models here

- [2024/09] LMDeploy PyTorchEngine achieves 1.3x faster on Llama3-8B inference by introducing CUDA graph

- [2024/08] LMDeploy is integrated into modelscope/swift as the default accelerator for VLMs inference

- [2024/07] Support Llama3.1 8B, 70B and its TOOLS CALLING

- [2024/07] Support InternVL2 full-series models, InternLM-XComposer2.5 and function call of InternLM2.5

- [2024/06] PyTorch engine support DeepSeek-V2 and several VLMs, such as CogVLM2, Mini-InternVL, LlaVA-Next

- [2024/05] Balance vision model when deploying VLMs with multiple GPUs

- [2024/05] Support 4-bits weight-only quantization and inference on VLMs, such as InternVL v1.5, LLaVa, InternLMXComposer2

- [2024/04] Support Llama3 and more VLMs, such as InternVL v1.1, v1.2, MiniGemini, InternLMXComposer2.

- [2024/04] TurboMind adds online int8/int4 KV cache quantization and inference for all supported devices. Refer here for detailed guide

- [2024/04] TurboMind latest upgrade boosts GQA, rocketing the internlm2-20b model inference to 16+ RPS, about 1.8x faster than vLLM.

- [2024/04] Support Qwen1.5-MOE and dbrx.

- [2024/03] Support DeepSeek-VL offline inference pipeline and serving.

- [2024/03] Support VLM offline inference pipeline and serving.

- [2024/02] Support Qwen 1.5, Gemma, Mistral, Mixtral, Deepseek-MOE and so on.

- [2024/01] OpenAOE seamless integration with LMDeploy Serving Service.

- [2024/01] Support for multi-model, multi-machine, multi-card inference services. For usage instructions, please refer to here

- [2024/01] Support PyTorch inference engine, developed entirely in Python, helping to lower the barriers for developers and enable rapid experimentation with new features and technologies.

2023

- [2023/12] Turbomind supports multimodal input.

- [2023/11] Turbomind supports loading hf model directly. Click here for details.

- [2023/11] TurboMind major upgrades, including: Paged Attention, faster attention kernels without sequence length limitation, 2x faster KV8 kernels, Split-K decoding (Flash Decoding), and W4A16 inference for sm_75

- [2023/09] TurboMind supports Qwen-14B

- [2023/09] TurboMind supports InternLM-20B

- [2023/09] TurboMind supports all features of Code Llama: code completion, infilling, chat / instruct, and python specialist. Click here for deployment guide

- [2023/09] TurboMind supports Baichuan2-7B

- [2023/08] TurboMind supports flash-attention2.

- [2023/08] TurboMind supports Qwen-7B, dynamic NTK-RoPE scaling and dynamic logN scaling

- [2023/08] TurboMind supports Windows (tp=1)

- [2023/08] TurboMind supports 4-bit inference, 2.4x faster than FP16, the fastest open-source implementation. Check this guide for detailed info

- [2023/08] LMDeploy has launched on the HuggingFace Hub, providing ready-to-use 4-bit models.

- [2023/08] LMDeploy supports 4-bit quantization using the AWQ algorithm.

- [2023/07] TurboMind supports Llama-2 70B with GQA.

- [2023/07] TurboMind supports Llama-2 7B/13B.

- [2023/07] TurboMind supports tensor-parallel inference of InternLM.

Introduction

LMDeploy is a toolkit for compressing, deploying, and serving LLM, developed by the MMRazor and MMDeploy teams. It has the following core features:

-

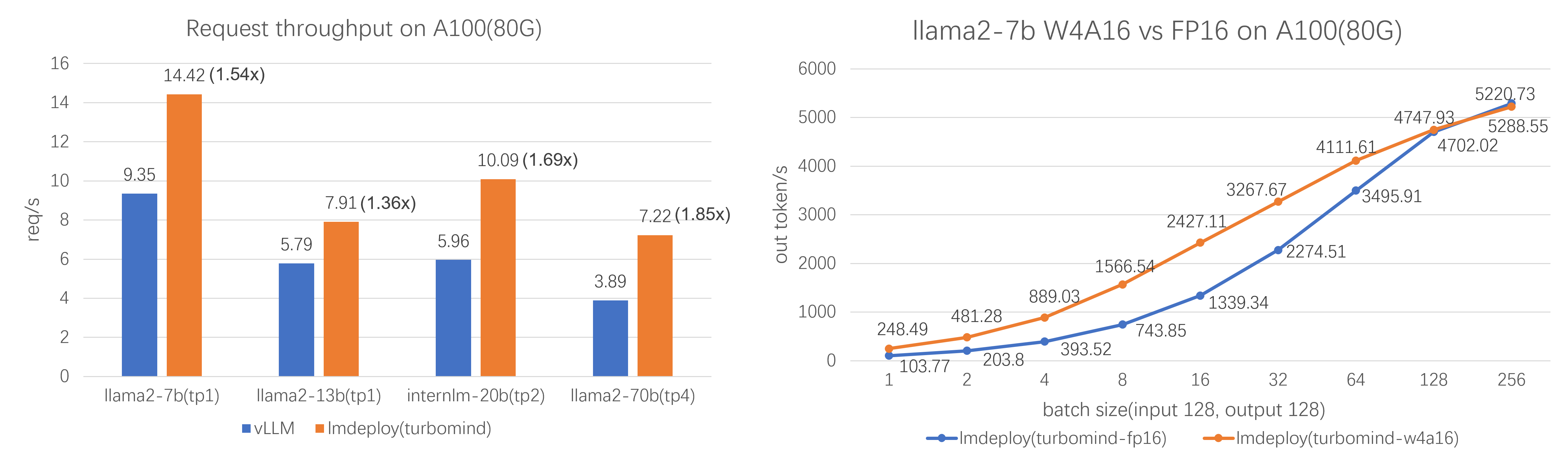

Efficient Inference: LMDeploy delivers up to 1.8x higher request throughput than vLLM, by introducing key features like persistent batch(a.k.a. continuous batching), blocked KV cache, dynamic split&fuse, tensor parallelism, high-performance CUDA kernels and so on.

-

Effective Quantization: LMDeploy supports weight-only and k/v quantization, and the 4-bit inference performance is 2.4x higher than FP16. The quantization quality has been confirmed via OpenCompass evaluation.

-

Effortless Distribution Server: Leveraging the request distribution service, LMDeploy facilitates an easy and efficient deployment of multi-model services across multiple machines and cards.

-

Excellent Compatibility: LMDeploy supports KV Cache Quant, AWQ and Automatic Prefix Caching to be used simultaneously.

Performance

Supported Models

| LLMs | VLMs |

|

|

LMDeploy has developed two inference engines - TurboMind and PyTorch, each with a different focus. The former strives for ultimate optimization of inference performance, while the latter, developed purely in Python, aims to decrease the barriers for developers.

They differ in the types of supported models and the inference data type. Please refer to this table for each engine's capability and choose the proper one that best fits your actual needs.

Quick Start

Installation

It is recommended installing lmdeploy using pip in a conda environment (python 3.10 - 3.13):

conda create -n lmdeploy python=3.12 -y

conda activate lmdeploy

pip install lmdeploy

Since v0.3.0, the default prebuilt package is compiled on CUDA 12. Starting from v0.10.2, LMDeploy no longer supports CUDA 11 series.

If you are using a GeForce RTX 50 series graphics card, please install the LMDeploy prebuilt package compiled with CUDA 12.8 as follows:

export LMDEPLOY_VERSION=0.12.3

export PYTHON_VERSION=312

pip install https://github.com/InternLM/lmdeploy/releases/download/v${LMDEPLOY_VERSION}/lmdeploy-${LMDEPLOY_VERSION}+cu128-cp${PYTHON_VERSION}-cp${PYTHON_VERSION}-manylinux2014_x86_64.whl --extra-index-url https://download.pytorch.org/whl/cu128

Offline Batch Inference

import lmdeploy

with lmdeploy.pipeline("internlm/internlm3-8b-instruct") as pipe:

response = pipe(["Hi, pls intro yourself", "Shanghai is"])

print(response)

[!NOTE] By default, LMDeploy downloads model from HuggingFace. If you would like to use models from ModelScope, please install ModelScope by

pip install modelscopeand set the environment variable:

export LMDEPLOY_USE_MODELSCOPE=TrueIf you would like to use models from openMind Hub, please install openMind Hub by

pip install openmind_huband set the environment variable:

export LMDEPLOY_USE_OPENMIND_HUB=True

For more information about inference pipeline, please refer to here.

Tutorials

Please review getting_started section for the basic usage of LMDeploy.

For detailed user guides and advanced guides, please refer to our tutorials:

- User Guide

- Advance Guide

Third-party projects

-

Deploying LLMs offline on the NVIDIA Jetson platform by LMDeploy: LMDeploy-Jetson

-

Example project for deploying LLMs using LMDeploy and BentoML: BentoLMDeploy

Contributing

We appreciate all contributions to LMDeploy. Please refer to CONTRIBUTING.md for the contributing guideline.

Acknowledgement

Citation

@misc{2023lmdeploy,

title={LMDeploy: A Toolkit for Compressing, Deploying, and Serving LLM},

author={LMDeploy Contributors},

howpublished = {\url{https://github.com/InternLM/lmdeploy}},

year={2023}

}

@article{zhang2025efficient,

title={Efficient Mixed-Precision Large Language Model Inference with TurboMind},

author={Zhang, Li and Jiang, Youhe and He, Guoliang and Chen, Xin and Lv, Han and Yao, Qian and Fu, Fangcheng and Chen, Kai},

journal={arXiv preprint arXiv:2508.15601},

year={2025}

}

License

This project is released under the Apache 2.0 license.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distributions

Built Distributions

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file lmdeploy-0.12.3-cp313-cp313-win_amd64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp313-cp313-win_amd64.whl

- Upload date:

- Size: 45.4 MB

- Tags: CPython 3.13, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

584094ea7e9755ef33ce27c64c64da18afbe0095f98f13590e5fcc8943cc3372

|

|

| MD5 |

e0fa2b58b3bccca838ab358f303cbdb8

|

|

| BLAKE2b-256 |

d43ad12675bb4f8ec6f23847b979bab27a4222e5435ae0b82ca9693820769fda

|

File details

Details for the file lmdeploy-0.12.3-cp313-cp313-manylinux2014_x86_64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp313-cp313-manylinux2014_x86_64.whl

- Upload date:

- Size: 123.4 MB

- Tags: CPython 3.13

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

2a7b543767c87a410e63dee4a4ee857394cbf87cca521f9d4f6db0de14ed33ea

|

|

| MD5 |

755f216b16fada6a121f9a46ab23e1b8

|

|

| BLAKE2b-256 |

8f323b1ddf1181fe53411a6f56a9357cd80012f1113cd29f6d76d99d95fc80f3

|

File details

Details for the file lmdeploy-0.12.3-cp312-cp312-win_amd64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp312-cp312-win_amd64.whl

- Upload date:

- Size: 45.4 MB

- Tags: CPython 3.12, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e0ccb8bf6e1ce2b5132ab778bd63f90227965cc4d909451da991ce61f30f8b9b

|

|

| MD5 |

2ae85e36e838767339d98c73db343c96

|

|

| BLAKE2b-256 |

53fe0e474c75db34be6592f1928453712088f4378650565163cb8a4787165f28

|

File details

Details for the file lmdeploy-0.12.3-cp312-cp312-manylinux2014_x86_64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp312-cp312-manylinux2014_x86_64.whl

- Upload date:

- Size: 123.4 MB

- Tags: CPython 3.12

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

51df97ecc51c4cdfb76731d9deff49bf9dca564d0d706ee4a64cbae9c418c8e3

|

|

| MD5 |

7847a66240d57fc579d3029d053b10ef

|

|

| BLAKE2b-256 |

708b0610b5826ab7e4f2653e87ce6cce08e8e60f44023962c9bb4cefcb5083fe

|

File details

Details for the file lmdeploy-0.12.3-cp311-cp311-win_amd64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp311-cp311-win_amd64.whl

- Upload date:

- Size: 45.4 MB

- Tags: CPython 3.11, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

1d58eb69149df848d06a614280d943dcba5ed0da99c4b622afbd3e1a646aa0db

|

|

| MD5 |

d91bc2c51f7b854017af48ba3b672b7c

|

|

| BLAKE2b-256 |

89e1a291e89100de4b395a85b96a5a7a609fdd5b10a6534fafecd123ceac468b

|

File details

Details for the file lmdeploy-0.12.3-cp311-cp311-manylinux2014_x86_64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp311-cp311-manylinux2014_x86_64.whl

- Upload date:

- Size: 123.4 MB

- Tags: CPython 3.11

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

35f607f46ce1a90ee0abd3225db374035e951e9de6d525e749fbd71008a402fb

|

|

| MD5 |

12f84f0700e595aa38adc59236469f9a

|

|

| BLAKE2b-256 |

6be99ed8cef3aef8dd2a696973f93d908dbaa588af26adcbdbe82b848f535c7b

|

File details

Details for the file lmdeploy-0.12.3-cp310-cp310-win_amd64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp310-cp310-win_amd64.whl

- Upload date:

- Size: 45.4 MB

- Tags: CPython 3.10, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

99daea8e2d0d562763f6dd0cb932e481bf30e7cbe9a1f88bf071bff2f1845d79

|

|

| MD5 |

7834aa1f8ddc4a4ba33974b9f85df1e1

|

|

| BLAKE2b-256 |

5843b49072fd48d4144fdc348085bab4de4a9a49b72f201f7c6f251959291f2e

|

File details

Details for the file lmdeploy-0.12.3-cp310-cp310-manylinux2014_x86_64.whl.

File metadata

- Download URL: lmdeploy-0.12.3-cp310-cp310-manylinux2014_x86_64.whl

- Upload date:

- Size: 123.4 MB

- Tags: CPython 3.10

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

24078cd31ec3ba3277a8ec7c0bd310d8f2bef341b26382af1fc9895dc8c02900

|

|

| MD5 |

4dc82b246d4924aa3a7234b218e1c48e

|

|

| BLAKE2b-256 |

19f347fbc3f32eb2ce8f81b60cd9b907668e0572cca15f901a9d6f51a11f8f8d

|